Agentic AI Enterprise Use Cases: 30+ Production Deployments Across 8 Industries (Not Just Demos)

McKinsey's 2025 global survey found that 62% of organizations are experimenting with AI agents. Deloitte's Emerging Technology Trends study found only 14% have production-ready solutions. Gartner projects more than 40% of agentic AI projects will be cancelled by the end of 2027.

The gap between experimentation and production is not closing. And the reason is not what most people think. It is not model quality. It is not compute cost. It is not integration complexity. The consistent finding across the major enterprise AI reports published in the past twelve months is that agents fail because they act on incomplete context. They see the structured 10–20% of enterprise data (the ERP tables, the CRM fields, the transaction logs) and are blind to the 70–85% that lives in contracts, emails, policy documents, Slack threads, PDFs, and meeting notes.

Unlike most agentic AI use-case lists on the internet, this guide draws every use case from production deployments: not hypotheticals, not vendor demonstrations, not proofs of concept that never shipped. Across 30+ implementations spanning retail, logistics, banking, healthcare, manufacturing, real estate, smart cities, and financial services, one pattern is consistent: the use cases that survive production are the ones where agents operate on complete context with governed execution.

Agentic AI enterprise use cases refer to real-world deployments where AI agents autonomously detect signals, reason across structured and unstructured enterprise data, execute multi-step workflows, and produce auditable decision trails — moving beyond prescriptive analytics into governed, autonomous execution across business functions.

What Is Agentic AI?

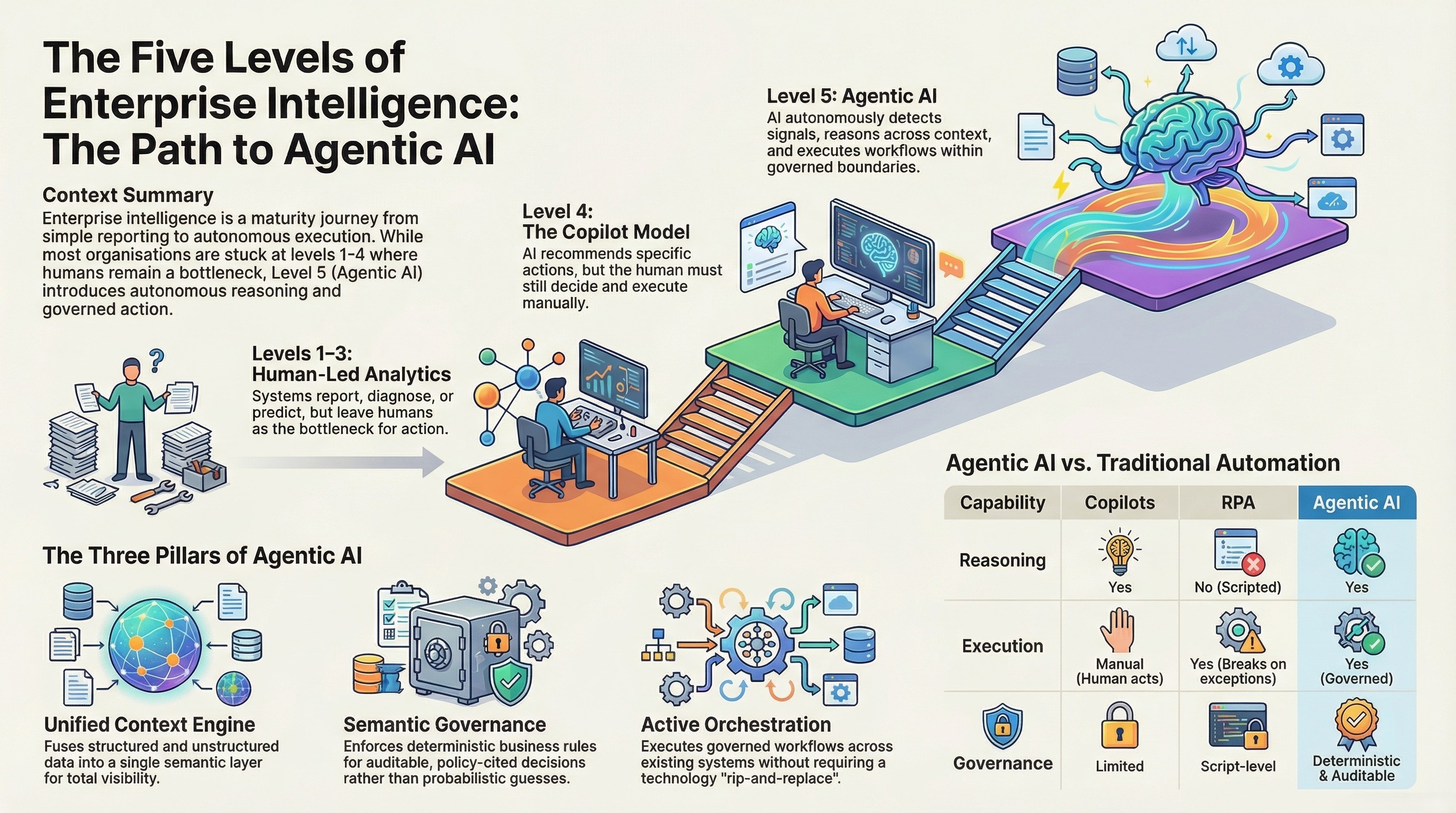

The term "agentic AI" is applied to everything from chatbots with slightly improved prompts to fully autonomous workflow engines. In enterprise settings, the distinctions matter enormously because the maturity level of your AI directly determines the risk it carries and the value it can deliver.

At the descriptive level (Level 1), enterprise systems generate reports: revenue summaries, inventory counts, operational dashboards. This is what most organizations have today: tools that tell you what happened, on a schedule measured in days or weeks. Coverage is roughly 20% of enterprise data, and action is manual.

At the diagnostic level (Level 2), systems begin to answer why. Why did revenue dip in a particular region? Why did fulfilment times spike last quarter? This still operates primarily on structured data and still requires humans to interpret findings and decide what to do.

At the predictive level (Level 3), systems forecast what will likely happen: demand projections, risk models, financial forecasts. Many organizations believe they are investing in AI when they purchase predictive analytics tools. The limitation is that prediction without action still leaves humans as the bottleneck for every decision.

At the prescriptive level (Level 4), systems recommend what to do. This is the copilot model: the AI suggests a staffing adjustment, flags a billing anomaly, or recommends a marketing action, but the human still decides and acts. For organizations processing thousands of transactions daily, this bottleneck is significant.

Agentic AI is Level 5 enterprise intelligence where AI agents autonomously detect signals, reason across the full data context (structured, unstructured, and external), execute multi-step workflows across enterprise systems, and produce auditable decision trails — all within deterministic governance boundaries that define which actions are autonomous and which require human approval.

The critical difference is infrastructure. Agentic AI requires three capabilities that no other category combines. The first is a unified context engine that fuses all data types into a single semantic layer so agents see the full picture. The second is a semantic governor that enforces deterministic business rules, not probabilistic confidence scores, so every decision is auditable and cites the policy behind it. The third is an active orchestrator that executes governed workflows across existing enterprise systems without requiring a rip-and-replace of current technology.
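To make the idea of a deterministic governor concrete, here is a minimal Python sketch, assuming each business rule can be written as a pair of plain predicates over a proposed action. The policy IDs, the action fields (`type`, `amount`, `pay_date`, `contract_due_date`), and the default-deny behaviour for actions no rule covers are illustrative assumptions, not a description of any particular product. What it shows is the property claimed above: the same action and rules always produce the same outcome, and the outcome names the policies that produced it.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Rule:
    """One deterministic business rule with a citable policy ID (IDs here are hypothetical)."""
    policy_id: str
    applies: Callable[[dict], bool]            # does this rule cover the proposed action?
    requires_approval: Callable[[dict], bool]  # does it force human sign-off?


@dataclass(frozen=True)
class Decision:
    autonomous: bool
    cited_policies: Tuple[str, ...]


def govern(action: dict, rules: List[Rule]) -> Decision:
    """Evaluate every applicable rule; identical inputs always give an identical decision."""
    applicable = [r for r in rules if r.applies(action)]
    if not applicable:
        # Assumed default-deny: an action no rule covers is escalated, never executed.
        return Decision(autonomous=False, cited_policies=())
    blocking = tuple(r.policy_id for r in applicable if r.requires_approval(action))
    if blocking:
        # Cite only the policies that forced escalation.
        return Decision(autonomous=False, cited_policies=blocking)
    return Decision(autonomous=True, cited_policies=tuple(r.policy_id for r in applicable))


# Hypothetical payment rules in the spirit of a vendor-payment scenario.
RULES = [
    Rule("POL-PAY-001",
         applies=lambda a: a["type"] == "payment",
         requires_approval=lambda a: a["amount"] > 1_000_000),
    Rule("POL-PAY-002",
         applies=lambda a: a["type"] == "payment",
         requires_approval=lambda a: a["pay_date"] < a["contract_due_date"]),
]

on_time = {"type": "payment", "amount": 50_000,
           "pay_date": date(2025, 3, 31), "contract_due_date": date(2025, 3, 31)}
early = dict(on_time, pay_date=date(2025, 2, 1))

print(govern(on_time, RULES))  # Decision(autonomous=True, cited_policies=('POL-PAY-001', 'POL-PAY-002'))
print(govern(early, RULES))    # Decision(autonomous=False, cited_policies=('POL-PAY-002',))
```

Because an escalation cites only the blocking policies, a reviewer sees why the early payment was held rather than a bare confidence score.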

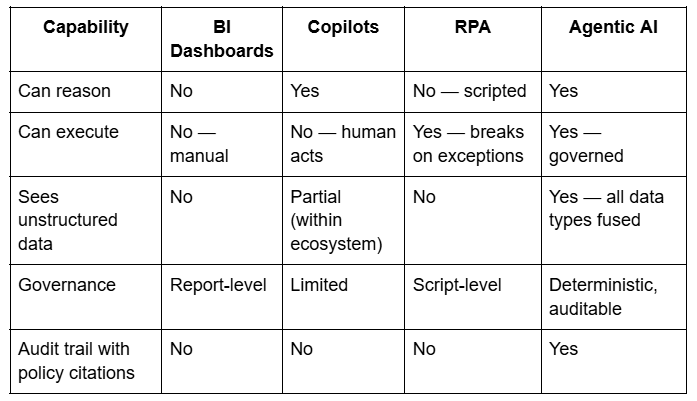


This distinction determines what an organization can actually automate. Copilots assist individual users. RPA automates structured transactions. Agentic AI automates entire enterprise workflows — from signal detection through reasoning, execution, and documentation — with governance and audit trails built into every step.

Why Most Enterprise Agentic AI Projects Fail

Before examining the use cases that succeeded, it is important to understand why the majority do not.

Enterprise data breaks down into three layers. Structured data — ERP tables, CRM fields, POS records, transaction logs, financial databases — represents approximately 10 to 20 percent of total enterprise data volume. This is the data that BI tools, dashboards, and most AI platforms can access. Semi-structured data — application logs, API responses, JSON exports, system events — represents roughly 5 to 10 percent. Unstructured data — documents, emails, chat conversations, media files, PDFs, contracts, policy documents — represents 70 to 85 percent. This is where the majority of enterprise context actually lives.

Most enterprise AI tools operate exclusively on the structured slice. When an AI agent makes a decision based on that narrow view, it is not providing intelligence. It is providing confidently incomplete answers at machine speed.

In one production deployment, an AI agent tasked with vendor payment automation processed structured billing data flawlessly — invoice amounts, due dates, approval statuses. But it could not access the contract documents with negotiated rates stored in a document management system, the email threads containing revised payment terms, or the flagged compliance exceptions in internal communications. The result was over ₹12 crore in early payments approved, contract terms violated, and negotiated discounts forfeited. The agent performed exactly as designed. The context was incomplete.

This pattern repeats across every industry and every use case category. Agents fail not because models are bad, but because they act on 20% of the information they need. The use cases that follow all share one characteristic — they solved the context problem first, then deployed agents. That is why they are in production and not in the 40% cancellation pile.

Agentic AI Use Cases in Retail and E-Commerce

Use Case 1: National Retail Operations Intelligence (700+ Stores)

A rapidly scaling value retail chain with a pan-India footprint of over 700 stores across hundreds of cities faced a fragmentation problem that grew with every new location. Store support, inventory queries, SOP compliance, and employee training were handled through manual helpdesks and disconnected systems. Each store operated with partial visibility (local POS data, local inventory, local knowledge) and no unified intelligence layer connecting them.

The deployment covered three agent types working in coordination. A voice support agent operating in Hindi and English handled store-level helpdesk queries, reducing the burden on centralised support teams. An inventory intelligence agent provided real-time pricing, stock, and promotional information per store, giving store managers answers that previously required calls to regional offices. A knowledge and training agent built on retrieval-augmented generation over POS and SOP documentation enabled on-demand training guidance for new employees and compliance reference for experienced staff.

The governance layer included an admin console with analytics and ticketing integration, designed for high-volume, multi-language environments. The result was a measurable reduction in manual helpdesk burden, faster store-level issue resolution, improved inventory visibility at the individual store level, and faster onboarding through on-demand training access.

Use Case 2: E-Commerce Analytics and Decision Automation

A high-velocity e-commerce operation needed faster answers to recurring business questions — product performance, promotional effectiveness, customer behaviour patterns — without queuing requests through analyst teams. Sales, product, inventory, promotion, and customer behaviour data was scattered across multiple systems with no conversational access layer.

An agentic analytics layer was deployed with data ingestion across all commercial data sources, a conversational interface for instant business queries, and automated KPI monitoring with exception alerting. The agent did not just surface insights — it monitored thresholds continuously and flagged anomalies before they became problems.
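A minimal sketch of threshold-based exception alerting, assuming each KPI has a fixed lower and/or upper band. The KPI names and band values are hypothetical; a production monitor would also handle scheduling, deduplication, and routing of alerts, which are left out here.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Threshold:
    kpi: str
    lower: Optional[float] = None  # alert when the value drops below this
    upper: Optional[float] = None  # alert when the value rises above this


def check_kpis(values: Dict[str, float], thresholds: List[Threshold]) -> List[str]:
    """Compare the latest KPI values with their bands and return exception alerts."""
    alerts = []
    for t in thresholds:
        value = values.get(t.kpi)
        if value is None:
            # A missing feed is treated as an exception in its own right.
            alerts.append(f"{t.kpi}: no data")
        elif t.lower is not None and value < t.lower:
            alerts.append(f"{t.kpi}: {value} below {t.lower}")
        elif t.upper is not None and value > t.upper:
            alerts.append(f"{t.kpi}: {value} above {t.upper}")
    return alerts


# Hypothetical KPI names and bands.
THRESHOLDS = [
    Threshold("conversion_rate", lower=0.02),
    Threshold("return_rate", upper=0.08),
    Threshold("stockout_rate", upper=0.05),
]
latest = {"conversion_rate": 0.015, "return_rate": 0.05}
for alert in check_kpis(latest, THRESHOLDS):
    print(alert)
```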

The outcome was shorter analysis cycles for recurring questions, better visibility into product performance and promotional effectiveness, and a significant reduction in reporting dependency on human analysts. Questions that previously took days to answer through the BI team were answered in seconds through natural language.

Use Case 3: Competitive Intelligence Monitoring for Enterprise

A major industrial manufacturer competing in highly price-sensitive markets needed always-on visibility into competitor pricing, promotions, product availability, and market positioning. The data environment included over 10 million data points across ERP systems, external market feeds, and competitor catalogs. Traditional BI tools could see approximately 20% of this data — the structured internal slice.

The deployment delivered continuous e-commerce and channel monitoring covering pricing, MRP and discount structures, offers, availability, and ratings across competitor portals. An agentic Q&A layer mapped directly to the 31 strategic questions leadership needed answered regularly. Analytics views surfaced pricing gaps, competitive threats, and portfolio movement in real time.

The architecture was built with full governance and audit trails, scaling from proof-of-concept to production without architectural changes. The measured results were striking: 93% answerability on the 31 strategic leadership questions (up from single digits with previous BI tools), a 12 to 26% pricing gap identified and corrected immediately, and always-on monitoring replacing manual checks across competitor portals that had previously required dedicated analyst hours every day.

Agentic AI Use Cases in Supply Chain and Logistics

Use Case 4: Global Multi-Entity Logistics Consolidation

An Indian multinational logistics and warehousing company serving customers across India, the UK, Europe, and the US faced the classic multi-entity problem. Each geography operated its own reporting, its own KPI definitions, and its own dashboards. Leadership needed a single operational view but could not get one because the data was fragmented across entities with inconsistent definitions.

The agent deployment focused on cross-entity KPI standardisation and consolidated reporting, operational dashboards with variance explanations, and a data quality and governance layer that enforced consistent definitions across geographies. The agents did not just aggregate data — they standardised it semantically before presenting it.
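Standardising semantically before aggregating can be as simple as one adapter per entity that maps its local report onto a single canonical definition. The sketch below assumes three hypothetical entities reporting on-time delivery as a percentage, a fraction, and raw counts respectively; the entity codes and field names are illustrative only. The design choice worth noting is that an entity with no mapped definition raises an error instead of being silently summed with the others.

```python
from typing import Callable, Dict

# Hypothetical adapters: each maps one entity's local report onto a single
# canonical definition, on-time delivery as a fraction between 0 and 1.
ADAPTERS: Dict[str, Callable[[dict], float]] = {
    "IN": lambda r: r["otd_pct"] / 100,            # reports a percentage
    "UK": lambda r: r["on_time_ratio"],             # already reports a fraction
    "US": lambda r: r["on_time"] / r["shipments"],  # reports raw counts
}


def standardise(reports: Dict[str, dict]) -> Dict[str, float]:
    """Convert every entity's on-time figure to the canonical definition."""
    result = {}
    for entity, report in reports.items():
        if entity not in ADAPTERS:
            # Refuse to consolidate a figure whose definition has not been mapped.
            raise KeyError(f"no KPI definition mapped for entity {entity!r}")
        value = ADAPTERS[entity](report)
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{entity}: on-time rate {value} is outside 0..1")
        result[entity] = round(value, 4)
    return result


print(standardise({
    "IN": {"otd_pct": 92.5},
    "UK": {"on_time_ratio": 0.88},
    "US": {"on_time": 450, "shipments": 500},
}))
# {'IN': 0.925, 'UK': 0.88, 'US': 0.9}
```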

The result was a genuinely unified operational view across all entities for the first time, faster leadership reporting and issue identification, and measurably improved consistency of operational metrics. Variances that previously took weeks to investigate were explained automatically.

Use Case 5: Port and Rail Terminal Operations

A global ports and logistics leader with reported revenue exceeding $20 billion operated a portfolio spanning ports, terminals, and logistics services worldwide. Port and rail operations were siloed — terminal data was invisible to logistics agents, rail scheduling operated independently, and exception management was manual.

The deployment digitised terminal workflows, created yard and rail operational dashboards, enabled rail scheduling and visibility with exception management, and provided executive dashboards with operational alerts. The orchestration layer connected terminal operations to inland logistics in a single governed view.

The outcome was improved predictability of terminal-to-rail throughput, more efficient coordination across terminal and inland logistics operations, and executive visibility into operational performance that had never existed in a unified format.

Use Case 6: Pharma Supply Chain Procurement Automation

A pharma sourcing platform managing 1,800+ rare excipients and 7,500+ SKUs needed to automate the procurement discovery and RFQ process across a complex supply chain. Identifying suppliers, managing quality and regulatory documentation, and analysing pricing and lead-time data were overwhelmingly manual tasks.

The agent deployment covered RFQ automation with supplier matching workflows, quality and regulatory document handling support, and analytics on price, lead-time, and vendor performance. The agents automated the discovery and evaluation steps that previously required dedicated procurement staff for every transaction.

Procurement cycles shortened measurably. Sourcing visibility improved because the agent could evaluate multiple suppliers simultaneously against quality, regulatory, price, and lead-time criteria. Manual vendor coordination and follow-up effort dropped significantly.

Agentic AI Use Cases in Banking and Financial Services

Use Case 7: Omnichannel Banking Support Automation

A global fintech provider delivering cloud-based automation for banks and credit unions faced a challenge common across financial services: banking queries arrived through multiple channels — chat, email, phone — with no unified case context. An agent handling an email inquiry had no visibility into a related phone call from the same customer. Compliance and auditability requirements added another layer of complexity.

The deployment created omnichannel intake with intelligent workflow routing, agent-assist summarisation with next-best-action recommendations, and a full auditability, reporting, and SLA monitoring layer. The system was integration-ready with core banking systems from day one.

The result was faster case handling, improved consistency across channels, reduced operational load through automation, and significantly better compliance readiness through built-in audit trails. Every agent action was traceable, every decision was documented, and SLA monitoring was continuous rather than periodic.

Use Case 8: AI CFO Agent for Real-Time Financial Intelligence

An AI CFO platform serving growing businesses, CFOs, and financial advisors needed to deliver continuous cashflow insight, forecasting, and actionable guidance without requiring the cost of dedicated advisory headcount for every client.

The agent architecture included a financial data connection layer integrating accounting and banking exports, forecast and scenario modelling agents that ran continuously, alerting for runway and cash risks with recommended actions, and portfolio views enabling advisors to manage multiple clients from a single interface.

The outcome was faster analysis cycles, earlier detection of cash risks and anomalies, and scalable advisory-quality insight without proportional headcount increases. Advisors managing multiple client portfolios could see risks across all clients simultaneously rather than reviewing each one manually.

Use Case 9: Automotive Leasing Portfolio Intelligence

An independent automotive leasing provider operating manufacturer and dealer network programs needed portfolio-wide visibility into risk, delinquency, maturity, and residual values. The data existed across multiple systems but was not unified in a way that enabled proactive management.

The deployment covered portfolio KPIs across risk, delinquency, maturity, and residuals, dealer network performance analytics, and exception-based alerts for early risk signals. The agents monitored the entire portfolio continuously rather than generating periodic reports.

Better portfolio visibility and faster risk identification were the immediate results. Decision support for program operations improved because leadership had real-time data rather than monthly summaries. The shift from periodic review to continuous monitoring enabled genuinely proactive management through early exception detection.

Use Case 10: Cross-Border Tax Research and Pre-Screening

A tax-tech platform focused on early screening of cross-border transactions needed to automate the identification of withholding tax risks, VAT mismatches, and permanent establishment issues. These assessments were traditionally manual, slow, and error-prone — and errors had significant financial consequences.

The agent deployment included transaction screening workflows with risk classification, evidence collection with explainability notes for every assessment, and escalation workflows to human tax experts for complex cases. The governance layer ensured that every automated assessment was documented and defensible.

Earlier detection of withholding and VAT risk was the primary outcome. Last-minute deal disruptions caused by late-discovered tax issues dropped measurably. Pre-compliance review became faster and more consistent because the agent applied the same analytical framework to every transaction rather than depending on individual analyst judgment.

Use Case 11: Sales and Use Tax Research Automation

A specialised tax research tool needed to automate the source collection, analysis, and documentation workflow for tax professionals. The manual process — hunting for sources, reading and analysing them, drafting memos and position papers — consumed hours per transaction.

The agent automated source collection and summarisation, draft memo and position output generation with citations, and workflow tracking with knowledge base building. Each research cycle produced documented outputs that built the firm's institutional knowledge base.

Research cycles shortened significantly. Documentation quality improved because the agent applied consistent standards to every output. Manual source-hunting time — previously the largest time sink in the workflow — was largely eliminated.

Agentic AI Use Cases in Healthcare

Use Case 12: Inpatient Care Revenue and Operations Analytics

A physician-led clinical enterprise operating hospitalist programs across multiple facilities faced fragmented revenue and operational data. Scheduling, billing, and care-program performance data lived in separate systems. Identifying revenue leakage required manual correlation across all three — slow, inconsistent, and dependent on analyst availability.

The deployment included a revenue and utilisation analytics model, performance dashboards with automated variance explanations, and action lists for billing workflow and operational optimisation. The agents did not just report metrics — they explained variances and recommended specific actions.

Visibility into revenue leakage drivers improved immediately because the agent could correlate billing, scheduling, and documentation data simultaneously. Operational decision-making accelerated because leaders no longer needed to manually correlate data across systems. Performance tracking became more reliable because it was continuous rather than periodic.

Use Case 13: Geriatric Care Program Operations

A geriatric care services provider delivering physician-led programs across assisted living and long-term care settings generated fragmented data across staffing, service delivery, and revenue cycles. No unified operational view existed, and identifying bottlenecks required manual investigation.

Governed agents were deployed for program operations dashboards, staffing and service delivery analytics, and revenue cycle visibility with exception alerts. The governance layer ensured that every automated insight was traceable to specific care-program policies and compliance rules.

Operational bottlenecks were identified faster because the agent could see staffing, service delivery, and revenue data simultaneously. Transparency into service performance improved across all programs. Leadership decision support strengthened because every recommendation came with a documented governance trail.

Use Case 14: High-Volume Healthcare Testing Workflows

A UK-based private healthcare and testing provider processed thousands of patient interactions daily across a workflow spanning booking, specimen collection, laboratory processing, result generation, and patient communication. Each handoff was a potential failure point — a missed notification, a delayed result, a dropped communication.

The deployment automated booking and workflow orchestration, status monitoring with customer notifications, and reporting dashboards with operational analytics. The orchestration layer managed the end-to-end workflow rather than individual steps.

Operations became more scalable with significantly reduced manual overhead. Customer communications were faster with fewer missed handoffs. Service visibility improved through unified reporting that showed the entire patient journey rather than individual system views.

Use Case 15: Healthcare Staffing and Credential Management

A healthcare staffing platform connecting nursing professionals with facilities for flexible shifts needed to match available professionals — with specific credentials, compliance requirements, and scheduling preferences — to facility requests with specific skill requirements, shift timings, and compliance mandates. The data lived in disconnected systems.

The agent deployment covered talent onboarding and credential capture, facility staffing request intake and matching logic, scheduling with notifications and compliance workflows, and reporting for fill-rate and utilisation. The governance layer ensured no match was made without verified credentials and compliance clearance.
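The rule that no match is made without verified credentials translates naturally into a hard gate evaluated before any skill or availability matching. In this sketch the data classes, the credential codes (`RN`, `BLS`), the facility name, and the assumption that only verified credentials are stored, each with an expiry date, are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List, Set, Tuple


@dataclass
class Professional:
    name: str
    skills: Set[str]
    credentials: Dict[str, date]  # assumed: only *verified* credentials, mapped to expiry dates
    available_shifts: Set[str]


@dataclass
class ShiftRequest:
    facility: str
    shift: str
    skill: str
    required_credentials: Set[str]
    shift_date: date


def eligible(p: Professional, req: ShiftRequest) -> bool:
    # Governance gate: every required credential must be verified and unexpired on the shift date.
    credentials_ok = all(
        c in p.credentials and p.credentials[c] >= req.shift_date
        for c in req.required_credentials
    )
    return credentials_ok and req.skill in p.skills and req.shift in p.available_shifts


def match(pros: List[Professional], requests: List[ShiftRequest]) -> List[Tuple[str, str, List[str]]]:
    """Evaluate every professional against every open request in one pass."""
    return [(r.facility, r.shift, [p.name for p in pros if eligible(p, r)]) for r in requests]


pros = [
    Professional("Asha", {"icu"}, {"RN": date(2026, 12, 31), "BLS": date(2025, 1, 31)}, {"night"}),
    Professional("Ben", {"icu"}, {"RN": date(2026, 12, 31), "BLS": date(2026, 6, 30)}, {"night"}),
    Professional("Chen", {"er"}, {"RN": date(2026, 12, 31), "BLS": date(2026, 6, 30)}, {"night"}),
]
requests = [ShiftRequest("Facility-A", "night", "icu", {"RN", "BLS"}, date(2025, 6, 1))]
print(match(pros, requests))  # [('Facility-A', 'night', ['Ben'])]
```

Asha is excluded because her BLS credential expired before the shift date, and Chen because his skill does not match the request; only Ben passes every check.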

Fill cycles shortened measurably. Workforce utilisation improved because the agent could evaluate all available professionals against all open requests simultaneously. Staffing responsiveness for facilities increased because matching happened in minutes rather than hours.

Agentic AI Use Cases in Manufacturing and Industrial Operations

Use Case 16: Competitive Intelligence for Manufacturing

A major HVAC and refrigeration manufacturer founded in the 1940s competed in highly price-sensitive consumer and commercial markets where competitor visibility and pricing moves mattered daily. Over 10 million data points across ERP, market feeds, and competitor catalogs needed to be monitored continuously — and traditional BI tools could access only the structured 20%.

This is the same deployment described in Use Case 3, viewed through the manufacturing lens. The continuous monitoring, agentic Q&A, and pricing gap analytics were specifically designed for a manufacturing enterprise where competitive response speed directly determines market share.

The 93% answerability rate on strategic questions, the 12 to 26% pricing gap identification, and the replacement of manual competitor monitoring with always-on automated intelligence represent a step change in how manufacturing enterprises can compete. The agent did not just report competitor prices — it correlated pricing data with inventory levels, promotional activity, and market positioning to surface actionable intelligence.

Use Case 17: Campus Energy Management

A premier research institution with campus-scale operations needed reliable energy infrastructure monitoring. Utility and sensor data generated continuous streams that were overwhelming to monitor manually, and inefficiencies were typically identified only after they had already caused significant waste.

The deployment included utility and sensor data ingestion with anomaly detection, forecasting and optimisation recommendations, and dashboards with proactive alerting. The agent monitored the entire campus energy profile continuously and flagged anomalies before they became costly.

Energy visibility improved because the agent correlated data across all utility systems simultaneously. Detection of inefficiencies accelerated because anomalies triggered immediate alerts rather than appearing in periodic reports. Operations became more predictable through early alerts that enabled preventive action rather than reactive response.
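The source does not say which anomaly-detection method the deployment used. As one common, simple technique, the sketch below flags a reading that sits more than three standard deviations from the mean of the preceding window (a rolling z-score). The hourly kWh series, the 24-reading window, and the 3.0 limit are all assumptions for illustration.

```python
from statistics import mean, stdev
from typing import List, Sequence


def rolling_zscore_anomalies(readings: Sequence[float], window: int = 24,
                             z_limit: float = 3.0) -> List[int]:
    """Flag indices whose reading sits more than z_limit standard deviations
    from the mean of the preceding `window` readings."""
    if window < 2:
        raise ValueError("window must hold at least two readings")
    anomalies = []
    for i in range(window, len(readings)):
        history = readings[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            # Perfectly flat history: any change at all is unusual.
            if readings[i] != mu:
                anomalies.append(i)
        elif abs(readings[i] - mu) / sigma > z_limit:
            anomalies.append(i)
    return anomalies


# Hypothetical hourly kWh readings: three calm 8-hour cycles, one spike, then normal again.
hourly_kwh = [100, 102, 98, 101, 99, 100, 103, 97] * 3 + [160, 100, 101]
print(rolling_zscore_anomalies(hourly_kwh))  # [24]
```

One design caveat: the flagged spike stays in the trailing window for the next 24 readings and widens the standard deviation, so a production detector might exclude flagged points from its baseline.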

Use Case 18: Enterprise Group Procurement and Finance Intelligence

One of the UAE's most prominent family business groups — comprising 30+ companies across retail, building, industrial, and services portfolios — needed group-wide visibility into purchase price trends, gross margin impact, vendor performance, and working capital metrics. Each entity had its own reporting, its own definitions, and its own dashboards.

The deployment covered group-wide KPI standardisation, automated alerts for purchase price trends, gross margin impact, early-payment analysis including notional finance cost, and vendor performance covering delivery and returns. Scheduled insight packs were generated automatically for leadership.
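Early-payment analysis weighs what is gained by paying before the due date (any discount) against the notional finance cost of the cash given up early. A common simple-interest form, assumed here rather than taken from the deployment, is: notional finance cost = amount × annual cost of capital × days early ÷ 365. The invoice amount, dates, 12% cost of capital, and 1% discount below are hypothetical.

```python
from datetime import date


def early_payment_analysis(amount: float, paid_on: date, due_on: date,
                           annual_cost_of_capital: float,
                           discount_rate: float = 0.0,
                           day_count: int = 365) -> dict:
    """Weigh the discount captured by paying early against the notional
    finance cost of parting with cash before the due date."""
    days_early = (due_on - paid_on).days
    if days_early <= 0:
        # Paid on or after the due date: no early-payment effect to analyse.
        return {"days_early": days_early, "finance_cost": 0.0,
                "discount": 0.0, "net_benefit": 0.0}
    # Simple-interest notional cost of the cash tied up for days_early days.
    finance_cost = amount * annual_cost_of_capital * days_early / day_count
    discount = amount * discount_rate
    return {"days_early": days_early,
            "finance_cost": round(finance_cost, 2),
            "discount": round(discount, 2),
            "net_benefit": round(discount - finance_cost, 2)}


# Hypothetical invoice: 1,000,000 paid 30 days early at a 12% annual cost of capital.
print(early_payment_analysis(1_000_000, date(2025, 3, 2), date(2025, 4, 1), 0.12))
print(early_payment_analysis(1_000_000, date(2025, 3, 2), date(2025, 4, 1), 0.12,
                             discount_rate=0.01))
```

Paying 1,000,000 thirty days early at a 12% cost of capital carries a notional cost of about 9,863. With no discount that is a pure loss, while a 1% discount (10,000) turns the net result slightly positive, at about 137.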

The transformation was from entity-by-entity manual review to continuous, standardised group intelligence. Earlier detection of margin erosion and vendor slippage became possible because the agent monitored all entities simultaneously. Variance surprises in quarterly reviews dropped because continuous monitoring caught deviations in real time.

Agentic AI Use Cases in Smart Cities and Utilities

Use Case 19: Smart Grid Agentic Analytics (25+ City Operations)

A smart infrastructure unit operating at city-scale — touching over 150 million urban lives, running 25+ smart city operation centres, and connecting 2 million+ assets and applications — had monitoring blind spots across the grid. The scale of data flowing from connected infrastructure was beyond human monitoring capacity, and reactive dashboards meant problems were identified only after they caused impact.

The deployment included smart grid data ingestion with operational dashboards, predictive analytics for outages, losses, and field issues, and automated alerts with workflow routing for resolution. The agents transformed grid operations from reactive monitoring to proactive prediction and intervention.

Higher operational visibility across all grid operations was the immediate result. Exception detection and response coordination accelerated because the agent flagged issues before they cascaded. Proactive grid operations replaced reactive dashboards — alerts were triggered by predicted issues rather than observed failures, enabling intervention before customer impact.

Use Case 20: Power Transmission KPI Monitoring

A state power transmission utility responsible for operating and maintaining transmission systems to deliver reliable power across a large state faced the monitoring challenges endemic to aging grid infrastructure. Manual monitoring and reactive response meant that outages and losses were often identified too late.

The deployment covered transmission KPI monitoring with anomaly detection, loss and outage analytics with predictive maintenance indicators, and dashboards with automated alerts for field operations. The agent monitored the entire transmission network continuously and flagged exceptions that warranted investigation.

Grid exceptions and operational risks were identified faster because the agent could process the full data stream from all monitoring points simultaneously. Reliability improved through proactive monitoring that caught degradation patterns before they caused outages. Operational transparency for leadership improved because performance data was continuous and standardised.

Agentic AI Use Cases in Real Estate and Property Management

Use Case 21: Tenant and Customer Support Automation

A major real estate portfolio owner and manager with diversified office, retail, industrial, and residential assets across multiple emirates needed consistent customer service across all property types and geographies. Tenant queries ranged from rental payments and lease terms to maintenance requests and facility issues — and service quality varied significantly across properties.

The deployment created an omnichannel service agent operating across web, WhatsApp, and email. The agent handled tenant query triage, FAQs, rental and payment support workflows, and ticketing with escalation to human teams. A knowledge base was built over policies, tenancy documents, and SOPs to ensure consistent, accurate responses.

Response times dropped and call-centre load decreased measurably. Tenants received consistent 24/7 support regardless of which property they occupied or which channel they used. SLA adherence improved through automated routing and tracking that ensured no query fell through the cracks.

Agentic AI Use Cases in Hospitality and Travel

Use Case 22: Luxury Travel Booking Agent

A luxury hospitality brand operating a collection of 16 boutique lodges, camps, and hotels in iconic safari locations across East Africa served high-expectation global travellers. Booking workflows were complex — multi-property itineraries, specific guest preferences, seasonal availability constraints, and a service quality standard that could not tolerate automated responses that felt impersonal.

The deployment included email intake with intent classification and data extraction, a conversational loop to capture missing details from guests, real-time inventory checks with alternative date and property negotiation, hybrid handoff for curated itinerary creation, and automated invoice and PDF document generation.
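The conversational loop that captures missing details amounts to checking an extracted request against a list of required fields and asking only for what is absent. A minimal sketch, with hypothetical field names and question wording; the intent classification and extraction steps that would populate the dictionary are assumed to happen upstream.

```python
from typing import Dict, List, Tuple

# Hypothetical booking fields and the follow-up question for each.
REQUIRED_FIELDS: Dict[str, str] = {
    "arrival_date": "Which date would you like to arrive?",
    "nights": "How many nights would you like to stay?",
    "guests": "How many guests will be travelling?",
    "property": "Which lodge or camp would you like to stay at?",
}


def missing_details(extracted: dict) -> List[str]:
    """Return the follow-up questions for every required field not yet captured."""
    return [question for field, question in REQUIRED_FIELDS.items()
            if extracted.get(field) in (None, "")]


def next_step(extracted: dict) -> Tuple[str, List[str]]:
    """Keep asking until the request is complete; only then check inventory."""
    questions = missing_details(extracted)
    if questions:
        return "ask_guest", questions
    return "check_inventory", []


# Details an upstream extractor might have pulled from a first email (hypothetical).
first_email = {"arrival_date": "2025-08-14", "guests": 2, "property": ""}
print(next_step(first_email))
# ('ask_guest', ['How many nights would you like to stay?', 'Which lodge or camp would you like to stay at?'])
```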

The human-in-the-loop design was essential — the agent handled the data-heavy aspects (availability checking, date negotiation, document generation) while human concierges focused on the curated, personal elements. Booking turnaround improved with reduced back-and-forth. Accuracy on complex guest requirements increased because the agent systematically captured all details before handoff. Operations scaled without compromising the luxury service standard.

Agentic AI Use Cases in Software Development and Technical Operations

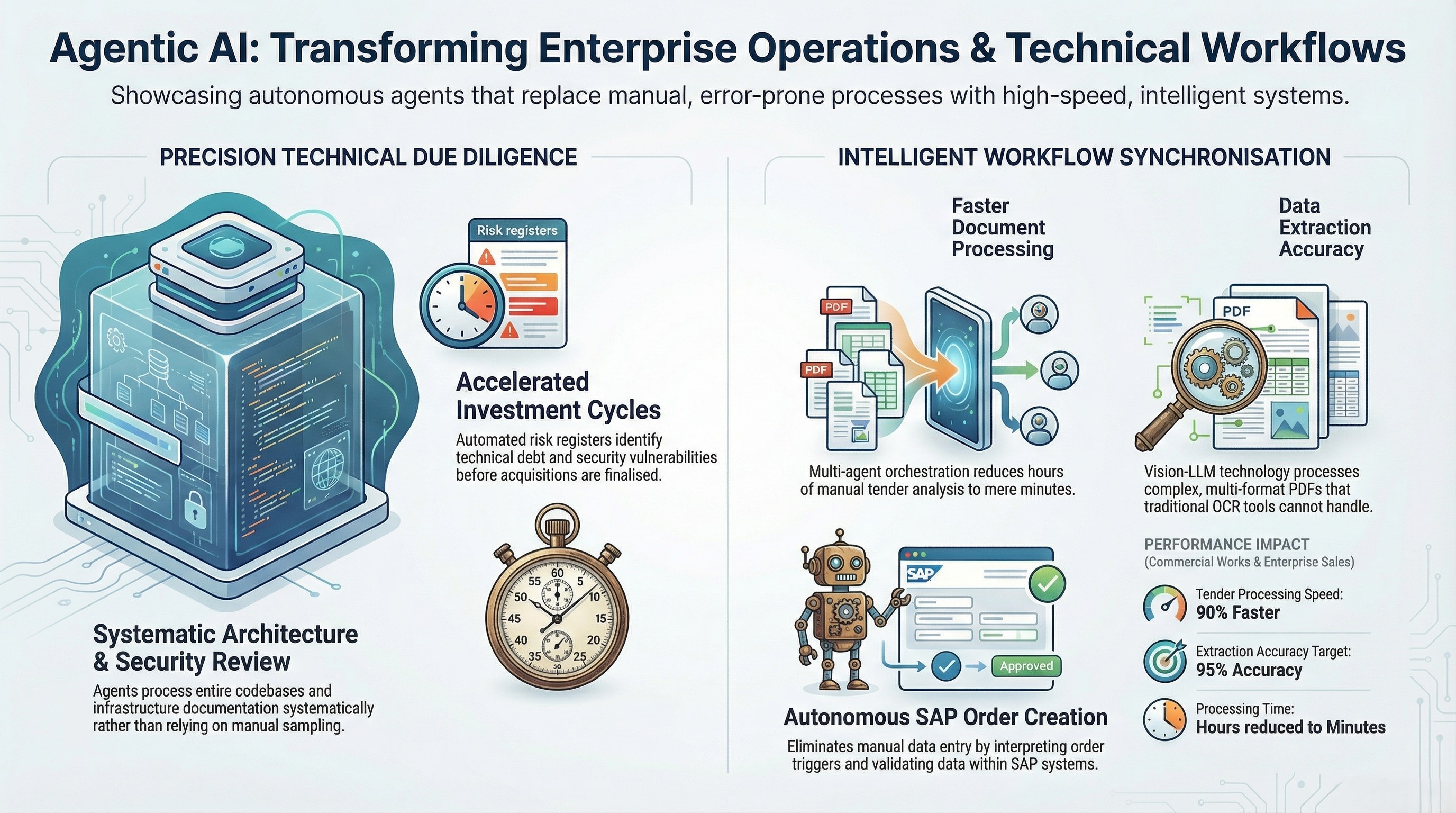

Use Case 23: Technical Due Diligence for Investment Decisions

A long-term holding company partnering with founders and family businesses needed rigorous technical assessment before acquisitions. Evaluating architecture, scalability, security, and integration readiness across target companies was time-consuming and dependent on expensive specialist consultants.

The agent deployment covered code and architecture review with infrastructure and security assessment, scalability, resilience, and integration readiness evaluation, and automated risk register and remediation roadmap generation. The agents processed technical documentation and codebases systematically rather than relying on sample-based manual review.

Investment decisions accelerated because technical risk visibility was clear and structured from the start. Post-deal surprises decreased because the remediation roadmap identified issues before acquisition rather than after. Confidence in scalability and security posture improved because the assessment was comprehensive rather than sample-based.

Use Case 24: Autonomous Document Processing and System Synchronisation

An Australian waterproofing and commercial works specialist with over 20 years of experience needed to process complex tender documents with high data integrity requirements. Tender packages included multi-format PDFs with revisions, technical specifications, pricing schedules, and compliance requirements — all of which needed to be ingested, analysed, and synchronised with core operational systems.

The deployment created an Intelligent Document Workbench using multi-agent orchestration. The system handled tender retrieval, workflow determination, and revision analysis. Vision-LLM extraction processed complex PDFs that traditional OCR could not handle. Deep system integration included full CRUD operations, quote locking, and comprehensive audit logs.

The system was engineered for up to 90% faster tender document processing with a 95% extraction accuracy target for standard formats. Bid risk decreased through automated revision and change detection with full auditability. The hours previously spent on manual document processing for each tender were reduced to minutes.
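The revision and change detection mentioned above can be sketched in a few lines. This is a minimal illustration, not the deployed system. It assumes line items have already been extracted from each tender revision into dictionaries keyed by a hypothetical item code, and `detect_changes` and `Change` are names invented for this example.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Change:
    item: str
    kind: str            # "added", "removed", or "modified"
    before: dict | None  # values in the earlier revision, if any
    after: dict | None   # values in the later revision, if any


def detect_changes(old_rev: dict[str, dict], new_rev: dict[str, dict]) -> list[Change]:
    """Compare two extracted tender revisions keyed by (hypothetical) item code."""
    changes: list[Change] = []
    # Walk the union of item codes so additions and removals are both caught.
    for item in sorted(old_rev.keys() | new_rev.keys()):
        before, after = old_rev.get(item), new_rev.get(item)
        if before is None:
            changes.append(Change(item, "added", None, after))
        elif after is None:
            changes.append(Change(item, "removed", before, None))
        elif before != after:
            changes.append(Change(item, "modified", before, after))
    return changes
```

Each `Change` keeps both the old and the new values, so a reviewer can see exactly what moved between revisions instead of re-reading the whole package.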

Use Case 25: SAP Sales Order Automation

An enterprise transitioning away from an end-of-life document management system with high licensing costs needed to automate sales order creation in SAP. The legacy process was manual, error-prone, and dependent on workflows that no longer had vendor support.

The deployment created agentic automation to interpret order triggers, validate data, and create SAP Sales Orders. Governance rules handled exceptions and approvals. Audit logs and reconciliation reporting ensured every order was traceable. The system was designed as an integration-ready replacement for the legacy ECR workflows.

Manual order processing and legacy dependency were eliminated. The order-to-confirm cycle shortened with fewer data-entry errors. Auditability for sales order creation improved because every step was logged and every exception was governed by explicit rules.
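Reconciliation reporting of this kind can be illustrated by matching each order trigger to the sales order created from it. The sketch below assumes both sides are available as dictionaries that map a hypothetical external reference to an order total. It does not call SAP, and `reconcile` and the default tolerance are illustrative assumptions.

```python
from __future__ import annotations

from decimal import Decimal


def reconcile(triggers: dict[str, Decimal], created: dict[str, Decimal],
              tolerance: Decimal = Decimal("0.01")) -> dict[str, list[str]]:
    """Match order triggers to created sales orders by (hypothetical) external reference."""
    report: dict[str, list[str]] = {"missing": [], "unexpected": [], "amount_mismatch": []}
    for ref in sorted(triggers):
        if ref not in created:
            report["missing"].append(ref)          # trigger with no order created
        elif abs(triggers[ref] - created[ref]) > tolerance:
            report["amount_mismatch"].append(ref)  # order total differs from the source
    # Orders with no originating trigger also need a human look.
    report["unexpected"] = sorted(created.keys() - triggers.keys())
    return report
```

Using `Decimal` rather than floats keeps currency comparisons exact, so the tolerance is the only source of leeway in the match.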

Agentic AI Use Cases in B2B Sales and Marketing

Use Case 26: B2B Sales Agent with Governed Execution

A B2B sales operation needed to increase account coverage without proportionally increasing headcount. Opportunity identification, follow-up cadence, renewal monitoring, and pipeline hygiene were all manual and inconsistent across the sales team.

The deployment created an always-on account monitoring agent with signal capture, rule-governed opportunity identification and follow-up orchestration, CRM integration-ready workflows with pipeline hygiene automation, and sales dashboards with leadership alerts.

Account coverage increased without adding headcount because the agent monitored all accounts continuously rather than relying on individual rep attention. Response cycles on opportunities and renewals shortened. Execution consistency improved across the entire team because governed playbooks standardised the follow-up process.
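A rule-governed account monitor reduces to applying the same explicit thresholds to every account on every run. The sketch below assumes two hypothetical playbook rules, a follow-up after 30 days without contact and a renewal task inside a 60-day window. The thresholds, `Account`, and `follow_up_tasks` are illustrative and not taken from the deployment.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date

# Hypothetical playbook thresholds; real values would come from the sales playbook.
STALE_AFTER_DAYS = 30
RENEWAL_WINDOW_DAYS = 60


@dataclass
class Account:
    name: str
    last_touch: date
    renewal_date: date


def follow_up_tasks(accounts: list[Account], today: date) -> list[tuple[str, str]]:
    """Apply the same two rules to every account, so coverage never depends on a rep's memory."""
    tasks: list[tuple[str, str]] = []
    for acct in accounts:
        if (today - acct.last_touch).days > STALE_AFTER_DAYS:
            tasks.append((acct.name, f"follow_up: no contact in over {STALE_AFTER_DAYS} days"))
        days_to_renewal = (acct.renewal_date - today).days
        if 0 <= days_to_renewal <= RENEWAL_WINDOW_DAYS:
            tasks.append((acct.name, f"renewal: due in {days_to_renewal} days"))
    return tasks
```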

Use Case 27: Influencer Marketing Operations Intelligence

A creator-economy platform bringing brands and creators together needed to automate influencer marketing operations across discovery, campaign delivery, and performance intelligence. Manual campaign management could not scale with the platform's growth.

The agent deployment covered creator discovery enrichment and campaign workflow automation, automated reporting summaries and insight generation, content KPI monitoring with brand-safety checks, and analytics for campaign ROI and engagement.

Manual operations across campaigns decreased significantly. Performance visibility improved because reporting was automated and consistent. Learning capture across brand programs became more systematic because the agent documented outcomes from every campaign in a standardised format.

Use Case 28: Brand Insights and Creative Intelligence

A brand insights studio needed to unify signals across creative performance, audience data, and channel analytics to produce actionable insight narratives for marketing teams. The data was scattered across multiple platforms and required manual synthesis.

The deployment included multi-source ingestion across creative, performance, and audience signals, insight agents producing themes, narratives, and recommendations, and automated reporting packs for leadership.

Creative strategy cycles shortened because insights were synthesised automatically rather than manually. Signal synthesis across channels deepened because the agent could correlate data from all sources simultaneously. Campaign teams received clearer direction because recommendations were specific and evidence-backed.

Agentic AI Use Cases in Education and Training

Use Case 29: Global Teacher Support at Scale

A global teacher community and learning platform serving over a million teachers across 131 countries needed scalable competency insights, learning guidance, and automated support workflows. Manual support could not keep pace with the community's growth.

The deployment included teacher profiles with competency insights, a support agent for program and learning queries, and analytics for program operators and partners. The agent provided personalised guidance at a scale that would have required hundreds of human support staff.

The educator community received scalable support without quality degradation. Access to learning resources and guidance accelerated because the agent was available continuously across all time zones. Program operators gained better visibility into engagement and outcomes across the entire global community.

Use Case 30: Driving Institute Operations Intelligence

A multi-branch driving institute focused on modern training experiences needed visibility into the complete student journey — from enrolment through lessons to testing — and operational metrics like instructor utilisation and slot optimisation across branches.

The deployment covered funnel analytics tracking enrolment through lessons to tests, instructor utilisation and slot optimisation, and customer experience dashboards with alerts.

Operational bottlenecks were identified and resolved faster. Scheduling efficiency improved because the agent optimised instructor allocation across branches. Visibility into conversion drivers and performance metrics gave leadership the data needed to make evidence-based operational decisions.
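Instructor utilisation can be read as the share of available instructor slots that were actually booked. A minimal sketch under that assumed definition, with a hypothetical 60% threshold for flagging branches that have spare capacity to rebalance:

```python
from __future__ import annotations

from collections import defaultdict

# Hypothetical target: branches below this share of booked slots are flagged.
MIN_UTILISATION = 0.6


def utilisation_by_branch(slots: list[tuple[str, bool]]) -> dict[str, float]:
    """Each entry is one available instructor slot: (branch, was_it_booked)."""
    booked: dict[str, int] = defaultdict(int)
    available: dict[str, int] = defaultdict(int)
    for branch, is_booked in slots:
        available[branch] += 1
        booked[branch] += int(is_booked)
    return {b: booked[b] / available[b] for b in available}


def underused_branches(utilisation: dict[str, float]) -> list[str]:
    """Branches with idle instructor capacity that could absorb demand from busier ones."""
    return sorted(b for b, u in utilisation.items() if u < MIN_UTILISATION)
```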

What Separates Production Agentic AI From Pilot Failures

Across all 30+ use cases documented in this guide, every deployment that reached production and delivered measurable outcomes shares three architectural characteristics. These are not optional features. They are structural requirements.

The first is a unified context engine. In every successful deployment, the platform fused structured data (ERP, CRM, POS, billing, scheduling), unstructured data (contracts, emails, PDFs, policies, chat conversations), and external signals (market feeds, competitor data, regulatory updates) into a single semantic layer before any agent logic was written. The agent saw the full picture from day one. Deployments that tried to start with structured data and "add unstructured later" consistently failed because agent logic built on partial context produced outputs that were technically correct within their narrow view but operationally wrong.

The second is a semantic governor. In every successful deployment, autonomous actions were governed by deterministic rules — not probabilistic confidence scores. Approval hierarchies, compliance thresholds, escalation criteria, and exception handling were encoded as if-then logic. When an agent approved a routine transaction, that approval was governed by a specific rule with a specific threshold. When the same agent encountered an exception above a defined value, it escalated — not because the model was less confident, but because the rule required it. Every decision was auditable and policy-cited.
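The if-then governance described above can be sketched as an ordered rule table in which every outcome carries the ID of the rule that produced it. The rule IDs, thresholds, and transaction fields below are hypothetical, chosen only to show the shape of deterministic, policy-cited decisions; this is not any specific platform's rule engine.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    rule_id: str
    description: str
    applies: Callable[[dict], bool]
    outcome: str  # "approve", "escalate", or "reject"


# Hypothetical policy, evaluated top to bottom; the first matching rule wins.
RULES = [
    Rule("FIN-001", "Reject payments to vendors not on the approved list",
         lambda t: not t["vendor_approved"], "reject"),
    Rule("FIN-002", "Escalate payments above 10,000 to a finance manager",
         lambda t: t["amount"] > 10_000, "escalate"),
    Rule("FIN-003", "Auto-approve payments up to 10,000 to approved vendors",
         lambda t: t["amount"] <= 10_000, "approve"),
]


def govern(transaction: dict) -> tuple[str, str]:
    """Return (outcome, rule_id) so every decision cites the rule behind it."""
    for rule in RULES:
        if rule.applies(transaction):
            return rule.outcome, rule.rule_id
    # Nothing matched: escalate instead of guessing.
    return "escalate", "DEFAULT-ESCALATE"
```

Because the rules run in a fixed order and the first match wins, the same transaction always produces the same outcome and the same citation; no model confidence score is involved in the decision.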

The third is an active orchestrator. In every successful deployment, agents executed across existing enterprise systems — ERP, CRM, billing, scheduling, communication platforms — without requiring a rip-and-replace of current technology. The orchestrator connected to existing systems of record and coordinated multi-step workflows across them. Human-in-the-loop controls were configurable by risk tier: routine actions proceeded autonomously, high-value or high-risk actions required human approval.
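Risk-tiered human-in-the-loop control can be sketched as a workflow runner that executes low-risk steps directly, pauses at any higher-risk step until a human has approved it, and escalates on unexpected errors instead of failing silently. `Step`, `RunResult`, `run_workflow`, and the tier names are assumptions for illustration; each `action` is a stand-in for a call into an existing system of record.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable

# Hypothetical tier policy: only "low" steps run without a human approval.
AUTONOMOUS_TIERS = {"low"}


@dataclass
class Step:
    name: str
    risk_tier: str             # e.g. "low" or "high" (illustrative tiers)
    action: Callable[[], str]  # stand-in for a call into ERP, CRM, billing, etc.


@dataclass
class RunResult:
    completed: list[str] = field(default_factory=list)
    awaiting_approval: str | None = None  # step paused for a human decision
    escalated: str | None = None          # step that raised an unexpected error


def run_workflow(steps: list[Step], approved: set[str]) -> RunResult:
    result = RunResult()
    for step in steps:
        # Higher-risk steps stop the run until a human has approved them.
        if step.risk_tier not in AUTONOMOUS_TIERS and step.name not in approved:
            result.awaiting_approval = step.name
            return result
        try:
            result.completed.append(f"{step.name}: {step.action()}")
        except Exception:
            # Unexpected failure: hand off to a human rather than fail silently.
            result.escalated = step.name
            return result
    return result
```

Rerunning with the approved step names passed in `approved` resumes the workflow; changing `AUTONOMOUS_TIERS` is the single place where the autonomy boundary moves.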

The three architectural requirements for production agentic AI in enterprise settings are: (1) a unified context engine that fuses all data types into a single semantic layer, (2) a semantic governor that enforces deterministic business rules so every action is auditable, and (3) an active orchestrator that executes governed workflows across existing enterprise systems with configurable human-in-the-loop controls.

The use cases that failed, the kind of projects behind Gartner's projection that more than 40% of agentic AI projects will be cancelled, almost always failed for one of five reasons: context gaps, where agents could not see unstructured data; governance gaps, where autonomous actions had no deterministic rules; data silo architecture, where agents could see only one system at a time; the absence of meaningful audit trails; or the pilot-to-production death valley, where demos worked on clean data but production environments were too complex, too messy, and too exception-heavy.

How to Evaluate Any Agentic AI Platform

For any enterprise evaluating agentic AI platforms, these ten questions will separate production-ready solutions from demonstration-ware. Ask every vendor; the answers will reveal most of what you need to know.

What percentage of your enterprise data can your agents actually see and reason over? If the answer is "we connect to your database," you are getting structured-only visibility: the 10–20% that every BI tool already covers.

Can your agents reason over unstructured data — contracts, emails, PDFs, Slack conversations — not just ERP and CRM fields? Connecting to a document store is not the same as reasoning over its contents.

Is your governance layer deterministic or probabilistic? Can you show the specific rule that triggered a specific agent action? If the answer involves confidence scores, the platform does not have deterministic governance.

Can you show a full audit trail for an autonomous decision, including the policy citation that governed the action? Not a log file — a traceable chain from signal detection through data consultation, rule application, and action execution.
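One way to make the chain from signal detection through data consultation, rule application, and action execution tamper-evident is to hash-chain each audit record to the one before it. This is a minimal sketch under that assumption; the stage names, record layout, and helper functions are hypothetical, and nothing here describes how any particular platform stores its trails.

```python
from __future__ import annotations

import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same content always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_record(trail: list[dict], stage: str, detail: dict) -> None:
    """Append one stage (e.g. signal, data, rule, action) linked to the previous record."""
    prev_hash = trail[-1]["hash"] if trail else GENESIS
    body = {"stage": stage, "detail": detail, "prev_hash": prev_hash}
    trail.append({**body, "hash": _digest(body)})


def verify(trail: list[dict]) -> bool:
    """Recompute every hash; an edited or reordered record breaks the chain."""
    prev_hash = GENESIS
    for rec in trail:
        body = {"stage": rec["stage"], "detail": rec["detail"], "prev_hash": rec["prev_hash"]}
        if rec["prev_hash"] != prev_hash or _digest(body) != rec["hash"]:
            return False
        prev_hash = rec["hash"]
    return True
```

This makes after-the-fact edits detectable rather than impossible: someone who can rewrite every hash can still forge a chain, so a production design would also need to protect the latest hash, for example by storing it somewhere the agent cannot write.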

What happens when the agent encounters an exception it was not designed to handle? Does it fail silently, produce a best-guess output, escalate to a human, or halt? In enterprise settings, silent failure is unacceptable.

How long from pilot to production in a real enterprise environment? Can you show proof from an actual deployment — not a sandbox?

Does your platform require replacing existing enterprise systems, or does it orchestrate what is already in place?

What human-in-the-loop controls exist, and are they configurable by risk tier?

Can you show production deployments with measured outcomes — not demonstrations, not pilots?

How do you handle compliance and governance requirements specific to the industry in question?

Any vendor that cannot answer all ten of these questions with specific, verifiable evidence is not ready for enterprise production.

From Pilot to Production in 30 Days

The pilot-to-production timeline does not need to be measured in quarters or years. With the right infrastructure — context fusion, deterministic governance, and system orchestration built into the platform — the deployment path is measured in weeks.

Week one is discovery and workflow mapping. Identify the highest-value workflows where context gaps cause the most operational friction, compliance risk, or financial leakage. Map data sources: which systems hold structured data, where unstructured documents live, what external feeds exist. Define governance rules: which actions can be autonomous, which require human approval, and what thresholds trigger escalation.

Weeks two through four are deployment. The context engine connects to enterprise data sources and builds the unified semantic layer. Governance rules are encoded as deterministic logic. The first agent goes live on the highest-priority workflow — monitored, governed, and producing auditable outputs from day one.

Day thirty marks a live, governed agent in production — executing enterprise workflows autonomously within defined governance boundaries, producing full audit trails for every action, and providing measurable performance data against the baseline.

The architectural principle that makes this timeline possible is that the platform orchestrates existing systems rather than replacing them. No migration. No rip-and-replace. No year-long implementation.

If your enterprise is evaluating agentic AI, the most important step you can take today is understanding your context gap. What percentage of your enterprise data can your current tools actually see? What decisions are being made on incomplete information? What would change if your agents could see the full picture?

See your enterprise context gap in 48 hours. Book a pilot assessment at assistents.ai.

Frequently Asked Questions

What is agentic AI?

Agentic AI refers to AI systems that operate at Level 5 enterprise intelligence maturity — autonomously detecting signals, reasoning across the full enterprise data context (structured, unstructured, and external), executing multi-step workflows across enterprise systems, and producing auditable decision trails within deterministic governance boundaries. Unlike copilots that recommend actions for humans to execute, agentic AI systems act autonomously on routine decisions while maintaining configurable human-in-the-loop controls for high-risk actions.

What are the most common agentic AI enterprise use cases?

The most common production use cases span competitive intelligence and pricing automation, omnichannel customer support with governed workflows, supply chain and logistics coordination across entities, financial operations including cashflow monitoring and payment automation, healthcare operations covering revenue analytics and staffing, smart grid and utility monitoring, document processing and system synchronisation, and B2B sales automation with governed execution playbooks.

How is agentic AI different from copilots and RPA?

Copilots reason but do not execute — the human remains the bottleneck. RPA executes but cannot reason — it follows scripts and breaks on exceptions. Agentic AI combines reasoning, execution, and governance in a single system. It sees all data types (not just structured), acts autonomously within governance boundaries, and produces auditable decision trails. The fundamental difference is that agentic AI requires three infrastructure layers — a context engine, a semantic governor, and an active orchestrator — that neither copilots nor RPA possess.

What is the 80/20 data problem in enterprise AI?

The 80/20 data problem describes the gap between what enterprise AI tools can see and what actually exists. Approximately 10 to 20 percent of enterprise data is structured (ERP fields, CRM records, transaction logs). The remaining 70 to 85 percent is unstructured (contracts, emails, PDFs, policy documents, chat conversations) or semi-structured (API logs, system events). Most enterprise AI tools operate only on the structured slice, meaning agents make decisions based on a fraction of the available context.

Why do most agentic AI projects fail?

The five most common failure reasons are context gaps where agents cannot reason over unstructured data, governance gaps with no deterministic rules for decision thresholds, data silo architecture where agents see one system at a time, absence of meaningful audit trails, and the pilot-to-production gap where demonstrations succeed on clean data but production environments are too complex. Context gaps are the root cause in the majority of failures.

What industries are deploying agentic AI in production?

Production deployments span retail and e-commerce, supply chain and logistics, banking and financial services, healthcare and clinical operations, manufacturing and industrial operations, smart cities and utilities, real estate and property management, hospitality and travel, and B2B sales and marketing. The use cases range from competitive intelligence to document processing to omnichannel support to financial operations.

How long does it take to deploy agentic AI in an enterprise?

With a platform that includes pre-built context fusion, governance, and orchestration capabilities, the typical timeline is 30 days from kickoff to a live, governed agent in production. Week one covers discovery and workflow mapping. Weeks two through four cover context engine deployment, governance rule encoding, and first-agent launch. This is achievable because the platform orchestrates existing systems rather than replacing them.

What is a Semantic Governor in agentic AI?

A Semantic Governor replaces probabilistic model outputs with deterministic decision logic for governed actions. Instead of the model inferring what action to take, the governor encodes explicit business rules — approval hierarchies, compliance thresholds, escalation criteria, exception handling — as if-then structures. Every governed action is traceable to a specific rule and produces a documented audit trail. The distinction matters because enterprise decisions cannot be governed by confidence scores.

Can agentic AI work with existing enterprise systems?

Yes. Production-ready agentic AI platforms are designed to orchestrate existing systems — ERP, CRM, billing, scheduling, communication platforms — without requiring replacement or migration. The orchestration layer connects to existing systems of record and coordinates workflows across them. This is what enables 30-day deployment timelines rather than year-long implementations.

How do you evaluate agentic AI platforms?

The critical evaluation criteria are data coverage (can the platform reason over unstructured data, not just structured), governance type (deterministic rules vs probabilistic confidence), audit trail depth (full decision chain with policy citations vs basic logging), deployment model (orchestrates existing systems vs requires replacement), production proof (real deployments with measured outcomes vs demonstrations), and human-in-the-loop configurability (risk-tiered controls vs blanket restrictions).
