Top 8 Agentic AI Use Cases in Data Engineering (Updated 2026) — With Real Client Case Studies

It's Monday morning. A data engineer opens their dashboard and sees red alerts everywhere. Pipelines are failing, data sources are acting up, and the team is scrambling. Sound familiar?

Now imagine those alerts start resolving themselves — not because someone wrote smarter rules, but because the system learned what normal looks like, spotted the deviation, and fixed it before anyone noticed.

That's agentic AI in data engineering. And it's not a future state. The case studies below are live deployments, across 8 industries, on 4 continents.

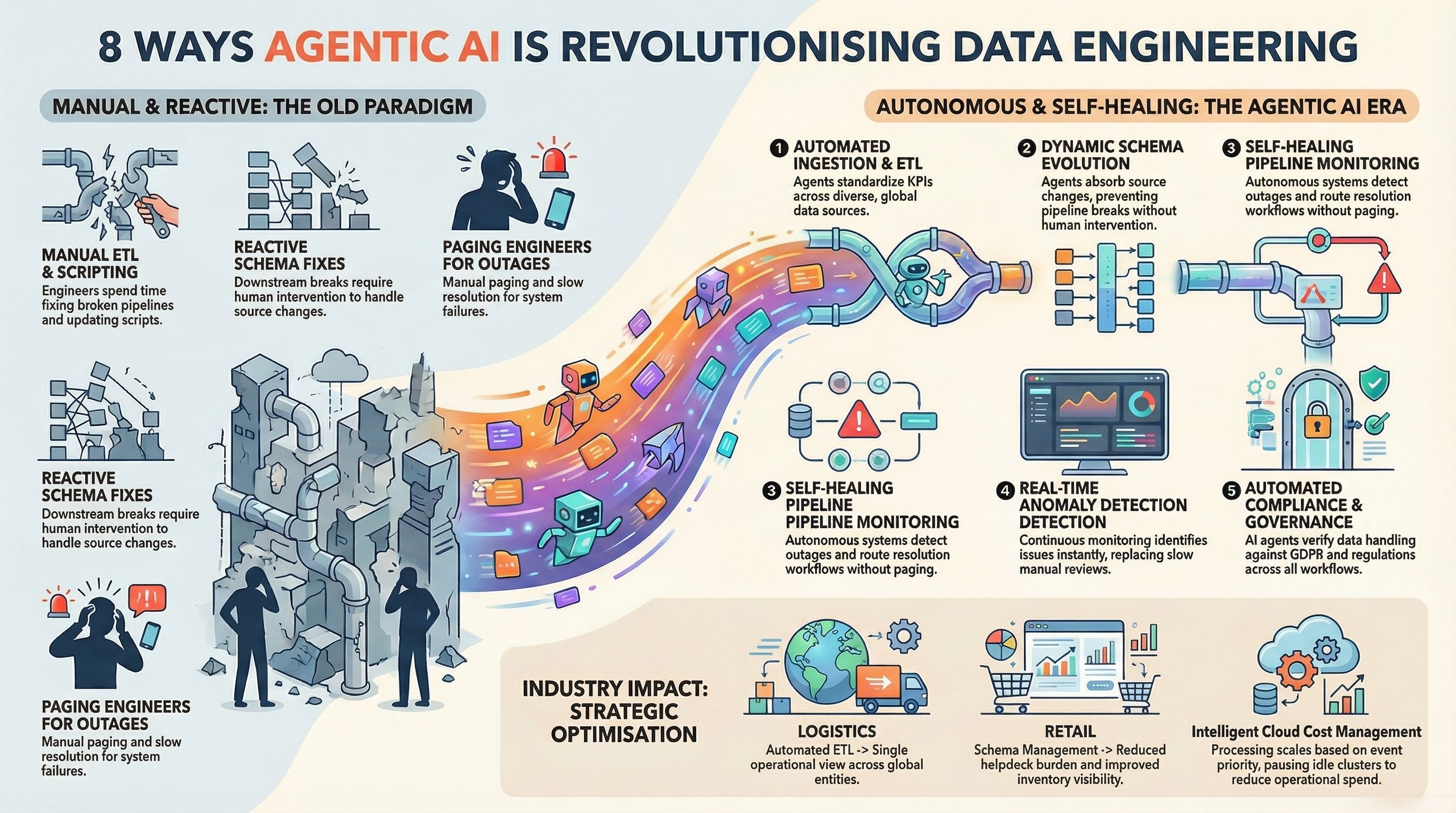

What Is Agentic AI in Data Engineering?

Traditional automation follows rules: if X happens, do Y. Agentic AI adapts. When a data source changes format, a schema drifts, or a new pattern emerges, the agent adjusts without a human rewriting scripts.

Think of it as a team member who never sleeps — watching your pipelines, learning from patterns, and acting when something feels off.

The results teams report:

- Pipelines that fix themselves — agents detect and correct issues without waiting for on-call engineers

- Continuous learning — agents adapt to source changes without manual intervention

- Better uptime — anomalies handled in milliseconds, not hours

- Lower cloud costs — compute resources scaled intelligently

- Cleaner data — missing values, duplicates and outliers caught and corrected automatically

Let's go beyond theory. Here's how agentic AI is actually running in production today.

8 Agentic AI Use Cases in Data Engineering — Backed by Real Case Studies

1. Automated Data Ingestion & ETL

The problem: Multiple data formats, dozens of sources, constant schema updates — managing ETL manually means engineers spend most of their time writing and rewriting ingestion scripts instead of building.

Case Study: A Multinational Logistics & Supply Chain Company

An Indian multinational logistics enterprise serving clients across India, the UK/Europe, and the US operates at a scale where data flows across entities, geographies, and systems that rarely agree on format.

Ampcome built an analytics consolidation layer that standardised KPIs across multi-entity global operations. The solution included cross-entity data ingestion, quality governance, and operational dashboards with variance explanations — all automated. Leadership reporting that once required days of manual stitching now runs continuously, with issues flagged before they reach the executive layer.

Results: Single operational view across entities, faster leadership reporting, improved consistency of operational metrics.

The broader pattern: A retail chain with hundreds of store databases feeding into analytics can have its pipelines adapt automatically — without engineers rewriting scripts for every new store or system. Agentic ETL handles discovery, extraction, transformation, and loading across changing sources.
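One small piece of that pattern, mapping differently named source fields onto a single canonical schema, can be sketched in a few lines. This is an illustrative sketch only, not Ampcome's implementation: the `FIELD_ALIASES` table and the `normalise_record` function are hypothetical names, and a real agent would learn or propose aliases rather than read them from a hard-coded table.

```python
from typing import Any

# Hypothetical alias table: canonical field -> names seen across store systems.
FIELD_ALIASES: dict[str, tuple[str, ...]] = {
    "store_id": ("store_id", "storeid", "shop_id"),
    "sku": ("sku", "item_code", "product_code"),
    "quantity": ("quantity", "qty", "units"),
}


def normalise_record(raw: dict[str, Any]) -> tuple[dict[str, Any], list[str]]:
    """Map a raw source record onto canonical field names.

    Returns the normalised record plus the source fields that were not
    recognised, so they can be reviewed instead of silently dropped.
    """
    lowered = {key.strip().lower(): value for key, value in raw.items()}
    record: dict[str, Any] = {}
    matched: set[str] = set()
    for canonical, aliases in FIELD_ALIASES.items():
        for alias in aliases:
            if alias in lowered:
                record[canonical] = lowered[alias]
                matched.add(alias)
                break  # first matching alias wins
    unknown = sorted(set(lowered) - matched)
    return record, unknown
```

Returning the unrecognised fields alongside the record is the design choice that matters here: a new store system adds work to a review queue rather than breaking the load.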

2. Schema Evolution & Management

The problem: A column gets added to a source table. A pipeline breaks. An engineer gets paged. This shouldn't require a human.

Case Study: A National Value Retail Chain (700+ Stores, India)

A rapidly scaling value retail enterprise operates 700+ stores across India, serving mass-market consumers across apparel, general merchandise and FMCG. At that scale, schema changes are constant — new product attributes, updated POS fields, new promo structures.

Ampcome deployed enterprise AI agents that modernise store support, inventory visibility and knowledge access at national retail scale. The system includes a voice support agent (in Hindi and English), an inventory intelligence agent tracking pricing, stock and promos per store, and a knowledge agent trained on POS and SOP documentation. Schema changes in source systems are absorbed by the agents rather than breaking downstream flows.

Results: Reduced manual helpdesk burden, improved store-level inventory visibility, faster onboarding via on-demand training guidance — all running without engineers intervening on schema updates.

The broader pattern: Agentic AI monitors schema changes and adjusts transformations on the fly. It's like having a guardian watching every source table — quietly resolving issues before anyone notices.
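To make "monitors schema changes" concrete, here is a minimal sketch of drift detection between an expected and an observed schema. The function names and the rule that only additive changes are safe to absorb automatically are assumptions for illustration, not a description of any specific deployment.

```python
def diff_schema(expected: dict[str, str], observed: dict[str, str]) -> dict[str, list[str]]:
    """Compare two {column: type} mappings and report what changed."""
    shared = set(expected) & set(observed)
    return {
        "added": sorted(set(observed) - set(expected)),
        "removed": sorted(set(expected) - set(observed)),
        "retyped": sorted(col for col in shared if expected[col] != observed[col]),
    }


def is_safe_to_absorb(drift: dict[str, list[str]]) -> bool:
    """Assumed policy: new columns can be absorbed; removals and type changes need review."""
    return not drift["removed"] and not drift["retyped"]
```

For example, a POS table that gains a `promo_code` column would pass `is_safe_to_absorb`, while one whose `price` column changes from `float` to `string` would be escalated.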

3. Data Quality & Anomaly Detection

The problem: Bad data ruins reports, dashboards, and decisions. And most quality issues aren't caught until they've already caused damage downstream.

Case Study: A Major Indian HVAC & Consumer Cooling Brand

A major Indian HVAC&R manufacturer competes in highly price-sensitive markets where competitor pricing moves matter daily. Data quality problems such as gaps in competitor pricing feeds, anomalous promo data, and missing availability signals could mean missed strategic responses.

Ampcome built continuous e-commerce and channel monitoring agents that track competitor pricing, MRP/discounts, offers, availability and ratings across portals. These agents flag anomalies and pricing gaps as they appear — not after a weekly manual review. An agentic Q&A layer maps clean, validated data directly to leadership questions.

Results: Faster competitive response cycles, earlier identification of pricing gaps and promo shifts, always-on monitoring replacing manual checks.

The broader pattern: For a hospital processing patient records, this same capability means lab results and appointment data remain consistently accurate — anomalies corrected before they can affect care decisions.
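As a rough illustration of the anomaly-flagging step, the sketch below scores each value against the median of its batch using the median absolute deviation, a common robust alternative to mean and standard deviation. The function name and the default threshold of 3.5 are assumptions. Production agents would typically combine several signals rather than rely on one statistic.

```python
from statistics import median


def flag_outliers(values: list[float], threshold: float = 3.5) -> list[int]:
    """Return the indices of values whose modified z-score exceeds `threshold`."""
    if len(values) < 3:
        return []  # too little data to judge
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        return []  # no spread at all; this score cannot rank anything
    # 0.6745 rescales the MAD so the score is comparable to a standard z-score.
    return [
        i for i, v in enumerate(values)
        if 0.6745 * abs(v - med) / mad > threshold
    ]
```

Applied to a feed of daily competitor prices, this would flag a single listing that suddenly reports several times the usual price while leaving normal day-to-day movement alone.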

4. Pipeline Monitoring & Self-Healing

The problem: When jobs fail, traditional systems send an alert. Someone gets paged. A fix gets deployed hours later. The pipeline sits broken in the meantime.

Case Study: A State Power Transmission Utility (India)

A state power transmission utility is responsible for operating transmission systems that deliver reliable electricity across an entire state. Their data engineering challenge: monitoring a complex grid where outages, losses, and field issues need immediate detection and automated response routing.

Ampcome deployed smart grid analytics with transmission KPI monitoring, anomaly detection, predictive maintenance indicators, loss/outage analytics, and automated alerts with workflow routing for field resolution. When anomalies appear in transmission data, the system doesn't just alert — it routes to the right teams automatically.

Results: Faster identification of grid exceptions, improved reliability through proactive monitoring, better operational transparency for leadership.

The broader pattern: Financial institutions running hundreds of interdependent jobs benefit enormously when a self-healing layer restarts failed jobs, reroutes tasks, or rebalances workloads — reducing downtime from hours to seconds.
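The simplest building block of a self-healing layer is a retry wrapper with exponential backoff. The sketch below is a minimal version under stated assumptions: `run_with_retries` is a hypothetical helper, the job is any zero-argument callable, and `sleep` is injectable so the behaviour can be tested without waiting.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def run_with_retries(
    job: Callable[[], T],
    max_attempts: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run `job`, retrying on any exception with exponentially growing delays."""
    last_error: Optional[Exception] = None
    for attempt in range(1, max_attempts + 1):
        try:
            return job()
        except Exception as exc:  # a real system would retry only known transient errors
            last_error = exc
            if attempt < max_attempts:
                sleep(base_delay * 2 ** (attempt - 1))  # 1s, 2s, 4s, ...
    raise RuntimeError(f"job failed after {max_attempts} attempts") from last_error
```

Retrying is only the first rung. Rerouting tasks or rebalancing workloads needs knowledge of job dependencies, which is where an agent adds value over a fixed wrapper like this one.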

5. Metadata & Catalog Management

The problem: Keeping track of what data exists, where it came from, and how it connects is a full-time job. Most teams are flying blind through their own data lakes.

Case Study: A Privately-Held Retail Holding Group (India)

This privately held retail holding group needs governed, cross-functional intelligence across systems and documents so that leadership can move quickly from insight to action. The challenge isn't a lack of data. It's knowing what data exists, what it means, and how it connects across functions and formats.

Ampcome built a unified context engine covering both structured and unstructured data, with a semantic governance layer managing rules, hierarchies and formulas. An active orchestrator integrates with core systems, and an insights-to-action agent layer sits on top of existing dashboards — turning visibility into governed, auditable decisions.

Results: Shift from reactive reporting to proactive execution loops, standardised decision logic across teams, automated task creation and completion tracking.

The broader pattern: In manufacturing, sensor data from dozens of plants can be cataloged automatically — without engineers manually writing descriptions — letting teams access and trust data faster.
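Automatic cataloging usually starts with profiling: scanning records and recording what each field actually contains. The sketch below is a hypothetical, minimal profiler (`profile_columns` is an assumed name). A real catalog would add lineage, ownership, and generated descriptions on top of it.

```python
from typing import Any


def profile_columns(rows: list[dict[str, Any]]) -> dict[str, dict[str, Any]]:
    """Build a simple catalog entry per column: observed types, row count, null rate."""
    stats: dict[str, dict[str, Any]] = {}
    for row in rows:
        for name, value in row.items():
            entry = stats.setdefault(name, {"types": set(), "nulls": 0, "count": 0})
            entry["count"] += 1
            if value is None:
                entry["nulls"] += 1
            else:
                entry["types"].add(type(value).__name__)
    return {
        name: {
            "types": sorted(entry["types"]),
            "count": entry["count"],
            "null_rate": entry["nulls"] / entry["count"],
        }
        for name, entry in stats.items()
    }
```

A column that reports more than one type, or a null rate that jumps between runs, is exactly the kind of signal a catalog agent can surface before anyone queries the data.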

6. Cost Management in Cloud Workflows

The problem: Processing large data volumes in the cloud gets expensive fast. Resources get over-provisioned, underutilised clusters sit idle, and no one notices until the bill arrives.

Case Study: A Smart Infrastructure Company Operating at City Scale (India)

This smart infrastructure enterprise operates across 25+ smart city operation centres, touching 150M+ urban lives and connecting 2M+ assets and applications. The sheer volume of data flowing through this infrastructure makes cost-inefficient processing a real operational risk.

Ampcome delivered agentic analytics and automated operational alerting on top of these smart utility systems. Smart grid data is ingested continuously, with operational dashboards, predictive analytics for outages and losses, and automated alert and workflow routing for resolution. Processing is optimised based on event priority — not running everything at maximum compute all the time.

Results: Higher operational visibility across grid operations, faster exception detection and response coordination, more proactive operations via continuous monitoring.

The broader pattern: Streaming workloads in logistics and IoT benefit because agentic systems scale resources to demand — pausing underutilised clusters, scaling up during peak ingestion windows, and doing it without human supervision.
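The core of demand-based scaling is a small calculation: how many workers are needed to clear the current backlog within a target time. The sketch below shows that arithmetic. The function name, parameters, and default limits are assumptions for illustration, and the result would still have to be applied through whatever autoscaling API the platform provides.

```python
import math


def target_workers(
    backlog: int,
    per_worker_rate: int,
    drain_seconds: int,
    min_workers: int = 0,
    max_workers: int = 20,
) -> int:
    """Workers needed to drain `backlog` events within `drain_seconds`.

    `per_worker_rate` is events per second per worker. The result is clamped
    to [min_workers, max_workers] so a burst cannot scale costs without bound.
    """
    if backlog <= 0:
        return min_workers  # nothing queued: idle clusters can be paused
    needed = math.ceil(backlog / (per_worker_rate * drain_seconds))
    return max(min_workers, min(max_workers, needed))
```

With a backlog of 30,000 events, 100 events per second per worker, and a 60-second drain target, this asks for 5 workers. With an empty queue it returns the minimum, which is what allows underutilised clusters to be paused.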

7. Governance & Compliance Automation

The problem: Data privacy regulations — GDPR, HIPAA, local frameworks — are complex, constantly updated, and easy to violate when data flows across systems without governance baked in.

Case Study: A UK Private Healthcare & Testing Provider

This UK private healthcare and testing provider runs high-volume consumer workflows and has digital service delivery requirements. In healthcare, data governance isn't just good practice. It's a compliance obligation, with real consequences for failure.

Ampcome built platform automation covering the full workflow: booking through processing through reporting, with operational analytics layered on top. Status monitoring, customer notifications, reporting dashboards, and booking workflow orchestration all run through a governed layer.

Results: More scalable operations with reduced manual overhead, faster customer communications, improved service visibility through unified reporting.

Also relevant: a UK tax technology platform. For cross-border transactions, Ampcome built transaction screening workflows with risk classification, evidence collection with explainability notes, and escalation workflows to tax experts. Early detection of withholding/VAT risk means fewer last-minute deal disruptions and faster pre-compliance review.

The broader pattern: Healthcare organisations can rely on agents to continuously verify that patient data is handled correctly — reducing the risk of costly violations without requiring a compliance team member on every data flow.
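One narrow example of a check an agent might run on every data flow is redacting email addresses from free-text fields before they leave a governed zone. The sketch below is illustrative only: the pattern is a simplification, the `mask_emails` name is hypothetical, and passing a check like this does not by itself make a system GDPR or HIPAA compliant.

```python
import re

# Simplified email pattern; an assumption for illustration, not a full RFC 5322 matcher.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def mask_emails(text: str) -> tuple[str, int]:
    """Replace email addresses with a placeholder and report how many were found."""
    return EMAIL.subn("[REDACTED_EMAIL]", text)
```

Returning the count as well as the masked text gives the governance layer something to log and audit, not just a cleaned string.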

8. Streaming Data Processing

The problem: In industries where milliseconds matter, streaming pipelines need to process, route, and respond to data in real time — without a human adjusting throughput or managing checkpoints.

Case Study: A Global Ports & Logistics Leader

This global ports and logistics enterprise reported record revenue of $20.0B for FY2024 and spans ports, terminals and logistics services worldwide. Managing terminal-to-rail logistics at that scale requires streaming data from multiple systems (yard operations, rail scheduling, exception management), all flowing in real time.

Ampcome delivered a terminal and rail management solution to digitise and optimise port-to-inland logistics operations. The scope included terminal workflow digitisation, yard/rail operational dashboards, rail scheduling and visibility, exception management, and executive dashboards with operational alerts. Data flows continuously across operations, with exceptions surfaced and routed immediately.

Results: Improved operational visibility and exception response, higher predictability of terminal-to-rail throughput, more efficient coordination across terminal and inland logistics.

The broader pattern: A smart factory processing thousands of sensor events per second benefits from the same pattern — streaming agents that manage checkpoints, adjust processing rates, and balance resources so anomalies are caught the moment they occur.
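Catching anomalies "the moment they occur" means scoring each event as it arrives against recent history instead of waiting for a batch. A minimal sketch, assuming a hypothetical `RollingDetector` class and a simple rule (deviation from the rolling mean by more than a set fraction), might look like this:

```python
from collections import deque


class RollingDetector:
    """Flag readings that deviate sharply from the mean of recent readings."""

    def __init__(self, window: int = 50, tolerance: float = 0.5) -> None:
        self.values: deque[float] = deque(maxlen=window)
        self.tolerance = tolerance  # allowed deviation as a fraction of the mean

    def observe(self, value: float) -> bool:
        """Return True if `value` is anomalous relative to the current window."""
        anomalous = False
        if len(self.values) == self.values.maxlen:  # judge only once the window is full
            mean = sum(self.values) / len(self.values)
            anomalous = abs(value - mean) > self.tolerance * abs(mean)
        if not anomalous:
            self.values.append(value)  # keep anomalies out of the baseline
        return anomalous
```

Keeping flagged readings out of the window is a deliberate choice so that one spike does not shift the baseline. The trade-off is that a genuine, lasting shift in level keeps being flagged until something resets the detector.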

Beyond the 8 Use Cases: What Agentic AI Makes Possible at Scale

The 8 use cases above are foundational. But Ampcome's deployments show what becomes possible once the foundations are solid.

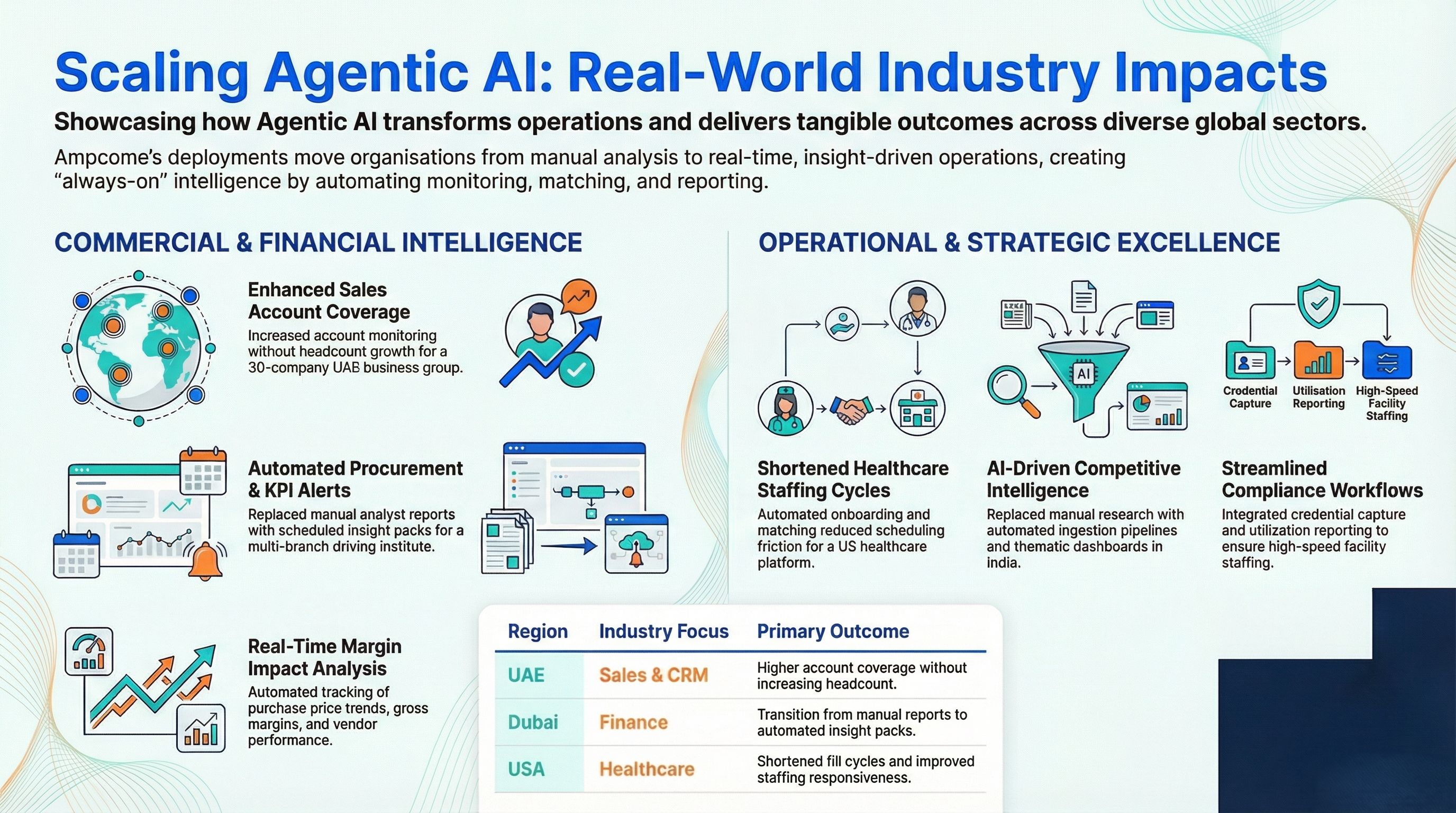

AI-Powered Sales and Account Intelligence

For a prominent UAE family business group comprising 30+ companies, Ampcome deployed always-on account monitoring that captures signals, governs opportunity identification, and integrates with CRM workflows. Outcome: higher account coverage without increasing headcount, faster response cycles on opportunities and renewals.

Agentic Finance and Procurement Monitoring

For a Dubai-based multi-branch driving institute, automated procurement and finance KPI alerts run across group entities, tracking purchase price trends, gross margin impact, early-payment analysis, and vendor performance on delivery and returns. Leadership gets scheduled insight packs instead of waiting for analyst reports.

Healthcare Staffing Operations

For a US healthcare staffing platform, Ampcome built a full AI platform covering talent onboarding, credential capture, facility staffing request intake, matching logic, scheduling, notifications, compliance workflows, and utilisation reporting. Outcome: shorter fill cycles, reduced scheduling friction, and improved staffing responsiveness for facilities.

Competitive Intelligence at Scale

For an Indian market research platform, data ingestion and indicator pipelines, research automation, and alert-driven thematic dashboards replaced manual analysis workflows. Outcome: faster production of insight packs, more repeatable research, and better signal visibility through automated analytics.

The Role of Data Engineers in the Age of Agentic AI

Agentic AI doesn't replace data engineers — it elevates them.

As agents take over routine monitoring, schema handling, quality checks, and compliance workflows, engineers shift from firefighting to architecture. Their expertise moves toward designing robust infrastructure, optimising data flows, and building systems that anticipate future needs.

The teams that thrive are the ones learning to collaborate with agentic AI — letting it handle repetitive work while applying human judgment to complex challenges that require context, creativity, and strategic thinking.

Challenges to Keep in Mind

The black box problem. When an agent reroutes data, rejects records, or adjusts thresholds, auditors need to understand why. Transparency tooling is still catching up with agent capability.

Security gray areas. Agents acting autonomously can be exploited if guardrails aren't strong. Most production deployments maintain human-in-the-loop approval for high-stakes actions.
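A human-in-the-loop guardrail can be as simple as a gate in front of the agent's actions. In the sketch below, the `HIGH_STAKES` set, the `Action` type, and the `execute` function are all hypothetical. In practice the `approve` callback would open a ticket or page a reviewer rather than return immediately.

```python
from dataclasses import dataclass
from typing import Callable

# Assumed list of actions that must never run without a human sign-off.
HIGH_STAKES = {"drop_table", "delete_records", "change_permissions"}


@dataclass(frozen=True)
class Action:
    name: str
    target: str


def execute(
    action: Action,
    run: Callable[[Action], str],
    approve: Callable[[Action], bool],
) -> str:
    """Run low-risk actions directly; require approval for high-stakes ones."""
    if action.name in HIGH_STAKES and not approve(action):
        return f"blocked: {action.name} on {action.target} needs approval"
    return run(action)
```

Restarting a failed job goes straight through, while dropping a table waits for a person to say yes.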

Setup requires real planning. Deploying an agent isn't like installing software. Data flow permissions, response behaviour testing, and control limits all need careful design upfront.

Humans remain the final line. Agents can process a million signals per hour, but they can't read business context the way people can. What counts as "normal," "urgent," or "acceptable risk" is still a human call.

What's Next: 2025 to 2030

- Multi-agent coordination — networks of agents managing complex, interdependent data architectures

- Reduced human intervention — pipeline maintenance approaching fully automated operations

- Predictive correction — agents resolving issues before they surface in monitoring dashboards

The shift is from AI assisting data operations to AI orchestrating them — with humans focused on strategy, innovation, and the decisions that genuinely require judgment.

Conclusion

Agentic AI has moved well past the experimental stage. Across national retailers, global port operators, state power utilities, healthcare providers, and fintech platforms, these systems are running in production — handling the tedious, the repetitive, and the mission-critical.

The case studies above aren't hypotheticals. They're deployments Ampcome has built and delivered, across industries where data reliability isn't optional.

If your team is still spending engineering cycles on pipeline firefighting, manual quality checks, or reactive monitoring, the question isn't whether agentic AI can help. It's how quickly you can get it running.

Book a 15-minute discovery call with Ampcome →

FAQs

1. What are the main agentic AI use cases in data engineering?

Automated ETL, self-healing pipelines, anomaly detection, schema management, metadata cataloging, cloud cost optimisation, compliance automation, and streaming data processing.

2. How is agentic AI different from traditional automation?

Rule-based automation follows fixed instructions. Agentic AI learns from patterns, adapts to change, and handles unexpected situations without explicit human instructions.

3. Can agentic AI replace data engineers?

No. Agents handle the repetitive and reactive. Engineers handle architecture, governance, strategic design, and complex decisions that require business context.

4. Which industries see the most immediate value?

Finance, healthcare, logistics, retail, and utilities — where data volumes are high, changes are frequent, and the cost of errors is significant.

5. What does a typical engagement with Ampcome look like?

Most engagements begin with a scoped proof-of-concept targeting one high-value use case, with a clear path to production and expansion across additional workflows.

