Enterprise AI Agent Platform Comparison 2026: How to Evaluate, Compare & Choose the Right Platform (With Real-World Deployment Data)

Choosing an enterprise AI agent platform in 2026 is one of the most consequential technology decisions a business can make — and one of the most confusing. The market is flooded with vendors promising autonomous workflows, intelligent orchestration, and transformative ROI. Most of them sound identical.

This guide cuts through the noise. It is built on a single competitive advantage that no analyst report can replicate: real deployment data from more than 30 enterprise AI agent implementations across retail, logistics, financial services, healthcare, manufacturing, government, and hospitality.

If you are an enterprise technology leader, CTO, or digital transformation head evaluating AI agent platforms right now, this is the most operationally grounded comparison available.

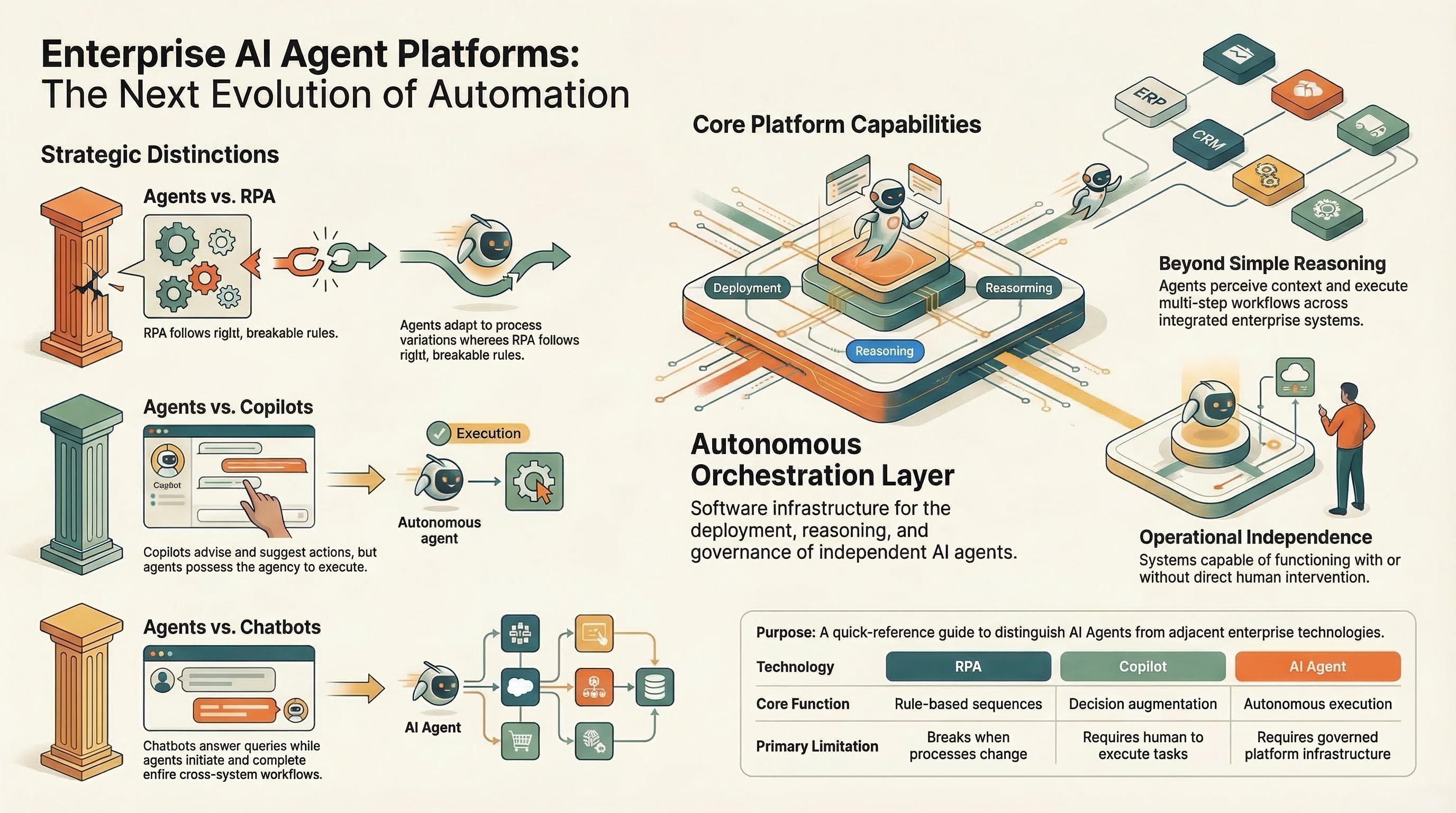

What Is an Enterprise AI Agent Platform? (Definition + How It Differs From RPA, Chatbots, and Copilots)

An enterprise AI agent platform is a software infrastructure layer that enables the deployment, orchestration, and governance of autonomous AI agents — systems capable of perceiving context, reasoning over multi-step workflows, taking action across integrated enterprise systems, and operating with or without human intervention.

That definition matters because the enterprise market is currently drowning in mislabelled products. Here is how a genuine enterprise AI agent platform differs from adjacent technologies:

AI agents vs. RPA (Robotic Process Automation): RPA executes predefined, rule-based sequences. It breaks when processes change. AI agents reason through process variation, handle exceptions autonomously, and adapt without reprogramming. The correct framing is not replacement — it is evolution. Enterprise AI agent platforms can sit on top of existing RPA infrastructure and extend it with contextual reasoning.

AI agents vs. chatbots: Chatbots respond to queries within a defined conversational scope. AI agents initiate, execute, and complete multi-step workflows. A chatbot can answer "what is my invoice status?" An AI agent can retrieve the invoice, identify the discrepancy, route it for approval, and update the ERP — without a human triggering each step.

AI agents vs. copilots: Copilots augment human decision-making by surfacing suggestions within a user interface. AI agents act independently on those decisions, executing downstream tasks across systems. The distinction is agency: copilots advise, agents execute.

AI agents vs. traditional AI/ML pipelines: ML pipelines produce outputs — predictions, classifications, scores. AI agents consume those outputs and take action based on them, embedded within governed, auditable workflows.

Understanding this distinction is the foundation of a sound platform evaluation. The wrong tool category will fail regardless of how well it is implemented.

The Enterprise AI Agent Platform Evaluation Framework (7 Criteria)

Ampcome's evaluation framework for enterprise AI agent platforms is derived from lessons learned across 30+ deployments. These are not theoretical criteria. They are the dimensions that separated implementations that delivered measurable ROI from those that stalled in proof-of-concept.

1. Orchestration and Multi-Agent Coordination

A single AI agent can automate a task. A coordinated network of agents can automate an entire business function.

Evaluate whether the platform supports:

- Multi-agent orchestration: Can multiple specialised agents hand off tasks to each other with maintained context?

- Parallel execution: Can agents operate simultaneously across sub-tasks without creating data conflicts?

- Role-based agent design: Can you define distinct agent roles (intake agent, validation agent, approval agent, escalation agent) with governed boundaries?

- Fallback and retry logic: Does the orchestration layer handle agent failures gracefully, with human escalation paths?

The weakest implementations we have observed treat orchestration as an afterthought — stringing together single agents via APIs rather than designing a coordinated system from the start. This creates brittle architectures that fail under production load.
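To make the orchestration criteria concrete, here is a minimal Python sketch of sequential multi-agent handoff with shared context, per-agent retry, and a human escalation path. It assumes each agent is a plain function that takes and returns a shared context object. The names `Context`, `run_pipeline`, and `EscalateToHuman` are hypothetical and not taken from any vendor platform, and the sketch does not cover parallel execution.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Context:
    """Shared state handed from agent to agent so context survives each handoff."""
    data: dict
    history: List[str] = field(default_factory=list)


# Hypothetical agent shape: a function that reads and updates the shared context.
Agent = Callable[[Context], Context]


class EscalateToHuman(Exception):
    """Raised when an agent keeps failing and a person has to take over."""


def run_pipeline(agents: List[Tuple[str, Agent]], ctx: Context, max_retries: int = 2) -> Context:
    """Run role-based agents in order, retrying each one before escalating."""
    for role, agent in agents:
        for attempt in range(1, max_retries + 1):
            try:
                ctx = agent(ctx)
                ctx.history.append(f"{role}:ok")
                break  # this role succeeded; move to the next one
            except ValueError as err:
                ctx.history.append(f"{role}:retry{attempt}:{err}")
        else:
            # The retry loop finished without a break: every attempt failed.
            raise EscalateToHuman(f"{role} failed after {max_retries} attempts")
    return ctx


# Two illustrative agents for an invoice workflow.
def intake_agent(ctx: Context) -> Context:
    ctx.data["invoice_id"] = "INV-1"
    return ctx


def validation_agent(ctx: Context) -> Context:
    if ctx.data.get("amount", 0) <= 0:
        raise ValueError("missing amount")
    return ctx


if __name__ == "__main__":
    result = run_pipeline([("intake", intake_agent), ("validation", validation_agent)], Context({"amount": 120}))
    print(result.history)  # ['intake:ok', 'validation:ok']
```

Because the same `Context` object travels through every role, the validation agent sees what the intake agent produced, which is the maintained context the first bullet asks about. If validation fails on every attempt, the chain stops and raises an escalation instead of passing bad data downstream.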

2. Context Completeness and Memory Architecture

This is the most underrated evaluation criterion and the one that separates platforms that work in demos from platforms that work in production.

Context completeness refers to the platform's ability to give agents access to the full information environment they need to reason accurately. This includes:

- Structured data (databases, ERP records, CRM data)

- Unstructured data (documents, emails, PDFs, SOPs, contracts)

- Conversational history and session memory

- Real-time operational signals (inventory feeds, pricing data, transaction streams)

A platform that gives agents access to only structured data will produce agents that miss critical context. A platform with a robust context architecture — combining a semantic layer over structured data with retrieval-augmented generation (RAG) over unstructured content — produces agents that reason like an experienced domain expert.
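The sketch below shows, in simplified form, how an agent's context might be assembled from structured records, retrieved documents, session history, and live signals before reasoning begins. Production RAG systems rank documents by embedding similarity; this example swaps in plain word overlap so it runs with the standard library alone. All names (`retrieve`, `build_context`) and sample data are hypothetical.

```python
import re
from typing import Dict, List


def tokenize(text: str) -> set:
    """Lower-case word set used for a crude relevance score."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: Dict[str, str], k: int = 2) -> List[str]:
    """Return up to k document ids that share at least one word with the query.

    Stand-in for embedding search: documents are ranked by word overlap.
    """
    q = tokenize(query)
    ranked = sorted(documents, key=lambda doc_id: len(q & tokenize(documents[doc_id])), reverse=True)
    return [doc_id for doc_id in ranked[:k] if q & tokenize(documents[doc_id])]


def build_context(query: str, record: dict, documents: Dict[str, str],
                  session_history: List[str], signals: dict) -> dict:
    """Combine the four context sources into one payload for the agent."""
    return {
        "structured": record,  # e.g. an ERP or CRM row
        "documents": [documents[d] for d in retrieve(query, documents)],  # SOPs, contracts
        "history": session_history[-5:],  # most recent turns only
        "signals": signals,  # e.g. a live stock level
    }


if __name__ == "__main__":
    docs = {
        "sop-returns": "Returns over 500 need manager approval",
        "sop-pricing": "Promotional pricing rules",
    }
    ctx = build_context("approval for returns", {"order_id": 42, "amount": 650}, docs, [], {"stock": 3})
    print(ctx["documents"])  # ['Returns over 500 need manager approval']
```

The point of the structure is that the agent never reasons over the ERP row alone: the returns SOP travels with it, so a rule like "over 500 needs approval" is in view when the agent decides what to do with a 650 order.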

Ask vendors directly: how does your platform handle context that spans multiple data types, multiple systems, and sessions that span days or weeks?

3. Governance, Auditability, and Compliance

Enterprise AI agents execute consequential actions: creating purchase orders, routing customer disputes, generating regulatory filings, triggering logistics workflows. Every one of those actions needs to be auditable.

Evaluate:

- Audit trail depth: Does every agent action generate a timestamped, attributable log?

- Approval gates: Can you insert mandatory human review at configurable workflow stages?

- Permission architecture: Can agent access rights be scoped to specific systems, data classes, and action types?

- Explainability: Can the platform produce a human-readable explanation for any agent decision?

- Compliance readiness: Does the platform support SOC 2, GDPR, HIPAA, or sector-specific frameworks relevant to your industry?

In regulated industries such as financial services, healthcare, government, and utilities, governance is not a feature. It is a prerequisite. Platforms that treat it as optional will not survive your security review.
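As a hedged illustration of two of these controls, the following sketch writes a timestamped, attributable audit entry for every attempted action and blocks configured actions until an approver callback accepts them. The in-memory `AUDIT_LOG` list and the `execute_with_gate` function are hypothetical. A production platform would persist the log to tamper-evident storage and perform the real system call where the comment indicates.

```python
from datetime import datetime, timezone
from typing import Callable, List, Optional, Set

AUDIT_LOG: List[dict] = []  # in-memory stand-in for durable audit storage

# Hypothetical approver signature: (agent_id, action, payload) -> approved?
Approver = Callable[[str, str, dict], bool]


def audit(agent_id: str, action: str, payload: dict, outcome: str) -> dict:
    """Append a timestamped, attributable record of one agent action."""
    entry = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "agent": agent_id,
        "action": action,
        "payload": payload,
        "outcome": outcome,
    }
    AUDIT_LOG.append(entry)
    return entry


def execute_with_gate(agent_id: str, action: str, payload: dict,
                      gated_actions: Set[str], approver: Optional[Approver] = None) -> bool:
    """Run an action only if it is ungated or a human approver has accepted it."""
    if action in gated_actions and (approver is None or not approver(agent_id, action, payload)):
        audit(agent_id, action, payload, "blocked_pending_approval")
        return False
    # The real write to the target system would happen here.
    audit(agent_id, action, payload, "executed")
    return True
```

Blocked attempts are logged too, not only successful ones, so an auditor can see what an agent tried to do as well as what it did.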


4. Integration Depth

Enterprise AI agents are only as useful as the systems they can act on. An agent that can reason brilliantly but cannot write back to your ERP, CRM, or core operational platform delivers no operational value.

Evaluate:

- Pre-built connectors: SAP, Salesforce, ServiceNow, Oracle, Microsoft Dynamics, and your specific vertical systems

- API flexibility: Can the platform integrate with custom or proprietary internal APIs?

- Bidirectional integration: Can agents both read from and write to target systems, or are they read-only?

- Legacy system support: How does the platform handle systems without modern APIs — screen scraping, RPA bridges, or structured data extraction?

One of the most common implementation failures we observe is underestimating integration complexity. Assess this before you select a vendor, not after.
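One way to make the read-only versus bidirectional question testable is to describe each connector by the operations it exposes. The sketch below uses Python's `typing.Protocol` with `runtime_checkable`. `InMemoryERP`, `ReportingFeed`, and `integration_depth` are hypothetical stand-ins, not real connector APIs.

```python
from typing import Dict, Protocol, runtime_checkable


@runtime_checkable
class Readable(Protocol):
    def read(self, entity: str, key: str) -> dict: ...


@runtime_checkable
class Writable(Protocol):
    def write(self, entity: str, key: str, values: dict) -> None: ...


class InMemoryERP:
    """Toy system that supports both reading and writing records."""

    def __init__(self) -> None:
        self.tables: Dict[str, Dict[str, dict]] = {}

    def read(self, entity: str, key: str) -> dict:
        return dict(self.tables.get(entity, {}).get(key, {}))

    def write(self, entity: str, key: str, values: dict) -> None:
        self.tables.setdefault(entity, {}).setdefault(key, {}).update(values)


class ReportingFeed:
    """Toy system that only exposes reads."""

    def read(self, entity: str, key: str) -> dict:
        return {}


def integration_depth(system: object) -> str:
    """Classify a connector by the operations it exposes."""
    can_read = isinstance(system, Readable)
    can_write = isinstance(system, Writable)
    if can_read and can_write:
        return "bidirectional"
    if can_read:
        return "read-only"
    if can_write:
        return "write-only"
    return "unsupported"
```

Note that a `runtime_checkable` check only confirms that the methods exist, not that their signatures match or that writes succeed against the live system. It is a first filter for a connector inventory, not a substitute for an integration test.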

5. Time-to-Production and Deployment Model

Vendor demos always look fast. Real enterprise deployments involve data mapping, integration work, governance configuration, user acceptance testing, and change management. The gap between demo and production is where most AI agent projects die.

Evaluate:

- Reference deployment timelines: Ask for documented case studies with time-from-kickoff-to-production data

- Deployment methodology: Does the vendor follow a structured implementation approach, or is it ad hoc?

- Proof-of-concept scope: Can you prove value on a single, bounded workflow before committing to platform-wide deployment?

- Internal resource requirements: What does your team need to contribute? Excessive vendor dependency creates long-term risk

A platform that takes 18 months to deploy is not a competitive advantage regardless of its capabilities. Validate timeline claims with reference customers.

6. Human-in-the-Loop Design

The enterprise AI agent market has a maturity problem: many platforms are built for a world where AI judgment is trusted unconditionally. That world does not yet exist in enterprise operations.

The best platforms are designed for progressive autonomy — starting with high human oversight and allowing enterprises to increase agent autonomy as trust is established through evidence.

Evaluate:

- Configurable oversight levels: Can you set per-workflow thresholds that trigger human review?

- Exception handling: How does the platform surface ambiguous or low-confidence decisions for human judgment?

- Override mechanisms: Can human operators intervene in, modify, or reverse agent actions in real time?

- Escalation workflows: Does the platform integrate with ticketing systems (ServiceNow, Jira) for human escalation?

Platforms that make human-in-the-loop feel like a limitation rather than a design feature are not ready for enterprise deployment.
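Configurable oversight and progressive autonomy can be sketched as a per-workflow policy with two confidence thresholds, plus a rule that loosens the auto-execute threshold only after reviewed decisions prove accurate. This assumes the platform exposes a per-decision confidence score. The thresholds, step size, floor, and target accuracy are illustrative assumptions rather than recommended values, and `OversightPolicy`, `route`, and `relax` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OversightPolicy:
    auto_threshold: float    # at or above this confidence, the agent acts alone
    review_threshold: float  # between the two thresholds, a human reviews first


def route(confidence: float, policy: OversightPolicy) -> str:
    """Decide who handles a decision, given the agent's confidence score."""
    if confidence >= policy.auto_threshold:
        return "auto_execute"
    if confidence >= policy.review_threshold:
        return "human_review"
    return "escalate"  # low confidence goes straight to the escalation queue


def relax(policy: OversightPolicy, observed_accuracy: float,
          target: float = 0.98, step: float = 0.02, floor: float = 0.80) -> OversightPolicy:
    """Progressive autonomy: lower the auto threshold only when evidence supports it."""
    if observed_accuracy < target:
        return policy  # not enough evidence yet; keep current oversight
    new_auto = round(max(floor, policy.auto_threshold - step), 4)
    return OversightPolicy(new_auto, policy.review_threshold)
```

Starting a new workflow at a high `auto_threshold` means almost everything is reviewed at first; each call to `relax` with strong observed accuracy moves a little more work to autonomous execution, and the `floor` keeps autonomy from drifting past an agreed limit.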

7. ROI Measurement and Evidence

You cannot manage what you cannot measure. Enterprise AI agent platforms should make ROI measurement straightforward, not opaque.

Evaluate:

- Built-in analytics: Does the platform track agent throughput, accuracy rates, escalation rates, and time-saved metrics out of the box?

- Baseline comparison: Can the platform compare agent performance against pre-automation baselines?

- Industry benchmarks: Does the vendor provide benchmarked performance data from comparable deployments?

- Business metric linkage: Can agent performance be connected to business KPIs (revenue, cost, customer satisfaction)?

If a vendor cannot show you how ROI will be measured before you sign, that is a significant red flag.
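The built-in metrics listed above reduce to simple ratios once the platform exports per-case counts. The sketch below assumes a hypothetical `RunStats` export and a pre-automation baseline expressed as average handling minutes per case; it computes accuracy, escalation rate, and hours saved.

```python
from dataclasses import dataclass


@dataclass
class RunStats:
    cases: int               # cases the agent handled in the period
    correct: int             # cases later judged correct on review
    escalated: int           # cases handed to a human
    minutes_per_case: float  # average handling time, including human time on escalations


def compare_to_baseline(stats: RunStats, baseline_minutes_per_case: float) -> dict:
    """Express agent performance against the pre-automation baseline."""
    if stats.cases == 0:
        raise ValueError("no cases recorded")
    minutes_saved = stats.cases * (baseline_minutes_per_case - stats.minutes_per_case)
    return {
        "accuracy": stats.correct / stats.cases,
        "escalation_rate": stats.escalated / stats.cases,
        "hours_saved": minutes_saved / 60,
    }
```

Folding human time on escalated cases into `minutes_per_case` keeps the hours-saved figure honest. Linking it to a business KPI, for example by multiplying hours saved by a loaded hourly cost, is a separate step that depends on your own finance data.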

Platform Comparison Table: How Leading Enterprise AI Agent Platforms Stack Up

The enterprise AI agent platform market currently divides into three broad categories of vendor:

Horizontal AI agent platforms provide general-purpose orchestration and deployment infrastructure. They are flexible but typically require significant configuration effort to serve specific enterprise use cases. Suitable for enterprises with strong internal AI/ML teams.

Vertical AI agent suites are pre-packaged for specific business functions — customer service, IT automation, HR workflows. They deploy faster within their designed scope but hit hard limits when enterprise workflows cross functional boundaries.

Context-complete agentic AI platforms combine orchestration infrastructure with a semantic data layer, pre-built integration architecture, and governed deployment methodology. They are designed specifically for the complexity of real enterprise environments where agents must reason across structured and unstructured data simultaneously.

The right choice for your enterprise depends on the complexity of your workflows, the diversity of your data environment, and the maturity of your internal AI operations team.

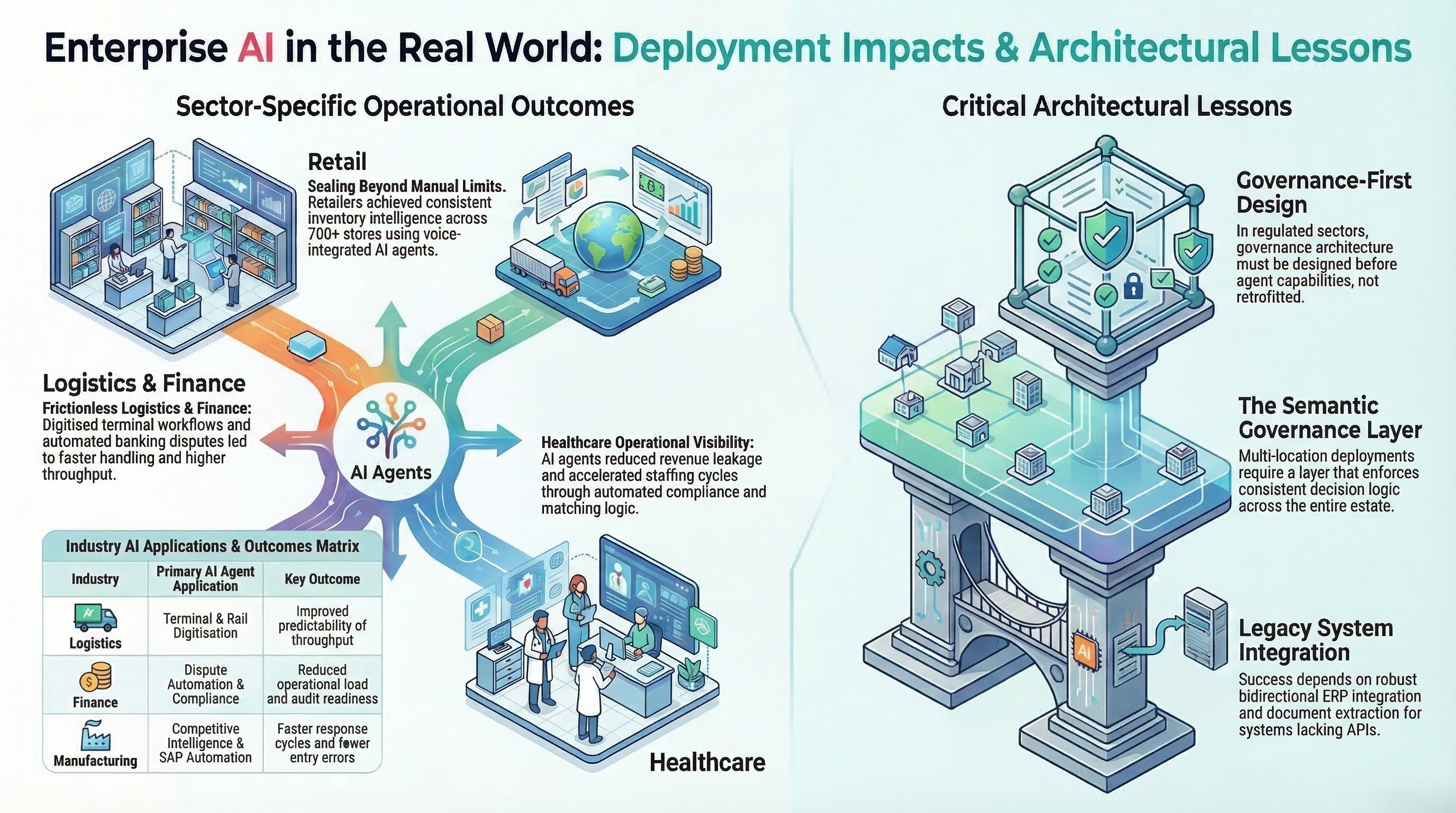

Real-World Deployment Data: What 30+ Enterprise Deployments Actually Showed

The following data is drawn from live production deployments — not pilots, not demos, not simulated environments. Industry labels replace specific client identities.

Retail: AI Agents Across Large-Scale Multi-Store Operations

A large-format retail chain with more than 700 stores across hundreds of cities deployed a three-agent system covering store support, inventory intelligence, and staff training — all delivered via voice in both Hindi and English.

Deployment scope included a voice support agent using speech-to-text, LLM reasoning, and text-to-speech with real-time integration into POS systems and SOPs; an inventory intelligence agent with per-store visibility into pricing, stock levels, and promotional offers; and a knowledge and training agent built on RAG over operational documents.

Outcomes: Measurable reduction in manual helpdesk burden, improved store-level inventory visibility, and faster onboarding via on-demand training guidance — at a scale that manual operations could not support.

The critical architecture insight: agents operating at 700+ locations require a semantic governance layer that enforces consistent definitions and decision logic across the entire estate. Without it, agents produce inconsistent outputs that erode trust.
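In principle, a semantic governance layer can be as simple as one shared registry of business definitions that every agent must use instead of improvising its own. The sketch below is a hypothetical illustration with two inventory terms; the term names, fields, and rules are assumptions, not the deployed system's definitions.

```python
from typing import Callable, Dict, List

# One governed definition per business term, shared by every store's agent.
SEMANTIC_LAYER: Dict[str, Callable[[dict], bool]] = {
    "out_of_stock": lambda row: row["on_hand"] <= 0,
    "low_stock": lambda row: 0 < row["on_hand"] < row["reorder_point"],
}


def evaluate(term: str, row: dict) -> bool:
    """Apply a governed definition; refuse terms nobody has defined."""
    if term not in SEMANTIC_LAYER:
        raise KeyError(f"'{term}' has no governed definition")
    return SEMANTIC_LAYER[term](row)


def stores_matching(term: str, rows: List[dict]) -> List[str]:
    """List the stores that meet a governed definition."""
    return [row["store"] for row in rows if evaluate(term, row)]
```

Because "low stock" is defined once, an agent answering a question for store S002 and another summarising the whole estate cannot disagree about what the term means, and an undefined term fails loudly instead of being guessed.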

Logistics: Port and Inland Terminal Digitisation

A global port and logistics operator deployed an agentic system to digitise terminal and rail management — replacing manual coordination across port-to-inland logistics operations.

Scope included terminal workflow digitisation, yard and rail operational dashboards, rail scheduling and visibility, exception management, and executive dashboards with operational alerts.

Outcomes: Higher predictability of terminal-to-rail throughput, more efficient coordination across terminal and inland logistics, and improved operational visibility for leadership — with measurable reduction in coordination friction.

The key deployment lesson: logistics AI agents require deep integration with operational systems that frequently lack modern APIs. Platforms without robust legacy integration capabilities — including document extraction, structured data parsing, and bidirectional ERP integration — cannot serve this use case.

Financial Services: Dispute Automation and Compliance Workflow

A financial technology provider serving banks and credit unions deployed omnichannel AI agents for banking support, covering dispute intake, workflow routing, agent-assist summarisation, next-best-action recommendations, and SLA monitoring.

The platform integrated across chat, email, and phone channels with full auditability and reporting built in.

Outcomes: Faster case handling, reduced operational load via automation, and improved compliance readiness through audit trail generation — critical in a sector where every customer interaction is a potential regulatory event.

This deployment underscored a pattern we observe consistently: in regulated industries, the governance architecture must be designed first and the agent capabilities built within it — not retrofitted after the fact.

Healthcare: Staffing, Inpatient Operations, and Geriatric Care Analytics

Three healthcare deployments across staffing, inpatient hospitalist programs, and geriatric care services demonstrated the range of AI agent applications in a highly regulated, high-stakes environment.

A healthcare staffing platform deployed agents for talent onboarding, credential capture, facility staffing request intake, matching logic, scheduling, compliance workflows, and utilisation reporting. Outcomes included faster fill cycles, lower scheduling friction, and better workforce utilisation.

A physician-led inpatient clinical enterprise deployed agents for revenue management and operational performance analytics, producing dashboards with variance explanations and action lists for billing and operational optimisation. Outcomes included improved visibility into revenue leakage drivers and faster operational decision-making.

A geriatric care services provider deployed agents for program operations analytics and revenue cycle visibility, with exception alerts for leadership. Outcomes included faster identification of operational bottlenecks and improved transparency into service performance.

The common thread across all three: healthcare AI agents must produce explainable outputs that clinical and administrative leaders can trust and act on. Black-box AI has no place in healthcare operations.

Manufacturing: Competitive Intelligence and Procurement Automation

A major HVAC manufacturer operating in a highly competitive, price-sensitive market deployed AI agents for continuous competitive monitoring, tracking competitor pricing, maximum retail price (MRP), discounts, availability, and ratings across e-commerce and channel portals.

Scope included always-on monitoring replacing manual checks, agentic Q&A mapped to leadership questions, and analytics views for pricing gaps, threats, and portfolio movement.

Outcomes: Faster competitive response cycles, earlier identification of pricing gaps and promotional shifts, and always-on monitoring replacing labour-intensive manual processes.

A separate manufacturing deployment in the industrial sector automated SAP sales order creation via agentic AI, replacing a high-cost, end-of-life document management platform. Scope included automated interpretation of order triggers, validation logic, SAP integration, exception and approval workflows, and full audit logs.

Outcomes: Reduced manual order processing, a faster order-to-confirm cycle with fewer data-entry errors, and measurably improved auditability for sales order creation and exceptions.

Build vs Buy: When to Build a Custom AI Agent Platform vs Use a Pre-Built One

This is the decision most enterprise technology teams get wrong — not because they lack analytical capability, but because they underestimate the hidden costs on both sides.

Build your own when:

- Your workflows are genuinely unique and no vendor addresses your use case

- You have a mature internal AI/ML engineering team with orchestration experience

- You operate in a regulated environment where vendor data handling cannot be accommodated

- You are willing to commit 12–24 months of engineering time before seeing production results

Buy a pre-built platform when:

- Your use cases map to recognisable patterns (customer support automation, procurement intelligence, sales agent, compliance workflows)

- Time-to-value matters — you need production agents in months, not years

- Your team does not have deep AI agent orchestration expertise in-house

- You need governance and compliance features that would take significant engineering effort to build

The hybrid reality: Most enterprises that "build" end up building on top of foundation models and orchestration frameworks (LangChain, CrewAI, AutoGen) anyway. The real question is whether you need a vendor who has already solved the enterprise integration, governance, and deployment methodology challenges — or whether you are prepared to solve them yourself.

The honest answer for most enterprises: the opportunity cost of building is higher than the cost of buying, because building takes engineering time away from the workflows that actually create competitive advantage.

AI Agent Platform Governance: What Enterprise Security Teams Need to Know

Security and governance are where enterprise AI agent deployments most frequently stall in procurement. Here is what security teams should evaluate:

Data handling and residency: Where does agent reasoning occur? Where is context data stored? Does the platform support on-premise or private cloud deployment for sensitive data environments?

Access control architecture: Agents should operate under the principle of least privilege — accessing only the systems and data scopes required for their specific function. Evaluate whether the platform supports role-based access control at the agent level, not just the user level.
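Agent-level, deny-by-default access control might look like the sketch below: each agent identity carries an explicit set of (system, action, data class) grants, and anything not granted is refused. The agent names and grants are hypothetical examples.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet


@dataclass(frozen=True)
class Grant:
    system: str      # e.g. "erp"
    action: str      # e.g. "read", "update"
    data_class: str  # e.g. "invoices"


# Each agent gets only the grants its function needs (least privilege).
AGENT_GRANTS: Dict[str, FrozenSet[Grant]] = {
    "invoice-agent": frozenset({Grant("erp", "read", "invoices"), Grant("erp", "update", "invoices")}),
    "support-agent": frozenset({Grant("crm", "read", "tickets")}),
}


def authorize(agent_id: str, system: str, action: str, data_class: str) -> bool:
    """Deny by default: unknown agents and ungranted combinations are refused."""
    return Grant(system, action, data_class) in AGENT_GRANTS.get(agent_id, frozenset())
```

The grants attach to the agent identity rather than to the human user who triggered it, which is the distinction the paragraph above draws between agent-level and user-level access control.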

Action reversibility: Can agent-initiated actions — record creation, document generation, transaction routing — be reversed if the agent produces an incorrect output? What is the rollback mechanism?
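A common rollback design is to record a compensating action alongside every agent action and replay those compensations in reverse order when a run must be undone, similar to the saga pattern used for distributed transactions. The hypothetical `ActionJournal` below sketches that idea against an in-memory record store. Some real-world effects, such as a sent email or a settled payment, can only be offset by a new corrective action, not erased.

```python
from typing import Callable, List, Tuple


class ActionJournal:
    """Records an undo step for every agent action so a run can be rolled back."""

    def __init__(self) -> None:
        self._undo: List[Tuple[str, Callable[[], object]]] = []

    def do(self, description: str, action: Callable[[], object], undo: Callable[[], object]) -> None:
        """Perform an action and remember how to compensate for it."""
        action()
        self._undo.append((description, undo))

    def rollback(self) -> List[str]:
        """Undo recorded actions newest-first; return what was reverted."""
        reverted = []
        while self._undo:
            description, undo = self._undo.pop()
            undo()
            reverted.append(description)
        return reverted


if __name__ == "__main__":
    records = {}
    journal = ActionJournal()
    journal.do("create PO-1",
               lambda: records.__setitem__("PO-1", {"status": "draft"}),
               lambda: records.pop("PO-1"))
    journal.do("submit PO-1",
               lambda: records["PO-1"].__setitem__("status", "submitted"),
               lambda: records["PO-1"].__setitem__("status", "draft"))
    print(journal.rollback())  # ['submit PO-1', 'create PO-1']
    print(records)             # {}
```

Reverting newest-first matters: the purchase order has to be returned to draft before the record that holds it is deleted.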

Model governance: Does the platform allow you to specify which foundation models are used for which agent functions? Can you restrict agent reasoning to internally approved models?

Hallucination prevention: What mechanisms does the platform use to prevent agents from generating fabricated outputs, particularly in document generation or customer-facing communication workflows?

Incident response: If an agent produces a harmful output or executes an unintended action, what is the platform's incident response capability? How quickly can agents be suspended, and what forensic data is available?

Security teams that engage with these questions upfront — before procurement — save their organisations from costly post-deployment remediation.

How to Calculate ROI Before You Choose a Platform

ROI for enterprise AI agent platforms comes from two sources: cost reduction (automating tasks that previously required human effort) and revenue acceleration (enabling humans to spend more time on high-value activities).

Step 1: Map your highest-friction workflows. Identify the three to five workflows in your organisation where manual effort is highest, error rates are significant, or cycle times are a competitive disadvantage. These are your target deployments.

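Step 2: Baseline current cost. For each workflow, calculate: number of FTEs involved × average hourly cost per FTE × hours per week each FTE spends on the workflow. This is your weekly cost baseline; multiply it by 52 and divide by 12 to get the monthly figure used in Step 4.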

Step 3: Estimate automation rate. Based on workflow complexity, estimate what percentage of current manual effort an AI agent could handle. For well-defined, document-heavy workflows, automation rates of 70–90% are achievable. For judgment-intensive workflows, 40–60% is more realistic for initial deployment.

Step 4: Calculate payback period. Total platform cost (licensing + implementation + internal resource allocation) ÷ monthly cost savings (monthly baseline cost × estimated automation rate) = payback period in months. Most enterprise AI agent deployments with well-scoped initial use cases achieve payback within 6–14 months.

Step 5: Model the compounding effect. AI agents improve over time as they process more data and as governance frameworks allow progressive autonomy increases. Year 2 ROI is typically 40–60% higher than Year 1 from the same agent deployment, without additional capital expenditure.
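Steps 2 to 4 can be checked with a few lines of arithmetic. The sketch below assumes 52 weeks per year spread over 12 months. Every input in the example (6 FTEs at $40 per hour, 30 hours per week each, a 75% automation rate, and the three cost components) is a hypothetical figure chosen for illustration, not deployment data. Step 5's Year 2 effect is left out because it depends on how far autonomy is actually extended.

```python
def weekly_baseline_cost(ftes: float, hourly_cost: float, hours_per_week_per_fte: float) -> float:
    """Step 2: what the workflow costs in staff time each week."""
    return ftes * hourly_cost * hours_per_week_per_fte


def monthly_savings(weekly_cost: float, automation_rate: float) -> float:
    """Step 3: monthly cost (52 weeks / 12 months) times the share the agent takes over."""
    return weekly_cost * 52 / 12 * automation_rate


def payback_months(licensing: float, implementation: float, internal_resources: float,
                   savings_per_month: float) -> float:
    """Step 4: total platform cost divided by monthly savings."""
    return (licensing + implementation + internal_resources) / savings_per_month


if __name__ == "__main__":
    weekly = weekly_baseline_cost(ftes=6, hourly_cost=40, hours_per_week_per_fte=30)  # 7,200
    savings = monthly_savings(weekly, automation_rate=0.75)                           # 23,400
    months = payback_months(150_000, 100_000, 30_800, savings)                        # hypothetical costs
    print(round(months, 1))  # 12.0
```

With these hypothetical inputs the payback lands at 12 months, inside the 6–14 month range given in Step 4. Rerunning the same three functions with your own baseline and a conservative automation rate is a quick way to test a vendor's payback claim.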

Industry benchmarks from our deployment data: manufacturing customers have reported faster order-to-confirm cycles with meaningfully fewer data-entry errors. Financial services customers report improved case handling efficiency and reduced manual operational load. Healthcare staffing customers report faster fill cycles and better workforce utilisation. These are not projections — they are production outcomes.

The Bottom Line: How to Make the Right Platform Decision for Your Enterprise

The enterprise AI agent platform market in 2026 is at a critical inflection point. The gap between platforms that work in demos and platforms that deliver production ROI at enterprise scale is wide — and it is not closing as fast as the marketing would have you believe.

The evaluation framework in this guide is designed to help you close that gap. Seven criteria, each derived from production implementation experience rather than vendor claims. The platforms that perform well against all seven are rare. Platforms that perform well on two or three and poorly on the rest are the majority.

Here is the practical path forward:

Start with governance and integration. If a platform cannot meet your security requirements and cannot integrate with your core systems, its agent capabilities are irrelevant. Eliminate vendors on these criteria first.

Demand deployment evidence, not capabilities. Any vendor can demonstrate what an agent can do in a controlled environment. Ask for documented case studies from comparable industries with time-to-production data, outcome metrics, and the freedom to speak with reference customers.

Scope your first deployment tightly. The fastest path to enterprise-wide AI agent adoption is proving ROI on a single, well-bounded workflow — then expanding from that proof. Enterprises that attempt platform-wide transformation on day one almost always stall.

Evaluate the methodology, not just the product. The vendor's implementation methodology is as important as the technology. A great platform deployed badly will fail. Ask for the implementation playbook before you sign.

Plan for progressive autonomy. Set your initial agent deployment to high human oversight. Build the evidence base. Then progressively reduce oversight as trust is established. This is how enterprises build durable confidence in AI agent systems — and how they avoid the high-profile failures that create lasting organisational resistance.

The enterprise AI agent opportunity is real. The deployment complexity is real. The gap between them is where the right platform partnership makes all the difference.

Ampcome has deployed AI agents across retail, logistics, financial services, healthcare, manufacturing, hospitality, real estate, utilities, and government. If you are evaluating enterprise AI agent platforms and want to understand how our deployment experience maps to your specific use case, [request a consultation].

Frequently Asked Questions

What is an enterprise AI agent platform?

An enterprise AI agent platform is a software infrastructure layer that enables businesses to deploy, orchestrate, and govern autonomous AI agents — systems that can perceive context from multiple data sources, reason through multi-step workflows, take action across integrated enterprise systems, and operate with configurable levels of human oversight.

How is an enterprise AI agent platform different from RPA?

RPA executes predefined, rule-based sequences and breaks when processes change. Enterprise AI agents reason through process variation, handle exceptions autonomously, and adapt without reprogramming. AI agent platforms can extend or replace RPA infrastructure depending on workflow complexity.

How long does it take to deploy an enterprise AI agent?

Deployment timelines vary by platform and workflow complexity. A well-scoped initial workflow on a purpose-built enterprise platform typically reaches production in 2–4 months. More complex, multi-system deployments with significant legacy integration requirements run 4–8 months. Enterprises building custom agent infrastructure from scratch should plan for 12–24 months before production-ready deployment.

What does an enterprise AI agent platform cost?

Pricing models vary: per-agent, per-workflow, platform licensing, or consumption-based. Total cost of ownership should include licensing, implementation services, internal resource allocation, and ongoing maintenance. For a meaningful evaluation, request total cost of ownership data for a comparable deployment from any vendor you are seriously considering.

What governance features should an enterprise AI agent platform have?

Essential governance features include: full audit trails with timestamped logs of every agent action, configurable human-in-the-loop approval gates, role-based access control at the agent level, action reversibility mechanisms, explainable outputs, and compliance framework support (SOC 2, GDPR, HIPAA, or sector-specific requirements). Platforms that cannot demonstrate these features in production — not just in a roadmap — are not enterprise-ready.

How do you measure ROI from an enterprise AI agent platform?

ROI measurement should combine cost reduction metrics (FTE hours recovered, error rate reduction, cycle time improvement) and revenue acceleration metrics (increased throughput, faster customer response, more accounts covered without additional headcount). Establish a documented baseline before deployment and measure against it at 90-day intervals.

Transform Your Business With Agentic Automation

Agentic automation is the rising star poised to overtake RPA and bring about a new wave of intelligent automation. Explore the core concepts of agentic automation, how it works, real-life examples, and strategies for a successful implementation in this ebook.
