Enterprise AI Agents in 2026: Mid-Year State of Adoption, Real Deployments & What's Actually Working

The shift has happened. Enterprise AI agents are no longer a pilot-stage experiment discussed in boardrooms and deferred to "next year." As of mid-2026, 54% of enterprises have integrated AI agents into core operations — not as assistants that answer questions, but as autonomous systems that execute workflows, process documents, monitor compliance, and coordinate decisions across entire business functions.

This report is different from the trend pieces published at the start of the year. It is grounded in real deployment data — across industries including logistics, financial services, retail, healthcare, energy, and real estate — and examines what is actually working, what is still breaking, and what enterprise leaders should prioritize for the second half of 2026.

If you are evaluating AI agents for your enterprise, scaling a deployment that has stalled, or trying to understand where the market actually stands versus where the hype says it stands, this is the mid-year data you need.

What is the current state of enterprise AI agent adoption in mid-2026?

The short answer: more than half of enterprises now run AI agents in production, but most are still in the early stages of cross-enterprise scale. The gap between adoption and impact is closing — but execution remains the differentiating factor.

Key statistics: from pilot purgatory to production

The data from the first half of 2026 tells a consistent story across multiple research sources:

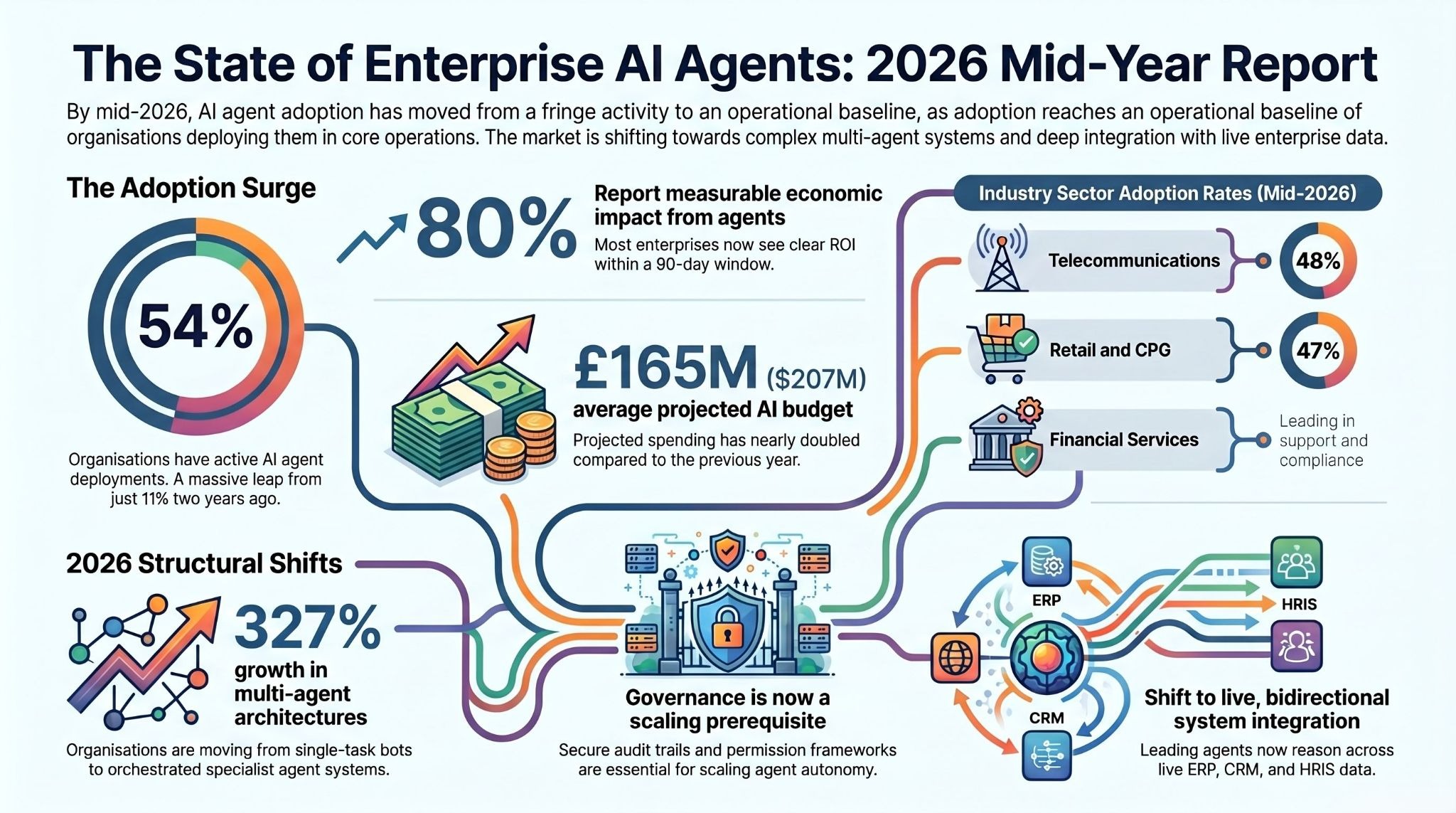

- 54% of organizations are actively deploying AI agents across core operations, up from 11% two years ago and 33% in mid-2024 (KPMG, Q1 2026 AI Pulse Survey).

- 88% of organizations report using AI in at least one business function, up from 78% a year ago (McKinsey, State of AI 2025).

- 80% of respondents report measurable economic impact from AI agents today (State of AI Agents Report, 2026).

- $207 million is the average AI budget organizations are projecting for the next 12 months — nearly double the figure from the same period last year (KPMG).

- 40% of enterprise applications will integrate task-specific AI agents by end of 2026, up from less than 5% in 2025 (Gartner).

- 62% of organizations are at least experimenting with AI agents, with 23% reporting full-scale deployment of an agentic system (McKinsey).

The headline shift from H1 2026 is not the numbers themselves — it is the change in where organizations sit on the curve. Two years ago, AI agent adoption was a fringe activity. By mid-2026, it has become a baseline expectation for enterprise operations teams, and the organizations that are not deploying are increasingly on the defensive explaining why.

Which industries are leading adoption right now?

Adoption is not evenly distributed. The industries leading mid-2026 deployment are:

- Telecommunications: 48% of companies now actively deploying agentic AI (NVIDIA State of AI 2026). Use cases concentrate in customer-facing automation and network operations.

- Retail and CPG: 47% adoption rate, led by inventory intelligence, customer support automation, and supply chain coordination.

- Financial services: Customer support automation (23% of deployments), software development augmentation (18%), and back-office processing including invoice management, disputes, and compliance monitoring.

- Healthcare: Software development and clinical research automation, with growing deployment in patient intake, documentation, and scheduling workflows.

- Manufacturing and logistics: Predictive maintenance, demand forecasting, yard management, and agentic analytics over operational data.

- Energy and utilities: Smart grid monitoring, anomaly detection, automated alerting, and energy optimization agents.

The pattern across all sectors is consistent: organizations are starting with high-volume, rule-bound workflows where errors are costly and the ROI of automation is measurable within 90 days.

What has changed since January 2026?

The first half of 2026 has brought three structural shifts that were not visible at the start of the year:

1. The move from single agents to multi-agent systems. Enterprises are transitioning away from deploying one agent for one workflow. Multi-agent architectures — where a manager agent orchestrates specialist agents across research, execution, and review — have grown by 327% in less than four months (Databricks, 2026 State of AI Agents Report). This is not an incremental upgrade. It represents a fundamental shift in how enterprises think about automating complex, multi-step business processes.

2. Governance is no longer an afterthought. The 2026 Gartner Hype Cycle for Agentic AI explicitly identifies governance, security, and cost-focused profiles as emerging alongside core agent technologies. Organizations that launched pilots in 2025 without robust audit trails and permission frameworks are now rebuilding those foundations — at significant cost. The enterprises scaling fastest in H1 2026 built governance infrastructure before scaling agent autonomy.

3. Integration depth has become the primary differentiator. Earlier generations of enterprise AI sat on top of data exports and static documents. The leading deployments in 2026 operate with live, bidirectional access to ERP, CRM, HRIS, ticketing, and operational systems. 46% of organizations cite integration with existing systems as their primary deployment challenge (State of AI Agents Report, 2026). The platforms that solve this problem — not just connecting to systems, but reasoning across them with relational intelligence — are separating from those that don't.
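
The manager-and-specialist pattern described in the first shift can be sketched in a few lines. This is a minimal illustration, not any vendor's architecture: `ManagerAgent`, the three specialist functions, and the task string are all hypothetical names, and each specialist is a plain function standing in for a model or tool call.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical specialist signature: (task, results so far) -> result text.
# Real specialists would call models and enterprise systems instead.
Specialist = Callable[[str, dict[str, str]], str]


def research(task: str, context: dict[str, str]) -> str:
    return f"findings for: {task}"


def execute(task: str, context: dict[str, str]) -> str:
    return f"draft based on [{context['research']}]"


def review(task: str, context: dict[str, str]) -> str:
    return "approved" if context["execute"].startswith("draft") else "rejected"


@dataclass
class ManagerAgent:
    """Runs specialists in a fixed order and passes earlier results forward."""
    steps: list[tuple[str, Specialist]]
    log: list[str] = field(default_factory=list)

    def run(self, task: str) -> dict[str, str]:
        context: dict[str, str] = {}
        for name, specialist in self.steps:
            context[name] = specialist(task, context)
            self.log.append(name)  # record which specialist ran, in order
        return context


manager = ManagerAgent(
    steps=[("research", research), ("execute", execute), ("review", review)]
)
result = manager.run("summarize supplier risk")
```

The fixed step order keeps the sketch short; a production orchestrator would likely choose specialists dynamically and handle failures between steps.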

Ampcome builds and deploys enterprise AI agents through assistents.ai — an enterprise agentic AI platform deployed across 12 industries, connecting to 300+ enterprise systems with full SOC 2 Type II, GDPR, HIPAA, and ISO 27001 compliance.

What are AI agents actually doing in enterprise operations today?

This is the section most "state of AI" reports skip. They cite the statistics. They describe the trends. They do not show you what is actually running in production. What follows is drawn from live enterprise deployments across industries, describing scope of work and outcomes without identifying specific organizations.

Finance and accounts payable automation

In financial services and enterprise finance functions, AI agents are tackling the workflows that have absorbed the most manual effort for decades.

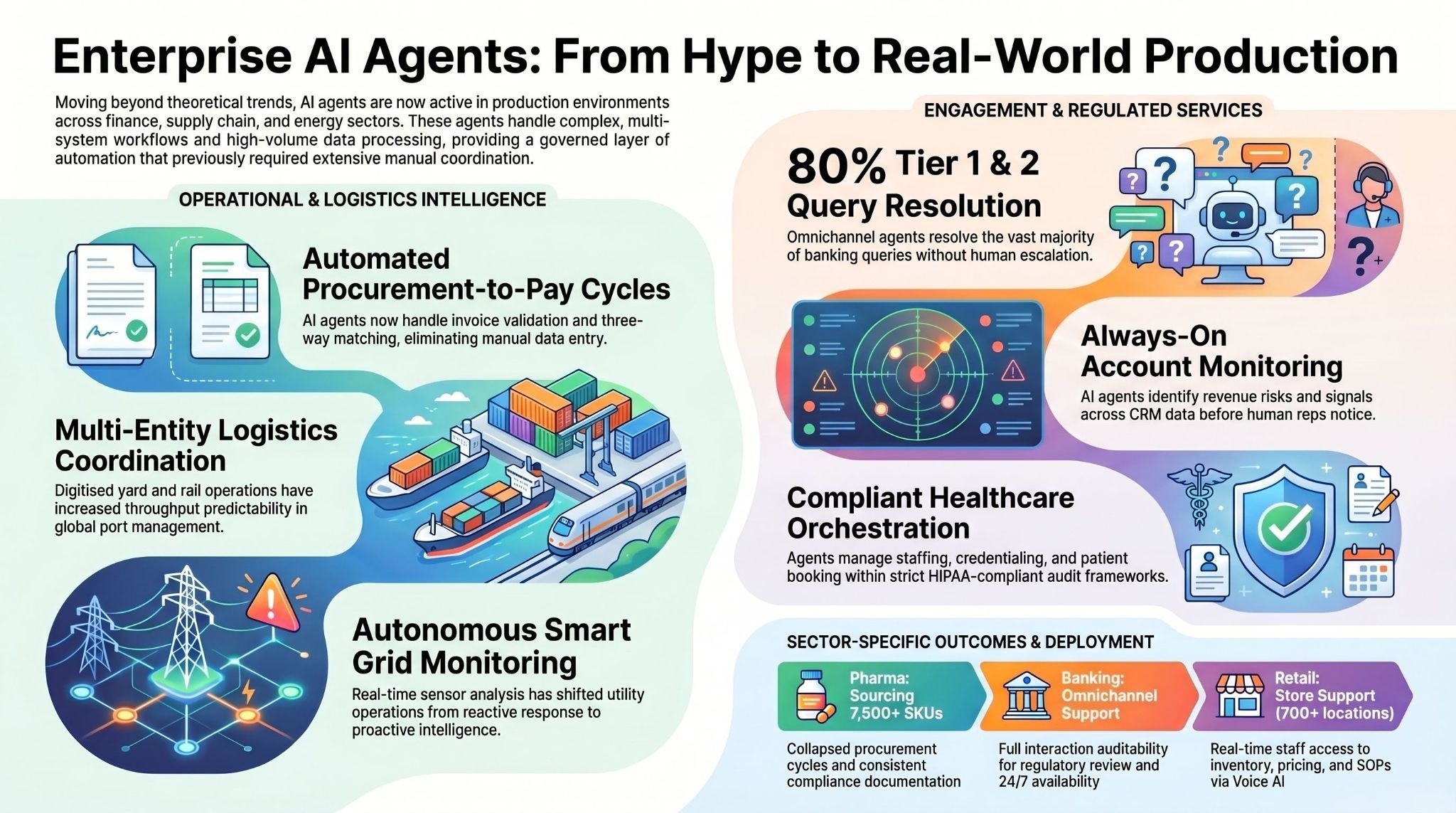

One deployment at a global logistics and supply chain organization automated the entire procurement-to-pay cycle: purchase order matching, invoice validation, three-way matching against goods receipts, and exception routing to human reviewers when discrepancies exceed defined thresholds.

The scope included integration with core ERP systems, automated audit log generation, and scheduled insight packs delivered to finance leadership. The measurable outcomes included elimination of manual data entry for standard invoices, reduction in late-payment penalties through faster cycle times, and improved vendor relationship management through consistent, accurate processing.
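
To make the matching step concrete, here is a minimal sketch of three-way matching with threshold-based exception routing. The `Line` type, the 2% price tolerance, and the return labels are assumptions chosen for illustration; the deployment's actual rules and thresholds are not described in the source.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Line:
    quantity: int
    unit_price: float


def three_way_match(po: Line, receipt_qty: int, invoice: Line,
                    price_tolerance: float = 0.02) -> str:
    """Compare one invoice line against its purchase order and goods receipt.

    Returns "approve" when everything agrees within tolerance, otherwise
    "route_to_human" so a reviewer can resolve the discrepancy.
    """
    # Quantity check: never pay for more than was ordered or received.
    if invoice.quantity > po.quantity or invoice.quantity > receipt_qty:
        return "route_to_human"
    # Price check: relative deviation from the PO price must stay in tolerance.
    deviation = abs(invoice.unit_price - po.unit_price) / po.unit_price
    if deviation > price_tolerance:
        return "route_to_human"
    return "approve"
```

For example, an invoice for 100 units against a receipt of 90 units is routed to a reviewer, while a 1% price difference on a fully received order is approved automatically.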

In the banking sector, AI agents are being deployed for omnichannel customer support with built-in workflow automation: agents that handle intake across chat, email, and phone; summarize cases for human agents; recommend next-best actions; and generate auditable records of every interaction for compliance review. One deployment in this category targeted 80% resolution of Tier 1 and Tier 2 queries without human escalation, while maintaining full auditability for regulatory review.

For enterprise finance teams more broadly, AI agents are now handling: cashflow monitoring and forecasting; scenario modeling for financial planning; automated KPI alerts for margin control, vendor performance, and working capital; and portfolio views that allow finance advisors to manage multiple client accounts simultaneously from a single interface.

Supply chain and logistics intelligence

The supply chain domain has seen some of the most operationally significant deployments of H1 2026. The complexity of multi-entity, cross-border logistics — spanning terminals, rail, inland warehouses, and customs documentation — creates exactly the kind of multi-system, high-volume environment where AI agents deliver disproportionate value.

One deployment at a global ports and logistics operator focused on terminal and rail management: digitizing yard operations, building rail scheduling and visibility workflows, managing exceptions automatically, and providing executive dashboards with real-time operational alerts. The architecture required integration across port management systems, rail scheduling platforms, and customer-facing tracking interfaces. Outcomes included higher predictability of terminal-to-rail throughput and more efficient coordination between terminal and inland logistics operations — reducing the manual coordination load that had previously required dedicated teams.

A separate supply chain deployment addressed the procurement side of a pharma ingredients business: automating request-for-quotation workflows, matching supplier capabilities to procurement requirements, handling quality and regulatory documentation, and generating analytics on price, lead-time, and vendor performance. The business problem was that sourcing from a catalogue of 7,500+ SKUs across hundreds of suppliers was creating bottlenecks in procurement cycles. Agents collapsed those cycles significantly while improving the consistency of compliance documentation.

In retail, a national chain operating 700+ stores deployed AI agents targeting three pain points simultaneously: store support (handling staff queries about inventory, promotions, and operational procedures), inventory intelligence (pricing and stock visibility per location), and training (on-demand access to SOPs via voice AI in Hindi and English). Each agent operated within a governed architecture with an admin console, ticketing integration, and real-time analytics.

Customer service and omnichannel support

Customer service is the most widely deployed category for enterprise AI agents in 2026 — and also the most mature in terms of measurable outcome benchmarks.

The leading deployments are not chatbots with better copy. They are multi-agent systems that: classify incoming queries across channels; retrieve context from CRM, product, and knowledge base systems; draft responses grounded in live data; escalate to humans when confidence falls below threshold; and generate analytics on resolution rates, handle time, and escalation patterns.
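
The classify, draft, and escalate steps can be illustrated with a short sketch. Everything here is hypothetical: the keyword classifier stands in for a model call, the confidence values are hard-coded, and the 0.7 threshold is an arbitrary example rather than a recommended setting.

```python
from dataclasses import dataclass


@dataclass
class Draft:
    category: str
    reply: str
    confidence: float  # 0.0 to 1.0, as a real classifier might report


def classify_and_draft(message: str, knowledge: dict[str, str]) -> Draft:
    """Toy keyword classifier standing in for a model plus retrieval step."""
    text = message.lower()
    for category, answer in knowledge.items():
        if category in text:
            return Draft(category, answer, confidence=0.9)
    return Draft("unknown", "", confidence=0.2)


def handle(message: str, knowledge: dict[str, str], threshold: float = 0.7) -> dict:
    draft = classify_and_draft(message, knowledge)
    if draft.confidence < threshold:
        # Below threshold: hand off to a human with the original context.
        return {"action": "escalate", "category": draft.category, "context": message}
    return {"action": "reply", "category": draft.category, "reply": draft.reply}
```

With a knowledge base of `{"refund": "Refunds take 5 days."}`, a refund question gets an automated reply, while an unrecognized message is escalated with its full text attached.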

Across multiple customer service deployments in 2026, the consistent outcomes include: reduction in average handle time; increase in first-contact resolution rates; 24/7 availability without corresponding increase in headcount; and significant improvement in consistency — agents apply the same logic to every interaction, eliminating the variance that comes from team size, shift schedules, and individual knowledge gaps.

One real estate deployment automated end-to-end tenant and customer support: query triage, rental and payment support workflows, FAQ resolution, ticketing and escalation to human teams, and a knowledge base layer over tenancy documents, policies, and SOPs. The 24/7 tenant experience was a key outcome, alongside SLA adherence through automated routing.

A driving institute deployment optimized customer journey workflows: enrollment through to lesson booking, instructor utilization, slot optimization, and customer experience dashboards. The measurable result was reduction in operational bottlenecks and better visibility into conversion and performance drivers — the kind of outcome that directly affects unit economics.

Sales, marketing, and CRM automation

AI agents are fundamentally changing what it means to run a sales operation. The most impactful deployments in this category are not tools that generate email copy — they are systems that monitor accounts continuously, identify signals of intent or risk, and surface recommended actions before the human sales rep would have noticed anything.

One deployment at an enterprise B2B organization built an always-on account monitoring system: capturing signals across communications, product usage, support tickets, and market activity; governing opportunity identification through defined rules; orchestrating follow-up actions; and maintaining CRM hygiene automatically. The outcome was higher account coverage without increasing headcount and faster response cycles on opportunities and renewals — both of which compound directly into pipeline and revenue.

For marketing operations, agentic AI is being deployed to handle competitive monitoring (continuous tracking of pricing, promotions, product changes, and availability across digital channels), campaign performance analytics, and brand insight generation. One competitive intelligence deployment replaced a team of analysts running manual checks across dozens of portals — converting the process from weekly reporting to real-time, continuous monitoring with automated alerts when thresholds are crossed.

Healthcare, compliance, and regulated industries

Healthcare deployments in 2026 are notable for their emphasis on compliance architecture. HIPAA compliance, audit trails, and human oversight layers are not optional features in this vertical — they are deployment prerequisites.

Live deployments in H1 2026 span: healthcare staffing platforms that automate talent onboarding, credential capture, facility matching, scheduling, and compliance tracking; geriatric care operations platforms providing revenue cycle visibility and program performance analytics; and physician-led inpatient enterprises using AI agents for revenue management, utilization analytics, and billing workflow optimization.

Across these deployments, the consistent pattern is that AI agents handle the high-volume, structured portions of the workflow — intake, matching, scheduling, documentation, reporting — while humans retain decision authority over clinical and exception-handling functions. The governance architecture is not a constraint on performance; it is what makes deployment in regulated environments possible at all.

A UK private healthcare provider deployed agents to automate the end-to-end patient service workflow: booking orchestration, status monitoring, customer notifications, and operational analytics. The operational outcome was faster customer communications, fewer missed handoffs, and improved service visibility through unified reporting.

Energy, utilities, and smart grid operations

The energy sector has emerged as one of the more technically sophisticated deployment environments for agentic AI in 2026. The combination of continuous sensor data, regulatory reporting requirements, and the cost of unplanned outages creates a compelling ROI case for autonomous monitoring agents.

One deployment at a state power transmission utility built a complete smart grid monitoring layer: KPI dashboards for transmission operations, anomaly detection across outage and loss data, predictive maintenance indicators, and automated alerts for field operations teams. The architecture required ingesting data from grid sensors and operational systems, running continuous anomaly detection models, and routing alerts to the right field teams within defined SLA windows. The measurable outcome was faster identification of grid exceptions and more proactive operations — shifting the organization from reactive incident response to continuous operational intelligence.
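
The source does not say which detection method this deployment used. As one simple stand-in, the sketch below flags readings that deviate sharply from a trailing window using a z-score; the window size, the 3-sigma limit, and the function name are illustrative assumptions.

```python
from statistics import mean, stdev


def detect_anomalies(readings: list[float], window: int = 5,
                     z_limit: float = 3.0) -> list[int]:
    """Return indexes whose value is more than z_limit standard deviations
    away from the mean of the preceding `window` readings."""
    flagged = []
    for i in range(window, len(readings)):
        history = readings[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            # Flat history: treat any change at all as a deviation.
            if readings[i] != mu:
                flagged.append(i)
            continue
        if abs(readings[i] - mu) / sigma > z_limit:
            flagged.append(i)
    return flagged
```

Each flagged index would then feed the alert-routing step; production systems typically use more robust models that account for seasonality and sensor faults.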

A second energy deployment focused on campus-scale energy management at a research institution: sensor data ingestion, energy consumption forecasting, optimization recommendations, and proactive alerting. The business problem was that energy consumption across a multi-building campus was generating costs and inefficiencies that were invisible without continuous monitoring. Agents provided the visibility layer and automated the alert routing that allowed facilities teams to act on optimization opportunities they had previously missed entirely.

What results are enterprises actually seeing? Real deployment outcomes

Across the deployments described above and the broader dataset of enterprise AI agent implementations in 2026, the measurable outcomes cluster around four categories.

Processing speed and throughput gains

The most consistently reported outcome across enterprise AI agent deployments is reduction in processing cycle time. Specific benchmarks from production deployments include:

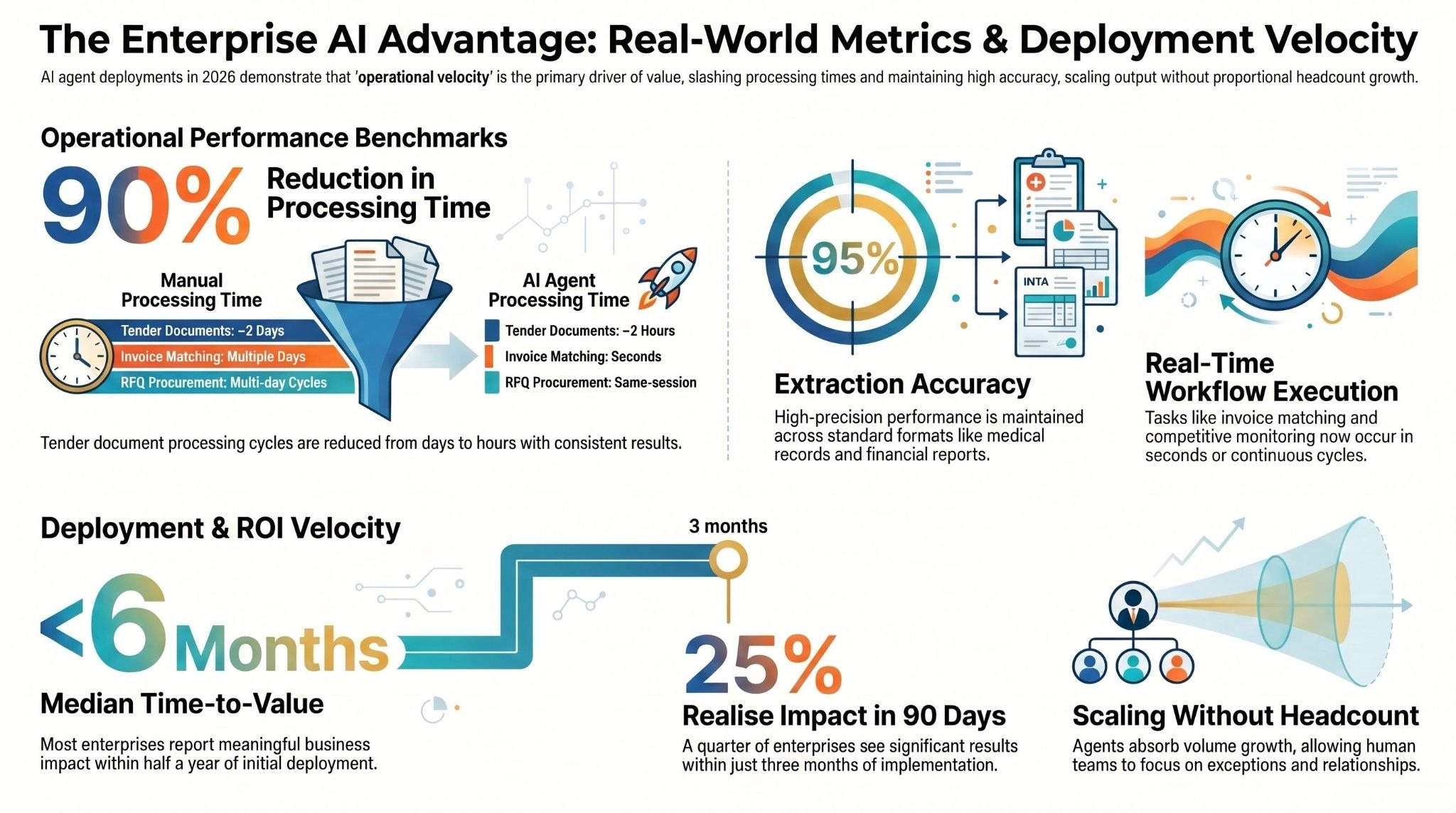

- Tender document processing engineered to run approximately 90% faster than manual processing, with approximately 95% extraction accuracy on standard document formats.

- Invoice matching and routing reduced from days to seconds in accounts payable workflows.

- Competitive intelligence monitoring converted from weekly manual reporting cycles to real-time continuous monitoring.

- RFQ processing in procurement reduced from multi-day cycles to same-session completion for standard sourcing requests.

- Healthcare staffing fill cycles reduced significantly, with measurable improvement in facility utilization rates.

These are not marginal improvements. A 90% reduction in processing time for tender documents means a business that previously needed two days to turn around a bid response can now do it in hours. That kind of operational leverage changes what is competitively possible.

Headcount and operational cost impact

AI agents in production are not primarily replacing workers — they are allowing organizations to handle significantly higher volumes without proportional headcount growth. The framing that consistently emerges from deployments is: scalable advisory-like insight without added headcount.

The most operationally significant pattern is in functions where demand is growing faster than organizations can hire: customer support at scale, financial analysis across multiple client portfolios, competitive monitoring across hundreds of data sources, and compliance review across growing regulatory requirements. Agents absorb the volume growth; human teams focus on exception handling and relationship management.

One advisory platform deployment specifically enabled a financial advisory practice to serve multiple client portfolios simultaneously with continuous insight generation — a capability that would have required multiple additional analysts without agentic AI.

Accuracy and error reduction benchmarks

Document processing accuracy is one of the clearest measurable outcomes in enterprise AI agent deployments. Production deployments report extraction accuracy targets of approximately 95% on standard document formats, consistent across tender documents, medical records, procurement documentation, and financial reports.

Beyond document extraction, accuracy improvements are measurable in: CRM data hygiene (pipeline forecasts built on stale data are a well-documented revenue problem); compliance monitoring (catching policy gaps in real time versus discovering them during quarterly audits); and inventory intelligence (pricing and stock data that is accurate per location rather than aggregated and delayed).

Time-to-value: how long does deployment actually take?

G2's data shows that more than 25% of enterprises report meaningful impact within three months of deploying AI agents, with a median time-to-value of six months or less. This aligns with deployment patterns observed in production environments.

The organizations that reach value fastest share three characteristics: they start with a single, high-volume workflow rather than trying to automate everything simultaneously; they have clean, accessible data in the target systems; and they invest in governance infrastructure before scaling agent autonomy rather than retrofitting governance after problems emerge.

Platforms like assistents.ai are specifically designed to reduce the time-to-value curve for enterprise deployments. With pre-built agents for Finance, Sales, Customer Support, HR, Marketing, and Compliance — each with domain-specific knowledge and pre-configured system connectors — the gap between proof of concept and production deployment is measured in weeks rather than quarters.

What is stopping enterprises from scaling AI agents in 2026?

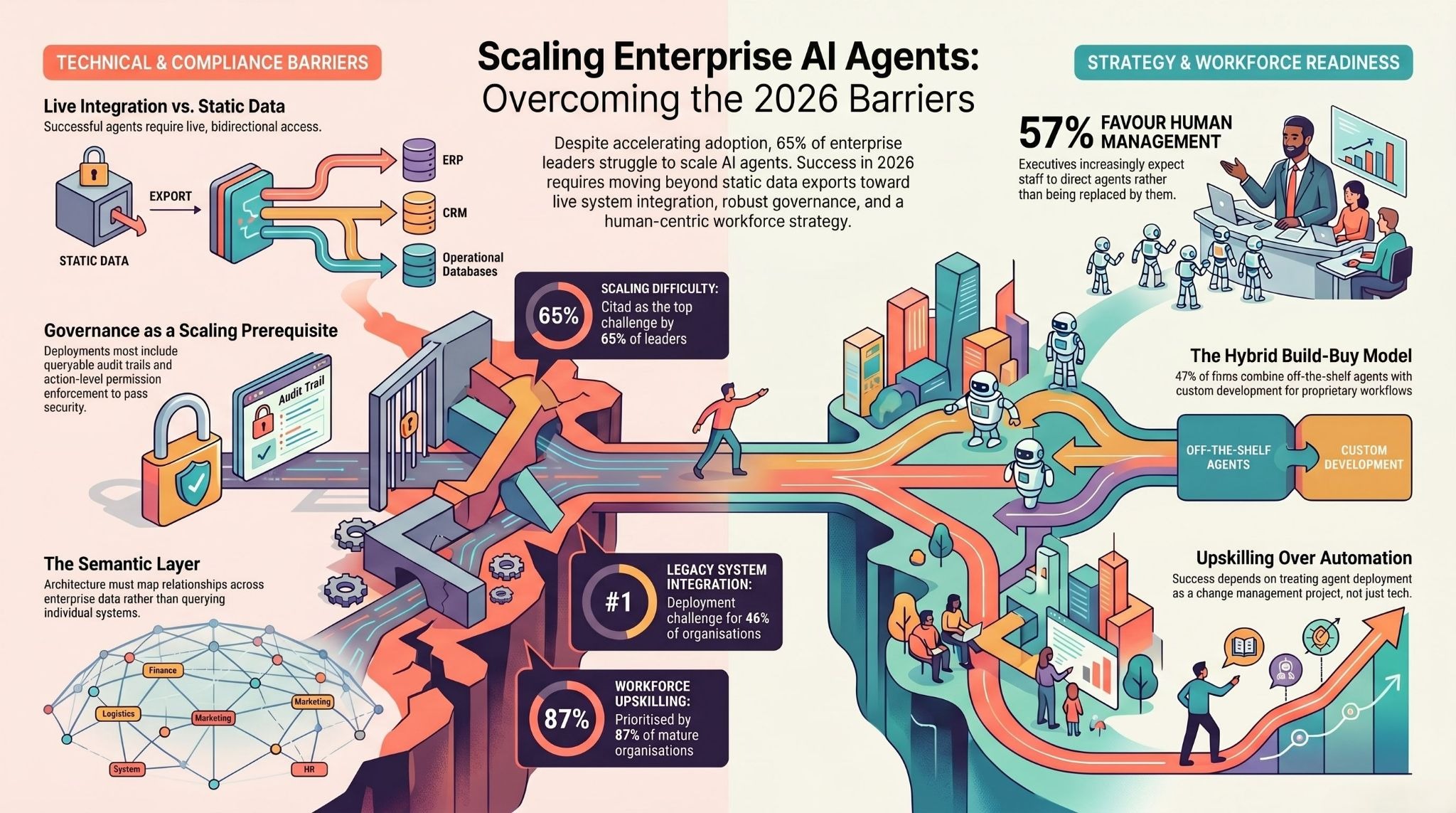

Adoption is accelerating. Outcomes are measurable. So why are 65% of enterprise leaders still citing scaling as their top challenge (KPMG, Q1 2026)? The answers are consistent across the data.

Integration with legacy systems

Integration with existing systems is the #1 deployment challenge for 46% of organizations in 2026 (State of AI Agents Report). This is not a technology limitation — it is an architecture problem. Most enterprise AI agent platforms were designed to sit on top of data exports and document stores. The production-grade deployments that are generating the outcomes described above operate with live, bidirectional access to the systems where work actually happens: ERP, CRM, HRIS, ticketing, operational databases.

The platforms that solve this problem — building a semantic layer that maps relationships across enterprise data rather than just querying individual systems — are the ones enabling the most impactful deployments.

assistents.ai connects to 300+ enterprise systems including SAP, Salesforce, Oracle, ServiceNow, Workday, and HubSpot, and the platform's three-layer architecture (Context Engine, Semantic Layer, Action Engine) is specifically built to reason across connected systems rather than querying them individually.

The practical implication for enterprise teams evaluating AI agents: ask specifically how the platform handles live system access, bidirectional data flow, and relationship reasoning across multiple connected applications. Platforms that require data exports or operate only on static document stores will hit a scaling ceiling quickly.

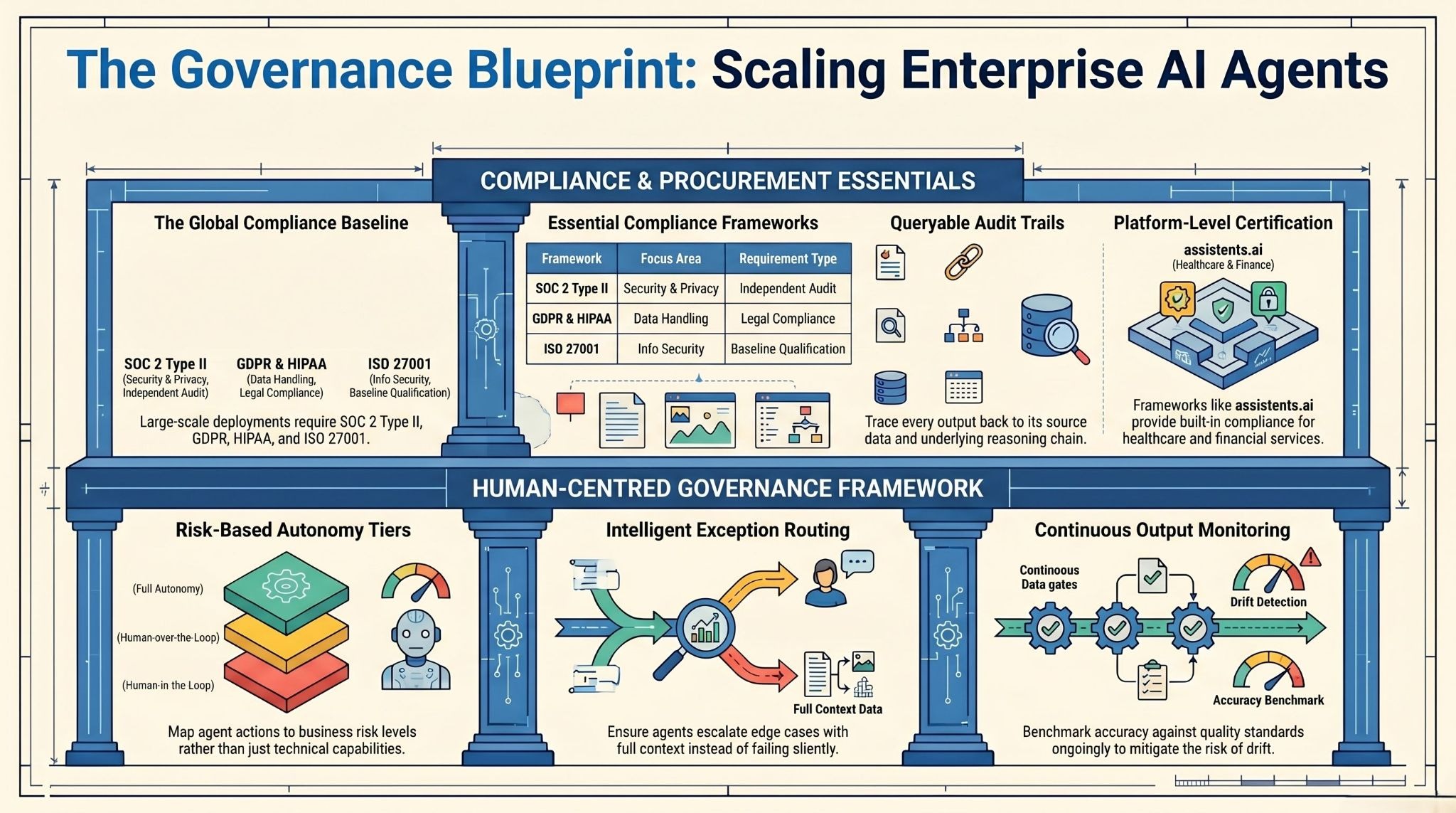

Governance, auditability, and compliance gaps

The second major scaling barrier is governance. Organizations that launched pilots in 2025 with minimal audit trail infrastructure are now discovering that the path to broader deployment requires rebuilding the permission and logging architecture they skipped in the rush to demonstrate capability.

The specific gaps that are blocking scale in H1 2026:

- Audit trails: Enterprise procurement committees and legal teams require complete, queryable records of every agent action. Deployments that cannot produce this documentation cannot pass enterprise security review.

- Permission enforcement: Agents operating with broad system access create security and compliance risks. Production-grade deployments enforce role-based permissions at the action level, not just at the authentication layer.

- Explainability: As AI agents take on higher-stakes decisions — routing exceptions, flagging compliance issues, updating financial records — the ability to explain why an agent took a specific action becomes a regulatory and operational requirement.
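
Action-level permission enforcement, as opposed to a single check at login, might look like the following sketch. The role names, the `PERMISSIONS` matrix, and the `perform` wrapper are hypothetical and exist only to show the shape of the check.

```python
# Hypothetical permission matrix: role -> allowed (system, action) pairs.
PERMISSIONS = {
    "ap_agent": {("erp", "read_invoice"), ("erp", "flag_exception")},
    "support_agent": {("crm", "read_case"), ("crm", "add_note")},
}


class PermissionDenied(Exception):
    """Raised when an agent attempts an action outside its role."""


def authorize(role: str, system: str, action: str) -> None:
    if (system, action) not in PERMISSIONS.get(role, set()):
        raise PermissionDenied(f"{role} may not {action} on {system}")


def perform(role: str, system: str, action: str, handler):
    # The check runs on every action, not once per authenticated session.
    authorize(role, system, action)
    return handler()
```

Here an accounts payable agent can read invoices and flag exceptions, but an attempt to post a payment raises `PermissionDenied` before the handler runs.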

Organizations deploying on platforms with SOC 2 Type II, GDPR, HIPAA, and ISO 27001 compliance — like assistents.ai — are finding it significantly easier to pass enterprise security review and deploy in regulated environments. Compliance is not a feature; it is a deployment prerequisite for any serious enterprise environment.

Workforce readiness and change management

87% of organizations are prioritizing workforce upskilling and reskilling as their AI strategies mature (KPMG). The human side of AI agent deployment is consistently underinvested relative to the technology side.

The deployments that succeed in 2026 treat agents as a change management project as much as a technology project. Teams need to understand what agents can and cannot do, how to handle escalations, how to interpret agent-generated outputs, and how to maintain meaningful human oversight without creating bottlenecks that negate the efficiency gains.

The clearest signal from H1 2026 data: 57% of executives now expect people to manage and direct AI agents — not be replaced by them (KPMG). The organizations building toward that operating model are scaling faster than those still framing AI agents as a headcount reduction exercise.

Build vs buy: the hybrid model most enterprises are choosing

47% of organizations are now taking a hybrid approach — combining off-the-shelf agents with custom development (State of AI Agents Report, 2026). This reflects the maturation of the market rather than indecision.

The pattern is consistent: enterprises start with pre-built agents for well-defined, high-volume workflows (invoice processing, customer support triage, CRM hygiene), demonstrate ROI, and then invest in custom agent development for proprietary workflows that no off-the-shelf product addresses.

The risk of the pure build path is speed. Custom agent development from scratch takes months and requires significant ML engineering resources. The risk of the pure buy path is flexibility. Pre-built agents that cannot adapt to proprietary workflows hit operational ceilings quickly.

Platforms that support both paths — pre-built agents for common enterprise workflows and a development layer for custom deployment — are where the most successful enterprise deployments are concentrated.

How should enterprises govern AI agents in 2026?

Governance is not the opposite of speed — it is what makes scale possible. The organizations that have deployed AI agents most broadly and most reliably in 2026 built governance infrastructure first and expanded autonomy second.

What does human-in-the-loop mean at scale?

Human-in-the-loop is often treated as a synonym for "humans approve everything" — which defeats the purpose of autonomous agents entirely. The operational definition that works in production is more nuanced: humans set the rules, define the exception thresholds, review the edge cases, and monitor the outputs. Agents execute within those boundaries at full autonomy.

This means every production deployment needs:

- Defined autonomy tiers: Which actions can agents execute fully autonomously? Which require human review before execution? Which require explicit human approval? These tiers should map to business risk, not to agent capability.

- Exception routing: When agents encounter situations outside their defined parameters, they should escalate to humans with full context — not fail silently or execute anyway.

- Continuous output monitoring: Agent outputs should be monitored against quality and accuracy benchmarks on an ongoing basis, not just at launch. Drift in output quality is a real operational risk.
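
One way to express autonomy tiers and exception routing is a lookup from action to tier, with anything unlisted escalated rather than executed. The action names and tier assignments below are hypothetical examples; in practice they would come from a business risk assessment.

```python
from enum import Enum


class Tier(Enum):
    AUTONOMOUS = "execute"          # agent acts without human involvement
    REVIEW = "queue_for_review"     # human reviews before execution
    APPROVAL = "await_approval"     # explicit human sign-off required


# Hypothetical mapping from action to tier, driven by business risk.
ACTION_TIERS = {
    "send_status_update": Tier.AUTONOMOUS,
    "update_crm_record": Tier.REVIEW,
    "release_payment": Tier.APPROVAL,
}


def decide(action: str) -> str:
    tier = ACTION_TIERS.get(action)
    if tier is None:
        # Outside defined parameters: escalate instead of failing silently
        # or executing anyway.
        return "escalate_with_context"
    return tier.value
```

Defaulting unknown actions to escalation means a newly added capability cannot run autonomously until someone assigns it a tier.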

The deployments that have maintained human-in-the-loop governance most effectively are those that built the oversight layer into the product architecture rather than treating it as a separate compliance exercise.

SOC 2, GDPR, HIPAA, and ISO 27001: the compliance baseline

For enterprise AI agents operating in 2026, the minimum compliance baseline for most large-organization deployments is:

- SOC 2 Type II: Demonstrates that the platform has been independently audited for security, availability, processing integrity, confidentiality, and privacy controls — not just attested to by the vendor.

- GDPR: Covers data processing requirements for organizations with EU operations or EU customer data. Agents that process personal data need to operate within GDPR-compliant data handling frameworks.

- HIPAA: Required for any deployment handling protected health information. Healthcare deployments that do not operate on HIPAA-compliant infrastructure cannot pass procurement review.

- ISO 27001: The international standard for information security management systems. Increasingly required by enterprise procurement teams as a baseline vendor qualification.

Critically, these certifications and compliance attestations need to apply to the underlying platform, not just the deployment environment. assistents.ai is compliant with all four frameworks (SOC 2 Type II, GDPR, HIPAA, and ISO 27001), which is why it can deploy in healthcare, financial services, and regulated enterprise environments without creating compliance risk.

Audit trails and explainability: what procurement teams actually ask for

The audit trail requirement is not theoretical. In enterprise procurement reviews — particularly in financial services, healthcare, and public sector — procurement teams are explicitly asking for:

- Complete, queryable logs of every agent action

- Evidence that agents operated within defined permission boundaries

- The ability to trace any specific output back to its source data and reasoning chain

- Documentation of how exception scenarios were handled
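
A queryable audit trail covering these requests can be sketched with an in-memory SQLite table. The schema, column names, and helper functions are assumptions made for illustration; a real deployment would use durable, tamper-resistant storage.

```python
import json
import sqlite3
from datetime import datetime, timezone


def open_audit_log() -> sqlite3.Connection:
    """Create an in-memory audit table (illustrative schema only)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE audit (ts TEXT, agent TEXT, action TEXT, "
        "allowed INTEGER, sources TEXT, reasoning TEXT)"
    )
    return conn


def record(conn: sqlite3.Connection, agent: str, action: str, allowed: bool,
           sources: list[str], reasoning: str) -> None:
    """Append one agent action with its source data and reasoning summary."""
    conn.execute(
        "INSERT INTO audit VALUES (?, ?, ?, ?, ?, ?)",
        (datetime.now(timezone.utc).isoformat(), agent, action,
         int(allowed), json.dumps(sources), reasoning),
    )


def actions_outside_permissions(conn: sqlite3.Connection) -> list[tuple]:
    """Query every attempted action that was blocked by permission checks."""
    rows = conn.execute("SELECT agent, action FROM audit WHERE allowed = 0")
    return rows.fetchall()
```

Because each row stores its source references and a reasoning summary, a reviewer can trace a specific output back to the data behind it with an ordinary SQL query.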

The organizations that are winning procurement approvals fastest are those that can demonstrate these capabilities from the first proof-of-concept — not as a retrofit to a working prototype.

How do AI agents compare to RPA for enterprise automation in 2026?

The short answer: RPA and AI agents are complementary, not competing. The organizations getting the most value from agentic AI in 2026 are not replacing their RPA infrastructure — they are building AI agents on top of it.

Where RPA still wins

RPA remains the right tool for:

- Deterministic, rule-based processes where every step is defined, every input is structured, and the workflow does not change. Payroll processing, standard data migration, and structured report generation are examples where RPA is still the optimal solution.

- System integration where AI is unnecessary. If the business logic is fully captured in rules and the data is clean and structured, adding AI reasoning adds cost and complexity without adding value.

- High-volume, zero-tolerance-for-error workflows where deterministic execution is more valuable than adaptability.

The installed base of RPA in large enterprises is enormous. UiPath alone has one of the largest enterprise automation install bases in the market. Ripping out working RPA infrastructure to replace it with AI agents is a mistake that multiple organizations are discovering in 2026.

Where agentic AI replaces or surpasses RPA

AI agents are superior to RPA in:

- Unstructured data processing. Tender documents, emails, customer messages, medical records, and contract documents are inherently unstructured. RPA cannot reason over unstructured inputs; AI agents can extract, classify, and act on them reliably.

- Multi-step decision-making. When a workflow requires judgment — evaluating supplier risk, assessing invoice exceptions, routing escalations based on case context — RPA requires explicit rules for every scenario. AI agents can reason through novel situations within defined governance boundaries.

- Cross-system orchestration. RPA bots typically operate on one system at a time. AI agents can operate across multiple systems simultaneously, synthesizing context from CRM, ERP, and support systems in a single reasoning step.

- Dynamic environments. Workflows that change frequently break RPA bots. AI agents adapt to interface and process changes more resiliently.

The hybrid model: RPA as the foundation, AI agents on top

The most effective architecture in 2026 treats RPA as the reliable execution layer for structured, deterministic tasks and AI agents as the reasoning layer for unstructured inputs, exception handling, and cross-system orchestration.

Practically, this means: an AI agent might receive an unstructured invoice via email, extract the relevant data fields, validate them against purchase orders in the ERP system, and then trigger an existing RPA bot to execute the final payment workflow. The AI handles the reasoning; the RPA handles the deterministic execution. Neither is redundant.
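
The invoice handoff described above can be sketched end to end. This is a toy under stated assumptions: the regular expressions stand in for the agent's extraction step, `PURCHASE_ORDERS` stands in for an ERP lookup, and `trigger_rpa_payment` is a placeholder rather than any RPA vendor's API.

```python
import re

# Hypothetical purchase order table standing in for an ERP lookup.
PURCHASE_ORDERS = {"PO-1001": 2500.00}


def extract_fields(email_body: str) -> dict:
    """Regex stand-in for the agent's extraction over unstructured text."""
    po = re.search(r"PO-\d+", email_body)
    amount = re.search(r"(?:total|amount)\D*([\d,]+\.\d{2})",
                       email_body, re.IGNORECASE)
    return {
        "po_number": po.group(0) if po else None,
        "amount": float(amount.group(1).replace(",", "")) if amount else None,
    }


def trigger_rpa_payment(po_number: str, amount: float) -> str:
    """Placeholder for handing off to an existing, deterministic RPA bot."""
    return f"queued payment {amount:.2f} for {po_number}"


def process_invoice_email(email_body: str) -> str:
    fields = extract_fields(email_body)
    expected = PURCHASE_ORDERS.get(fields["po_number"])
    # Reasoning layer: validate before any deterministic execution happens.
    if (expected is None or fields["amount"] is None
            or abs(fields["amount"] - expected) > 0.01):
        return "exception: route to human reviewer"
    return trigger_rpa_payment(fields["po_number"], fields["amount"])
```

The split mirrors the architecture: extraction and validation live in the agent layer, while the payment itself is delegated to the existing bot unchanged.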

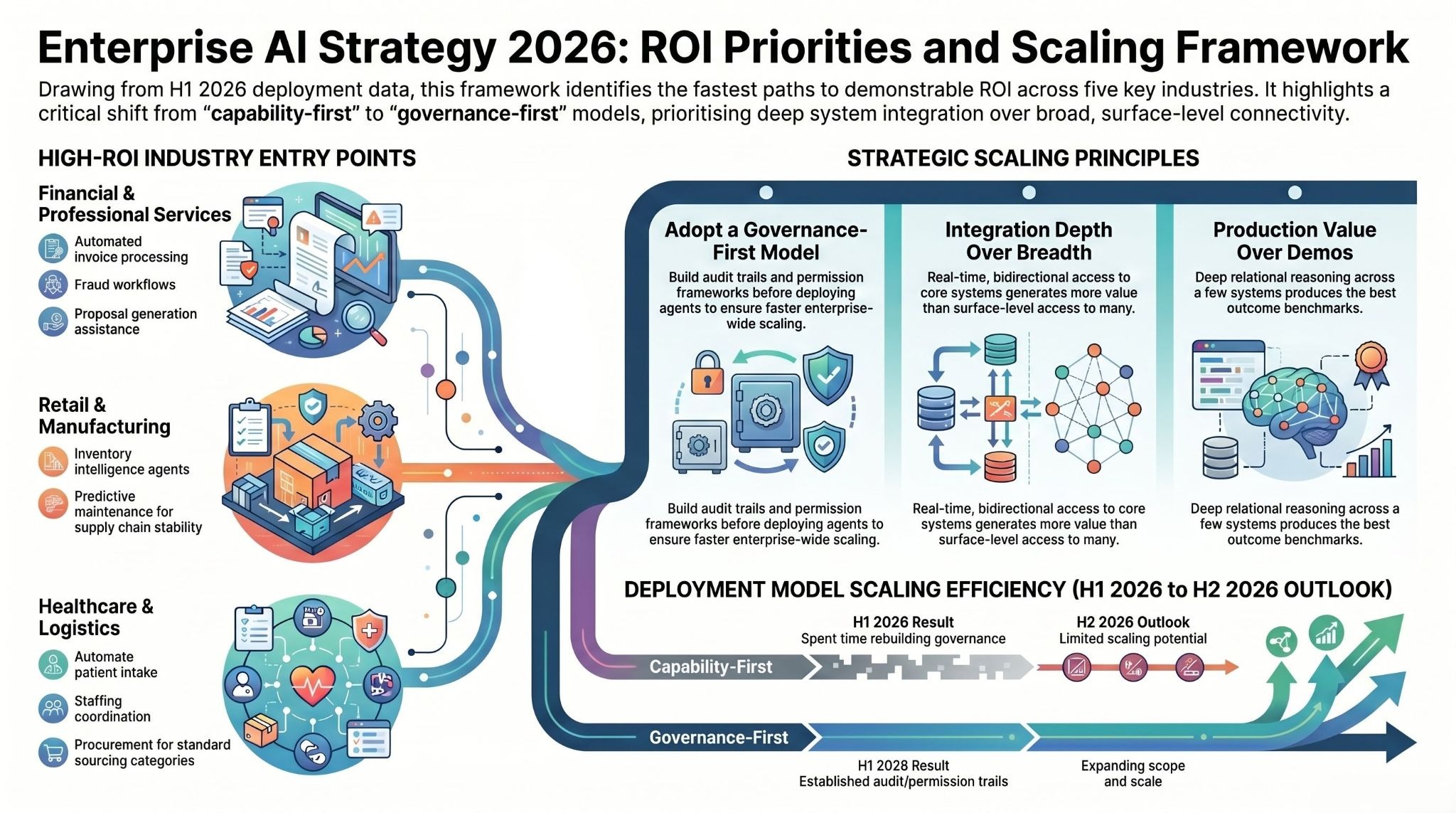

What should enterprises prioritize for the second half of 2026?

Based on the deployment data from H1 2026, here are the highest-ROI priorities for the second half of the year — organized by enterprise maturity stage.

High-ROI starting points by industry

For organizations that have not yet deployed AI agents in production, the fastest paths to demonstrable ROI in H2 2026 are:

- Financial services: Invoice processing and accounts payable automation, customer support tier-1 resolution, and dispute and fraud workflow automation. These are high-volume, well-defined workflows with measurable before/after metrics.

- Retail: Inventory intelligence agents that monitor pricing, stock, and promotional data across locations. Customer support automation for high-volume query types. Supply chain exception monitoring.

- Healthcare: Patient intake and scheduling coordination, healthcare staffing optimization, and compliance documentation automation. Start with workflows that do not require clinical judgment.

- Manufacturing and logistics: Predictive maintenance monitoring, supply chain analytics, and procurement automation for standard sourcing categories.

- Professional services: Competitive intelligence monitoring, proposal generation assistance, and client reporting automation.

The governance-first deployment model

The clearest lesson from H1 2026 is that governance-first deployments scale faster than capability-first deployments. Organizations that launched with broad autonomy and minimal oversight spent H1 2026 rebuilding the governance layer. Organizations that built audit trails, permission frameworks, and exception routing into their initial deployment are expanding scope in H2 2026.

The practical implication: if you are starting a new AI agent deployment in H2 2026, build the governance architecture before you build the agent. Define your autonomy tiers, permission matrix, and audit requirements upfront. It takes slightly longer to get to first deployment. It takes significantly less time to get to enterprise-wide scale.
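As a rough sketch of what "autonomy tiers, permission matrix, and audit requirements" might look like when written down before any agent exists, consider the following. The tier names, the `PERMISSIONS` table, the role and action strings, and the `Governor` class are all illustrative assumptions, not a description of any particular platform.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import IntEnum


class AutonomyTier(IntEnum):
    SUGGEST = 1      # agent proposes, a human executes
    APPROVE = 2      # agent executes only after human approval
    AUTONOMOUS = 3   # agent executes on its own and logs the action


# Hypothetical permission matrix: (agent role, action) -> tier granted.
# Anything not listed is denied by default.
PERMISSIONS = {
    ("finance_agent", "read_invoice"): AutonomyTier.AUTONOMOUS,
    ("finance_agent", "post_payment"): AutonomyTier.APPROVE,
    ("finance_agent", "change_vendor_bank_details"): AutonomyTier.SUGGEST,
}


@dataclass
class Governor:
    audit_log: list = field(default_factory=list)

    def authorize(self, role: str, action: str, human_approved: bool = False) -> str:
        tier = PERMISSIONS.get((role, action))
        if tier is None:
            decision = "denied"
        elif tier == AutonomyTier.AUTONOMOUS:
            decision = "executed"
        elif tier == AutonomyTier.APPROVE:
            decision = "executed" if human_approved else "pending_approval"
        else:
            decision = "suggested"
        # Every request is recorded, including denials.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "role": role,
            "action": action,
            "decision": decision,
        })
        return decision
```

Expanding scope later then means editing the matrix rather than rebuilding the agent, which is one way to read the "slower to first deployment, faster to scale" trade-off.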

Integration depth over integration breadth

The temptation in H2 2026 is to connect as many systems as possible as quickly as possible. The deployments that are generating the best outcomes are doing the opposite: going deep on a small number of systems before expanding breadth.

A finance agent that has bidirectional, real-time access to your ERP, your accounts payable system, and your vendor database — and can reason across all three simultaneously — generates more value than an agent with surface-level access to ten systems. Depth of integration enables the kind of relational reasoning that produces the outcome benchmarks described in this report. Breadth of integration without depth produces demos, not production value.

The bottom line: where enterprise AI agents stand at mid-2026

The conversation about enterprise AI agents has definitively moved from "should we deploy?" to "how do we scale?" The data from H1 2026 is unambiguous: more than half of enterprises are running agents in production, outcomes are measurable across every major industry vertical, and the organizations that built governance infrastructure first are scaling fastest.

The remaining gap is not in technology capability but in execution. Integration depth, governance architecture, and workforce readiness are what separate deployments that compound in value from deployments that plateau after the initial pilot.

For H2 2026, the highest-leverage actions are: starting with a single high-volume workflow rather than a broad pilot; building audit trail and permission infrastructure before expanding agent autonomy; going deep on a small number of system integrations before expanding breadth; and choosing a platform that is designed for enterprise compliance requirements from day one.

The market is moving at the pace Gartner predicted: 40% of enterprise applications integrating AI agents by end of 2026. The organizations that are executing on that trajectory right now will build compounding advantages that competitors will find increasingly difficult to match by 2027.

Frequently asked questions about enterprise AI agents in 2026

How long does it take to deploy an enterprise AI agent?

For well-defined, high-volume workflows with clean data and accessible system integrations, production deployments are reaching measurable outcomes within four to eight weeks.

More complex deployments (spanning multiple systems, multiple departments, or custom workflow logic) typically reach full production within three to six months. Gartner predicts that 40% of enterprise applications will integrate AI agents by end of 2026, which implies a significant acceleration in deployment velocity relative to prior generations of enterprise software.

Platforms with pre-built agents and pre-configured connectors — like assistents.ai, which offers purpose-built agents for Finance, Sales, Customer Support, HR, Marketing, and Compliance — can compress the early stages of deployment significantly. The enterprise deployments described in this report used a proven four-week deployment model for initial production rollout, with expansion happening in subsequent phases.

What is the difference between an AI agent and a chatbot?

A chatbot responds to queries within a single conversation thread. It does not take actions, it does not connect to live systems, and it does not execute multi-step workflows. It generates text.

An AI agent interprets a goal, plans a sequence of actions, accesses live systems to gather context, executes steps across multiple applications with permission checks at each stage, and produces outcomes — not just outputs. An AI agent can match an invoice to a purchase order and route it for payment. A chatbot can tell you what invoice matching is.

The distinction matters practically because many enterprise software vendors are rebranding chatbot products as "AI agents" in 2026. The evaluation criteria that separate agents from chatbots: Does it execute actions in live systems? Does it reason across multiple data sources simultaneously? Does it maintain state and context across multi-step workflows? Does it produce an audit trail of every action?

Which enterprise workflows benefit most from AI agents?

Based on production deployment outcomes in 2026, the highest-value workflows are:

- High-volume document processing (invoices, tender documents, medical records, procurement documents)

- Cross-system data reconciliation (three-way matching, CRM hygiene, compliance monitoring)

- Omnichannel customer support (query triage, context retrieval, response drafting, escalation routing)

- Continuous monitoring workflows (competitive intelligence, compliance gaps, grid operations, inventory)

- Sales and account management (opportunity monitoring, pipeline hygiene, next-best-action recommendation)

The common characteristics: high volume, structured inputs or extractable unstructured inputs, clear definition of "correct" output, and significant cost of manual processing errors.
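Three-way matching, listed above under cross-system reconciliation, is a good example of a workflow with a clear definition of "correct" output. The sketch below checks one line item across a purchase order, a goods receipt, and an invoice. The record types, field names, and the 1% price tolerance are assumptions chosen for illustration; real tolerances are a finance policy decision.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class OrderLine:
    # Used for both the purchase order line and the invoice line.
    sku: str
    quantity: int
    unit_price: float


@dataclass(frozen=True)
class ReceiptLine:
    # Goods receipts record what arrived, not what it cost.
    sku: str
    quantity: int


def three_way_match(
    po: OrderLine,
    receipt: ReceiptLine,
    invoice: OrderLine,
    price_tolerance: float = 0.01,  # assumed: 1% of the PO unit price
) -> List[str]:
    """Return a list of discrepancies; an empty list means the documents agree."""
    issues = []
    if not (po.sku == receipt.sku == invoice.sku):
        issues.append("sku mismatch")
    if receipt.quantity > po.quantity:
        issues.append("received quantity exceeds ordered quantity")
    if invoice.quantity > receipt.quantity:
        issues.append("invoiced quantity exceeds received quantity")
    if abs(invoice.unit_price - po.unit_price) > price_tolerance * po.unit_price:
        issues.append("unit price outside tolerance")
    return issues
```

An empty result can flow straight to payment, while any listed discrepancy gives the exception handler a specific reason to route on.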

How do AI agents integrate with SAP, Salesforce, and ServiceNow?

Production-grade enterprise AI agent platforms connect to ERP systems like SAP through APIs and native connectors that allow bidirectional read and write access. This means agents can query SAP for purchase order data, validate it against invoice data from another system, and create or update SAP records as part of an automated workflow — with full audit trails.

assistents.ai connects to 300+ enterprise systems including SAP, Salesforce, Oracle, ServiceNow, Workday, HubSpot, Slack, and Microsoft's suite. The platform's Action Engine executes multi-step workflows across connected systems with role-based permission enforcement at every step — meaning agents operate with exactly the access they need, nothing more.

The critical question when evaluating integration depth: does the agent read data from these systems, or does it also write to them? Read-only agents can generate insights and recommendations. Read-write agents with proper governance can execute the workflows those insights imply. The deployment outcomes described in this report came from platforms with genuine bidirectional integration.

What does an AI agent platform need to be enterprise-grade?

Based on the production deployments and evaluation criteria that enterprise procurement teams are applying in 2026, an enterprise-grade AI agent platform needs:

Architecture: A context engine that ingests live data from enterprise systems; a semantic layer that reasons across relationships between entities, not just individual records; and an action engine that executes with permission enforcement and audit trail generation at every step.

Compliance: SOC 2 Type II attestation, ISO 27001 certification, and GDPR and HIPAA compliance as a minimum baseline. These are not optional for deployments in financial services, healthcare, or any large-enterprise environment.

Integration breadth and depth: Connectivity to the systems where enterprise work actually happens — ERP, CRM, HRIS, ticketing, operational databases — with bidirectional access, not just read connections.

Governance tooling: Configurable autonomy tiers, role-based permission management, exception routing, and complete audit trail generation. Without these, deployment cannot scale past the pilot stage.

Deployment velocity: Pre-built agents for common enterprise use cases reduce time-to-value. The ability to extend with custom agent logic prevents hitting operational ceilings as deployment scope expands.

assistents.ai is built to this specification — deployed across 12 industries, on 6 continents, with a four-week production deployment model and the compliance stack required for regulated enterprise environments. See the platform or schedule a demo to see how it maps to your specific environment.
