Agentic AI in HR: 7 Agentic AI HR Use Cases That Require Full Context to Work in 2026

Here is the pattern playing out inside enterprises right now.

An AI agent is deployed to handle vendor payments. It has access to the ERP system. It can read invoice amounts and due dates. It executes exactly as instructed. And it approves ₹12 crore in early payments, violating contract terms and forfeiting negotiated discounts, because those terms lived in a SharePoint folder it was never connected to.

The agent did not fail. The foundation did.

The same failure pattern — an agent acting with confidence on 20% of the information it needed — plays out every day inside HR functions. Only here, the damage is measured in bad hires, compliance penalties, wrongful termination exposure, and employee churn that nobody can trace back to a root cause.

This is the central problem with agentic AI in HR in 2026. Not that the models are bad. Not that the use cases are unclear. The problem is that most AI HR agents are flying blind: executing at machine speed on a fraction of the context they need to make decisions that are safe, governed, and actually correct.

This guide breaks down what full-context agentic AI in HR looks like, why it matters now, and what enterprise HR leaders need to demand from any platform they evaluate.

What "Agentic AI in HR" Actually Means (And What It Doesn't)


Before evaluating any AI HR agent, it is worth being precise about the term — because most of what is marketed as "agentic AI in HR" is not agentic at all.

Enterprise AI maturity sits across five levels:

- Level 1 — Descriptive: What happened? (Reporting dashboards)

- Level 2 — Diagnostic: Why did it happen? (Root cause analysis)

- Level 3 — Predictive: What will happen? (Attrition risk scores, headcount forecasts)

- Level 4 — Prescriptive: What should we do? (Copilots, recommendations)

- Level 5 — Agentic: Handle this. (Autonomous execution with governance)

The majority of "AI in HR" tools — including most platforms marketed as agentic — operate at Levels 3 and 4. They surface insights. They make recommendations. But a human is still required to act on every one of them.

True agentic AI in HRM is Level 5. The system identifies the issue, evaluates available options, executes the workflow, routes approvals where required, logs every decision with a policy citation, and improves over time.

The difference is not incremental. The jump from Level 4 to Level 5 is the difference between an AI that tells you an employee is at flight risk and an AI that identifies the employee, reviews their last six months of performance data, cross-references their compensation against current market benchmarks, reads the manager's last three 1:1 notes, drafts a retention action with two options, routes it to the line manager for a one-click approval, and logs the entire decision chain for compliance review.

That is what a genuine AI HR agent does. And it only works if the agent can see the full picture.

The 80% Blind Spot That Makes AI HR Agents Dangerous

Here is the data problem at the core of every failed AI HR deployment.

Structured data (the kind that lives in HRIS fields, ATS status records, payroll tables, and leave logs) represents roughly 10 to 20% of the actual context an HR function runs on. The remaining 80% or more lives in formats that most AI tools cannot read, correlate, or reason across:

What structured HR systems see (the 20%):

- Employee records in HRIS platforms

- ATS candidate status fields

- Payroll figures and leave balances

- Performance ratings in review cycles

- Benefits enrollment data

What they cannot see (the 80%):

- Offer letter PDFs with custom clauses, non-standard notice periods, and individually negotiated terms

- Email threads where a hiring manager clarified what they actually needed in a role — after the job description was posted

- Policy handbooks updated six months ago but not yet reflected in the HRIS

- Exit interview transcripts that reveal why three people left the same team in one quarter

- Slack conversations during onboarding where a new hire flagged a problem that nobody escalated

- Compliance documents governing employment law in specific geographies

- Manager notes from 1:1s that explain context no performance rating can capture

- Salary negotiation correspondence that changes what the compensation record actually means

An AI HR agent that operates on the 20% is not just less effective. It is executing wrong decisions faster than any human team can catch and correct them.

This is not a theoretical risk. Across enterprise AI deployments spanning retail, healthcare, financial services, and logistics, the same pattern emerges: agents fail not because the underlying model is poor, but because the context layer was incomplete. Deloitte's research confirms it. Gartner's projections point the same way: it expects over 40% of agentic AI projects to be cancelled by the end of 2027, citing escalating costs, unclear business value, and inadequate risk controls, problems that incomplete context makes harder to control.

In HR, where decisions affect people's livelihoods, compliance obligations are stringent, and errors can compound invisibly across hundreds or thousands of employees, the cost of a blind AI agent is disproportionately high.

This is the problem Assistents was built to solve. Not agentic AI that sees your HRIS and calls it context — but agents that read your contracts, your policies, your emails, and your compliance documents alongside your structured data, and act on all of it.

If you want to see what your current HR agents cannot see, a 48-hour assessment will show you the gap.

7 Agentic AI HR Use Cases That Require Full Context to Work

Each of the following use cases is genuinely transformative when the agent has access to complete context. Each one becomes a liability when it does not. The distinction is the entire point.

1. Talent Acquisition and Candidate Screening

The use case sounds straightforward: an AI HR agent scores candidate applications against job requirements and surfaces ranked shortlists for hiring managers.

The context gap: the agent reads the posted job description. It does not read the email thread from three weeks ago where the hiring manager told the recruiter that the technical requirement was secondary and cultural alignment with the team was the actual priority. It does not read the previous hire's exit interview where a pattern in the team dynamic was flagged.

Full-context execution: the agent ingests the job description, the hiring manager's email communications, historical team performance data, and prior role outcome records. It scores candidates against what the role actually requires — not just what was formally documented.

2. Onboarding Automation

The use case: an agent triggers IT provisioning, sends welcome materials, schedules orientation sessions, and monitors completion of onboarding tasks.

The context gap: the agent sees the start date and the standard onboarding template. It cannot read the custom employment contract that specifies a 90-day probation review instead of the standard 60-day process, or the addendum that grants a specific tool access not included in the default provisioning workflow.

Full-context execution: the agent reads the actual employment contract, cross-references it against standard policy, identifies the deviations, and adjusts the onboarding workflow accordingly — automatically, without a human having to manually flag the exception.
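The deviation check described above can be sketched as a comparison between contract terms and the standard policy. This is a minimal illustration, assuming the contract and its addendum have already been parsed into key/value terms. The field names (`probation_review_days`, `tool_access`), the values, and the function `find_deviations` are hypothetical and do not describe any specific platform.

```python
from typing import Any

# Hypothetical standard onboarding policy (values are illustrative).
STANDARD_POLICY: dict[str, Any] = {
    "probation_review_days": 60,
    "tool_access": ["email", "hris_portal"],
}


def find_deviations(contract_terms: dict[str, Any],
                    standard: dict[str, Any]) -> dict[str, tuple[Any, Any]]:
    """Return {term: (standard_value, contract_value)} for every term that differs."""
    deviations = {}
    for term, contract_value in contract_terms.items():
        standard_value = standard.get(term)
        if contract_value != standard_value:
            deviations[term] = (standard_value, contract_value)
    return deviations


# Terms assumed to have been extracted from the signed contract and addendum.
contract = {
    "probation_review_days": 90,
    "tool_access": ["email", "hris_portal", "design_suite"],
}

deviations = find_deviations(contract, STANDARD_POLICY)
for term, (standard_value, contract_value) in deviations.items():
    print(f"{term}: standard={standard_value!r}, contract={contract_value!r}")
```

Each reported deviation is something the onboarding workflow would need to adjust for, such as scheduling the probation review at 90 days rather than 60.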

A healthcare staffing platform that deployed full-context agentic AI across its talent onboarding workflows saw fill cycles shorten and credentialing accuracy improve, because the agent could read regulatory compliance documents, facility-specific requirements, and candidate credential records together rather than processing each in isolation.

3. Workforce Scheduling and Compliance

The use case: an AI agent manages shift scheduling, identifies coverage gaps, and allocates staff to meet operational demand.

The context gap: the agent sees shift availability data and current headcount. It cannot read the compliance PDF governing mandatory rest periods between shifts in specific geographies, or the union agreement that restricts certain scheduling patterns for a subset of employees.

Full-context execution: the agent reads scheduling data alongside the compliance documentation and any applicable employment agreements. Every schedule it generates is compliant by construction — not checked for compliance after the fact.
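One way to read "compliant by construction" is that the rest-period rule filters candidates before any assignment is made, instead of auditing the finished schedule. The sketch below assumes the minimum rest period per employee has already been extracted from the relevant regulation or agreement. The names `Shift`, `respects_rest_period`, and `eligible_staff`, and the hour values, are illustrative assumptions.

```python
from datetime import datetime, timedelta
from typing import NamedTuple


class Shift(NamedTuple):
    start: datetime
    end: datetime


def respects_rest_period(existing: list[Shift], candidate: Shift,
                         min_rest: timedelta) -> bool:
    """True if the candidate leaves at least min_rest around every existing shift."""
    for shift in existing:
        if candidate.start >= shift.end:
            gap = candidate.start - shift.end
        elif shift.start >= candidate.end:
            gap = shift.start - candidate.end
        else:
            return False  # overlapping shifts are never allowed
        if gap < min_rest:
            return False
    return True


def eligible_staff(rosters: dict[str, list[Shift]], candidate: Shift,
                   min_rest_by_staff: dict[str, timedelta]) -> list[str]:
    """Only staff whose rest-period rule is satisfied are offered the shift."""
    return [
        name for name, shifts in rosters.items()
        if respects_rest_period(shifts, candidate, min_rest_by_staff[name])
    ]


rosters = {
    "asha": [Shift(datetime(2026, 1, 5, 14), datetime(2026, 1, 5, 22))],
    "ben": [Shift(datetime(2026, 1, 5, 6), datetime(2026, 1, 5, 14))],
}
# Illustrative rules: an 11-hour default and a 12-hour rule from an agreement.
min_rest = {"asha": timedelta(hours=11), "ben": timedelta(hours=12)}
morning = Shift(datetime(2026, 1, 6, 6), datetime(2026, 1, 6, 14))
print(eligible_staff(rosters, morning, min_rest))  # prints ['ben']
```

Because ineligible staff never reach the assignment step, a non-compliant schedule cannot be produced by this path. A real scheduler would add coverage and fairness logic on top.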

4. HR Policy Q&A and Employee Support

The use case: an AI agent answers employee questions about HR policies — leave entitlements, benefits, reimbursement processes, disciplinary procedures.

The context gap: the agent retrieves answers from the HRIS. It does not know that the parental leave policy was updated by a PDF circulated to all managers in October, that the reimbursement cap was revised in a memo sent to finance leads, or that the disciplinary procedure it is citing was superseded by a new framework three months ago.

Full-context execution: the agent answers from a unified context layer that fuses HRIS data, the current policy document library, and any superseding amendments — and flags when its answer is based on a recent update that may not yet be reflected in system records.
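The "superseding amendment" behaviour can be sketched as picking the most recent policy version in force and flagging when that version did not come from the HRIS. This is an assumption-laden illustration: `PolicyVersion`, `resolve_policy`, the dates, and the leave durations are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class PolicyVersion:
    topic: str
    text: str
    effective: date
    source: str  # "HRIS" or the name of an amendment document


def resolve_policy(topic: str, versions: list[PolicyVersion], today: date):
    """Return (version_in_force, needs_flag).

    needs_flag is True when the version in force did not come from the HRIS,
    meaning the system record is probably stale.
    """
    in_force = [v for v in versions if v.topic == topic and v.effective <= today]
    if not in_force:
        raise LookupError(f"no policy found for {topic!r}")
    current = max(in_force, key=lambda v: v.effective)
    return current, current.source != "HRIS"


versions = [
    PolicyVersion("parental_leave", "16 weeks", date(2024, 1, 1), "HRIS"),
    PolicyVersion("parental_leave", "20 weeks", date(2025, 10, 1),
                  "October manager circular (PDF)"),
]

answer, stale_record = resolve_policy("parental_leave", versions, date(2025, 11, 15))
print(answer.text, "| from:", answer.source, "| flag HRIS as stale:", stale_record)
```

The flag is what lets the agent tell the employee that its answer rests on a recent update the system of record has not yet absorbed.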

5. Performance Management and Retention

The use case: an agent identifies employees at attrition risk using engagement scores, performance ratings, and tenure data — and prompts managers to act.

The context gap: the engagement score is red. The performance rating is average. The agent flags flight risk. What it cannot see is the manager's note from last week's 1:1 explaining that the employee recently took on caring responsibilities that have temporarily affected their output, and that a flexible working arrangement has already been agreed informally.

Full-context execution: the agent incorporates structured engagement and performance data alongside manager notes, 1:1 records, and any informal agreements captured in email or documentation — surfacing a retention risk assessment that reflects reality, not just the data fields.

6. Payroll and Benefits Exception Handling

The use case: an AI agent processes payroll exceptions, flags anomalies, and routes corrections for approval.

The context gap: this is the ₹12 crore problem, applied to payroll. An agent sees the payroll record and the exception trigger. It approves a correction. It cannot see the email thread where a salary adjustment was agreed under different terms, or the benefits addendum that changes how a specific allowance should be calculated.

Full-context execution: before processing any payroll exception, the agent reads the relevant contract terms, any correspondence related to the employee's compensation, and the current benefits policy — then acts on the complete picture.

7. Compliance and Audit Readiness

The use case: an agent generates compliance reports, tracks required documentation, and flags gaps before audits.

The context gap: the agent reports what the system records show. It cannot see the email where a line manager granted a policy exception informally, the document where a non-standard process was approved by a senior leader, or the communication that changed a standard procedure for a specific business unit.

Full-context execution: the compliance agent reads across structured HR records and the unstructured documentation layer — surfacing not just what the system says happened, but what actually happened, including exceptions and informal decisions that need to be formally recorded before an audit.

A financial services organisation that deployed full-context agentic AI for compliance workflows achieved auditability across omnichannel operations with full policy citations on every decision — moving from reactive compliance reviews to continuous, governed compliance execution.

Why HR Is the Highest-Risk Department for Blind Agentic AI

Every department suffers when AI agents act on incomplete context. HR suffers more than most — for three specific reasons.

Regulatory exposure is high and varied. Employment law differs by geography, changes frequently, and is not always reflected in HRIS configuration. An agent that enforces the documented policy without reading the actual legislative requirement is a compliance liability in every jurisdiction it operates across.

HR decisions are frequently irreversible. A purchasing agent that approves the wrong invoice creates a correctable financial error. An HR agent that scores a candidate incorrectly, triggers a termination workflow prematurely, or sends an incorrect offer letter creates consequences that are expensive to reverse, damaging to relationships, and in some cases legally actionable.

Trust destruction compounds invisibly. Employees do not forgive AI errors the way they tolerate software bugs. An onboarding agent that enforces the wrong policy, a performance system that generates an inaccurate record, or a scheduling agent that violates an agreed arrangement damages employee trust in ways that do not appear in system logs but do appear in exit interviews.

This is why governance is not optional in HR AI deployments. It is the non-negotiable foundation. Every decision made by an AI HR agent needs to be auditable, policy-cited, and defensible, not only because compliance requires it, but because the cost of ungoverned autonomy in HR is uniquely high.

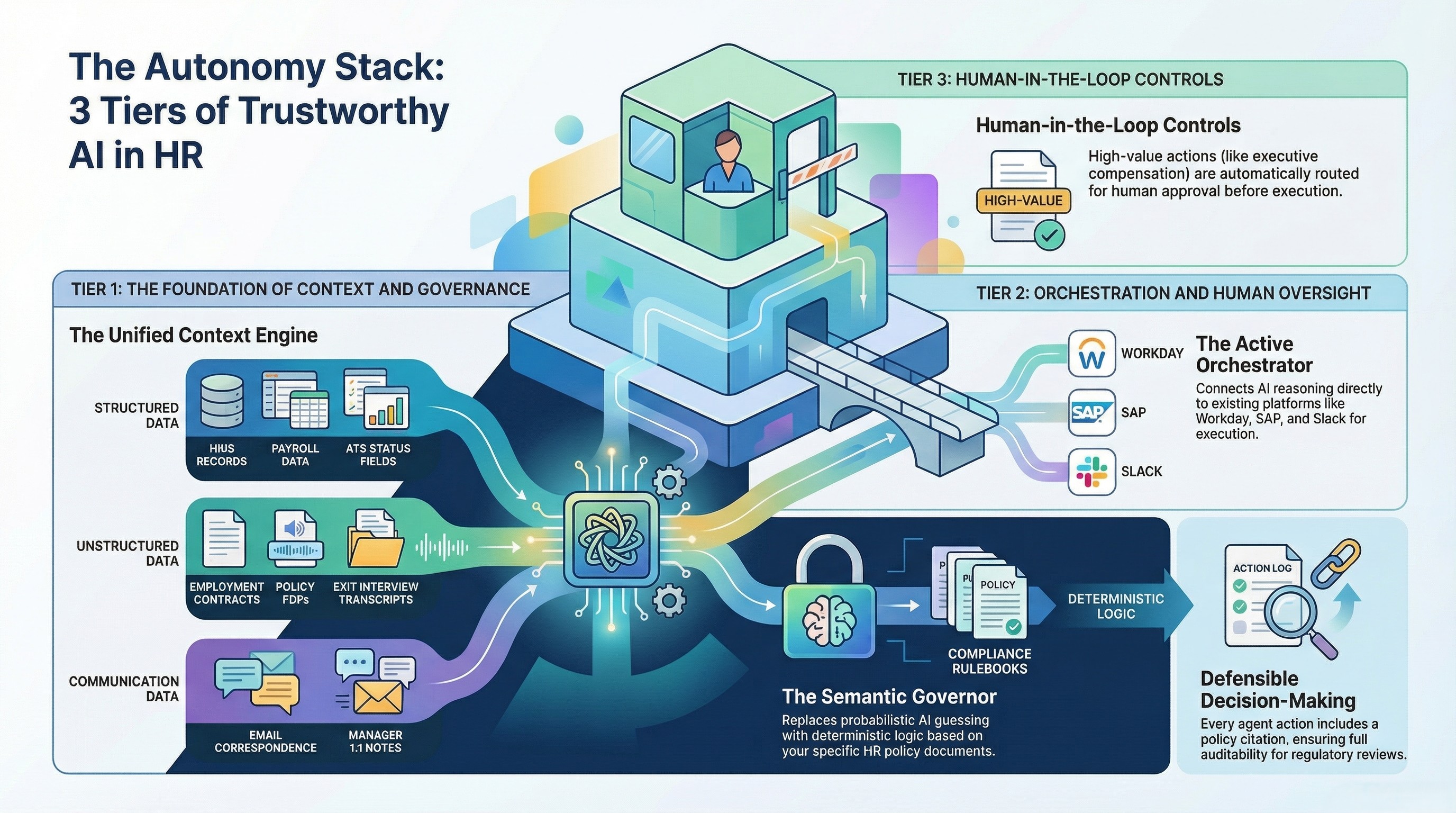

The Autonomy Stack — Applied to HR

Full-context agentic AI in HR is not a single-layer technology. It is built on three interdependent tiers, each solving a distinct problem that the others cannot compensate for.

Tier 1: Unified Context Engine

The problem it solves: The 80% blind spot.

The Context Engine fuses every data source the HR function runs on — structured and unstructured, real-time and historical — into a single semantic layer that agents can reason across simultaneously.

In HR, this means:

- HRIS records, payroll data, ATS status fields, and benefits data (structured)

- Offer letter PDFs, employment contracts, and addenda (unstructured)

- Policy documents and their revision history (unstructured)

- Email correspondence related to compensation, scheduling, and performance (unstructured)

- Manager notes and 1:1 records (unstructured)

- Compliance documentation by geography (unstructured)

- Exit interview transcripts and feedback data (unstructured)

When the Context Engine is in place, an AI HR agent does not answer from what the HRIS says. It answers from everything the HR function knows — including everything that has never lived in a structured system.

Tier 2: Semantic Governor

The problem it solves: The trust problem.

Reasoning capability without governance is not enterprise-grade AI. It is a liability that executes with confidence.

The Semantic Governor encodes your HR-specific rules as deterministic logic — not probabilistic inference. This means:

- Approval hierarchies: any offer above a salary threshold automatically routes to the CHRO, regardless of which recruiter initiated it

- Compliance thresholds: no schedule is generated that violates the minimum rest period defined in the applicable employment agreement

- Escalation trees: any performance action flagged as potential disciplinary automatically routes for legal review before the agent proceeds

- Exception handling: when the agent encounters a situation not covered by existing rules, it escalates rather than guesses

Every decision the agent makes carries a policy citation. Every action is logged with the rule that triggered it. The output is not just auditable — it is defensible in a regulatory review or employment tribunal.

No hallucinations. No black boxes. Every HR decision the agent makes can be read back as: "This action was taken because Rule X required it, based on Policy Document Y, version dated Z."
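A deterministic rule layer of this kind can be sketched in a few lines. The example below is an illustration under stated assumptions, not the Semantic Governor's actual implementation: the `Rule` and `Decision` types, the rule IDs, document names, and salary threshold are all hypothetical. It shows the two properties the text describes, namely a citation attached to every rule-driven decision and escalation when no rule applies.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Rule:
    rule_id: str
    policy_doc: str
    policy_version: str
    applies: Callable[[dict], bool]  # deterministic predicate over the request
    action: str


@dataclass(frozen=True)
class Decision:
    action: str
    citation: Optional[str]


def decide(request: dict, rules: list[Rule]) -> Decision:
    """Apply the first matching rule; escalate if nothing matches."""
    for rule in rules:
        if rule.applies(request):
            citation = (f"Rule {rule.rule_id}, based on {rule.policy_doc}, "
                        f"version dated {rule.policy_version}")
            return Decision(rule.action, citation)
    # No rule covers this situation: escalate rather than guess.
    return Decision("escalate_to_human", None)


# Hypothetical rules; the threshold and document names are illustrative.
RULES = [
    Rule("OFFER-01", "Compensation Approval Policy", "2025-10-01",
         lambda r: r.get("type") == "offer" and r["salary"] > 5_000_000,
         "route_to_chro"),
    Rule("OFFER-02", "Compensation Approval Policy", "2025-10-01",
         lambda r: r.get("type") == "offer",
         "send_offer"),
]

high_offer = decide({"type": "offer", "salary": 7_500_000}, RULES)
unknown = decide({"type": "relocation_request"}, RULES)
print(high_offer.action, "|", high_offer.citation)
print(unknown.action, "|", unknown.citation)
```

Rule order matters in this sketch: the more specific threshold rule is listed first so that it wins over the general offer rule.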

Tier 3: Active Orchestrator

The problem it solves: The execution gap.

The Orchestrator connects the agent's reasoning to the systems that need to act. In HR, this means native integration with the platforms your function already uses: SAP SuccessFactors, Workday, ServiceNow, BambooHR, Greenhouse, Slack, email, payroll systems, and benefits platforms.

Human-in-the-loop controls are set at the workflow level, not the platform level:

- Standard offer letter for a mid-level role → agent sends automatically

- Offer above executive compensation threshold → agent drafts, routes for CHRO approval, sends on confirmation

- Payroll correction below defined value → agent processes automatically with audit log

- Payroll correction above defined value → agent flags, routes to finance lead, documents decision
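Workflow-level thresholds like these can be expressed as a small per-workflow configuration rather than one global setting. The sketch below assumes each workflow has a single monetary limit and a named approver. `WorkflowPolicy`, `route`, the limits, and the role names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class WorkflowPolicy:
    auto_limit: Optional[float]  # None means the agent never acts without approval
    approver: str


# Hypothetical per-workflow settings; amounts and roles are illustrative.
POLICIES = {
    "offer_letter": WorkflowPolicy(auto_limit=3_000_000, approver="CHRO"),
    "payroll_correction": WorkflowPolicy(auto_limit=50_000, approver="finance_lead"),
}


def route(workflow: str, amount: float) -> str:
    """Decide whether the agent executes or holds for a human approver."""
    policy = POLICIES.get(workflow)
    if policy is None:
        return "escalate: no policy configured"
    if policy.auto_limit is not None and amount <= policy.auto_limit:
        return "execute automatically (audit logged)"
    return f"hold for approval by {policy.approver}"


print(route("payroll_correction", 12_000))
print(route("payroll_correction", 400_000))
print(route("offer_letter", 9_000_000))
```

Keeping the limits in data rather than in agent prompts means each workflow's oversight level can be changed and audited independently.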

The agent executes across your existing HR tech stack without requiring any platform to be replaced. It orchestrates what you already have — giving it the context it was missing.

The enterprises getting agentic AI right in 2026 are not the ones with the best models. They are the ones that solved the context problem first. Assistents exists for exactly this — full-context, governed AI agents that HR functions can trust to execute, not just advise. See it in your environment within 30 days.

Real Enterprise Evidence — What Full-Context HR Agents Deliver

The case for full-context agentic AI in HR is not theoretical. Across deployments in healthcare staffing, large-scale retail operations, global logistics, financial services, and professional services, the pattern is consistent: organisations that give their agents full context see qualitatively different outcomes from those operating with partial data.

A large-scale retail operation with hundreds of locations across a major market deployed AI agents for store support, inventory intelligence, and staff training access. The agents operated across point-of-sale data, inventory records, and, critically, the SOPs, policy documents, and training materials that had previously been accessible only to managers or through manual lookup. The result was zero-training execution at store level: agents could answer staff questions, flag inventory anomalies, and surface the correct policy for any situation without a human intermediary. The system scaled across the entire store network from day one because the Context Engine made every piece of institutional knowledge available to the agent regardless of where it was stored.

A healthcare staffing platform connecting clinical professionals with facilities automated its talent matching, scheduling, and compliance workflows using full-context agents. The agents could read credentialing documents, facility-specific compliance requirements, and regulatory guidelines simultaneously — matching candidates not just on availability but on the full compliance picture. The outcome was faster fill cycles, better credential accuracy, and reduced scheduling friction, all while maintaining the compliance standards that manual processes struggled to guarantee at volume.

A global financial services provider deployed omnichannel AI agents for customer and operational support with full auditability requirements. Every agent decision carried a policy citation. Every escalation followed a deterministic rule. The result was compliance-ready operations at a scale that manual review could not have matched, with SLA automation that reduced operational load while improving consistency.

A multi-entity logistics operation achieved a single operational view across geographies by fusing structured reporting data with unstructured operational communications. Agents could surface leadership intelligence, flag exceptions, and execute workflows across business units that had previously operated in data silos — reducing the time from signal to action from weeks to hours.

The thread connecting all of these: the agents did not work because the underlying models were exceptional. They worked because the context layer was complete.

How to Evaluate Any Agentic AI Platform for HR — 8 Questions to Ask

When assessing an AI HR agent platform, these eight questions separate context-complete solutions from those that will fail in production.

1. Can the agent read unstructured HR data — offer letters, policy PDFs, email threads — not just structured HRIS fields? If the answer is "it connects to your HRIS," that is a no. A genuine full-context platform fuses structured and unstructured data into a unified semantic layer before any agent reasoning begins.

2. Does the governance layer encode HR-specific rules deterministically, or does it rely on the LLM to infer the correct policy? Probabilistic governance in HR is not governance. Ask to see how a specific employment law requirement or compensation threshold is encoded — as a rule, not as a prompt instruction.

3. Is every agent decision auditable with a policy citation, not just an output log? An output log tells you what the agent did. A policy citation tells you why — and gives you a defensible record for compliance review or legal challenge.

4. Can human-in-the-loop thresholds be configured at the workflow level, not just globally? Different HR workflows carry different risk levels. A platform that applies the same oversight model to a standard leave request and an executive compensation decision is not fit for enterprise HR.

5. Does the agent maintain context across multi-step HR workflows, or does it reset between interactions? A candidate screening process, an onboarding journey, or a disciplinary workflow spans days or weeks and involves multiple systems. An agent that loses context between steps is producing decisions in isolation.

6. Can it integrate with your existing HRIS, ATS, and payroll systems without replacing them? Any platform that requires rip-and-replace of your core HR systems is adding twelve months of deployment risk before the first agent runs. The right architecture orchestrates what you have.

7. What happens when the agent encounters an exception — does it escalate correctly or execute incorrectly? Ask for a live demonstration of an edge case: an employment contract clause that contradicts standard policy, a scheduling request that conflicts with a compliance rule, a payroll exception outside defined parameters. The response to that scenario tells you more than the standard demo.

8. How does the platform handle GDPR and employment data compliance within the context layer itself? Fusing unstructured data across HR systems creates new data governance obligations. The platform needs to demonstrate how employee data is handled, retained, and protected within the context engine — not just at the application layer.

From HR Pilot to Production — The 30-Day Path

The failure mode most organisations encounter with agentic AI in HR is the pilot trap: a proof of concept that works beautifully in a controlled environment and collapses when it meets the complexity of real operational data.

The reason is almost always the same. The pilot was seeded with clean, structured, purpose-built data. Production means messy PDFs, email threads, legacy system exports, policy documents in twelve different formats, and contract clauses that nobody has catalogued.

A full-context deployment process addresses this directly:

Week 1 — Discovery and workflow mapping. Identify the highest-value HR workflow to automate first. Map every data source the workflow actually depends on — including the unstructured sources that the existing system cannot access. Define the governance rules: what decisions the agent can make autonomously, what requires human approval, and what must always escalate.

Weeks 2 to 4 — Context engine, governance layer, and first agent. The Context Engine is configured to fuse the identified data sources. The Semantic Governor encodes the defined rules. The first agent is built, tested against real workflow scenarios including exceptions, and validated against compliance requirements.

Day 30 — Live governed agent in production. Not a demo. Not a pilot. A production-grade, governed agent executing real HR workflows with full audit trails.

No platform replacement required. The Orchestrator connects to the HR systems already in use. The agents run on top of the existing stack — giving it the context and governance layer it was missing.

The One Thing to Get Right

Most of the debate in enterprise HR AI circles focuses on the wrong question. The question is not which AI model to use, which HR platform to integrate with, or which use case to start with.

The question is: what can the agent actually see?

An agent that can reason brilliantly but only across 20% of the context it needs is not a productivity multiplier. It is an error multiplier. It executes wrong decisions faster than any human team can intercept them, and it does so with a confidence score attached.

Full-context agentic AI in HR means giving every agent the complete picture — structured records and unstructured documents, real-time data and historical communications, formal policies and the informal exceptions that modify how those policies actually operate.

Your HR agents should not guess at what the policy says. They should know — because they have read it.

So, if your HR function is ready to move beyond dashboards and recommendations — to agents that actually execute, with full context and full governance — Assistents is where that journey starts. Not a pilot that collapses in production. Not another layer on top of a broken data foundation.

A governed agent, live in 30 days, built on everything your HR function already knows. Book a 48-hour assessment and see exactly what your agents are missing.

Frequently Asked Questions

What is agentic AI in HR?

Agentic AI in HR refers to AI systems that autonomously identify issues, evaluate options, execute multi-step workflows, and route approvals within HR functions, without requiring a human to act on every recommendation. Unlike AI assistants or copilots that advise and wait, AI agents act. True agentic AI in HRM combines reasoning, execution, and governance across the full context of an HR function's data.

How is an AI HR agent different from an HR chatbot?

An HR chatbot answers questions from a predefined knowledge base. An AI HR agent executes workflows, integrates with live systems, makes governed decisions, routes approvals, and takes actions — such as updating records, triggering onboarding steps, or processing payroll exceptions — autonomously. The fundamental difference is execution capability combined with governance, not just conversational ability.

What HR workflows can agentic AI automate?

Agentic AI in HR can automate talent acquisition screening, onboarding workflow orchestration, workforce scheduling with compliance validation, policy Q&A and employee support, performance management alerting and retention actions, payroll and benefits exception handling, and compliance documentation and audit readiness. Each workflow requires full-context access to both structured HR system data and unstructured documents to operate safely at scale.

Why do AI agents fail in HR environments?

AI agents fail in HR primarily because they act on incomplete context. Most AI platforms access structured HRIS data, which represents 10 to 20% of the information an HR function actually operates on. The remaining 80% or more lives in offer letter PDFs, policy documents, email correspondence, manager notes, and compliance documentation that structured systems cannot read. Agents operating on partial context make decisions that are technically correct based on what they can see, and operationally wrong based on what they cannot.

What data does an AI HR agent need to work effectively?

An effective AI HR agent needs access to structured HR data (HRIS records, ATS fields, payroll data, leave balances, performance ratings) and unstructured data (offer letter PDFs, employment contracts, policy documents, compliance guidelines, manager correspondence, exit interview records, and any communications that modify how documented policies actually apply). A unified context engine that fuses both data types is the prerequisite for safe, governed agentic AI in HRM.

How does agentic AI in HR handle compliance and governance?

Compliance and governance in agentic HR AI are managed through a deterministic governance layer (sometimes called a Semantic Governor) that encodes HR-specific business rules, approval hierarchies, compliance thresholds, and escalation logic. Every agent decision is made against this rule layer, producing auditable outputs with policy citations rather than probabilistic inferences. This means every action can be reviewed, justified, and defended in a compliance or legal context.

Can agentic AI in HR integrate with Workday or SAP SuccessFactors?

Yes. Agentic AI platforms built on an orchestration architecture integrate with existing HRIS platforms including Workday, SAP SuccessFactors, BambooHR, Greenhouse, ServiceNow, and other enterprise HR systems. The agents operate on top of existing infrastructure without requiring platform replacement — extending the intelligence and execution capability of systems already in use rather than replacing them.

How long does it take to deploy an agentic AI solution for HR?

A governed, production-ready agentic AI HR deployment can be live within 30 days for the first workflow. Week one covers discovery and workflow mapping. Weeks two through four cover context engine configuration, governance rule encoding, and agent build. Day 30 delivers a live agent in production — not a pilot or proof of concept, but an operational system executing real HR workflows with full audit trails and governance controls.
