Agentic AI in Clinical Data Management: How Context-Complete Agents Are Replacing Dashboards With Governed Execution in 2026

Sixty-two percent of enterprises are now experimenting with AI agents. Only 14 percent have anything close to a production-ready deployment. That gap, confirmed independently by Deloitte, McKinsey, and Gartner, is not a technology problem. It is a context problem. And nowhere is the context problem more dangerous than in clinical data management.

Clinical environments generate some of the most complex, multi-format, high-stakes data in any enterprise. Electronic health records capture structured fields — patient demographics, billing codes, scheduling entries, lab values.

But the real clinical picture lives in physician notes dictated between rounds, referral letters faxed from outside facilities, insurance pre-authorization emails, discharge summaries saved as PDFs, consent forms scanned into document management systems, and compliance protocols buried in policy wikis. By most industry estimates, 70 to 85 percent of clinical data is unstructured.

When an AI agent in a clinical setting makes a decision — routing a patient inquiry, flagging a billing exception, triggering a staffing adjustment, escalating a compliance alert — it is acting on whatever data it can see. If that agent can only see the structured 20 percent, it is making clinical and operational decisions on an incomplete picture. Not because the model is flawed. Because the context is.

This is the core problem agentic AI must solve in clinical data management. Not smarter models. Not better prompts. Complete context, governed execution, and an audit trail for every autonomous action.

Agentic AI in clinical data management refers to AI agents that autonomously detect, reason, and execute across clinical workflows using not just structured EHR data but the full context of patient records, compliance documents, and operational signals, with deterministic governance at every step.

Gartner has projected that more than 40 percent of agentic AI projects will be cancelled by the end of 2027. In clinical environments, where incomplete context does not just cost money but creates compliance exposure and care-quality risk, that failure rate carries far heavier consequences. This guide breaks down why clinical AI agent projects fail, what production-ready agentic AI actually looks like in healthcare, and how clinical organizations can move from pilot to governed production in 30 days.

Why Clinical Data Management Is the Hardest Problem for AI Agents

Clinical data management has always been one of the most challenging domains in enterprise technology. The reason is not volume alone — although clinical environments generate enormous quantities of data daily. The reason is the nature of that data and the consequences of acting on it incorrectly.

Consider what a single patient journey produces. There are structured entries in the EHR: demographics, diagnoses, procedures, billing codes, medication orders, lab results. These live in relational databases and are accessible to traditional BI tools and most AI platforms.

But alongside those structured records, the same patient journey generates referral letters from external physicians (often as faxed PDFs), insurance pre-authorization correspondence (emails and portal messages), clinical notes dictated by attending physicians (free-text, often with abbreviations and contextual shorthand), imaging reports and pathology results (semi-structured documents), consent forms and advance directives (scanned documents), and internal communications between care teams about treatment decisions, family concerns, or discharge planning.

Traditional clinical data management platforms operate almost exclusively on the structured slice. EHR analytics dashboards, billing intelligence tools, and population health platforms are optimized for relational data — they answer "what happened" with precision but cannot answer "why" or "what should we do next" because those answers require reasoning across the unstructured 80 percent.

This is where the promise of agentic AI meets the reality of clinical complexity. An AI agent tasked with automating patient intake workflows needs to see the referral PDF, the insurance status, the clinical history, and the scheduling availability simultaneously. An agent monitoring revenue cycle performance needs to cross-reference billing codes with physician documentation, payer contracts, and denial correspondence. An agent managing staffing decisions needs to see credential records, compliance requirements, facility requests, and scheduling constraints in a unified view.

When any of those data sources is invisible to the agent, the agent does not pause and ask for help. It acts on what it can see. In clinical settings, that is not just an efficiency problem — it is a compliance risk, a care-quality risk, and an audit liability.
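One way to keep an agent from acting on a partial picture is to make context completeness an explicit precondition. The sketch below is a minimal, hypothetical illustration: it assumes each workflow declares the sources it needs (here, the four named in the intake example above) and that the agent escalates whenever one is missing. The names `REQUIRED_SOURCES` and `check_context` are invented for illustration and are not part of any product API.

```python
# Hypothetical sketch: treat missing context as a reason to escalate,
# not as something to silently work around.
REQUIRED_SOURCES = {
    "patient_intake": {"referral_document", "insurance_status",
                       "clinical_history", "scheduling_availability"},
}


def check_context(workflow: str, available: dict) -> tuple[str, set]:
    """Return ("proceed", set()) if every required source is present,
    otherwise ("escalate", missing_sources)."""
    required = REQUIRED_SOURCES[workflow]
    # A source counts as present only if it was fetched and is non-empty.
    present = {name for name, value in available.items() if value}
    missing = required - present
    return ("escalate", missing) if missing else ("proceed", set())


if __name__ == "__main__":
    context = {
        "insurance_status": "active",
        "clinical_history": ["hypertension"],
        "scheduling_availability": ["2026-03-02T09:00"],
        "referral_document": None,  # the faxed PDF was never ingested
    }
    print(check_context("patient_intake", context))
    # ('escalate', {'referral_document'})
```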

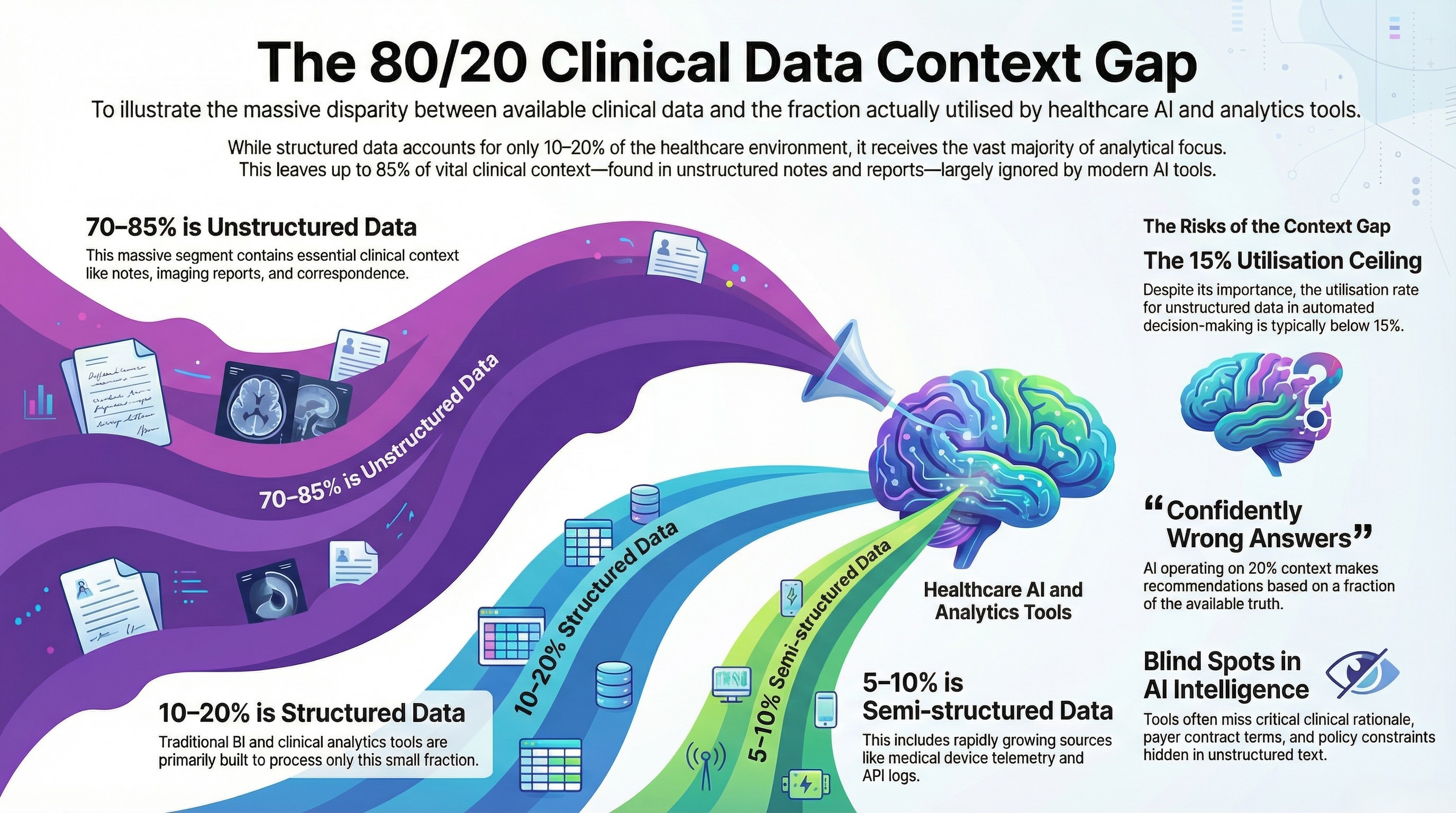

The 80/20 Data Problem in Healthcare

The data reality in healthcare mirrors what we see across every enterprise sector, but the stakes amplify the problem dramatically.

Structured data — the kind that lives in EHR databases, billing systems, scheduling platforms, and lab information systems — represents roughly 10 to 20 percent of the total clinical data environment. This is the data that traditional BI tools, reporting platforms, and most "AI-powered" clinical analytics products are built to process.

Semi-structured data — HL7 and FHIR feeds, API logs, device telemetry from connected medical equipment, and system event logs — accounts for another 5 to 10 percent. This data is growing rapidly with the expansion of connected health devices and interoperability mandates, but most clinical analytics tools still treat it as an afterthought.

Unstructured data — clinical notes, discharge summaries, imaging reports, pathology narratives, referral letters, insurance correspondence, consent forms, policy documents, and internal communications — represents 70 to 85 percent of the clinical data environment. This is where the majority of clinical context actually lives.

The gap between what is available and what is actually used in clinical analytics and decision-making is staggering. Most clinical BI platforms achieve high utilization of structured data — that is what they were designed for. But the utilization rate for unstructured clinical data in analytics and automated decision-making is typically below 15 percent. The semi-structured layer is slightly better utilized in organizations with mature interoperability infrastructure, but still far below its potential.
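The combined effect of these shares and utilization rates can be estimated with simple weighted arithmetic. The sketch below uses the midpoints of the ranges quoted above for each layer's share of the environment and the 15 percent ceiling for unstructured utilization. The 90 percent structured and 30 percent semi-structured utilization rates are illustrative assumptions, not measurements.

```python
# Illustrative estimate of how much of the total clinical data environment
# an analytics stack actually uses. Shares are midpoints of the ranges in
# the text; the structured and semi-structured utilization rates are
# assumptions, and the unstructured rate uses the "below 15 percent" ceiling.
LAYERS = {
    # layer: (share of total environment, utilization rate)
    "structured": (0.15, 0.90),
    "semi_structured": (0.075, 0.30),
    "unstructured": (0.775, 0.15),
}


def effective_coverage(layers: dict) -> float:
    """Weighted share of the whole environment that is actually used."""
    total_share = sum(share for share, _ in layers.values())
    if abs(total_share - 1.0) > 1e-9:
        raise ValueError(f"layer shares must sum to 1, got {total_share}")
    return sum(share * used for share, used in layers.values())


if __name__ == "__main__":
    print(f"{effective_coverage(LAYERS):.1%}")  # 27.4%
```

Even with generous assumptions for the structured layer, nearly three quarters of the environment goes unused under these numbers, and most of that unused portion is unstructured.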

This means that the vast majority of clinical AI tools — including the copilots and assistants now being marketed aggressively to health systems — are making recommendations and, increasingly, taking actions based on a fraction of the available context. The data they cannot see is not marginal. It contains the negotiated terms in payer contracts, the clinical rationale behind treatment decisions, the exceptions and approvals communicated via email, and the policy constraints that govern what actions are permissible.

An agent operating on 20 percent of clinical context is not providing "AI-powered intelligence." It is providing confidently wrong answers at machine speed.

What 'Agentic' Actually Means in Clinical Data Management

The term "agentic AI" is being applied to everything from chatbots with slightly better prompts to fully autonomous workflow engines. In clinical data management, the distinctions matter enormously because the maturity level of your AI directly determines the risk profile it carries.

At the descriptive level — Level 1 — clinical data management systems generate reports. Patient volumes, wait times, readmission rates, revenue summaries. This is what most health systems have today: dashboards that tell you what happened, on a schedule measured in days or weeks.

At the diagnostic level — Level 2 — systems begin to answer "why." Why did readmissions spike in a particular unit? Why did revenue dip in a specific payer category? This requires more sophisticated analytics but still operates primarily on structured data and still requires humans to interpret and act on the findings.

At the predictive level — Level 3 — systems forecast what will likely happen. Patient flow projections, staffing demand models, financial forecasts. This is where many health systems believe they are investing when they purchase "AI-powered" analytics tools. The limitation is that prediction without action still leaves humans as the execution bottleneck.

At the prescriptive level, Level 4, systems recommend what to do. This is the copilot model. The AI suggests a staffing adjustment, flags a billing anomaly, or recommends a patient outreach action, but the human still decides and acts on every recommendation, so the system operates at human speed, not machine speed. For organizations processing thousands of clinical transactions daily, this bottleneck is significant.

At the agentic level — Level 5 — the system detects a signal, cross-references the full patient or operational record (structured and unstructured), checks compliance rules and governance thresholds, executes the appropriate workflow (scheduling, notification, documentation, billing, escalation), and logs a complete audit trail — autonomously, with governance controls determining which actions require human approval and which can proceed independently.
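The Level 5 loop described above (detect, cross-reference, check governance, execute or hold for approval, log) can be sketched as a single function. This is a minimal illustration under stated assumptions: the context fetcher, governance check, and executor are passed in as plain callables, and every name here (`run_agent_cycle`, `Decision`, the sample signal and rule ID) is hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Decision:
    action: str           # what the agent intends to do
    needs_approval: bool  # set by governance rules, not by the model
    rule_id: str          # the rule that produced this decision


def run_agent_cycle(
    signal: dict,
    fetch_context: Callable[[dict], dict],
    govern: Callable[[dict, dict], Decision],
    execute: Callable[[str, dict], str],
    audit_log: list,
) -> str:
    """One pass of detect -> cross-reference -> govern -> act -> log."""
    context = fetch_context(signal)      # structured + unstructured record
    decision = govern(signal, context)   # deterministic rule evaluation
    if decision.needs_approval:
        outcome = "queued_for_human_approval"
    else:
        outcome = execute(decision.action, context)
    audit_log.append({
        "signal": signal,
        "sources": sorted(context),      # which sources were consulted
        "rule_id": decision.rule_id,
        "action": decision.action,
        "outcome": outcome,
    })
    return outcome


if __name__ == "__main__":
    log: list = []
    outcome = run_agent_cycle(
        signal={"type": "billing_exception", "amount": 450},
        fetch_context=lambda s: {"claim": {"claim_id": "C-1"},
                                 "payer_contract": "contract.pdf"},
        govern=lambda s, c: Decision("auto_resolve", s["amount"] > 1000, "BILL-01"),
        execute=lambda action, c: f"{action}:done",
        audit_log=log,
    )
    print(outcome)             # auto_resolve:done
    print(log[0]["sources"])   # ['claim', 'payer_contract']
```

Keeping governance as a separate callable means the model can propose an action, but only the rule decides whether it runs unattended.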

Agentic AI in clinical data management operates at Level 5 maturity, where agents autonomously detect clinical and operational signals, reason across the complete data context including unstructured documents and communications, execute multi-step workflows within deterministic governance boundaries, and produce fully auditable decision trails — all without requiring human intervention for routine actions while maintaining human-in-the-loop controls for high-risk decisions.

The leap from Level 4 to Level 5 is not incremental. It requires fundamentally different infrastructure — a context engine that can fuse all data types, a governance layer that enforces rules deterministically rather than probabilistically, and an orchestration engine that can execute across clinical systems without requiring a rip-and-replace of existing technology.

Why Most Clinical AI Agent Projects Fail (And How to Prevent It)


The 40-plus percent cancellation rate that Gartner projects for agentic AI projects by the end of 2027 will not fall evenly across industries. Healthcare, with its regulatory complexity, data fragmentation, and high consequences for errors, is likely to experience an even higher failure rate unless organizations address its specific root causes.

Production deployments across healthcare organizations, from physician-led clinical enterprises to national testing providers and staffing platforms, point to five consistent reasons clinical AI agent projects fail.

The first and most common reason is context gaps. The agent cannot read the discharge summary PDF, the faxed referral, the insurance authorization email, or the physician's notes from a previous facility. It operates on EHR fields and billing codes — the structured 20 percent — and produces outputs that are technically correct within that narrow view but clinically or operationally wrong when the full picture is considered.

One production deployment outside clinical settings is directly analogous. An operational AI agent was tasked with automating payment and vendor workflows for a large organization, and it processed structured billing data flawlessly: invoice amounts, due dates, approval statuses.

But it could not access the contract documents with negotiated rates stored in a document management system, the email threads containing revised payment terms, or the compliance exceptions flagged in internal communications. The result was significant financial exposure: more than ₹12 crore in payments approved early, contract terms violated, and negotiated discounts forfeited. The agent performed exactly as designed. The context was incomplete.

In clinical settings, the equivalent scenario is an agent processing billing workflows that cannot see the payer contract PDFs, the prior authorization correspondence, or the clinical documentation that supports medical necessity. The financial exposure is real, but so is the compliance risk.

The second reason is governance gaps. Clinical decisions — even operational ones like staffing adjustments, billing escalations, or patient routing — operate within complex regulatory and policy frameworks. HIPAA, state-specific clinical regulations, accreditation requirements, payer-specific rules, and internal clinical protocols all impose constraints on what actions are permissible, who must approve them, and what documentation is required.

Most AI agent frameworks treat governance as an add-on — a set of guardrails bolted onto a probabilistic system. In clinical environments, probabilistic governance is not governance at all. When an agent decides whether a billing exception should be escalated or auto-resolved, that decision must be traceable to a specific policy, a specific threshold, and a specific rule. "The model was 87 percent confident this was the right action" is not an acceptable explanation in a clinical audit.

The third reason is data silo architecture. Clinical environments are among the most data-siloed in any enterprise sector. EHR systems, lab information systems, imaging platforms, billing systems, scheduling tools, pharmacy systems, and document management platforms often operate as disconnected islands. Even within a single EHR platform, different modules may not share data seamlessly.

An agent that can see scheduling data but not billing data, or billing data but not clinical documentation, is operating with artificial blind spots created by infrastructure, not by design. The context fusion problem in clinical settings is architecturally harder than in most enterprise environments because the number of source systems is larger and the data formats are more heterogeneous.

The fourth reason is the absence of meaningful audit trails. In clinical settings, every autonomous action must be traceable, explainable, and defensible. This is not a nice-to-have — it is a regulatory requirement. When an agent takes an action — approving a workflow step, routing a patient inquiry, flagging a compliance exception, adjusting a staffing assignment — the organization must be able to produce a complete record of what data the agent saw, what rules it applied, what decision it made, and why.

Most agent frameworks treat logging as an afterthought. They capture inputs and outputs but not the reasoning chain, the governance rules applied, or the data sources consulted. In a clinical audit, this gap is the difference between a defensible decision and an unexplainable one.

The fifth reason is the pilot-to-production death valley. Clinical AI demos work beautifully on clean, curated datasets. Production clinical data is messy, multi-format, riddled with exceptions, and changes constantly as new patients are admitted, new payer rules take effect, and new compliance requirements emerge.

The organizations that successfully deploy agentic AI in clinical settings are not the ones with the most sophisticated models. They are the ones that solve the context, governance, and audit trail problems before they write a single line of agent logic.

What Context-Complete Agentic AI Looks Like in Clinical Settings

If the failure patterns above define what goes wrong, the solution architecture defines what must go right. Any clinical agentic AI platform must deliver three core capabilities — and any platform that lacks even one of them will hit the same failure patterns at scale.

Unified Context Engine

The context engine is the foundation. It solves the 80 percent blind spot by fusing structured clinical data (EHR records, billing systems, scheduling platforms, lab results) with unstructured data (physician notes, discharge summaries, referral PDFs, insurance correspondence, consent forms, policy documents) and semi-structured feeds (HL7/FHIR messages, device telemetry, API logs, system events).

The fusion is not simply aggregation — dumping all data into a single repository. It is semantic correlation. The context engine builds a unified semantic layer that allows agents to reason across data types simultaneously. When an agent evaluates a billing exception, it can see the billing code, the clinical documentation that supports it, the payer contract terms, and the prior authorization status — all in a single reasoning step, not through a sequence of separate queries.
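At its simplest, correlation means assembling every record that refers to the same encounter before the agent reasons. The sketch below is a deliberately simplified, hypothetical illustration: it assumes each source has already been normalized into records carrying an `encounter_id`, which in practice is the hard part (entity resolution, text extraction from PDFs and faxes). A real semantic layer would do far more than this key-based grouping.

```python
from collections import defaultdict


def fuse_by_encounter(**sources: list) -> dict:
    """Group records from any number of named sources by encounter_id.

    Returns {encounter_id: {source_name: [records...]}} so an agent can see
    every source for one encounter in a single lookup.
    """
    fused: dict = defaultdict(lambda: defaultdict(list))
    for source_name, records in sources.items():
        for record in records:
            fused[record["encounter_id"]][source_name].append(record)
    # Convert nested defaultdicts to plain dicts for predictable output.
    return {enc: dict(by_source) for enc, by_source in fused.items()}


if __name__ == "__main__":
    billing = [{"encounter_id": "E1", "code": "X", "status": "denied"}]
    notes = [{"encounter_id": "E1", "text": "Medical necessity documented"}]
    prior_auth = [{"encounter_id": "E1", "status": "approved"},
                  {"encounter_id": "E2", "status": "pending"}]
    view = fuse_by_encounter(billing=billing, notes=notes, prior_auth=prior_auth)
    print(sorted(view["E1"]))  # ['billing', 'notes', 'prior_auth']
```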

In production, this capability transforms what agents can do. In one multi-facility clinical enterprise, context fusion across physician notes, billing data, and operational records enabled AI agents to surface revenue leakage patterns and operational bottlenecks that were entirely invisible to the existing BI stack. The structured data showed the symptoms — revenue variances, utilization gaps. The unstructured data revealed the causes — documentation inconsistencies, coding mismatches, process exceptions communicated via email but never captured in the system of record.

Semantic Governor

The governance layer is what makes clinical agentic AI safe enough to trust with autonomous actions. It operates on deterministic logic, not probabilistic confidence scores.

In practice, this means the governor encodes clinical and operational rules as explicit decision logic. Approval hierarchies, compliance thresholds, escalation criteria, and exception handling rules are defined as if-then structures, not model inferences. When an agent decides to auto-resolve a routine billing inquiry, that decision is governed by a specific rule with a specific threshold. When the same agent encounters a billing exception above a defined dollar amount or involving a specific payer category, it escalates — not because the model is "less confident," but because the rule requires it.
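The if-then structure described above can be expressed as an ordered rule table evaluated first-match, so the same inputs always produce the same decision and the matching rule is always known. This is a hypothetical sketch: the rule IDs, the $10,000 threshold, and the payer category are invented for illustration and are not drawn from any real policy.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    rule_id: str
    applies: Callable[[dict], bool]   # plain predicate, no model scores
    outcome: str                      # "auto_resolve" or "escalate"


# Evaluated top to bottom; the first matching rule wins.
BILLING_RULES = [
    Rule("BILL-ESC-AMOUNT", lambda c: c["amount"] > 10_000, "escalate"),
    Rule("BILL-ESC-PAYER", lambda c: c["payer_category"] == "government", "escalate"),
    Rule("BILL-AUTO-ROUTINE", lambda c: c["type"] == "routine_inquiry", "auto_resolve"),
]

# Anything no rule covers is escalated rather than guessed at.
DEFAULT = ("BILL-DEFAULT", "escalate")


def evaluate(case: dict, rules=BILLING_RULES) -> tuple[str, str]:
    """Return (rule_id, outcome) for the first rule whose predicate matches."""
    for rule in rules:
        if rule.applies(case):
            return rule.rule_id, rule.outcome
    return DEFAULT


if __name__ == "__main__":
    print(evaluate({"amount": 250, "payer_category": "commercial",
                    "type": "routine_inquiry"}))
    # ('BILL-AUTO-ROUTINE', 'auto_resolve')
    print(evaluate({"amount": 25_000, "payer_category": "commercial",
                    "type": "routine_inquiry"}))
    # ('BILL-ESC-AMOUNT', 'escalate')
```

Ordering is the design choice that matters here: escalation rules sit above the auto-resolve rule, so a large routine inquiry still escalates, and the default is fail-closed.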

Every agent action is auditable and policy-cited. The audit trail does not just record what happened — it records which rule triggered the action, what data the agent consulted, and which governance policy the decision traced to. In a compliance audit, this is the difference between "the AI did it" and "the AI applied Policy 4.2.1, Threshold B, based on the following data inputs, and produced this documented outcome."
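A policy-cited audit entry is, at minimum, a structured record that ties the action to the rule that fired, the policy that rule implements, and the data consulted. The sketch below assumes a flat JSON record; the field names and the sample citation (mirroring the "Policy 4.2.1, Threshold B" example above) are illustrative, not a prescribed schema.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    agent_id: str
    action: str
    rule_id: str                 # the deterministic rule that fired
    policy_citation: str         # the policy that rule implements
    sources_consulted: tuple     # record / document identifiers the agent read
    inputs: dict                 # the values the rule was evaluated on
    outcome: str
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialize to JSON; tuples become JSON arrays."""
        return json.dumps(asdict(self), sort_keys=True)


if __name__ == "__main__":
    entry = AuditEntry(
        agent_id="billing-agent-1",
        action="escalate_to_finance",
        rule_id="BILL-ESC-AMOUNT",
        policy_citation="Policy 4.2.1, Threshold B",
        sources_consulted=("claim:C-1", "payer_contract:PC-7", "note:N-3"),
        inputs={"amount": 25000},
        outcome="queued_for_review",
    )
    print(entry.to_json())
```

Recording the inputs the rule actually evaluated, not just the final action, is what allows a deterministic decision to be re-checked during an audit.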

In a geriatric care services deployment, governed agents for operational and revenue analytics produced insights and recommended actions that were traceable to specific compliance rules and care-program policies. The governance layer did not slow the agents down — it made their outputs trustworthy enough that clinical leaders could act on them without second-guessing every recommendation.

Active Orchestrator

The orchestration layer is what turns governed intelligence into governed execution. It executes multi-step clinical workflows across systems — intake, triage, scheduling, notification, documentation, billing, escalation — without requiring a rip-and-replace of existing clinical technology.

The orchestrator connects to existing systems of record — EHR platforms, billing systems, scheduling tools, communication platforms — and executes workflows across them. Human-in-the-loop controls are configurable by risk tier. Routine scheduling adjustments might proceed autonomously. Care-plan modifications require physician approval. Billing exceptions above a defined threshold require finance team review.
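Risk-tier controls can be written as a lookup from action type to required approver, using the three examples above. The mapping, role names, and $10,000 threshold below are assumptions for illustration; note that an unrecognized action type falls back to manual review rather than to autonomy.

```python
from enum import Enum


class Approval(Enum):
    NONE = "autonomous"
    FINANCE = "finance_review"
    PHYSICIAN = "physician_signoff"
    MANUAL = "manual_review"   # catch-all for unclassified actions


# Hypothetical tier table mirroring the examples in the text.
RISK_TIERS = {
    "schedule_adjustment": Approval.NONE,
    "care_plan_modification": Approval.PHYSICIAN,
}
BILLING_REVIEW_THRESHOLD = 10_000  # assumed threshold, in dollars


def required_approval(action_type: str, amount: float = 0.0) -> Approval:
    """Return who must approve before the orchestrator may execute."""
    if action_type == "billing_exception":
        # Only exceptions above the threshold need finance review.
        return Approval.FINANCE if amount > BILLING_REVIEW_THRESHOLD else Approval.NONE
    # Unknown action types fall back to manual review, never to autonomy.
    return RISK_TIERS.get(action_type, Approval.MANUAL)


if __name__ == "__main__":
    for action, amount in [("schedule_adjustment", 0),
                           ("billing_exception", 25_000),
                           ("discharge_order", 0)]:
        print(action, required_approval(action, amount).value)
    # schedule_adjustment autonomous
    # billing_exception finance_review
    # discharge_order manual_review
```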

The key architectural principle is that the orchestrator does not replace clinical systems. It orchestrates them. The EHR remains the system of record. The billing platform continues to process claims. The scheduling tool continues to manage appointments. The orchestrator sits above these systems, coordinating multi-step workflows that previously required human coordination across multiple platforms.

In a national healthcare testing operation, this orchestration capability automated end-to-end workflows from booking through processing to result delivery — reducing manual overhead, eliminating handoff gaps, and providing unified operational analytics across a high-volume consumer health service.
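A central job of orchestration in a booking-to-result-delivery workflow like this is making handoffs visible: each case moves through ordered stages, and anything that sits too long at one stage is surfaced rather than silently dropped. The stage names and time limits below are hypothetical; in a real deployment the stage changes would come from the booking, lab, and delivery systems the orchestrator connects to.

```python
from datetime import datetime, timedelta

# Ordered stages and per-stage time limits are illustrative assumptions.
STAGES = ["booked", "processing", "result_ready", "result_delivered"]
SLA = {
    "booked": timedelta(hours=48),
    "processing": timedelta(hours=24),
    "result_ready": timedelta(hours=2),
}


def advance(case: dict, now: datetime) -> dict:
    """Move a case to the next stage and timestamp the handoff."""
    idx = STAGES.index(case["stage"])
    if idx == len(STAGES) - 1:
        raise ValueError(f"case {case['id']} is already complete")
    return {**case, "stage": STAGES[idx + 1], "entered_at": now}


def stalled(cases: list, now: datetime) -> list:
    """Return ids of cases that have sat in a stage longer than its limit."""
    return [
        c["id"]
        for c in cases
        if c["stage"] in SLA and now - c["entered_at"] > SLA[c["stage"]]
    ]


if __name__ == "__main__":
    t0 = datetime(2026, 1, 5, 9, 0)
    cases = [
        {"id": "A", "stage": "booked", "entered_at": t0},
        {"id": "B", "stage": "result_ready", "entered_at": t0},
    ]
    cases = [advance(cases[0], t0 + timedelta(hours=1)), cases[1]]
    print(stalled(cases, t0 + timedelta(hours=3)))  # ['B']
```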

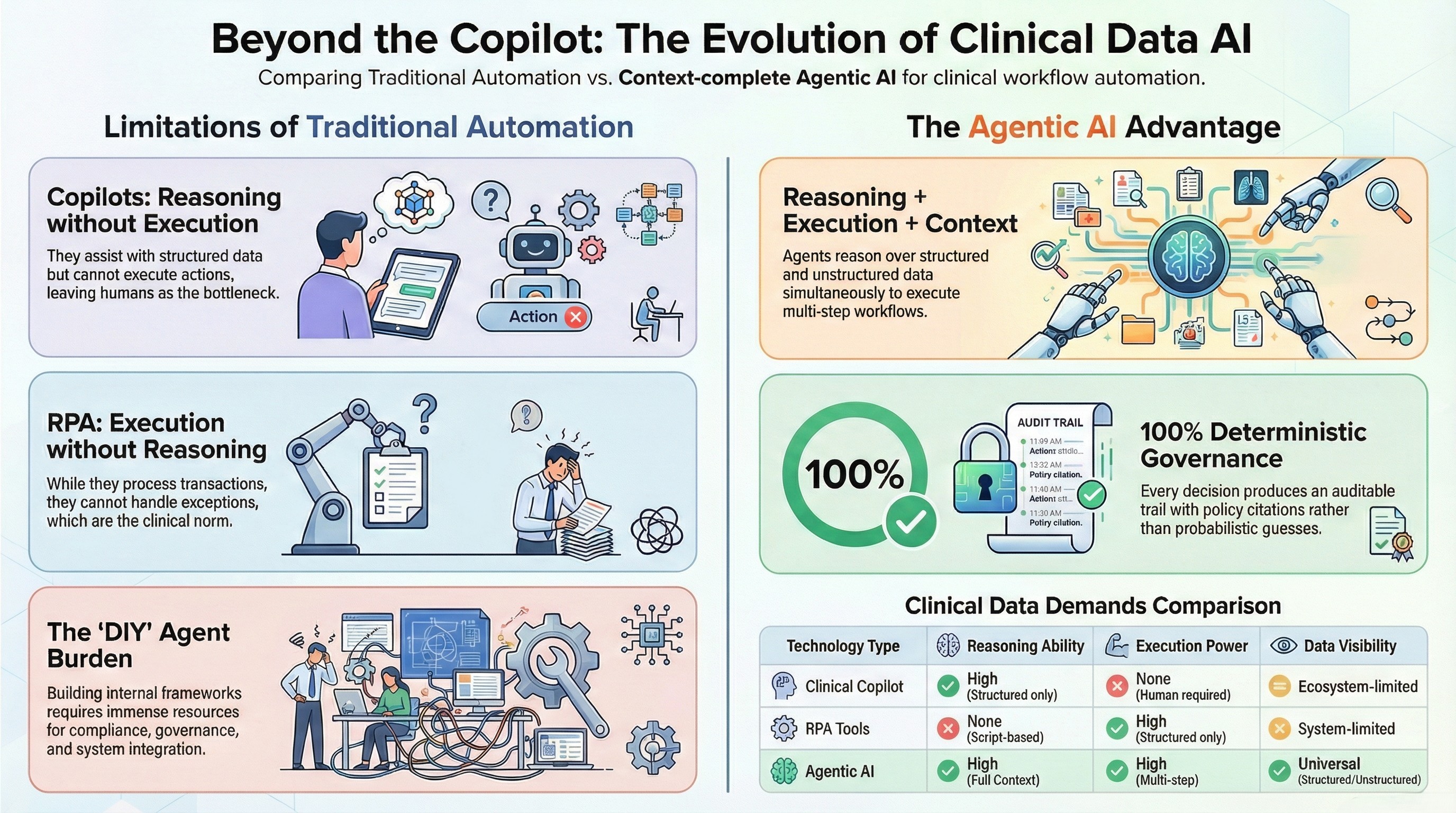

Agentic AI vs Copilots vs RPA in Clinical Data Management

The clinical data management market is crowded with solutions described as "AI-powered," but the capabilities behind that label vary enormously. Understanding the differences is critical for any organization evaluating platforms.

Clinical copilots — the model championed by major technology vendors — are strong at reasoning over structured data within their ecosystem. They can summarize patient records, suggest next steps, and draft clinical documentation. Their limitation is twofold: they generally cannot reason over unstructured data outside their native ecosystem (the referral PDF from an external facility, the payer contract stored in a separate document management system, the internal email thread about a patient exception), and they do not execute. The human remains the bottleneck for every action. In a high-volume clinical operation, copilots reduce the cognitive load on individual clinicians and administrators but do not fundamentally change the throughput of clinical operations.

RPA tools in clinical settings can execute — they can process structured transactions, move data between systems, and follow scripted workflows. Their limitation is that they cannot reason. When a clinical workflow encounters an exception — a non-standard billing code, a patient record with conflicting information, a scheduling conflict that requires judgment — RPA breaks. It follows scripts. Scripts do not handle exceptions. And in clinical environments, exceptions are not edge cases. They are the norm.

DIY agent frameworks — building agents on top of open-source orchestration tools and language models — offer flexibility but require organizations to build everything themselves. The context fusion layer, the governance logic, the audit trail, the system integrations, the human-in-the-loop controls — all must be designed, built, tested, and maintained internally. For most clinical organizations, this is neither practical nor advisable given the compliance requirements and the operational stakes.

Context-complete agentic AI combines reasoning, execution, full-context visibility, and deterministic governance in a single platform. Agents can reason over structured and unstructured clinical data simultaneously. They can execute multi-step workflows across clinical systems. Every action is governed by deterministic rules, not probabilistic confidence. Every decision produces an auditable trail with policy citations. And human-in-the-loop controls are configurable by risk tier, not applied as a blanket restriction on all agent actions.

The distinction matters because it determines what an organization can actually automate. Copilots can assist individual users. RPA can automate structured transactions. Context-complete agentic AI can automate entire clinical workflows — from signal detection through reasoning, execution, and documentation — with governance and audit trails built into every step.

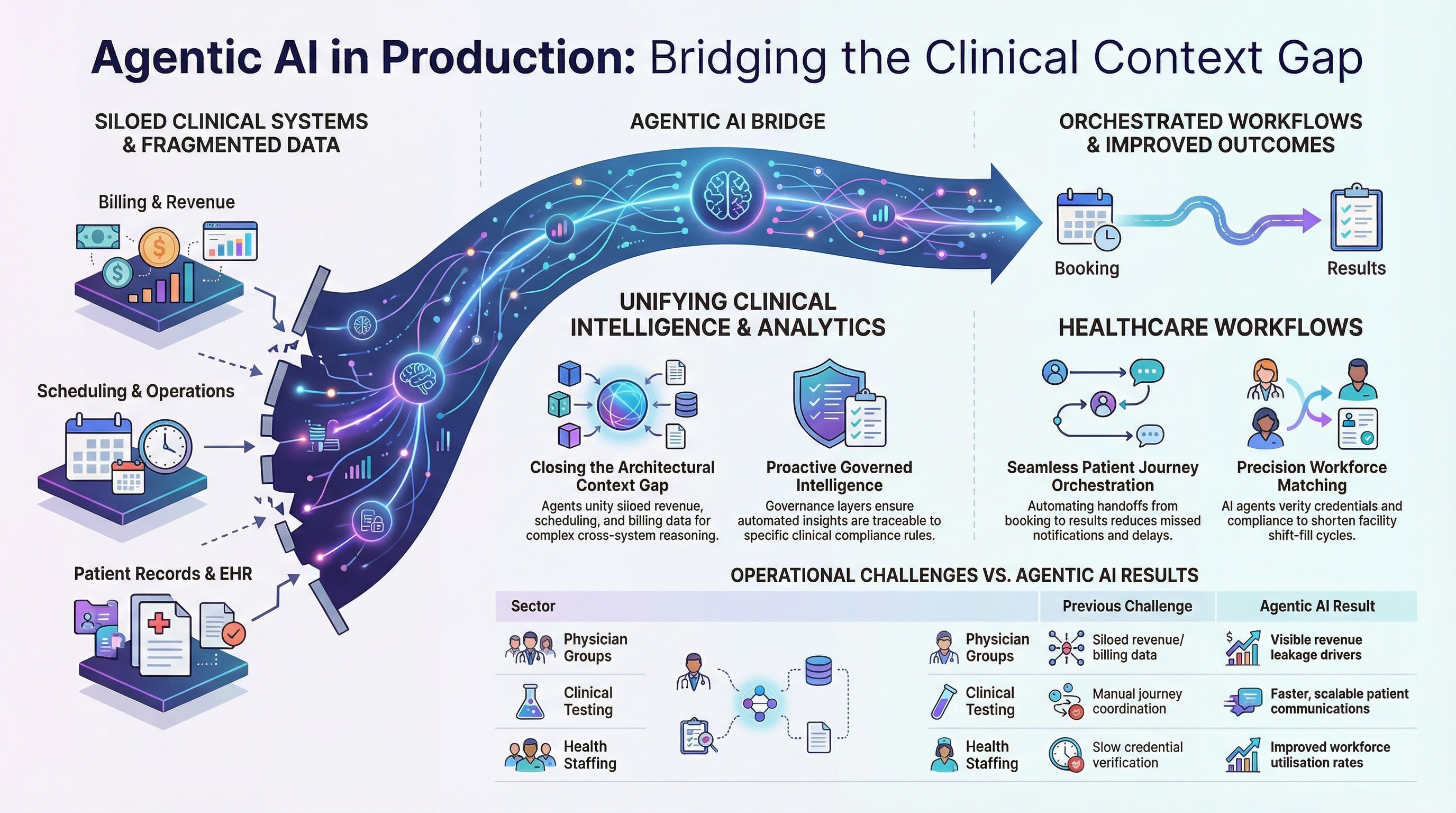

Real-World Clinical Deployments — What Production Looks Like

The gap between demo and production is where most clinical AI projects die. The following deployments — all in production, across different clinical contexts — illustrate what context-complete agentic AI looks like when it survives contact with real clinical complexity.

Multi-Specialty Physician Group (Inpatient Care)

A physician-led clinical enterprise operating hospitalist programs across multiple facilities faced a persistent challenge: revenue and operational data was siloed across scheduling, billing, and care-program systems. Leadership could see individual metrics in individual dashboards but could not get a unified view of what was actually driving performance variances across facilities and programs.

The context gap was architectural. Revenue data lived in one system. Physician scheduling in another. Patient volume data in a third. Operational metrics in a fourth. No single tool could reason across all of them simultaneously.

Analytics agents were deployed to unify the data context and surface insights that required cross-system reasoning. Revenue leakage drivers that were invisible in any single dashboard became immediately visible once the agent could see billing, scheduling, and documentation data together. Operational decision-making accelerated because leaders no longer had to correlate data across systems manually to answer basic questions.

The outcome was not just faster reporting. It was a fundamentally different quality of insight — the kind that only emerges when the full operational context is visible to the reasoning system.

Regional Geriatric Care Provider

A geriatric care services organization delivering physician-led programs across assisted living and long-term care settings faced fragmented operational data across staffing, service delivery, and revenue cycles. Care-program performance was tracked in one system. Staffing and scheduling in another. Revenue cycle metrics in a third. Identifying operational bottlenecks required manual correlation across all three — a process that was slow, inconsistent, and dependent on analyst availability.

Governed agents were deployed for program operations analytics, staffing intelligence, and revenue cycle visibility. The governance layer ensured that every automated insight and recommended action was traceable to specific care-program policies and compliance rules. Exception alerts surfaced issues — staffing gaps, service delivery variances, revenue cycle anomalies — before they became crises.

The transformation was from reactive, analyst-dependent reporting to proactive, agent-driven operational intelligence with full auditability. Leadership could trust the outputs because every recommendation came with a governance trail.

National Healthcare Testing Provider

A high-volume consumer healthcare and testing operation in the UK processed thousands of patient interactions daily across a workflow that spanned booking, specimen collection, laboratory processing, result generation, and patient communication. Each handoff point was a potential failure point — a missed notification, a delayed result, a dropped communication.

The context gap was operational. No single system had visibility into the end-to-end patient journey. Booking systems, lab systems, result delivery systems, and customer communication tools operated independently. Manual coordination filled the gaps, but manual coordination does not scale with volume.

Agentic orchestration automated the end-to-end workflow — from booking through processing to result delivery — with unified operational analytics providing real-time visibility into throughput, bottlenecks, and exception cases. The result was more scalable operations, faster patient communications, fewer missed handoffs, and a unified view of service performance that had never existed before.

Healthcare Staffing Platform

A healthcare staffing platform connecting nursing professionals with facilities for flexible shifts faced a coordination challenge that is endemic to clinical staffing: matching available professionals (with specific credentials, compliance requirements, and scheduling preferences) to facility requests (with specific skill requirements, shift timings, and compliance mandates) required processing multiple data types simultaneously.

Credential records, compliance documentation, facility requests, scheduling constraints, and historical performance data all fed into the matching decision — but lived in different systems and formats. Manual matching was slow, inconsistent, and could not keep pace with the volume of requests and available professionals.

AI agents were deployed for the full staffing workflow — talent onboarding, credential capture, facility request intake, matching logic, scheduling, notifications, and compliance verification. The governed orchestration ensured that no match was made without verified credentials and compliance clearance. Fill cycles shortened, workforce utilization improved, and staffing responsiveness for facilities increased measurably.
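The requirement above that no match is made without verified credentials and compliance clearance can be enforced as a hard filter that runs before any ranking. The sketch below is a hypothetical illustration: the field names, the expiry check, the example credentials, and the tie-breaking by fewest hours already scheduled are assumptions, not the platform's actual matching logic.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Professional:
    name: str
    credentials: dict        # credential name -> expiry date
    verified: bool           # credential documents checked
    compliance_cleared: bool
    hours_scheduled: int


def eligible(p: Professional, required: set, shift_date: date) -> bool:
    """Hard gate: every required credential present and valid on shift day."""
    if not (p.verified and p.compliance_cleared):
        return False
    return all(
        cred in p.credentials and p.credentials[cred] >= shift_date
        for cred in required
    )


def match(pool: list, required: set, shift_date: date):
    """Return the eligible professional with the fewest hours, or None."""
    candidates = [p for p in pool if eligible(p, required, shift_date)]
    return min(candidates, key=lambda p: p.hours_scheduled, default=None)


if __name__ == "__main__":
    shift = date(2026, 3, 2)
    pool = [
        Professional("Ana", {"RN": date(2027, 1, 1), "BLS": date(2026, 2, 1)}, True, True, 10),
        Professional("Ben", {"RN": date(2027, 1, 1), "BLS": date(2026, 12, 1)}, True, True, 30),
        Professional("Cy", {"RN": date(2027, 1, 1), "BLS": date(2026, 12, 1)}, False, True, 0),
    ]
    chosen = match(pool, {"RN", "BLS"}, shift)
    print(chosen.name if chosen else "no eligible match")  # Ben
```

Ana has fewer hours but an expired credential, and Cy is unverified, so the gate removes both before ranking ever happens.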

The Evaluation Checklist — 10 Questions to Ask Any Clinical Agentic AI Vendor

For any clinical organization evaluating agentic AI platforms, these ten questions will separate production-ready solutions from demo-ware. Ask every vendor these questions. The answers will tell you everything you need to know.

One. Can your agents reason over unstructured clinical documents — physician notes, referral PDFs, faxed correspondence, insurance emails — not just structured EHR fields? If the answer involves "we can connect to your document management system," ask what happens with the data once connected. Connecting is not reasoning.

Two. Is your governance layer deterministic or probabilistic? Can you show me the specific rule that triggered a specific agent action? If the answer involves confidence scores or model certainty, the platform does not have deterministic governance.

Three. What percentage of our total clinical data environment — structured, unstructured, and semi-structured — can your platform actually see and reason over in a single agent action?

Four. How do you handle HIPAA compliance at the agent execution layer — not just the data storage layer? Data at rest can be encrypted. Data in transit can be secured. But what happens when the agent is reasoning over a patient record to make an autonomous decision? Where does that reasoning happen, what data does it touch, and how is it governed?

Five. Can you show me a full audit trail for an autonomous clinical decision, including the policy citation that governed the action? Not a log file. A traceable chain from signal detection through data consultation, rule application, action execution, and outcome documentation.

Six. What happens when the agent encounters an exception it was not designed to handle? Does it fail silently, produce a best-guess output, escalate to a human, or halt? In clinical settings, silent failure is unacceptable.

Seven. How long from pilot to production in a real clinical environment — not a sandbox, not a demo, not a test dataset? Can you show proof from an actual clinical deployment?

Eight. Does your platform require replacing our existing EHR, billing, scheduling, or communication systems? If the answer is yes, the implementation timeline is measured in years, not weeks.

Nine. What human-in-the-loop controls exist, and are they configurable by risk tier? Can we define that routine scheduling adjustments are autonomous while care-plan modifications require physician sign-off?

Ten. Can you show me production case studies in clinical settings — not demos, not pilots, not proof-of-concepts? Organizations that have deployed, operated, and measured outcomes in real clinical environments.

Any vendor that cannot answer all ten of these questions with specific, verifiable evidence is not ready for clinical production.

How to Move From Pilot to Production in 30 Days

The pilot-to-production timeline in clinical agentic AI does not need to be measured in quarters or years. With the right infrastructure — context fusion, deterministic governance, and system orchestration already built into the platform — the deployment path is measured in weeks.

Week one is discovery and clinical workflow mapping. Identify the highest-value workflows — the ones where context gaps are causing the most operational friction, the most compliance risk, or the most financial leakage. Map the data sources involved: which systems hold structured data, where unstructured documents live, what semi-structured feeds exist. Define the governance rules: what actions can be autonomous, what requires human approval, what thresholds trigger escalation.

Weeks two through four are deployment. The context engine connects to clinical data sources and begins building the unified semantic layer. Governance rules are encoded as deterministic logic. The first agent goes live on the highest-priority workflow — monitored, governed, and producing auditable outputs from day one.

Day thirty marks a live, governed agent in production — executing clinical or operational workflows autonomously within defined governance boundaries, producing full audit trails for every action, and providing measurable performance data against the baseline.

The key architectural principle that makes this timeline possible is that context-complete agentic AI does not require ripping out existing clinical systems. The platform orchestrates what is already in place — EHR, billing, scheduling, communications, document management. No migration. No replacement. No year-long implementation.

If your clinical organization is evaluating agentic AI, the single most important step you can take today is understanding your context gap. What percentage of your clinical data can your current tools actually see and reason over? What decisions are being made on incomplete information? What would change if your agents could see the full picture?

See your clinical context gap in 48 hours. Book a pilot assessment at assistents.ai.

Frequently Asked Questions

What is agentic AI in clinical data management?

Agentic AI in clinical data management refers to AI systems that can autonomously detect signals, reason across the full clinical data context (including structured EHR data, unstructured documents like physician notes and referral PDFs, and external signals), execute multi-step workflows, and produce auditable decision trails — all within deterministic governance boundaries. Unlike copilots that recommend actions for humans to take, agentic AI systems can execute autonomously on routine tasks while maintaining human-in-the-loop controls for high-risk decisions.

How is agentic AI different from clinical decision support systems?

Clinical decision support systems (CDSS) operate at the prescriptive level — they analyze data and recommend actions, but humans must still interpret, decide, and execute. Agentic AI operates at the autonomous execution level — agents can detect, reason, decide, execute, and document without requiring human intervention for every action. The critical difference is that agentic AI includes a governance layer that determines which actions can be autonomous and which require human approval, making it safe for clinical environments.

Why do most clinical AI agent projects fail?

The five most common failure reasons are context gaps (agents cannot reason over unstructured clinical data), governance gaps (no deterministic rules for clinical decision thresholds), data silo architecture (clinical systems are disconnected), absence of audit trails (agent actions are not traceable to specific policies), and the pilot-to-production gap (demos work on clean data but production clinical data is complex and exception-heavy). Context gaps are the root cause in the majority of cases.

Can agentic AI be HIPAA compliant?

Yes, but only if the platform addresses compliance at the execution layer, not just the storage layer. This means deterministic governance rules that enforce HIPAA requirements on every agent action, full audit trails with policy citations, role-based access controls on what data agents can see and act on, and encryption standards (AES-256, TLS 1.3) across all data handling. Platforms that rely on probabilistic governance — where the model "usually" follows HIPAA rules — are not HIPAA compliant in any meaningful sense.
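Role-based access control on "what data agents can see and act on" can be sketched as two deny-by-default policy tables, in the spirit of HIPAA's minimum necessary standard. The roles, field names, and actions below are hypothetical. Encryption and audit logging are separate controls and are not shown.

```python
from __future__ import annotations

# Hypothetical deny-by-default policies: unlisted roles, fields, and actions are refused.
READ_POLICY = {
    "scheduling_agent": {"patient_id", "appointment_time", "provider"},
    "billing_agent": {"patient_id", "billing_codes", "payer", "prior_auth_status"},
}
ACT_POLICY = {
    "scheduling_agent": {"reschedule_appointment"},
    "billing_agent": {"flag_billing_exception"},
}


def visible_fields(agent_role: str, record: dict) -> dict:
    """Return only the fields this agent role is permitted to read."""
    allowed = READ_POLICY.get(agent_role, set())
    return {key: value for key, value in record.items() if key in allowed}


def may_act(agent_role: str, action: str) -> bool:
    """True only if the action is explicitly granted to the role."""
    return action in ACT_POLICY.get(agent_role, set())
```

Filtering the record before it reaches the agent means the model never sees fields outside its role, rather than being trusted to ignore them.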

What is the 80/20 data problem in healthcare AI?

The 80/20 data problem describes the gap between what clinical AI tools can see and what actually exists. Roughly 10 to 20 percent of clinical data is structured (EHR fields, billing codes, scheduling entries). By most industry estimates, 70 to 85 percent is unstructured (physician notes, referral letters, insurance correspondence, consent forms, policy documents), and the balance is semi-structured (HL7/FHIR feeds, device telemetry, API logs). Most clinical AI tools operate only on the structured slice, so agents make decisions based on a fraction of the available clinical context.
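The context gap can be measured with simple arithmetic once data holdings are inventoried by type. This sketch assumes a hypothetical inventory measured in any consistent unit, such as document count or storage volume. The example figures are illustrative, chosen to fall inside the ranges quoted above rather than taken from any real organization.

```python
from __future__ import annotations


def context_coverage(inventory: dict[str, float], readable_kinds: set[str]) -> float:
    """Fraction of the inventory that a tool able to read `readable_kinds` can see."""
    total = sum(inventory.values())
    if total <= 0:
        raise ValueError("inventory is empty")
    visible = sum(amount for kind, amount in inventory.items() if kind in readable_kinds)
    return visible / total


# Illustrative inventory (e.g. thousands of documents), not real data.
inventory = {"structured": 20, "semi-structured": 5, "unstructured": 75}
coverage = context_coverage(inventory, {"structured"})
context_gap = 1 - coverage
```

With these illustrative numbers, a structured-only tool sees 20 percent of the data, which leaves an 80 percent context gap.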

How does a Semantic Governor prevent AI hallucinations in clinical settings?

A Semantic Governor replaces probabilistic model outputs with deterministic decision logic for governed actions. Instead of the model inferring what action to take based on training data, the governor encodes explicit rules — approval hierarchies, compliance thresholds, escalation criteria, exception handling logic — as if-then structures. The agent cannot hallucinate a governance decision because governance decisions are not made by the model. They are made by the rule engine. Every governed action is traceable to a specific rule and produces a documented audit trail.
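The if-then structure described above can be sketched as an ordered rule list that is evaluated deterministically, with the first matching rule winning. The rule IDs, thresholds, and policy citations below are hypothetical. When no rule matches, the sketch escalates instead of deferring to the model, which is one reasonable reading of "governance decisions are not made by the model".

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    rule_id: str
    condition: Callable[[dict], bool]
    decision: str   # "deny" | "require_approval" | "autonomous"
    citation: str   # the policy clause this rule encodes


# Hypothetical rules and citations, evaluated top to bottom; first match wins,
# so stricter rules are listed first.
RULES = [
    Rule("PHI-001",
         lambda a: a.get("type") == "export_phi" and not a.get("recipient_is_covered_entity", False),
         "deny", "Privacy policy 2.1 (hypothetical)"),
    Rule("BILL-001",
         lambda a: a.get("type") == "billing_adjustment" and a.get("amount", 0) > 5000,
         "require_approval", "Billing policy 4.2 (hypothetical)"),
    Rule("BILL-002",
         lambda a: a.get("type") == "billing_adjustment",
         "autonomous", "Billing policy 4.1 (hypothetical)"),
    Rule("SCHED-001",
         lambda a: a.get("type") == "reschedule_appointment",
         "autonomous", "Scheduling policy 1.3 (hypothetical)"),
]


def govern(action: dict, rules: list = RULES) -> dict:
    """Return the governed decision plus the rule and citation that produced it."""
    for rule in rules:
        if rule.condition(action):
            return {"decision": rule.decision, "rule_id": rule.rule_id, "citation": rule.citation}
    # No rule matched: escalate rather than letting the model improvise.
    return {"decision": "escalate", "rule_id": None, "citation": None}
```

Because the same input always yields the same rule ID and citation, the output can be written directly into an audit trail entry.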

What is context-complete agentic AI?

Context-complete agentic AI is a platform architecture where AI agents reason over the full enterprise data context — structured, unstructured, semi-structured, and external signals — fused into a single semantic layer. Unlike traditional AI tools that operate on one data type or one system at a time, context-complete agents see the full picture before making any decision. In clinical settings, this means an agent processing a billing workflow can simultaneously access the billing code, the clinical documentation, the payer contract, and the prior authorization status — all in a single reasoning step.
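A very reduced picture of the billing example is a merge of per-system records for one encounter into a single context object that keeps provenance. This sketch assumes that unstructured documents have already been extracted into key-value records and linked to an encounter ID upstream. That extraction is the hard part and is not shown. The function name and record fields are hypothetical.

```python
from __future__ import annotations

from typing import Iterable


def fuse_context(encounter_id: str, *sources: tuple[str, Iterable[dict]]) -> dict:
    """Merge records from several systems that refer to one encounter.

    Every fact keeps its provenance (which system supplied it), and disagreements
    between systems are collected as conflicts instead of being silently overwritten.
    """
    context = {"encounter_id": encounter_id, "facts": {}, "provenance": {}, "conflicts": []}
    for system, records in sources:
        for record in records:
            if record.get("encounter_id") != encounter_id:
                continue  # record belongs to a different encounter
            for key, value in record.items():
                if key == "encounter_id":
                    continue
                if key in context["facts"] and context["facts"][key] != value:
                    context["conflicts"].append({
                        "field": key,
                        "values": {context["provenance"][key]: context["facts"][key], system: value},
                    })
                    continue  # keep the first value; the conflict is surfaced for review
                context["facts"][key] = value
                context["provenance"][key] = system
    return context
```

Surfacing conflicts, rather than picking a winner, gives the governance layer something concrete to escalate on.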

How long does it take to deploy agentic AI in a clinical environment?

With a platform that includes pre-built context fusion, governance, and orchestration capabilities, the typical timeline is 30 days from kickoff to a live, governed agent in production. Week one covers discovery and workflow mapping. Weeks two through four cover context engine deployment, governance rule encoding, and first-agent launch. This timeline is achievable because context-complete platforms orchestrate existing clinical systems rather than replacing them — no migration, no rip-and-replace, no year-long implementation.

