AI Agents for QA Testing: How Enterprise Teams Are Cutting Defects and Accelerating Release Cycles in 2026

Enterprise software teams are caught in a contradiction. Development velocity has never been higher — AI-assisted coding tools are generating more code, more features, and more releases than human teams alone could produce. But quality assurance infrastructure hasn't kept pace.

Manual test scripts break the moment a UI changes. Regression suites that once took days now take weeks as application surface area expands. And the cost of a defect reaching production — reputational, financial, regulatory — has never been higher.

AI agents for QA testing are emerging as the answer to this contradiction. Not the AI-assisted testing tools of the previous era, which still required engineers to script every step, but genuinely autonomous agents that perceive application state, generate test cases from requirements, execute and self-heal continuously, and report with enough context for human teams to act — all without intervention between test runs.

This guide covers what enterprise teams actually need to know: what these agents do, where they deliver the highest ROI, how real deployment results compare to expectations, what governance requirements look like in regulated industries, and how to evaluate platforms before committing.

Why Traditional QA Automation Is No Longer Enough

Traditional test automation solved a real problem. It replaced human testers clicking through regression flows with scripts that could do the same work in minutes. For stable applications with predictable interfaces, it worked well.

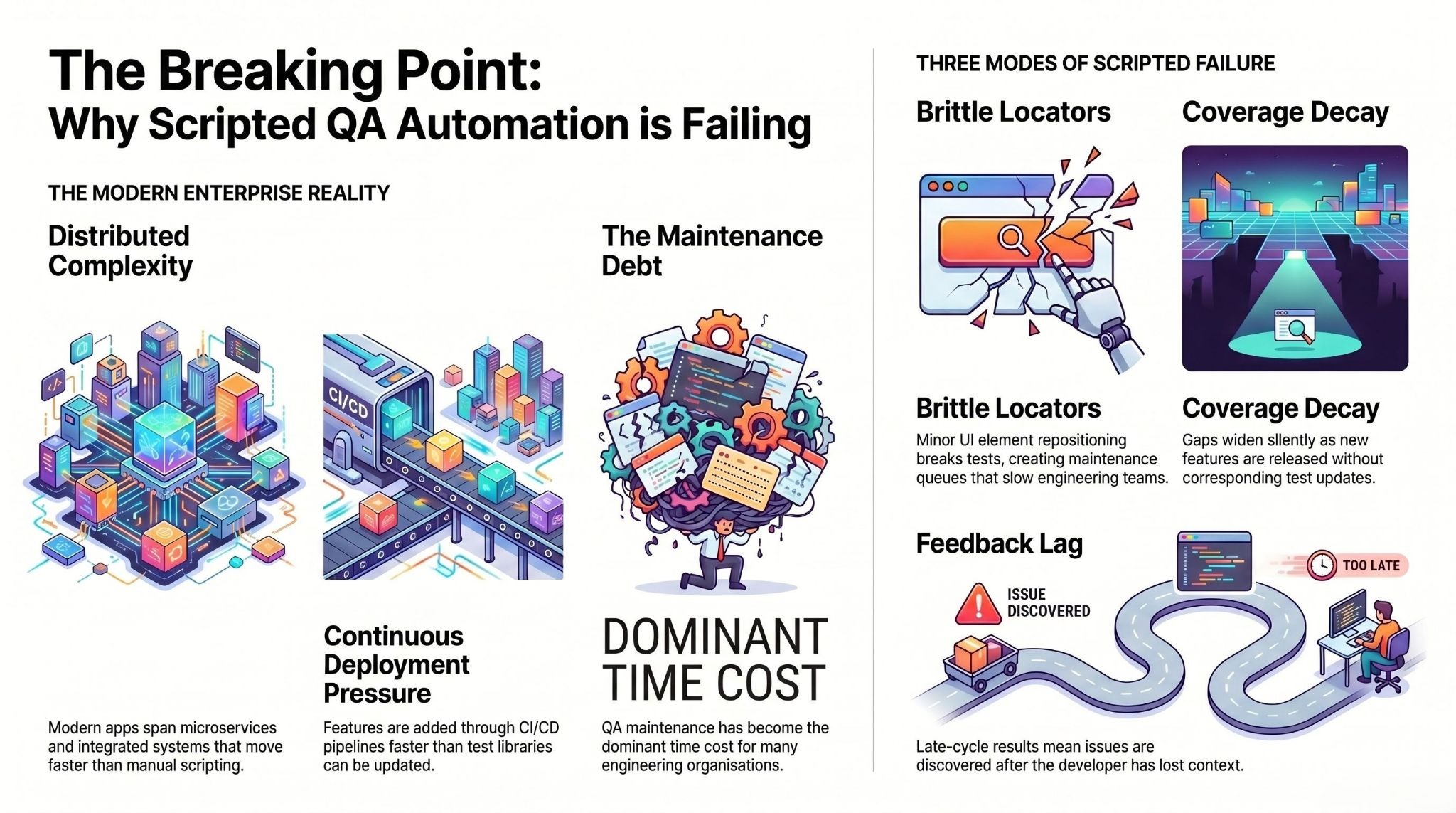

The problem is that modern enterprise applications are not stable. They are distributed across microservices, continuously deployed through CI/CD pipelines, regularly updated across dozens of integrated systems, and increasingly built with AI-generated code that moves faster than any QA team can script against.

Scripted automation has three compounding failure modes in this environment. First, brittle locators — any UI change, even a minor element repositioning, breaks tests and creates a maintenance queue that slows down engineering teams. Second, coverage decay — as features are added faster than test libraries are updated, coverage gaps widen silently until a defect escapes to production. Third, the feedback lag — traditional automation produces results at the end of a cycle, not inline with each code commit, which means issues are discovered long after the relevant context has left the developer's mind.

In practice, test maintenance has become the dominant time cost in many engineering organisations. Teams spend more time debugging broken test scripts than they spend writing new tests or catching new defects. This is the problem AI agents in QA testing are designed to eliminate structurally, not improve incrementally.

What AI Agents Actually Do in QA — Beyond the Hype

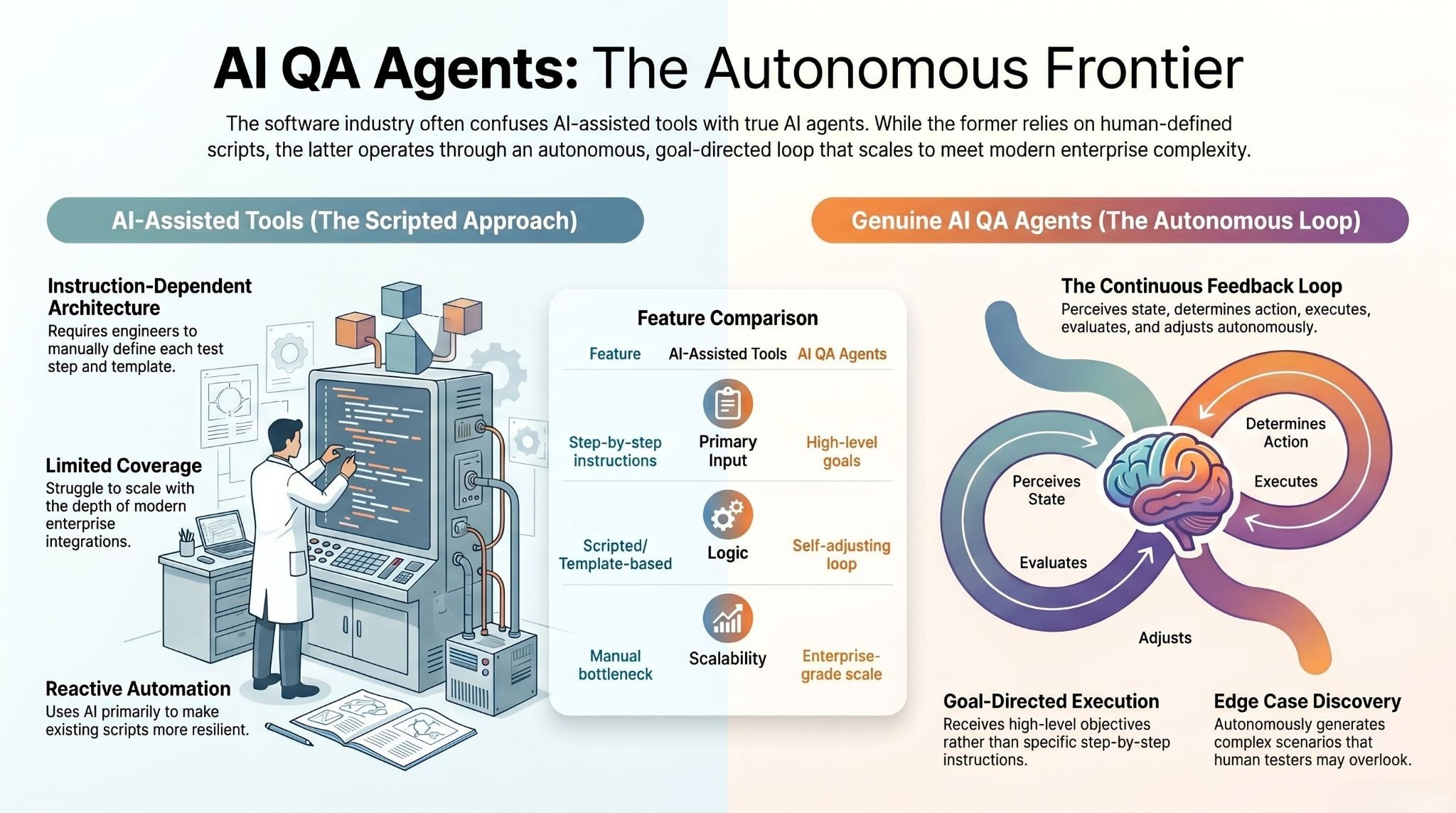

The term "AI agent" is applied loosely across the software testing industry. Chatbots that suggest test cases are called agents. Copilots that generate a Playwright script when prompted are called agents. Understanding what a genuine AI agent does — versus an AI-assisted tool — is the foundation for evaluating any platform.

A genuine AI QA agent operates across a goal-defined scope without requiring step-by-step instruction. It perceives the current state of an application, determines what action serves the testing objective, executes that action, evaluates the result, and adjusts its next action based on what it learned. This loop runs continuously, across thousands of test scenarios in parallel, and the agent updates its behaviour as the application changes.
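The perceive, evaluate, decide, act, adjust loop can be sketched in a few lines. This is a minimal illustration under stated assumptions: `ToyApp` is a hypothetical three-step checkout flow, and the agent's "decision" is a deliberately simple rule (try untried actions in order, remember dead ends). It stands in for the model-driven planning a real platform would use.

```python
from dataclasses import dataclass, field


@dataclass
class ToyApp:
    """Hypothetical application under test: a three-step checkout flow."""
    page: str = "cart"
    transitions: dict = field(default_factory=lambda: {
        ("cart", "click_checkout"): "shipping",
        ("shipping", "submit_address"): "payment",
        ("payment", "submit_card"): "confirmation",
    })

    def observe(self) -> str:
        return self.page

    def act(self, action: str) -> bool:
        """Apply an action; return False if it has no effect on the current page."""
        next_page = self.transitions.get((self.page, action))
        if next_page is None:
            return False
        self.page = next_page
        return True


def run_agent(app, goal_page, candidate_actions, max_steps=20):
    """Goal-directed loop (sketch): perceive, evaluate, decide, act, adjust."""
    dead_ends = set()  # (page, action) pairs learned to have no effect
    log = []
    for _ in range(max_steps):
        page = app.observe()                        # perceive
        if page == goal_page:                       # evaluate against the goal
            log.append(("goal_reached", page))
            return True, log
        options = [a for a in candidate_actions if (page, a) not in dead_ends]
        if not options:                             # nothing left to try here
            log.append(("stuck", page))
            return False, log
        action = options[0]                         # decide (simplest possible policy)
        succeeded = app.act(action)                 # act
        log.append((action, "ok" if succeeded else "no_effect"))
        if not succeeded:
            dead_ends.add((page, action))           # adjust future decisions
    return False, log


ok, log = run_agent(ToyApp(), "confirmation",
                    ["submit_card", "submit_address", "click_checkout"])
```

The log records every attempt, including dead ends. That trail is what later makes an agent's behaviour explainable.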

The difference in practice is significant. An AI-assisted tool requires an engineer to define each test step, then uses AI to make those steps more resilient or auto-generated from a template. An AI agent receives a goal — "validate that the invoice matching workflow in SAP executes accurately under all edge cases" — and autonomously determines how to test for that goal, including generating edge cases a human tester would not think to include.

This distinction matters enormously in enterprise environments where application complexity, integration depth, and compliance requirements make comprehensive scripted coverage practically impossible. The goal-directed agent model is the architecture best positioned to scale with that complexity.

Core Capabilities of an Enterprise AI QA Agent

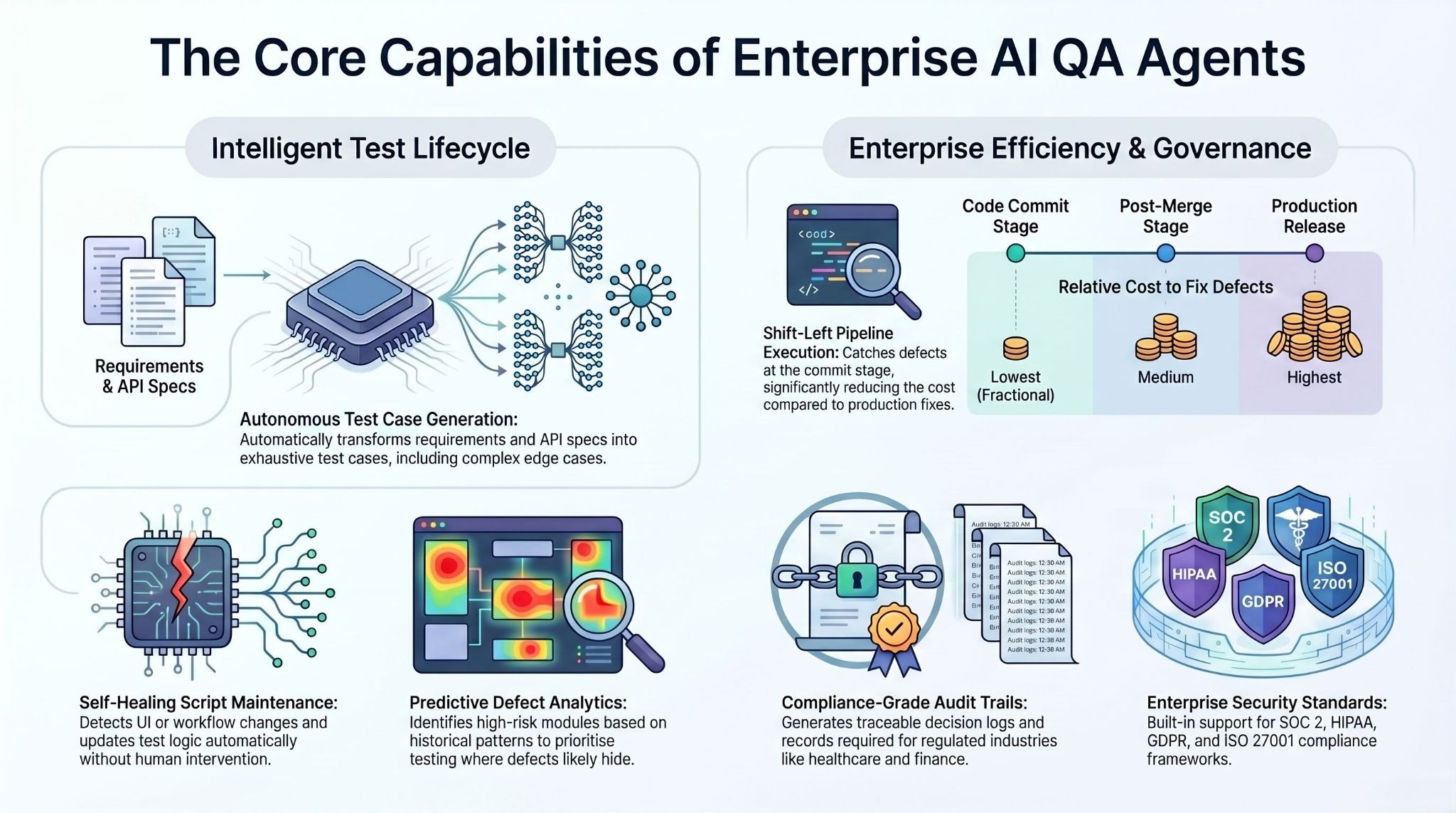

Autonomous Test Case Generation from Requirements

Enterprise AI QA agents ingest requirements directly — user stories, acceptance criteria, API specifications, or natural language descriptions of expected behaviour — and generate test cases from them. This eliminates the manual translation step between specification and test script that has historically been the bottleneck in test creation.

More importantly, AI agents generate test cases that human authors consistently miss: negative paths, edge cases derived from historical defect patterns, boundary conditions, and multi-step interaction scenarios that require state tracking across several application layers. In complex enterprise applications — particularly those integrating multiple backend systems like ERP, CRM, or supply chain platforms — this kind of exhaustive edge case coverage is practically impossible through manual authoring.
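To make "boundary conditions" and "negative paths" concrete, here is a deterministic sketch of classic boundary-value analysis applied to one acceptance criterion. It assumes the criterion has already been turned into a structured `NumericField`, which is a hypothetical type. In an agent platform, that extraction and the choice of which rules to apply would be model-driven rather than hard-coded.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NumericField:
    """Hypothetical structured form of a criterion such as
    'Quantity must be a whole number from 1 to 100'."""
    name: str
    minimum: int
    maximum: int


def boundary_cases(spec: NumericField) -> list:
    """Boundary-value analysis plus a few negative paths: (field, input, expected)."""
    cases = []
    for value in (spec.minimum - 1, spec.minimum, spec.minimum + 1,
                  spec.maximum - 1, spec.maximum, spec.maximum + 1):
        expected = "accept" if spec.minimum <= value <= spec.maximum else "reject"
        cases.append((spec.name, value, expected))
    # Negative paths that hand-written suites often omit: missing, empty, wrong type.
    for bad in (None, "", "abc", 1.5):
        cases.append((spec.name, bad, "reject"))
    return cases


quantity = NumericField("quantity", minimum=1, maximum=100)
cases = boundary_cases(quantity)
```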

Self-Healing Test Scripts

When an application changes — a form field is renamed, a navigation element is repositioned, an API response schema is updated — traditional test scripts fail and enter a maintenance queue. Self-healing AI agents detect these changes, identify the correct target using contextual understanding of the application's structure and intent, and update the test without human intervention.

Advanced self-healing goes beyond locator-level repair. Enterprise-grade agents that detect intent-level changes can recognise when a multi-step workflow has been restructured, not just that a button's ID has changed, and update the full test logic accordingly. This removes much of the maintenance overhead that consumes a large share of traditional QA engineering time.
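Here is a minimal sketch of locator-level healing. It assumes elements are available as attribute dictionaries, which simplifies a real DOM or accessibility tree. When the recorded `id` no longer matches, the function falls back to the candidate whose other recorded attributes overlap most. The function name, the attribute set, and the 0.5 threshold are illustrative assumptions. Intent-level healing of restructured workflows is well beyond what this shows.

```python
def heal_locator(recorded: dict, dom: list, threshold: float = 0.5):
    """Return (element, confidence) for the best match to a recorded element,
    or (None, best_score) if nothing is similar enough. Sketch only."""
    # 1. Try the original locator first.
    recorded_id = recorded.get("id")
    if recorded_id is not None:
        for element in dom:
            if element.get("id") == recorded_id:
                return element, 1.0

    # 2. Fall back to similarity over the other recorded attributes.
    keys = [k for k in recorded if k != "id"]
    best, best_score = None, 0.0
    for element in dom:
        matches = sum(1 for k in keys if element.get(k) == recorded[k])
        score = matches / len(keys) if keys else 0.0
        if score > best_score:
            best, best_score = element, score

    # 3. Only "heal" when confidence clears the threshold; otherwise report a failure.
    if best_score >= threshold:
        return best, best_score
    return None, best_score


recorded = {"id": "submit-btn", "tag": "button", "text": "Place order", "type": "submit"}
current_dom = [  # hypothetical page after a release renamed the button's id
    {"id": "cancel", "tag": "button", "text": "Cancel", "type": "button"},
    {"id": "checkout-submit", "tag": "button", "text": "Place order", "type": "submit"},
]
element, confidence = heal_locator(recorded, current_dom)
```

In practice, a healed locator would usually be logged for review rather than accepted silently, so that a wrong match does not mask a real regression.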

Predictive Defect Analytics

AI agents accumulate learning across test runs. They identify patterns in where defects have historically occurred — which modules, which integration points, which types of code changes — and apply that learning to prioritise testing effort. High-risk areas receive more intensive coverage before deployment. Low-risk stable modules require lighter touch.

This transforms QA from a uniform coverage exercise into a risk-weighted quality operation. Engineering teams direct human review and exploratory testing to the areas where AI-predicted defect probability is highest, and rely on automated agent coverage for areas with proven stability. The result is better defect detection with fewer total test runs — and faster cycle times.
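One simple way to turn defect history into a risk-weighted plan is to score each module on normalised historical defects and recent code churn. The sketch then splits a fixed test budget in proportion to those scores, with a small floor so stable modules still get a light touch. The weights (0.6 and 0.4), the floor, and the input fields are assumptions for illustration. Production systems would typically use richer features and a trained model.

```python
def risk_scores(history: dict, w_defects: float = 0.6, w_churn: float = 0.4) -> dict:
    """Score modules in [0, 1] from historical defects and recent churn (changed lines)."""
    max_defects = max(m["defects"] for m in history.values()) or 1
    max_churn = max(m["changed_lines"] for m in history.values()) or 1
    return {
        name: w_defects * m["defects"] / max_defects + w_churn * m["changed_lines"] / max_churn
        for name, m in history.items()
    }


def allocate_runs(scores: dict, total_runs: int, min_runs: int = 1) -> dict:
    """Split a test-run budget by risk; every module keeps at least `min_runs`.
    Rounding and the floor mean the total can differ slightly from `total_runs`."""
    total = sum(scores.values()) or 1
    return {name: max(min_runs, round(total_runs * s / total)) for name, s in scores.items()}


history = {  # hypothetical per-module history
    "billing": {"defects": 10, "changed_lines": 500},
    "search": {"defects": 2, "changed_lines": 100},
    "profile": {"defects": 0, "changed_lines": 0},
}
plan = allocate_runs(risk_scores(history), total_runs=120)
```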

CI/CD-Native Execution

Enterprise AI QA agents are designed to operate inside the delivery pipeline, not alongside it. Tests trigger on every code commit, pull request, and deployment, providing feedback to developers in the context where it is most actionable — before code merges, not after a release cycle ends.

This shift-left quality model fundamentally changes the economics of defect detection. A defect caught at the commit stage costs a fraction of what it costs to fix after merge, and a fraction again of what it costs once it reaches production. Across an enterprise shipping hundreds of deployments per week, the compounding financial benefit of early defect detection is one of the clearest ROI arguments for AI agent adoption in QA.
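Running every suite on every commit quickly becomes too slow, so CI-native setups commonly narrow execution to the suites a change affects (test impact analysis). The sketch below shows this. It assumes a hand-maintained, hypothetical mapping from path prefixes to suites, plus a list of changed files such as `git diff --name-only` would produce. It falls back to the full suite whenever a change touches an unmapped path.

```python
def select_suites(changed_files, ownership, full_suite):
    """Return the set of test suites to run for a commit or pull request."""
    selected = set()
    for path in changed_files:
        matched = {suite for prefix, suite in ownership.items() if path.startswith(prefix)}
        if not matched:
            # Unknown impact: fail safe and run everything.
            return set(full_suite)
        selected |= matched
    return selected


OWNERSHIP = {  # hypothetical path-prefix -> suite mapping
    "services/billing/": "billing_tests",
    "services/search/": "search_tests",
    "ui/checkout/": "checkout_e2e",
}
FULL_SUITE = ["billing_tests", "search_tests", "checkout_e2e"]
```

The fail-safe default trades speed for safety. A commit touching an unmapped file costs a full run rather than risking a missed regression.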

Governance, Audit Trails, and Compliance

In regulated industries — financial services, healthcare, pharmaceutical, government — QA is not just a quality function. It is a compliance function. Every test execution, every result, every remediation must be documented in a way that satisfies regulatory audit requirements. AI agents in enterprise QA environments must generate auditable records of what was tested, what the agent observed, what decisions it made, and what actions resulted.

This is where many vendor claims break down in practice. An agent that produces fast results but cannot explain its decisions or generate compliance-grade audit trails is not deployable in a regulated enterprise environment. Platforms with built-in governance layers — traceable decision logs, role-based access controls, exception and approval workflows — are not optional for financial services, healthcare, or pharmaceutical buyers. They are baseline requirements.
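To show what an auditable record of what was tested, what the agent observed, what it decided, and what action resulted can look like at the data level, here is a minimal hash-chained, append-only log. Each entry stores those four elements plus the hash of the previous entry, so editing an earlier entry breaks verification. The class and field names are hypothetical. A real deployment would also need durable, access-controlled storage, retention policies, and an external anchor for the latest hash (a chain alone cannot detect the whole log being rewritten). This sketch covers none of those.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only, hash-chained audit trail (illustrative sketch)."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(body: dict) -> str:
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

    def record(self, test_id, observation, decision, action) -> dict:
        body = {
            "test_id": test_id,
            "observation": observation,   # what the agent saw
            "decision": decision,         # what it decided
            "action": action,             # what it did
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "prev_hash": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check each link to the previous entry."""
        prev = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or self._digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


audit = AuditLog()
audit.record("TC-101", "checkout button id changed", "healed locator by attributes",
             "clicked checkout-submit")
audit.record("TC-102", "invoice total mismatch", "flagged as defect",
             "escalated for human approval")
```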

assistents.ai provides enterprise AI agents for quality assurance, workflow automation, and operational intelligence, with SOC 2, HIPAA, GDPR, and ISO 27001 compliance built in. The platform is deployed across regulated industries including financial services, healthcare, manufacturing, and logistics. Learn more about the Enterprise AI Agent Platform or explore industry-specific QA deployment models.

AI QA Agents in Practice: Real Enterprise Deployment Results

The most meaningful evidence for AI agents in QA testing comes not from vendor benchmarks but from actual deployment patterns across production enterprise environments. The following deployment snapshots represent real implementations across distinct enterprise contexts.

Financial services — omnichannel workflow validation.

A global financial services enterprise deployed AI agents to validate omnichannel customer service workflows across chat, email, phone, and digital banking interactions. The deployment covered intake routing, agent-assist summarisation, next-best-action logic, and SLA monitoring — a test environment that would require hundreds of manual test cases to cover comprehensively.

The AI agents automated case handling validation at scale, with auditable workflow records satisfying compliance requirements. The outcome: significantly faster case handling and measurable reduction in operational load through automation of quality checks that had previously required manual QA sampling.

Enterprise retail — inventory and knowledge system validation.

A national retail operation running 700-plus stores deployed AI agents across voice support, inventory intelligence, and knowledge management systems — each requiring continuous QA against real-time data at high transaction volumes. The AI agent architecture included Hindi and English language support validation, pricing and stock accuracy testing per store, and POS and SOP document quality checks.

The outcome was reduced manual helpdesk burden, improved store-level inventory accuracy, and faster onboarding validation through on-demand training system testing.

Logistics and supply chain — terminal and rail system QA.

A global ports and logistics operator reporting record revenue of over $20 billion in FY2024 deployed AI agents to validate terminal workflow digitisation, yard and rail operational dashboards, and rail scheduling exception management.

In an environment where operational errors translate directly to throughput losses and contractual penalties, the QA requirement was high-precision and continuous. The agents delivered improved operational visibility, higher predictability of terminal-to-rail throughput, and more efficient coordination across terminal and inland logistics systems.

Healthcare staffing — compliance and credential workflow QA.

A healthcare staffing platform connecting nursing professionals with healthcare facilities deployed AI agents to validate matching, scheduling, and compliance workflows — an environment where QA failures carry regulatory and patient safety consequences.

The agents tested talent onboarding, credential capture, facility staffing request intake, matching logic, scheduling and notification systems, and compliance reporting. The outcome: faster fill cycles, better workforce utilisation, and improved staffing responsiveness — all dependent on validated QA infrastructure.

SAP integration — order processing automation QA.

An enterprise manufacturing operation deploying automated SAP sales order creation via AI agents required continuous QA against order trigger interpretation, validation logic, exception approval workflows, and audit reconciliation reporting.

The AI agents validated the replacement of an end-of-life OpenText ECR environment, testing each component of the agentic order creation pipeline with auditability built in. The outcome was reduced manual order processing dependency, faster order-to-confirm cycles, and improved auditability for sales order creation and exceptions.

Across these deployments, a consistent pattern emerges: the highest ROI from AI QA agents comes not from replacing manual testers on simple workflows, but from achieving comprehensive coverage on complex, multi-system, compliance-sensitive workflows where manual QA was always inadequate in scope — not just slow in speed.

Industries Seeing the Highest ROI from AI-Driven QA

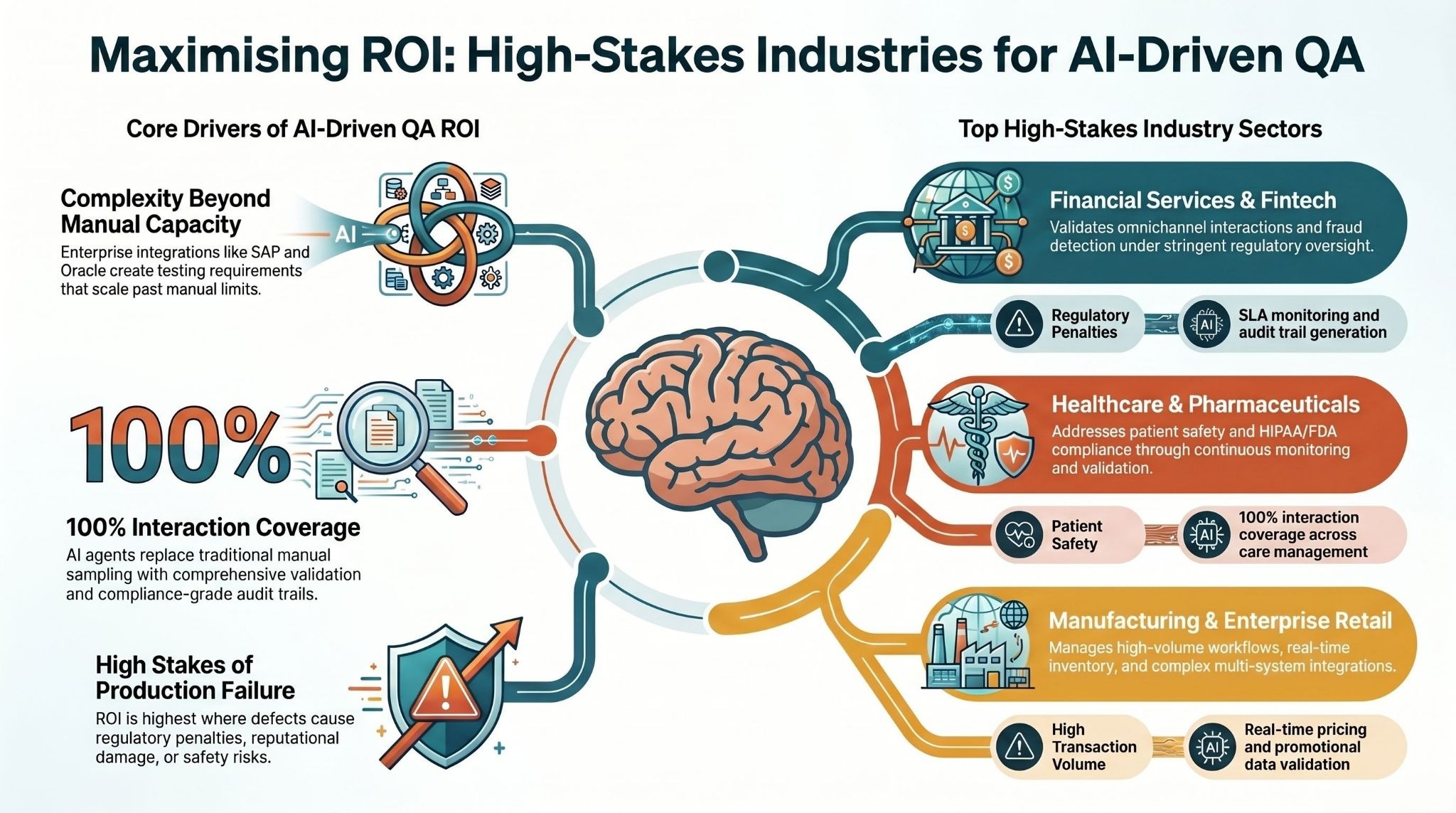

Not all industries benefit equally from AI agents in QA testing. The highest ROI concentrates in environments where application complexity is high, compliance requirements are strict, integration depth is significant, and the cost of a defect reaching production is severe.

Financial services presents the strongest overall ROI case. Banking, insurance, and fintech applications integrate multiple core systems — account management, fraud detection, compliance monitoring, customer communication — and operate under stringent regulatory requirements.

The cost of a compliance failure in production is not just remediation cost; it includes regulatory penalties, reputational damage, and customer attrition. AI agents that cover omnichannel interaction validation, SLA monitoring, and audit trail generation deliver outsized value in this environment.

Healthcare and pharmaceutical operations combine high integration complexity with the highest possible stakes for QA failure. Patient safety, HIPAA compliance, and FDA regulatory requirements create a QA environment where traditional manual sampling is structurally inadequate.

AI agents delivering 100 percent interaction coverage with compliance-grade audit trails address this inadequacy directly. The deployment results across healthcare staffing, inpatient care management, and pharmaceutical sourcing automation confirm this pattern.

Manufacturing and supply chain applications managing high-volume operational workflows — procurement, inventory, order management, logistics — require QA infrastructure that can keep pace with continuous system integration and data complexity.

SAP, Oracle, ServiceNow, and other enterprise system integrations create dense testing requirements that scale beyond manual QA capacity. AI agents designed for multi-system workflow validation, with exception approval and audit trail generation, match this requirement profile precisely.

Retail at enterprise scale, particularly operations with hundreds of physical locations integrated with digital channels, real-time inventory systems, and point-of-sale infrastructure, presents a QA surface area that manual testing cannot adequately cover.

The combination of high transaction volume, multilingual support requirements, and continuous pricing and promotional data changes makes AI agent-based QA the only operationally viable model.

Energy and smart grid operations, including transmission monitoring and smart city infrastructure, require continuous QA of data ingestion, anomaly detection, predictive analytics, and automated alerting systems. Failures in these systems carry operational and public safety consequences. AI agents providing continuous monitoring and validation of these workflows deliver measurable reliability improvements.

How to Evaluate AI QA Agents: A Decision-Maker's Checklist

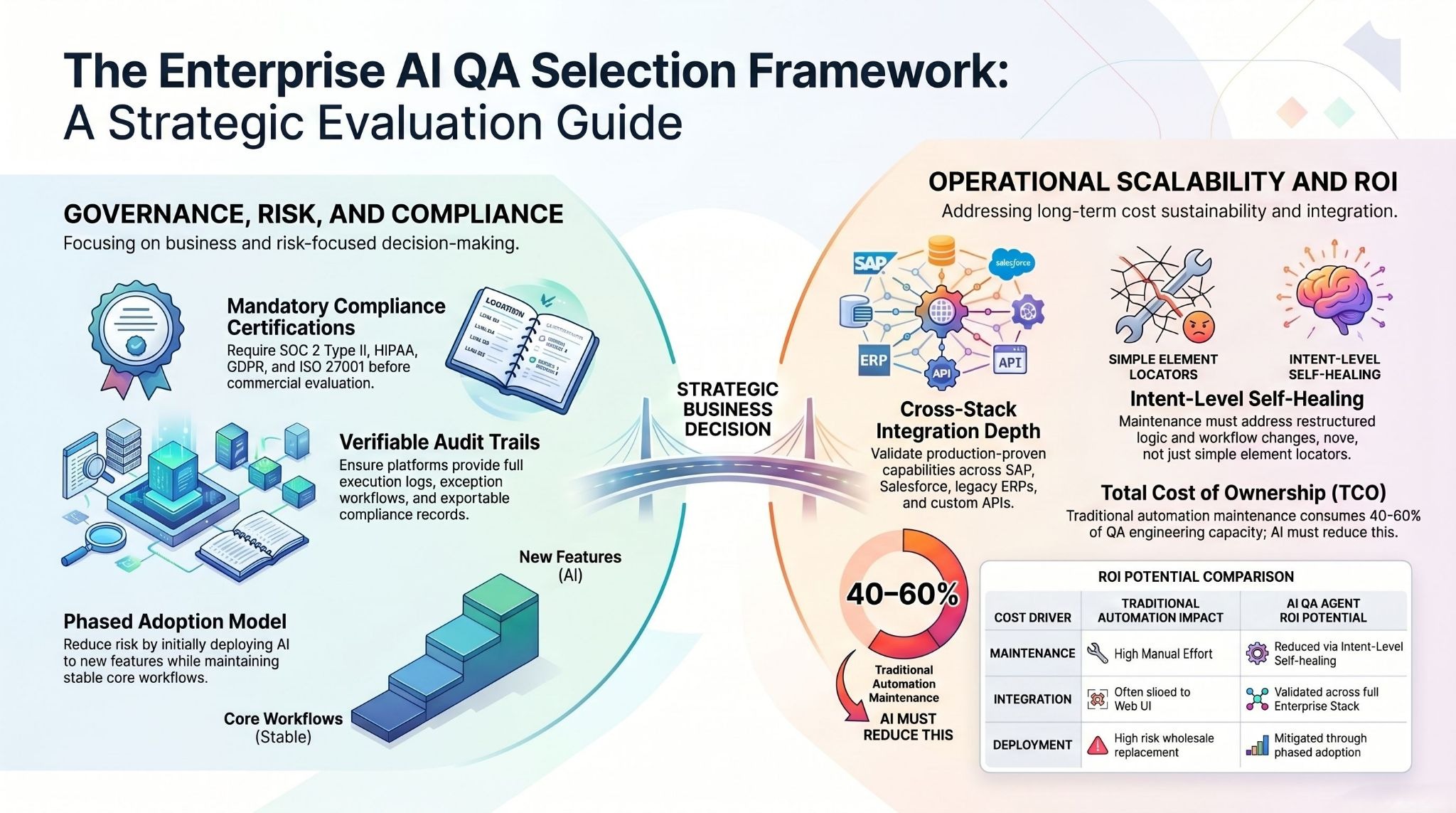

Enterprise AI QA agent evaluation is frequently misframed as a technical decision. It is a business and risk decision, and should be evaluated accordingly. The following checklist reflects the criteria that matter most for enterprise deployment success.

Does it handle your integration stack, not just generic web applications?

Most AI QA agent vendors demonstrate capability on web UI testing. Enterprise environments require validation across SAP, Salesforce, ServiceNow, Oracle, legacy ERP systems, custom APIs, and mobile applications simultaneously. Confirm that the platform has documented, production-proven integration capability across your specific stack before proceeding beyond evaluation.

What is the governance and audit trail model?

In any regulated environment, the answer to "how does the agent document its decisions?" must be complete before deployment. Platforms should provide: full logs of test execution decisions, exception and escalation workflows with human approval steps, role-based access controls, and exportable audit records in formats acceptable to your compliance function. Absence of a credible answer here is a disqualifying condition for regulated industries.

What compliance certifications does the platform carry?

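SOC 2 Type II attestation and ISO 27001 certification, together with demonstrable HIPAA and GDPR compliance, are baseline requirements for enterprise AI deployments in most regulated industries. Verify that the vendor holds these credentials today (not that it is "working toward" them) before evaluating commercial terms. HIPAA and GDPR are regulations rather than certification programmes, so for those ask for evidence of compliance, such as a signed business associate agreement (HIPAA) or a data processing agreement (GDPR).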

How does it handle the maintenance problem at scale?

The primary cost of traditional automation is not initial test creation; it is ongoing maintenance as applications change. Confirm that the self-healing model addresses intent-level changes, not just locator-level selector updates. Ask specifically: when a multi-step workflow is restructured, does the agent update test logic, or does it require human intervention?

What does implementation actually look like at your scale?

Enterprise deployments with significant integration depth and compliance requirements have failed when platform implementation timelines were underestimated. Ask for reference deployments of comparable scope — similar integration count, similar compliance environment, similar organisation size — and validate the actual deployment timeline and effort involved.

What is the total cost model, including maintenance overhead?

AI agent platforms are often compared to traditional automation on licensing cost alone. The complete cost comparison must include: engineer time currently spent on test maintenance (often 40–60 percent of QA engineering capacity in traditional automation environments), defect escape costs, and release delay costs attributable to QA cycle time. The ROI argument for AI agents in QA becomes compelling quickly when the full cost of traditional automation is accounted for.
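The arithmetic behind that comparison is simple enough to sketch. Only the 40 to 60 percent maintenance share comes from the text above. Every other input (team size, loaded cost, defect counts, platform cost, and the assumed reductions) is a made-up placeholder to replace with your own baseline.

```python
from dataclasses import dataclass


@dataclass
class QACosts:
    engineers: int
    loaded_cost_per_engineer: float     # annual, hypothetical
    maintenance_share: float            # e.g. 0.40 to 0.60 in traditional automation
    escaped_defects_per_year: int
    cost_per_escaped_defect: float      # hypothetical average
    release_delay_cost_per_year: float  # hypothetical


def traditional_annual_cost(c: QACosts) -> float:
    """Maintenance time + escaped-defect cost + release-delay cost."""
    maintenance = c.engineers * c.loaded_cost_per_engineer * c.maintenance_share
    escapes = c.escaped_defects_per_year * c.cost_per_escaped_defect
    return maintenance + escapes + c.release_delay_cost_per_year


def agent_annual_cost(c: QACosts, platform_cost: float, maintenance_reduction: float,
                      escape_reduction: float, delay_reduction: float) -> float:
    """Same three cost categories after assumed fractional reductions, plus platform cost."""
    maintenance = (c.engineers * c.loaded_cost_per_engineer * c.maintenance_share
                   * (1 - maintenance_reduction))
    escapes = c.escaped_defects_per_year * c.cost_per_escaped_defect * (1 - escape_reduction)
    delay = c.release_delay_cost_per_year * (1 - delay_reduction)
    return platform_cost + maintenance + escapes + delay


baseline = QACosts(engineers=10, loaded_cost_per_engineer=150_000, maintenance_share=0.5,
                   escaped_defects_per_year=20, cost_per_escaped_defect=25_000,
                   release_delay_cost_per_year=200_000)
before = traditional_annual_cost(baseline)
after = agent_annual_cost(baseline, platform_cost=300_000, maintenance_reduction=0.5,
                          escape_reduction=0.3, delay_reduction=0.25)
```

With these placeholder inputs the annual figure moves from 1,450,000 to 1,175,000. The structure is what matters: it forces licensing cost to be weighed against all three cost categories, not against licensing alone.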

Does the platform support a phased adoption model?

Wholesale replacement of QA infrastructure carries implementation risk. Platforms that support a phased model — AI agents initially covering new features and high-risk integration points while existing automation handles stable core workflows — reduce deployment risk while allowing teams to validate agent performance before full migration.

How to Implement AI Agents in Your QA Pipeline: Step by Step

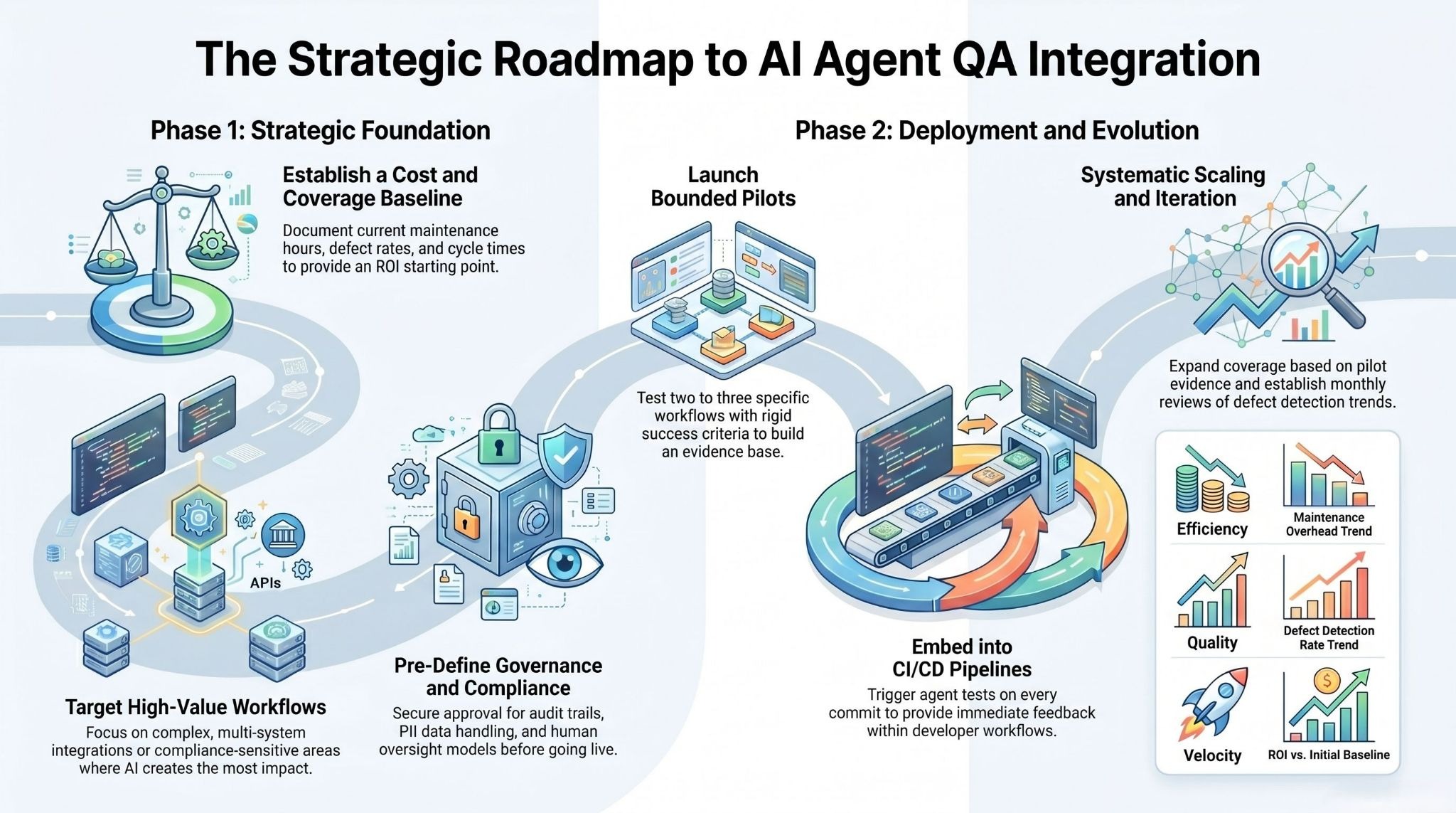

Implementation success in enterprise AI QA deployments correlates more strongly with approach than with platform selection. The following implementation sequence reflects patterns from production enterprise deployments.

Step 1 — Baseline your current QA cost and coverage accurately.

Before selecting a platform or scoping deployment, generate a complete picture of what QA actually costs today. This includes: engineer time on test maintenance per sprint, percentage of defects caught in QA versus production, average QA cycle time per release, and percentage of application workflows with active test coverage. This baseline is both the ROI starting point and the governance document for deployment decisions.
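Most teams can compute these baseline figures from data they already hold. The sketch below assumes per-sprint records of QA hours, maintenance hours, defect counts split by where each defect was found, and QA cycle time. The record layout is hypothetical and should be adapted to whatever your tracker exports.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class SprintRecord:  # hypothetical layout
    qa_hours_total: float
    qa_hours_maintenance: float     # hours spent fixing or updating existing tests
    defects_found_in_qa: int
    defects_found_in_prod: int      # escaped defects attributed to this sprint
    qa_cycle_days: float            # QA cycle time for the sprint's release


def qa_baseline(sprints: list, workflows_total: int, workflows_covered: int) -> dict:
    """Summarise current QA cost and coverage; requires at least one sprint record."""
    total_hours = sum(s.qa_hours_total for s in sprints)
    maintenance_hours = sum(s.qa_hours_maintenance for s in sprints)
    qa_defects = sum(s.defects_found_in_qa for s in sprints)
    prod_defects = sum(s.defects_found_in_prod for s in sprints)
    all_defects = qa_defects + prod_defects
    return {
        "maintenance_hours_per_sprint": maintenance_hours / len(sprints),
        "maintenance_share": maintenance_hours / total_hours if total_hours else 0.0,
        "defect_escape_rate": prod_defects / all_defects if all_defects else 0.0,
        "avg_qa_cycle_days": mean(s.qa_cycle_days for s in sprints),
        "workflow_coverage": workflows_covered / workflows_total if workflows_total else 0.0,
    }
```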

Step 2 — Identify your highest-value deployment targets.

Start with the workflows where AI agent deployment delivers the highest immediate value, not the broadest coverage. Highest-value targets share characteristics: they involve multi-system integration, they have historically high defect rates or maintenance costs, they are compliance-sensitive, or they have been inadequately covered by manual testing due to complexity. These are the workflows where agent capability creates the largest delta versus the status quo.

Step 3 — Establish governance and compliance requirements before go-live.

In regulated environments, the governance architecture must be defined and approved before agents enter any production-adjacent testing environment. This includes: audit trail format and retention, exception approval workflows, data handling for test data that includes PII or PHI, and the human oversight model that governs agent decision-making in edge cases.
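For test data that includes PII or PHI, one common pattern is deterministic pseudonymisation. Sensitive fields are replaced with keyed hashes, so the same input always maps to the same token. This preserves joins across integrated systems without exposing the raw value. The sketch uses Python's standard `hmac` module. The field list and key handling are assumptions. Pseudonymised data can still count as personal data under GDPR, so this complements the governance review rather than replacing it.

```python
import hashlib
import hmac

PII_FIELDS = {"name", "email", "phone", "ssn"}  # hypothetical; derive from your data classification


def pseudonymize(record: dict, key: bytes, pii_fields=PII_FIELDS) -> dict:
    """Replace PII values with stable keyed tokens; leave other fields untouched."""
    masked = {}
    for field_name, value in record.items():
        if field_name in pii_fields and value is not None:
            digest = hmac.new(key, str(value).encode("utf-8"), hashlib.sha256).hexdigest()
            masked[field_name] = f"{field_name}_{digest[:12]}"
        else:
            masked[field_name] = value
    return masked


TEST_KEY = b"test-environment-key"  # placeholder; in practice load from a secrets manager
source = {"name": "Jane Doe", "email": "jane@example.com", "order_total": 42.5}
masked = pseudonymize(source, TEST_KEY)
```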

Step 4 — Run a bounded pilot with defined success criteria.

A pilot scoped to two to three specific workflows, with clear pre-defined success criteria — defect detection rate, false positive rate, maintenance time reduction, cycle time impact — provides the evidence base for full deployment decisions. Avoid open-ended pilots with vague success criteria; they produce inconclusive results and delay deployment decisions.
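Writing the success criteria down as data before the pilot starts makes the go/no-go call mechanical. The thresholds below are hypothetical and chosen purely for illustration. The metric names mirror the four criteria listed above, and `cycle_time_change` is a fractional change where negative means faster.

```python
import operator

# Hypothetical thresholds, agreed before the pilot begins.
PILOT_CRITERIA = {
    "defect_detection_rate": (operator.ge, 0.85),
    "false_positive_rate": (operator.le, 0.10),
    "maintenance_time_reduction": (operator.ge, 0.30),
    "cycle_time_change": (operator.le, -0.15),
}


def evaluate_pilot(results: dict, criteria: dict = PILOT_CRITERIA):
    """Return ("proceed" | "do not proceed yet", per-metric report)."""
    report = {}
    for metric, (compare, threshold) in criteria.items():
        if metric not in results:
            report[metric] = "missing"  # an unmeasured criterion is not a pass
        else:
            report[metric] = "pass" if compare(results[metric], threshold) else "fail"
    decision = "proceed" if all(v == "pass" for v in report.values()) else "do not proceed yet"
    return decision, report
```

A missing measurement counts against proceeding. This keeps a pilot from "passing" by skipping an inconvenient metric.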

Step 5 — Integrate into CI/CD before expanding coverage.

The highest leverage point for AI QA agents is inline CI/CD integration — tests triggering on every commit and pull request, with results visible to developers in the context of their current work. Establishing this integration pattern during the pilot phase creates the infrastructure for coverage expansion without architectural rework.

Step 6 — Expand coverage systematically based on pilot evidence.

Using pilot results as the scaling model, expand agent coverage systematically — additional workflows, additional integration points, additional compliance scenarios — using the governance and integration architecture established in the pilot. Coverage expansion should be driven by the same high-value targeting logic used to select the initial pilot scope.

Step 7 — Measure, report, and iterate on a defined cadence.

AI QA agent deployments improve with use. Agents that have run more test cycles have identified more defect patterns and built more accurate predictive models. Establish a monthly review cadence covering: defect detection rate trend, maintenance overhead trend, coverage expansion progress, and ROI versus baseline. This cadence also provides the reporting structure for compliance and governance stakeholders.
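For the monthly review, it helps to compare each metric with its baseline and record whether it moved in the desired direction. For some metrics (maintenance share, escape rate, cycle time), lower is better. A minimal sketch; the metric names and the direction table are assumptions.

```python
# Hypothetical metric names; lower is better for these, higher for everything else.
LOWER_IS_BETTER = {"maintenance_share", "defect_escape_rate", "avg_qa_cycle_days"}


def monthly_review(baseline: dict, current: dict) -> dict:
    """Relative change versus baseline per metric, with an improved/not-improved flag."""
    review = {}
    for metric, base in baseline.items():
        now = current.get(metric)
        if now is None or base == 0:
            review[metric] = None  # missing data or undefined relative change
            continue
        change = (now - base) / base
        improved = change < 0 if metric in LOWER_IS_BETTER else change > 0
        review[metric] = (round(change, 3), "improved" if improved else "not improved")
    return review
```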

The Path Forward for Enterprise QA Teams

The market context for enterprise AI QA agents is clear. Development velocity powered by AI-assisted coding is increasing the volume and speed of software change faster than traditional QA infrastructure can track.

The teams that adapt their quality infrastructure to match this velocity — deploying AI agents that test at the speed of development, across the full integration surface area of modern enterprise applications — will accumulate a compounding advantage: faster releases, fewer production defects, lower QA operational cost, and the ability to maintain compliance coverage without proportional headcount growth.

The teams that do not adapt will face a widening gap between development speed and quality confidence — a gap that eventually expresses itself as production incidents, compliance failures, or the inability to release at the cadence that competitive markets require.

The case for AI agents in enterprise QA testing is not a prediction about where the market is heading. It is a description of what the leading deployments have already demonstrated across financial services, healthcare, manufacturing, retail, and logistics environments. The technology is ready. The governance frameworks are established. The deployment models are proven. What remains is execution.

Ready to see AI agents in your QA pipeline?

If your engineering team is spending more time maintaining test scripts than catching defects — or if your current QA coverage simply cannot keep pace with your release cadence — it is time to see what a purpose-built enterprise AI agent can do in your environment.

assistents.ai deploys in four weeks, integrates with your existing stack including SAP, Salesforce, ServiceNow, and 300+ other enterprise systems, and comes with SOC 2, HIPAA, GDPR, and ISO 27001 compliance built in from day one.

No rip-and-replace. No months-long implementation. Just comprehensive, self-healing, audit-ready QA coverage that scales with your development velocity.

Book a demo and see a live deployment walkthrough tailored to your industry and integration environment.

Frequently Asked Questions

What is an AI agent for QA testing?

An AI agent for QA testing is an autonomous software system that perceives the state of an application, generates and executes test cases, evaluates results, and adapts its behaviour across subsequent test runs — without requiring step-by-step human instruction between cycles. Unlike AI-assisted testing tools, which still require engineers to define each test step, AI agents operate against goal-level objectives: validating that a workflow executes correctly under all conditions, including edge cases the agent discovers autonomously.

How do AI agents improve test coverage in enterprise applications?

AI agents improve test coverage in three primary ways. First, they generate test cases from requirements documents, user stories, and API specifications — including negative paths and edge cases that manual test authors consistently under-represent. Second, they operate continuously across CI/CD pipelines, covering every commit rather than periodic regression cycles. Third, they apply predictive defect analytics to prioritise coverage intensity in historically high-risk areas, achieving better effective coverage per test run than uniform scripted approaches.

Can AI agents replace QA engineers?

No, and the most effective enterprise deployments do not attempt this. AI agents take over the repetitive, maintenance-intensive work that consumes a large share of QA engineering time: writing and maintaining test scripts, running regression suites, and triaging false positives. QA engineers shift toward higher-value work: defining quality objectives, overseeing agent outputs, designing exploratory testing for edge cases agents do not cover, and managing governance and compliance requirements. The result is higher-quality output without proportional headcount growth, not headcount elimination.

How long does it take to deploy AI agents for QA in an enterprise environment?

For enterprise environments with significant integration depth and compliance requirements, production-ready deployments typically take four to six weeks for an initial bounded scope — significantly faster than traditional QA infrastructure implementations, which often require eight to twelve weeks for comparable scope. The key variables affecting timeline are integration complexity, the number of compliance requirements that must be addressed in governance architecture, and the maturity of existing CI/CD infrastructure.

What compliance standards do enterprise AI QA agents need to meet?

In regulated industries, AI QA agents must meet the same compliance standards as any other enterprise software handling sensitive data in the testing environment. A SOC 2 Type II attestation is baseline for enterprise software vendors. Healthcare environments require HIPAA compliance for any testing involving patient data or protected health information. Multinational deployments require GDPR compliance for data handling in test environments. Many enterprise procurement requirements also include ISO 27001 certification. Verify all applicable certifications before deployment.

What is self-healing test automation and how does it work?

Self-healing test automation is the capability of an AI testing agent to automatically detect, diagnose, and repair broken tests when application changes cause test failures. When an application element changes — a button's identifier is updated, a form field is moved, an API response schema is modified — a self-healing agent detects the mismatch between the test's expectation and the application's current state, identifies the correct target using contextual analysis of the application structure and the test's intent, and updates the test. Advanced self-healing extends to intent-level changes: when a multi-step workflow is restructured, the agent updates the full test logic, not just the element locator.

What ROI should enterprise teams expect from AI QA agents?

ROI realisation depends on the baseline cost of current QA operations and the scope of deployment. The clearest ROI driver is the reduction in test maintenance overhead: in traditional automation environments, maintenance consumes 40 to 60 percent of QA engineering capacity. Reducing this overhead redirects engineering time to higher-value work. Secondary ROI drivers include earlier defect detection (defects caught at commit stage cost a fraction of defects caught post-deployment), expanded coverage without proportional headcount increase, and reduced release cycle time from faster QA feedback loops. Enterprise teams with significant integration complexity typically see positive ROI within three to six months of production deployment.

How do AI QA agents handle integrations with enterprise systems like SAP, Salesforce, or ServiceNow?

Enterprise AI QA agents must validate workflows that span multiple integrated systems — not just individual application interfaces. Platform capability for SAP, Salesforce, ServiceNow, and similar enterprise system integration means: the ability to validate end-to-end workflows across system boundaries, generate test data that satisfies the constraints of each integrated system, and produce audit records that capture the full workflow validation chain. When evaluating platforms, require demonstrated, production-proven integration capability for your specific stack — not generic API integration claims.
