Single Agent vs Multi-Agent AI: How to Choose the Right Architecture for Your Enterprise (2026 Guide)

The short answer: Single-agent AI systems handle roughly 80% of enterprise use cases at lower cost and with simpler implementation. Multi-agent architectures become necessary when your workflows cross three or more distinct domains, require parallel execution across systems, or operate under compliance boundaries that demand separate audit trails per function.

If you are evaluating AI agents for your enterprise right now, that decision — one agent or many — shapes everything that follows: your cost structure, your governance model, your deployment timeline, and your ceiling for capability.

This guide gives you a framework built from real enterprise deployments across financial services, logistics, retail, and healthcare, not from benchmarks run in a lab.

What is a single-agent AI system?

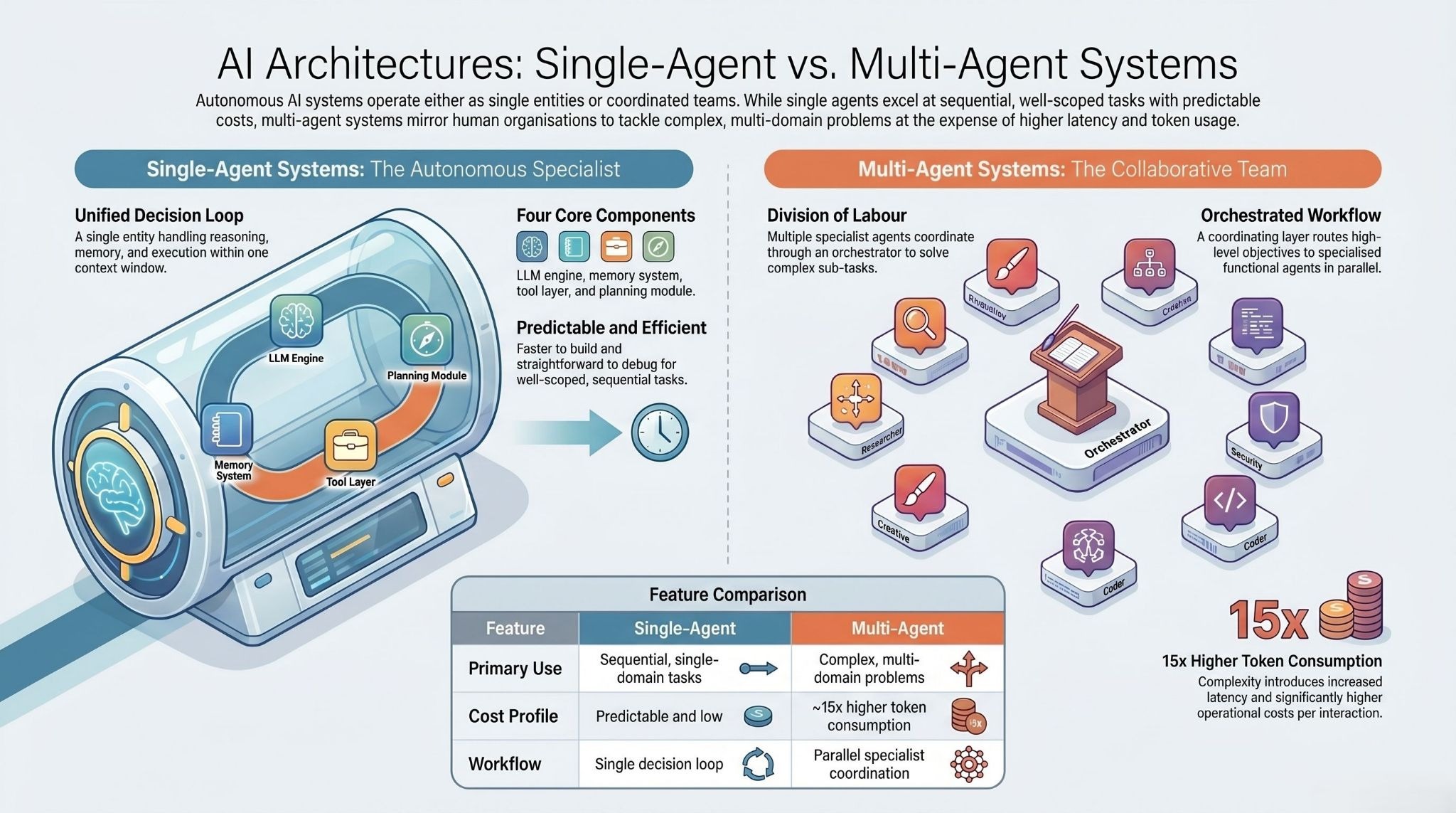

A single-agent AI system is one AI entity that handles reasoning, memory, tool use, and execution within a single decision loop. It receives a task, plans how to complete it, calls the tools or APIs it needs, and delivers an output — all within one context window, without coordinating with other agents.

This is not the same as a simple chatbot. A capable single agent can analyse large datasets, process documents, route customer queries with contextual understanding, generate reports, and trigger downstream actions in connected systems — all autonomously, with minimal human oversight.

A functional single-agent system has four components working together: an LLM reasoning engine that processes inputs and generates decisions; a memory system that maintains context across a session or across sessions; a tool integration layer that connects the agent to external APIs, databases, and enterprise systems; and a planning module that breaks complex goals into executable steps.
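
The four components can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the `SingleAgent` class, its method names, and the two lambda "tools" are hypothetical, and the reasoning step is a placeholder where a real system would call an LLM.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class SingleAgent:
    # Tool integration layer: tool name -> callable (hypothetical tools).
    tools: dict[str, Callable[[str], str]]
    # Memory system: a plain list standing in for session memory.
    memory: list[str] = field(default_factory=list)

    def plan(self, goal: str) -> list[str]:
        # Planning module: a real agent would ask the LLM for steps.
        # Assumption: the plan is every registered tool, in order.
        return list(self.tools)

    def reason(self, step: str, payload: str) -> str:
        # Reasoning engine placeholder: a real agent would call an LLM here.
        return self.tools[step](payload)

    def run(self, goal: str, payload: str) -> str:
        # One decision loop: plan, act, remember, repeat.
        for step in self.plan(goal):
            payload = self.reason(step, payload)
            self.memory.append(f"{step}: {payload}")
        return payload


agent = SingleAgent(tools={
    "extract": lambda doc: doc.strip().lower(),
    "validate": lambda text: text if text else "INVALID",
})
result = agent.run("process invoice", "  Invoice 42  ")
```

Everything happens inside one object and one loop, which is why this shape is comparatively easy to debug: the whole history of the task sits in `agent.memory`.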

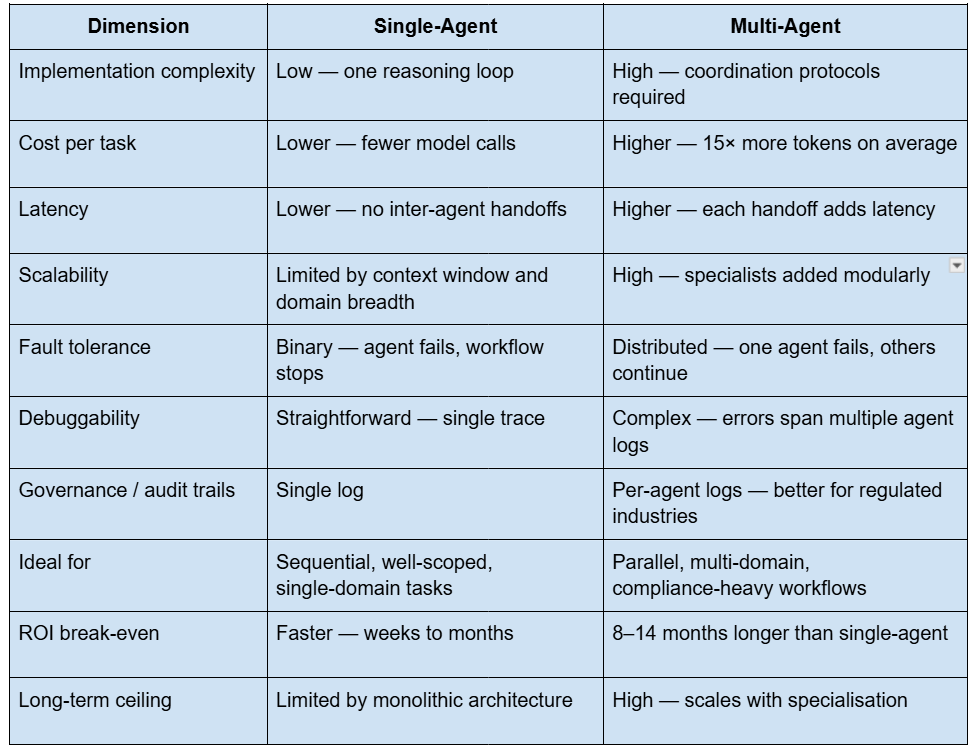

Single agents are fast to build, straightforward to debug, and predictable in cost. For well-scoped, sequential tasks within a single domain, they are often the right and sufficient answer.

What is a multi-agent AI system?

A multi-agent AI system coordinates multiple AI agents — each with its own reasoning loop, memory, and tool access — to complete tasks that no single agent could handle efficiently alone. The agents communicate through defined protocols, dividing complex problems into sub-tasks matched to each agent's specialisation.

The architecture mirrors how human organisations divide labour. A logistics operation does not ask one person to handle procurement, compliance, customs documentation, and fleet scheduling simultaneously. It builds teams. Multi-agent AI does the same thing, at machine speed.

In practice, a multi-agent system typically has an orchestrator agent — a coordinating layer that receives the high-level objective and routes sub-tasks to specialist agents. Those specialists might include a document extraction agent, a compliance validation agent, a data analysis agent, and a reporting agent, each optimised for its function and operating in parallel where tasks allow.
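
A rough sketch of that routing pattern, assuming the specialists are independent and can run concurrently. The agent functions, the `SPECIALISTS` registry, and the `orchestrate` name are all hypothetical; the `asyncio.sleep` calls stand in for model and tool latency.

```python
import asyncio


async def extraction_agent(task: str) -> str:
    await asyncio.sleep(0.01)  # stands in for model and tool latency
    return f"extracted:{task}"


async def compliance_agent(task: str) -> str:
    await asyncio.sleep(0.01)
    return f"validated:{task}"


# Hypothetical registry of specialist agents known to the orchestrator.
SPECIALISTS = {"extract": extraction_agent, "compliance": compliance_agent}


async def orchestrate(objective: str) -> dict[str, str]:
    # Fan the objective out to every specialist and wait for all of them.
    names = list(SPECIALISTS)
    results = await asyncio.gather(*(SPECIALISTS[n](objective) for n in names))
    return dict(zip(names, results))


report = asyncio.run(orchestrate("contract-17"))
```

In a real deployment the orchestrator would usually decide which specialists a task needs rather than calling all of them, and each specialist would hold its own context and tools.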

The coordination layer is also what introduces the architecture's main costs: latency at every handoff, explicit state management between agents, and much higher token consumption. Anthropic has reported that its multi-agent systems use roughly 15 times the tokens of a standard chat interaction, against roughly 4 times for a single agent. Those costs have to be justified by measurable performance gains, and in the right use cases they are.

Single agent vs multi-agent AI: side-by-side comparison

When single-agent AI is the right choice

Start with a single agent unless you have a specific reason not to. This is not a conservative recommendation — it is what the data supports.

Stanford University research published in early 2026 found that single-agent systems match or outperform multi-agent architectures on complex reasoning tasks when both are given the same compute budget. The reason is information efficiency: a single agent reasoning within one continuous context avoids the fragmentation that happens every time information is summarised and handed off between agents. Each handoff risks data loss. Each summarisation step is a compression that throws something away.

Google Research found that on tasks requiring strict sequential reasoning, multi-agent performance degrades by 39 to 70% compared to a single agent. The coordination overhead fragments the reasoning chain rather than augmenting it.

Single-agent architecture is the right choice when:

The task is well-defined and sequential. If the workflow follows a predictable path — ingest document, extract data, validate against rules, output to system — a single agent with the right tools handles this faster and cheaper than a coordinated team.

You are operating within one domain. A customer support agent that handles queries within a defined knowledge base, a financial reporting agent that consolidates data from one system, a research assistant summarising documents from a single source — these are single-domain tasks. One agent, correctly configured, is sufficient.

Budget and speed of deployment matter. Single agents typically reach production in weeks rather than months. They cost less to run, less to monitor, and far less to debug when something goes wrong. For teams proving out an AI use case internally, starting single is almost always faster.

The task does not require parallelism. If sub-tasks are dependent on each other — step two cannot start until step one completes — distributing those steps across multiple agents adds coordination overhead with zero performance benefit.

A useful rule of thumb from Anthropic's own guidance: if your use case involves only a few distinct functions (the threshold usually falls somewhere between three and five) and does not cross security or compliance boundaries, a single agent is your starting point.

When multi-agent AI becomes necessary

There is a specific point at which single-agent architecture stops being a practical option. Recognising that point before you build is significantly cheaper than rebuilding after you have deployed.

Multi-agent architecture becomes necessary when:

Workflows span three or more distinct domains. When a task genuinely requires expertise across market research, legal compliance, financial modelling, and creative execution simultaneously, a monolithic single agent becomes a bottleneck. Each capability competes for context window space and attention. Specialist agents with dedicated context perform better.

Parallel execution is required. Analysing hundreds of contracts simultaneously, monitoring competitor pricing across dozens of channels in real time, and running fraud detection while processing payments are inherently parallel workloads. A single agent processes sequentially. Multi-agent systems execute in parallel, so total processing time falls roughly in proportion to the number of independent tasks running at once, less the coordination overhead.

Compliance boundaries demand separation. In financial services, healthcare, and regulated industries, different functions often need to operate under different access controls, produce separate audit trails, and be attributable to distinct processes for regulatory review. A single agent with access to everything violates least-privilege security principles. Separate agents with bounded permissions do not.

Fault tolerance is non-negotiable. Customer-facing transaction systems, supply chain coordination platforms, and healthcare workflows cannot tolerate binary failure. When the single agent goes down, everything stops. Multi-agent systems degrade gracefully — one specialist agent fails, the orchestrator routes around it, and core operations continue.

The task complexity exceeds a single context window. A Cornell University study found that coordinated multi-agent systems achieved a 42.68% success rate on complex planning tasks where a single-agent GPT-4 setup scored 2.92%. The difference is not the model — it is the architecture's ability to distribute cognitive load across multiple context windows operating in parallel.
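
The graceful-degradation point above can be illustrated with a small sketch. It assumes two hypothetical specialists, one of which is simulated as being down, and shows an orchestrator collecting whatever succeeded instead of failing outright. The function names are invented for illustration; `return_exceptions=True` is a standard `asyncio.gather` option.

```python
import asyncio


async def inventory_agent(request: str) -> str:
    return f"inventory checked for {request}"


async def training_agent(request: str) -> str:
    raise RuntimeError("training service unavailable")  # simulated outage


async def run_specialists(request: str, agents: dict) -> tuple[dict, list]:
    names = list(agents)
    # return_exceptions=True keeps one failure from cancelling the rest.
    outcomes = await asyncio.gather(
        *(agents[name](request) for name in names),
        return_exceptions=True,
    )
    completed, failed = {}, []
    for name, outcome in zip(names, outcomes):
        if isinstance(outcome, Exception):
            failed.append(name)  # candidate for retry, fallback, or alerting
        else:
            completed[name] = outcome
    return completed, failed


completed, failed = asyncio.run(run_specialists(
    "store-204",
    {"inventory": inventory_agent, "training": training_agent},
))
```

Here the inventory result is still returned while the failed specialist is reported separately, which is the behaviour the orchestrator needs in order to route around a fault.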

Real-world enterprise deployments: what actually happened

The theoretical case for each architecture is well documented. What is rarer is an honest account of what enterprises actually chose, and why, when they deployed AI agents in production. The following examples are drawn from real deployments across industries — anonymised by agreement with the organisations involved.

Logistics and supply chain: why multi-agent was the only answer

A global logistics and supply chain enterprise operating across multiple regions and business entities needed to consolidate analytics across its entire operation. The challenge was not the volume of data — it was the heterogeneity. Different entities ran different systems with different data models, different KPI definitions, and different reporting cadences.

A single agent was evaluated first. It could not span the system boundaries or maintain consistent governance logic across entities with conflicting data structures. The team moved to a multi-agent architecture: a cross-entity KPI standardisation layer, an operational dashboard agent, and a variance explanation agent working in parallel, with a governance layer enforcing consistent definitions across all of them.

The result was a single operational view across all entities, faster leadership reporting, and consistent operational metrics — outcomes that a single agent, however capable, could not have delivered given the structural complexity of the underlying systems.

National retail at scale: three domains, three agents

A national retail operation with hundreds of stores across the country needed AI support across three distinct functions: customer and staff support via voice, real-time inventory intelligence across store locations, and on-demand knowledge and training access for store employees.

Each function was a separate domain with its own data sources, system integrations, and user interactions. A single agent would have required permissions across all three systems, produced a single audit trail that mixed operational and training queries, and been unable to handle the concurrent load across stores.

The deployment used three specialist agents — a voice support agent, an inventory intelligence agent, and a knowledge and training agent — coordinated through a central admin console with unified analytics and ticketing integration. Store-level inventory visibility improved significantly, manual helpdesk burden dropped, and onboarding time for new staff reduced through on-demand training guidance.

Financial services: compliance made the architectural decision

An enterprise financial services operation needed to deploy omnichannel AI support — handling customer intake across chat, email, and phone, providing agent-assist summarisation for human support staff, and maintaining full auditability for regulatory review.

The compliance requirement made the architecture non-negotiable. A single agent with access to customer communications, internal case data, and agent-assist functions would have created an audit trail that regulators could not parse by function. The deployment separated intake, summarisation, and compliance logging into distinct agents with separate permissions and separate logs — each attributable to a defined function in the workflow.

The outcome was faster case handling, reduced operational load through automation, and compliance readiness through per-function audit trails that satisfied regulatory requirements. It was not the complexity of the task that required multi-agent architecture — it was the governance obligation.

Healthcare staffing: the natural progression from single to multi

A healthcare staffing platform began with a single-agent deployment handling the core matching logic — connecting nursing professionals with healthcare facilities based on credentials, availability, and location. For the initial scope, a single agent was the right choice. It deployed quickly, worked reliably, and solved the primary problem.

As the platform scaled, two new requirements emerged: scheduling and compliance workflow management. Both were functionally distinct from matching and required access to different systems. Adding them to the existing single agent would have created an agent with access to too many systems, too many functions, and a context window stretched across incompatible tasks.

The team added two specialist agents — a scheduling agent and a compliance agent — coordinated through the original matching agent, which became the orchestrator. Fill cycles shortened, workforce utilisation improved, and staffing responsiveness to facilities increased.

This is the pattern that most enterprise deployments follow. You do not choose between single and multi-agent at the start — you start single, identify where the architecture breaks under load or complexity, and introduce specialists precisely where they are needed.

The enterprise decision framework: five questions

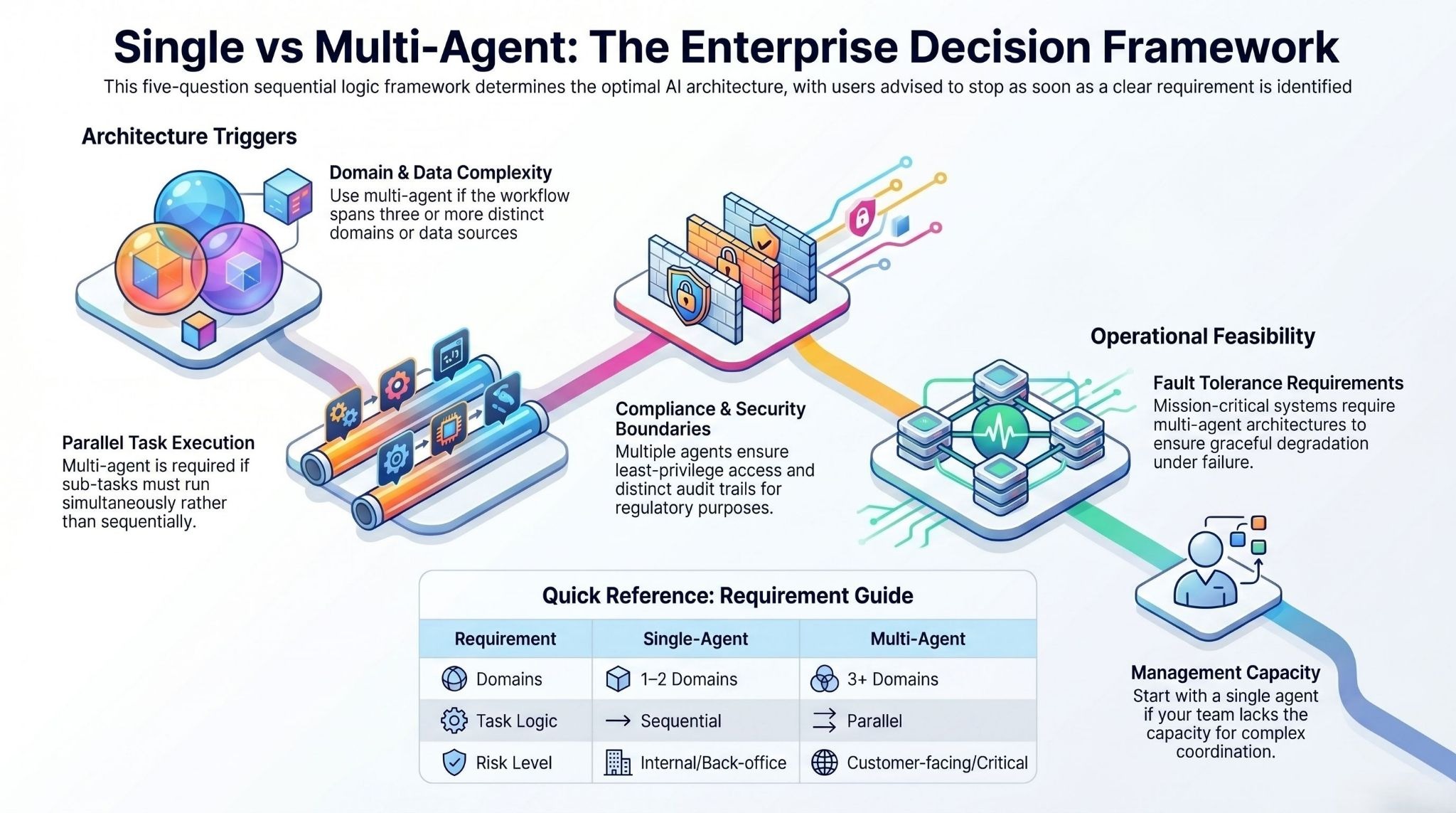

Before you decide on an architecture, answer these five questions in order. Stop when you have a clear answer.

1. How many distinct domains does your workflow span?

If the answer is one or two, start with a single agent. If three or more — especially if those domains have different data sources, different system access requirements, or different compliance obligations — design for multi-agent from the start.

2. Do your sub-tasks need to run in parallel?

If sub-tasks are sequential and dependent, a single agent processes them in the correct order without coordination overhead. If sub-tasks are genuinely independent and time-sensitive — monitoring, processing, and reporting happening simultaneously — parallelism requires multiple agents.

3. Do compliance or security boundaries require separation?

If different functions in your workflow need different access permissions, separate audit trails, or distinct attributability for regulatory purposes, a single agent with access to everything violates least-privilege security. Multi-agent is required.

4. What is your fault tolerance requirement?

For internal analytics tools and back-office workflows, binary failure is acceptable — you fix it and restart. For customer-facing systems, payment processing, or supply chain coordination, graceful degradation under failure is a hard requirement. Multi-agent architecture delivers it; single-agent does not.

5. What is your team's capacity to manage coordination complexity?

Multi-agent systems require investment in observability, debugging across distributed logs, and ongoing management of agent interactions. If your team cannot currently support that infrastructure, start single and build toward multi-agent as capability grows. A well-configured single agent is always better than a poorly managed multi-agent system.
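
One way to make the five questions concrete is to encode them as a small function. This is one possible reading of the framework, not a definitive rule set: the `Workflow` fields and the `recommend` function are hypothetical names, and the choice to treat compliance separation as overriding team readiness is an assumption based on question 3 calling multi-agent "required".

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    domains: int                       # Q1: distinct domains spanned
    parallel_subtasks: bool            # Q2: independent, time-sensitive sub-tasks
    separation_required: bool          # Q3: compliance or security boundaries
    graceful_degradation: bool         # Q4: must survive partial failure
    team_ready_for_coordination: bool  # Q5: observability and ops capacity


def recommend(w: Workflow) -> str:
    triggers = [
        w.domains >= 3,
        w.parallel_subtasks,
        w.separation_required,
        w.graceful_degradation,
    ]
    if not any(triggers):
        return "single-agent"
    # Assumption: a compliance boundary is a hard requirement, so it wins
    # even when the team is not yet ready for coordination complexity.
    if w.separation_required or w.team_ready_for_coordination:
        return "multi-agent"
    return "start single-agent, build toward multi-agent"


simple = recommend(Workflow(1, False, False, False, False))
stretched = recommend(Workflow(3, True, False, False, False))
regulated = recommend(Workflow(2, False, True, False, False))
```

In this sketch `simple` comes back as single-agent, `stretched` as a staged path because the team is not yet ready, and `regulated` as multi-agent because of the compliance boundary.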

Cost and ROI: what the numbers actually show

Enterprise AI budgets are not unlimited, and architecture decisions have direct financial consequences that most vendor comparisons avoid quantifying honestly.

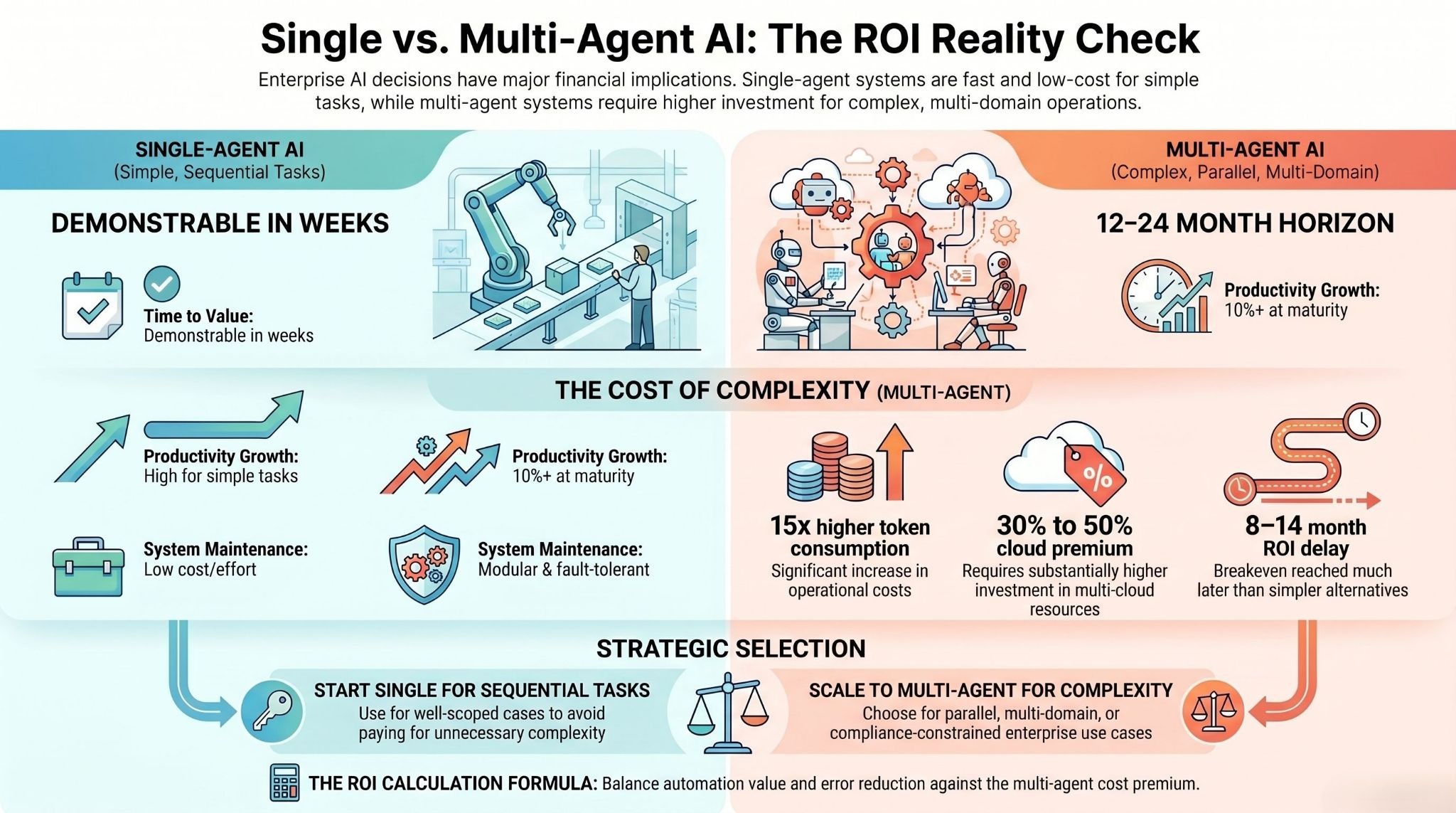

Single-agent systems have a faster ROI path. They cost less to build, less to run, and less to maintain. For well-scoped use cases, value is demonstrable within weeks.

Multi-agent systems carry a higher initial investment. Token consumption runs approximately 15 times that of a standard chat interaction, several times what a single agent uses. Infrastructure for parallel agents increases cloud costs by 30 to 50% compared to single-agent deployments. ROI break-even arrives 8 to 14 months later than an equivalent single-agent deployment, though this depends heavily on the automation value being unlocked.

The long-term curve favours multi-agent for complex use cases. Well-developed multi-agent systems can deliver 10% or more in enterprise productivity growth at maturity. Modular architecture means new specialist agents can be added without redeploying the entire system. Fault tolerance reduces the cost of unplanned downtime.

The calculation for enterprise buyers is straightforward: if your use case is genuinely multi-domain, parallel, or compliance-constrained, multi-agent architecture will outperform single-agent over a 12 to 24-month horizon. If your use case is sequential and well-scoped, the additional cost of multi-agent architecture produces negative returns — you are paying for complexity you do not need.

Calculate your break-even based on three variables: the automation value per task (time saved × volume), the error reduction value (cost of errors in the current manual process), and throughput improvement (output produced per unit time). If the multi-agent premium pays back within your planning horizon at realistic assumptions, it is the right architecture. If it does not, start single and revisit when the use case grows.
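
The break-even arithmetic can be sketched as follows. The function names, the `hourly_cost` parameter (needed to turn time saved into money), and every figure in the example are illustrative assumptions, not data from any deployment.

```python
from typing import Optional


def monthly_value(hours_saved_per_task: float, tasks_per_month: int,
                  hourly_cost: float, error_savings_per_month: float,
                  throughput_value_per_month: float) -> float:
    # Automation value per task (time saved x volume), priced at hourly_cost.
    automation_value = hours_saved_per_task * tasks_per_month * hourly_cost
    return automation_value + error_savings_per_month + throughput_value_per_month


def payback_months(upfront_premium: float, value_per_month: float,
                   running_premium_per_month: float) -> Optional[float]:
    # Net monthly gain after the extra running cost of multi-agent.
    net = value_per_month - running_premium_per_month
    if net <= 0:
        return None  # the premium never pays back under these assumptions
    return upfront_premium / net


# Made-up figures: 15 minutes saved on each of 4,000 tasks a month.
value = monthly_value(0.25, 4000, 40.0, 5000.0, 5000.0)
months = payback_months(240000.0, value, 20000.0)
```

With these invented inputs the monthly value is 50,000 and the premium pays back in 8 months. If `payback_months` returns `None`, or a figure beyond your planning horizon, the guidance above applies: start single and revisit.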

Governance and compliance: the dimension most evaluations miss

Enterprise AI deployments are not evaluated on performance alone. They are evaluated by procurement teams, legal teams, compliance officers, and CISOs who need answers to questions that most AI vendor comparisons do not address.

Multi-agent architectures offer a structural advantage in regulated environments that goes beyond technical capability: per-function audit trails. When a compliance agent, a risk agent, and a customer interaction agent each produce their own logs, regulatory review can examine each function independently. A single agent producing one unified log makes that separation far harder to demonstrate.
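
A minimal sketch of per-function audit trails using Python's standard `logging` module. The `make_audit_logger` helper and the log messages are hypothetical, and the in-memory `StringIO` streams stand in for whatever separate files or log sinks a real deployment would use.

```python
import io
import logging


def make_audit_logger(function_name: str):
    stream = io.StringIO()  # in production: a separate sink per function
    logger = logging.getLogger(f"audit.{function_name}")
    logger.setLevel(logging.INFO)
    logger.propagate = False   # keep entries out of any shared root log
    logger.handlers.clear()    # avoid duplicate handlers on re-creation
    handler = logging.StreamHandler(stream)
    handler.setFormatter(logging.Formatter("%(name)s %(message)s"))
    logger.addHandler(handler)
    return logger, stream


intake_log, intake_trail = make_audit_logger("intake")
summary_log, summary_trail = make_audit_logger("summarisation")

intake_log.info("case=123 received via chat")
summary_log.info("case=123 summary generated")
```

Each trail now contains only the entries for its own function, so a reviewer can be handed `intake_trail` without seeing anything the summarisation agent did.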

For organisations operating under HIPAA, SOC 2, GDPR, or ISO 27001, this is not a nice-to-have; it is often a requirement. The healthcare staffing deployment described above needed scheduling and compliance workflows separated not only to keep system access and context manageable, but also because compliance documentation required distinct attribution.

Least-privilege security is also significantly easier to implement in a multi-agent architecture. Each agent receives only the permissions it needs for its specific function. A compliance agent has read access to regulatory documentation and write access to audit logs. It has no access to customer payment data. A single agent with all capabilities combined requires all permissions simultaneously — a security posture that most enterprise security teams will not approve for production systems.
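
The permission model described here can be sketched as a simple allow-list per agent. The agent names, resource names, and the `is_allowed` helper are hypothetical; a real deployment would enforce this in the identity and access layer rather than in application code.

```python
# Hypothetical allow-list: each agent maps to the (resource, action)
# pairs it may use. Anything not listed is denied.
PERMISSIONS = {
    "compliance_agent": {
        ("regulatory_docs", "read"),
        ("audit_log", "write"),
    },
    "payments_agent": {
        ("customer_payments", "read"),
    },
}


def is_allowed(agent: str, resource: str, action: str) -> bool:
    # Deny by default: unknown agents get an empty permission set.
    return (resource, action) in PERMISSIONS.get(agent, set())


can_write_audit = is_allowed("compliance_agent", "audit_log", "write")
can_read_payments = is_allowed("compliance_agent", "customer_payments", "read")
```

The compliance agent can write to the audit log but cannot read payment data, which mirrors the example in the paragraph above. A single agent covering both functions would need the union of both sets.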

Before choosing an architecture, ask your compliance and security teams two questions: what audit trail structure does regulatory review require, and what is the maximum permission scope a single process is allowed to hold? The answers frequently determine the architecture.

How to start: a practical implementation path

The most common mistake enterprises make with AI agent deployments is designing the final architecture before proving the core capability. The second most common mistake is starting with a single agent without mapping where it will eventually need to split, and then trying to retrofit multi-agent coordination onto an architecture that was never designed for it.

The path that consistently produces the best outcomes across enterprise deployments follows this sequence.

Start with a single agent for the core use case. Identify the highest-value, most clearly scoped problem. Build a single agent to solve it. Measure the outcome. This proves the capability, demonstrates value to stakeholders, and gives you a production baseline.

Identify the breaking point before you hit it. As the use case expands, map the domains, system access requirements, and compliance obligations of potential new functions. If expansion would push the agent across three or more domains, or would require access to systems with conflicting permission requirements, plan the multi-agent architecture before deployment, not after.

Introduce specialist agents one at a time. Do not decompose an existing single agent into ten specialists simultaneously. Add one specialist agent, define its boundaries, observe its behaviour in production, and only then add the next. Each addition to the coordination layer introduces new failure modes — manage them incrementally.

Invest in observability before you scale. Multi-agent systems fail in ways that are significantly harder to diagnose than single-agent failures. Build logging, tracing, and alerting infrastructure for each agent before you put the system into production at scale. The cost of debugging a poorly instrumented multi-agent system in production is high.

Plan for a four to twelve-week deployment horizon. Enterprise AI agent deployments that are well-scoped, with clear integration requirements and governance frameworks established upfront, typically reach production in four to six weeks for single-agent and eight to twelve weeks for initial multi-agent deployments.

The bottom line

Single-agent AI is not a stepping stone to something better. It is the right architecture for the majority of enterprise use cases — and deploying it well, quickly, and with clear governance produces more value than a poorly planned multi-agent system that takes three times as long to build.

Multi-agent AI is not a more advanced version of single-agent. It is a different architecture for a different class of problem — and when the problem genuinely requires it, multi-agent systems deliver capabilities that no single agent can replicate.

The decision between them is not a technical one. It is a business one: how many domains does your workflow span, does your compliance environment require functional separation, and can your team manage the coordination complexity that a multi-agent system introduces?

Start with a single agent. Know exactly what would cause you to need more than one. Build toward multi-agent when the use case demands it — not before.

If you are evaluating AI agent architecture for an enterprise deployment, the assistents.ai platform supports both single-agent and multi-agent configurations across 12 industries and 300+ integrations, with enterprise governance built in.


Frequently asked questions

What is the main difference between a single agent and a multi-agent AI system?

A single-agent system consolidates all reasoning, memory, tool use, and execution in one AI entity operating within a single context window. A multi-agent system distributes those capabilities across specialist agents that coordinate through defined protocols. The practical difference is that single agents are faster to deploy and cheaper to run, while multi-agent systems can handle greater complexity, parallelism, and compliance separation.

When should an enterprise choose multi-agent AI over single-agent AI?

Multi-agent architecture becomes the right choice when workflows span three or more distinct domains, when sub-tasks need to run in parallel, when compliance or security boundaries require functional separation, or when fault tolerance is a non-negotiable requirement. For most initial deployments, a single agent is the correct starting point.

Does multi-agent AI always outperform single-agent AI?

No. Stanford University research published in 2026 found that single-agent systems match or outperform multi-agent architectures on complex reasoning tasks under equal compute budgets. Google Research found that multi-agent performance degrades by 39 to 70% on sequential reasoning tasks due to coordination overhead. Multi-agent systems outperform on genuinely parallel, multi-domain workloads — not universally.

How much more does multi-agent AI cost compared to single-agent?

Token consumption in multi-agent systems runs approximately 15 times that of a standard chat interaction, several times what a single agent uses. Cloud infrastructure costs increase by 30 to 50% due to coordination overhead and redundant instances. ROI break-even arrives 8 to 14 months later than single-agent deployments. For use cases where multi-agent is architecturally required, these costs are justified by long-term capability and productivity gains.

Can an enterprise start with a single agent and migrate to multi-agent later?

Yes — and this is the pattern that most successful enterprise deployments follow. Start with a single agent for the core use case, prove value, identify where the architecture breaks as scope expands, then introduce specialist agents one at a time. The healthcare staffing deployment described in this article followed exactly this path.

How do multi-agent AI systems handle compliance and audit requirements?

Multi-agent architectures produce per-function audit trails: each agent generates its own log, attributable to a defined function in the workflow. This is a structural advantage in regulated industries where compliance documentation requires functional separation. Single agents produce unified logs that are harder to separate by function, which can create challenges for regulatory review under frameworks like HIPAA, SOC 2, GDPR, and ISO 27001.
