Enterprise Agentic AI: The Complete Guide to What Works, What Fails, and What Production-Ready Actually Looks Like in 2026

Here is a number worth sitting with: 62% of enterprises are actively experimenting with agentic AI. Only 14% have anything running in production.

Gartner projects that more than 40% of enterprise agentic AI projects will be cancelled by 2027. McKinsey reports that while 88% of organisations are using AI in some form, only 23% are successfully scaling autonomous agent systems. Deloitte's 2025 enterprise survey identifies the root cause — and it isn't model quality, compute cost, or integration complexity.

It is context. Every time.

Agents aren't failing because the technology is immature. They're failing because they're being asked to make enterprise-grade decisions on 20% of the information they actually need. The other 80% — the contracts, the email threads, the negotiated rates, the policy documents, the Slack conversations where the real decisions were made — is completely invisible to them.

This guide covers what enterprise agentic AI actually is, why the gap between experimentation and production is so persistent, what a full-context architecture looks like, and what production evidence across industries actually proves. If you are evaluating agentic AI for your organisation in 2026, this is the framework that separates deployments that survive from demos that don't.

What Is Enterprise Agentic AI? (And Why the Definition Changes Everything)

The term is everywhere. Most of what is being sold under it is not what enterprises need.

Enterprise agentic AI refers specifically to AI systems that combine three capabilities simultaneously: the ability to reason over complex, multi-source information; the ability to execute multi-step workflows autonomously across enterprise systems; and the governance layer that makes both of those safe enough to trust with real business decisions.

That three-part definition matters because the market currently offers each of those capabilities in isolation — and sells each one as if it were the complete solution.

Copilots, the most widely deployed category (Microsoft Copilot, Salesforce Einstein, and their equivalents), are strong at reasoning. They read data, synthesise it, and generate recommendations. But they don't execute. A human still has to act on whatever they suggest, which means the bottleneck hasn't moved — it has just been dressed up with better summaries.

RPA tools (UiPath, Automation Anywhere, and similar platforms) can execute. They are very good at executing. But they cannot reason. They run scripted sequences on structured data, and they break — consistently and expensively — when they encounter an exception, an unstructured input, or a scenario the script didn't anticipate.

LangChain and DIY agent frameworks sit in the middle, giving technical teams the building blocks to combine reasoning and execution. But governance has to be built from scratch. The data access layer has to be engineered custom. And the organisations deploying these are usually discovering the hard way that the problem isn't the agent framework — it's the data foundation underneath it.

What the enterprise market is missing is the combination: reasoning, governed execution, and complete context — all operating together on the full information picture, not a structured subset of it.

That gap is the defining enterprise AI problem of 2026.

Why Enterprise Agentic AI Projects Fail: The Data Nobody Talks About

The numbers cited at the top of this article are not abstract projections. They reflect what is happening right now inside enterprise AI programmes, and the patterns are consistent enough that analysts across Deloitte, McKinsey, Gartner, and the major CIO survey bodies are all saying the same thing.

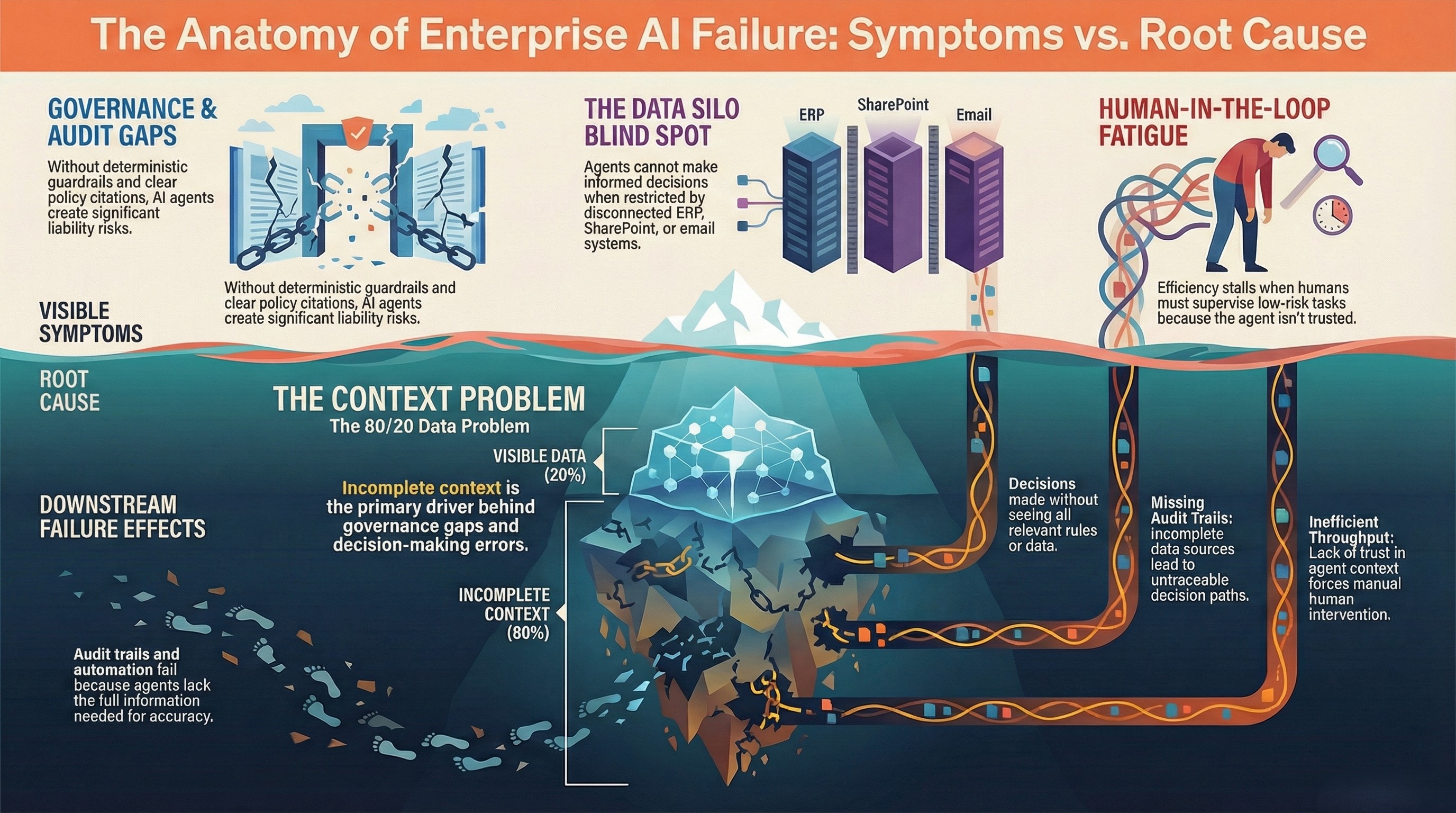

Enterprise agentic AI fails for five reasons. Four of them are symptoms. One is the root cause.

Symptom 1: Governance gaps. Agents executing without hard rules — approval hierarchies, compliance thresholds, escalation paths — create liability, not efficiency. When an agent can act on any decision without a deterministic guardrail, the enterprise is one wrong inference away from a significant and potentially irreversible error.

Symptom 2: Data silos. Enterprise data is distributed across dozens of systems, most of which were never designed to talk to each other. An agent that can query your ERP cannot, by default, also read your SharePoint contracts or your email negotiation threads. Each silo it cannot access is a blind spot in every decision it makes.

Symptom 3: No audit trail. Enterprises operating in regulated industries — banking, healthcare, logistics, manufacturing — cannot deploy agents that cannot explain themselves. If a decision was made and cannot be traced to a policy citation, a rule, a data source, and a timestamp, it is not an enterprise-grade decision. It is a liability.

Symptom 4: Humans stuck in the loop for the wrong reasons. Human oversight is necessary and appropriate for high-stakes decisions. But when humans are in the loop for every decision — including low-risk, repeatable ones — because the agent cannot be trusted to act without supervision, the automation has delivered no real change to throughput or cycle times.

The root cause underlying all four: the 80/20 data problem.

Every one of those symptoms is downstream of agents operating on incomplete context. Governance gaps exist because agents are making decisions without seeing all the information that should inform those rules. Data silos create the blind spots that cause wrong decisions. Audit trails are incomplete because the data sources consulted were incomplete. Humans stay in the loop because nobody trusts an agent that might be missing critical context.

Fix the context problem, and the other four become manageable. Leave it unsolved, and no amount of governance tooling or workflow orchestration will make your agents production-ready.

The ₹12 Crore Story — Why This Is Not Theoretical

This is the most important enterprise AI case study you are likely to read in 2026: it is real, and it will happen to more organisations that deploy agents without solving the context problem first.

A financial services enterprise deployed an AI agent to manage vendor payment workflows. The agent was integrated with their ERP system. It could read invoice amounts, payment due dates, and vendor records. It was accurate. It was fast. By every technical measure, it performed exactly as designed.

What it could not see: the contract PDFs stored in SharePoint that contained negotiated discount terms. The email thread where rates had been renegotiated with a specific vendor three weeks earlier. The Slack messages from the treasury team flagging a cash flow concern for that quarter and recommending payment holds.

The agent processed the payment queue. It approved ₹12 crore in early vendor payments. Contract discount terms were violated. Negotiated rates were ignored. Cash flow was impacted at exactly the moment the treasury team had flagged as a concern.

The agent did not malfunction. It did not hallucinate. It executed perfectly — on 20% of the information it needed to execute correctly.

This is what the 80/20 data problem looks like at enterprise scale. This is why the gap between 62% experimenting and 14% in production is not closing on its own. The demos work because demo environments have clean, limited, structured data. Production fails because production environments have messy, distributed, mostly unstructured data — and agents built on structured-only foundations cannot see most of it.

The 80/20 Data Problem — Why Your Agents Are Flying Blind

Enterprise data breaks down like this, and the numbers matter:

Structured data — your ERP tables, CRM fields, POS records, transaction logs, financial databases — represents approximately 10–20% of total enterprise data volume. This is the data that BI tools, dashboards, and most agentic AI platforms can actually access. It is fast to query, easy to index, and well-understood by analytics systems.

Semi-structured data — application logs, API responses, JSON exports, NoSQL records, system events — represents roughly 5–10%. It is increasingly accessible to AI systems but still rarely fused with structured sources in real-time agent decisions.

Unstructured data — documents, emails, chat logs, PDFs, media files, meeting notes, policy documents, contracts, recordings — represents 70–85% of everything your organisation actually knows. It is where the real business truth lives: the negotiated exception, the verbal commitment, the compliance ruling, the cash flow warning.

Think of an iceberg. Most enterprise AI platforms operate at the waterline: they see the 10–20% above it. The 80–90% below, the hidden opportunity that Assistents.ai's platform architecture calls "the buried context", is invisible to them.

The compounding problem is this: AI agents are amplifiers. They don't create order from chaos. They multiply what already exists. An agent operating on clean, complete data multiplies efficiency. An agent operating on partial, siloed data multiplies the errors embedded in that partial view. By the time those errors surface on a dashboard, the agent has already acted on them hundreds of times.

The 80/20 Data Problem is not a data quality issue. It is an architecture issue. It requires a solution at the data fusion layer — before the agent reasons, before it decides, before it acts.

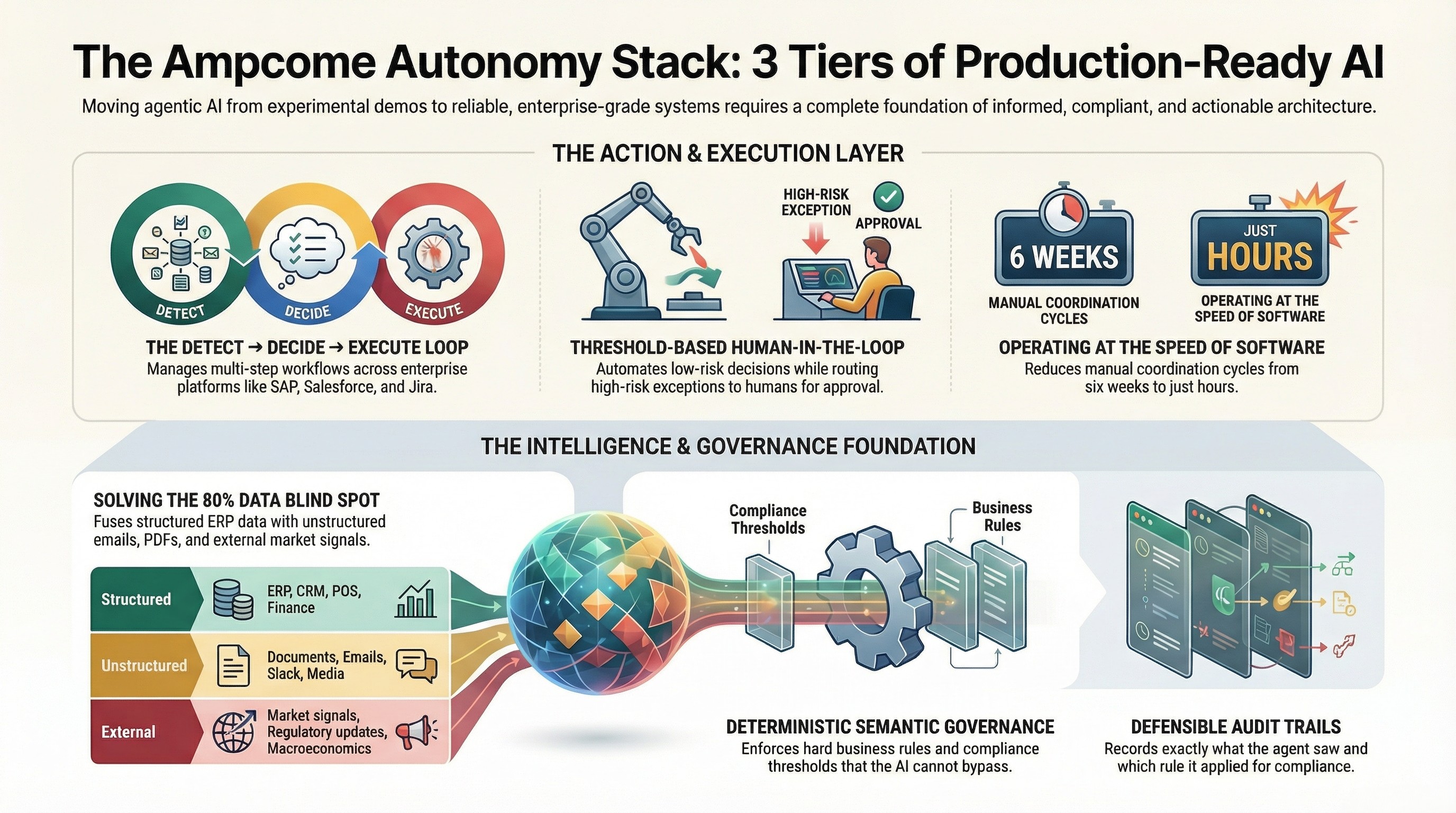

The Three-Tier Architecture That Separates Pilots From Production

The enterprises that have successfully moved agentic AI into production share a common architecture. It has three layers, and all three have to work together. Missing any one of them produces a demo-layer product, not a production-ready system.

Tier 1: The Unified Context Engine — Solving the 80% Blind Spot

The first tier is the data foundation. Before an agent reasons about anything, it needs to see everything relevant to that decision — not just the structured records in the connected ERP, but the contract PDF, the email thread, the Slack channel, and the external market signal that changes the picture entirely.

The Unified Context Engine fuses structured data (ERP, CRM, POS, Finance), semi-structured data (logs, events, APIs), and unstructured data (documents, emails, chat, media) into a single semantic layer. It also ingests external signals — competitor pricing data, macroeconomic indicators, regulatory updates, supply chain disruptions, environmental factors — that internal systems cannot capture on their own.

The output is not a query result. It is a complete contextual picture, built automatically before every agent decision, so that the agent is never reasoning from a partial map.
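As a rough illustration of what assembling that picture before a decision could look like, here is a minimal Python sketch. Every name in it (`ContextItem`, `build_context`, the source labels, the vendor ID) is hypothetical and is not the platform's API. It only shows facts from structured and unstructured sources being gathered for one vendor, with any required source that returned nothing reported as a gap.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextItem:
    source: str      # illustrative labels such as "erp", "sharepoint", "slack"
    kind: str        # "structured", "semi-structured", or "unstructured"
    vendor_id: str
    fact: str


def build_context(vendor_id: str, items: list[ContextItem], required_sources: set[str]) -> dict:
    """Collect every fact about one vendor and report which required sources are missing."""
    relevant = [item for item in items if item.vendor_id == vendor_id]
    seen = {item.source for item in relevant}
    return {
        "vendor_id": vendor_id,
        "facts": [f"[{item.source}] {item.fact}" for item in relevant],
        "missing_sources": sorted(required_sources - seen),
    }


# Hypothetical data loosely modelled on the vendor payment story above.
items = [
    ContextItem("erp", "structured", "V-001", "Invoice due in 30 days"),
    ContextItem("sharepoint", "unstructured", "V-001", "Contract sets negotiated discount terms"),
    ContextItem("slack", "unstructured", "V-001", "Treasury recommends payment holds this quarter"),
]

context = build_context("V-001", items, {"erp", "sharepoint", "email", "slack"})
print(context["missing_sources"])  # ['email']
```

The design point is the `missing_sources` field: a decision made with a known gap can be escalated, whereas a decision made with an unknown gap simply proceeds.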

This is what "context-complete agentic AI" means in practice. It is not a feature. It is the prerequisite for every other capability to work correctly.

Tier 2: The Semantic Governor — Solving the Trust Problem

The second tier is governance. Not probabilistic, LLM-native governance (asking the model to "be careful"). Deterministic governance — hard rules, encoded business logic, approval hierarchies, compliance thresholds, and if-then decision trees that execute independently of the model's inference.

The Semantic Governor encodes your organisation's actual business rules. A payment above a certain threshold requires human approval. A vendor contract change requires legal review. A pricing decision outside a defined range requires executive sign-off. These rules are not suggestions to the model — they are constraints it cannot bypass.
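The three example rules above can be sketched as plain if-then checks that run outside the model. This is an assumption-laden illustration, not the Semantic Governor's actual implementation: the rule IDs, the payment threshold, and the price range are invented for the example.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Action:
    kind: str            # "payment", "contract_change", or "pricing"
    amount: float = 0.0


@dataclass(frozen=True)
class Rule:
    rule_id: str
    applies: Callable[[Action], bool]
    requirement: str     # what must happen before the action may proceed


# Hypothetical values, for illustration only.
PAYMENT_THRESHOLD = 1_000_000
PRICE_RANGE = (90.0, 110.0)

RULES = [
    Rule("PAY-001", lambda a: a.kind == "payment" and a.amount > PAYMENT_THRESHOLD, "human_approval"),
    Rule("CON-001", lambda a: a.kind == "contract_change", "legal_review"),
    Rule(
        "PRC-001",
        lambda a: a.kind == "pricing" and not (PRICE_RANGE[0] <= a.amount <= PRICE_RANGE[1]),
        "executive_signoff",
    ),
]


def govern(action: Action) -> tuple[str, Optional[str]]:
    """Return (requirement, rule_id). The first matching rule wins; no match means auto-execute."""
    for rule in RULES:
        if rule.applies(action):
            return rule.requirement, rule.rule_id
    return "auto_execute", None


print(govern(Action("payment", 2_000_000)))  # ('human_approval', 'PAY-001')
```

Because `govern` is ordinary code evaluated after the model proposes an action, nothing the model outputs can change which rule fires.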

Every decision the agent makes under the Semantic Governor is auditable, defensible, and policy-cited. There is a record of what the agent saw, what rule it applied, what it decided, and why. That record is not a log file — it is the audit trail that compliance, legal, and operations teams need to trust autonomous execution.
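One way to picture such a record is as a small immutable structure with one field for each of those questions. The field names, the rule ID, and the policy citation below are assumptions made for this sketch, not a documented schema.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    timestamp: str             # when the decision was made (UTC, ISO 8601)
    action: str                # what the agent proposed
    sources_consulted: tuple   # what the agent saw
    rule_id: str               # which rule it applied
    policy_citation: str       # where that rule comes from
    outcome: str               # what it decided
    rationale: str             # why


def make_record(action, sources, rule_id, policy_citation, outcome, rationale) -> AuditRecord:
    """Stamp the decision with the current UTC time and freeze it."""
    return AuditRecord(
        timestamp=datetime.now(timezone.utc).isoformat(),
        action=action,
        sources_consulted=tuple(sources),
        rule_id=rule_id,
        policy_citation=policy_citation,
        outcome=outcome,
        rationale=rationale,
    )


# Hypothetical values throughout.
record = make_record(
    action="early vendor payment",
    sources=["erp", "sharepoint"],
    rule_id="PAY-001",
    policy_citation="Finance Policy, section 4.2",
    outcome="routed_for_approval",
    rationale="Amount exceeds the autonomous payment threshold",
)
print(json.dumps(asdict(record), indent=2))
```

Freezing the dataclass means the record cannot be edited after the fact in application code; durable, tamper-evident storage is a separate concern that this sketch does not cover.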

This is what contains hallucination as an enterprise risk. The agent can infer whatever it infers. The Semantic Governor enforces what it can actually do with that inference.

Tier 3: The Active Orchestrator — Solving the Execution Gap

The third tier is execution. Governed, multi-step workflow execution across the actual enterprise systems where work happens — SAP, Salesforce, Jira, ServiceNow, Slack, and the dozens of other platforms your organisation runs on.

The Active Orchestrator manages the Detect → Decide → Execute loop. It routes approvals to the right humans when thresholds are exceeded. It triggers parallel workflows. It escalates exceptions. It learns from outcomes. And it operates at the speed of software — hours, not the six-week cycle times that manual coordination produces.

The human-in-the-loop controls are threshold-based, not blanket. Decisions below a defined risk level execute autonomously. Decisions above it route for approval. The threshold is set by your governance rules, not by the agent's confidence score.
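A minimal sketch of that routing rule follows. The threshold value and field names are hypothetical. The model's confidence is carried on the decision but deliberately ignored when routing, and a decision that sits exactly on the threshold is sent for approval, which is a conservative assumption of this example rather than something the text specifies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    description: str
    risk_score: float        # assigned by governance rules, not by the model
    model_confidence: float  # recorded for the audit trail, never used for routing


APPROVAL_THRESHOLD = 0.5  # hypothetical value, owned by whoever owns the governance rules


def route(decision: Decision, threshold: float = APPROVAL_THRESHOLD) -> str:
    """Execute low-risk decisions autonomously; send everything else to a human approver."""
    if decision.risk_score < threshold:
        return "execute"
    return "route_for_approval"


print(route(Decision("reorder stock", risk_score=0.1, model_confidence=0.60)))         # execute
print(route(Decision("early vendor payment", risk_score=0.9, model_confidence=0.99)))  # route_for_approval
```

Note the second call: a model confidence of 0.99 does not buy autonomy, because the routing decision never reads it.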

Together, the three tiers form the Ampcome Autonomy Stack: context completeness at Tier 1, deterministic governance at Tier 2, and governed execution at Tier 3. All three are required for production-ready agentic AI. Any platform offering fewer than all three is selling you part of the architecture and asking you to build the rest yourself.

Enterprise Agentic AI in Production — Evidence Across Six Industries

The following case studies are drawn from live deployments. No client names are included. Sector, scale, context gap, and measured outcome are the story — not brand association.

Manufacturing / HVAC — From 20% Visibility to 93% Answerability

A major Indian manufacturing enterprise competing in highly price-sensitive markets needed strategic intelligence across 10 million-plus data points spanning their ERP system, live market feeds, and competitor product catalogs. Their existing BI tools could answer 2 of the 31 strategic questions their leadership team needed answered on a regular basis.

The context gap: competitor pricing data, market signals, and catalog intelligence lived outside their structured systems entirely. Their BI layer could not see it.

After deploying full-context agentic AI: 93% answerability across all 31 strategic questions. A 12–26% pricing gap identified and corrected immediately upon deployment. Insights delivered 100 times faster than the manual analysis processes they replaced.

Retail / Multi-Store Operations — 700+ Stores, Zero Training Required

A rapidly scaling value retail enterprise with more than 700 stores across hundreds of cities had no unified intelligence layer across their POS systems, inventory data, SOPs, and field operations. Store managers were operating with partial information. Knowledge queries required manual escalation. Inventory visibility was fragmented.

After deployment: standardised action logic across all stores, voice and text support agents handling inventory queries and training questions in real time, and a measurable reduction in helpdesk burden — without retraining store staff on a new system.

Ports & Global Logistics — Terminal to Rail, Full Visibility

A global ports and logistics operator with record annual revenues exceeding $20 billion had terminal and rail operations running in silos. Terminal data was invisible to inland logistics agents. Coordination between port operations and rail scheduling required manual intervention at every handoff.

After deployment: terminal-to-rail throughput improved, full workflow digitisation achieved within weeks, and executive visibility across operations delivered through real-time dashboards with automated exception alerts.

Smart Cities / Utilities — 150 Million Lives, Proactive Grid Intelligence

A smart infrastructure operator running more than 25 city operation centres and managing over 2 million connected assets across urban infrastructure was operating reactively — responding to grid issues after they surfaced, not before.

After deployment: proactive monitoring and alerting replaced reactive dashboards. Grid exceptions are identified and routed for resolution before they escalate. The operational shift is from "what happened?" to "here is what is about to happen and here is the recommended action."

Banking / Fintech — Auditability at Compliance Scale

A global fintech provider serving banks and credit unions needed omnichannel AI agents for dispute, fraud, and compliance workflows. The challenge was not automation — it was auditability. Every agent action in a regulated banking environment needs a complete, defensible record.

After deployment: omnichannel intake across chat, email, and phone with automated workflow routing, agent-assist summarisation, SLA monitoring, and a full audit trail on every action — designed to satisfy compliance requirements, not just operational efficiency targets.

Real Estate / Property Management — 24/7 Tenant Intelligence

A major UAE real estate portfolio operator managing diversified assets across multiple emirates deployed a 24/7 tenant support agent covering query triage, rental and payment support, ticketing, and escalation workflows — all governed by a knowledge base built from tenancy documents, policies, and SOPs.

After deployment: consistent 24/7 tenant experience, faster response times, lower call-centre load, and better SLA adherence through automated routing and tracking.

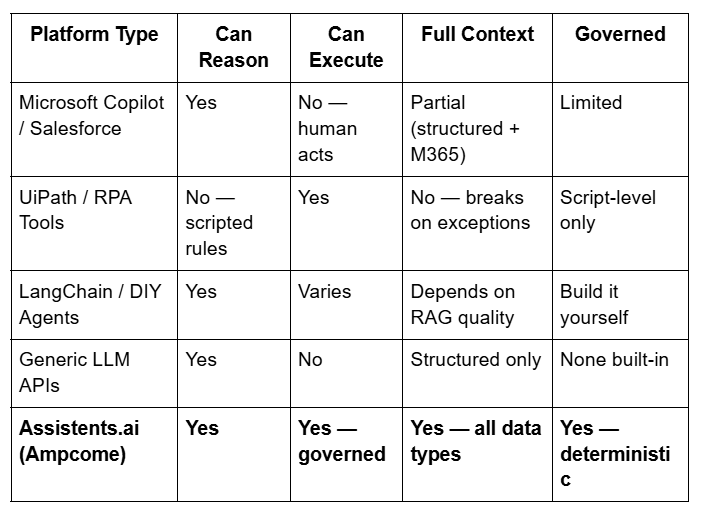

The Differentiation Matrix — What Separates Assistents.ai From Every Other Option

When enterprise buyers evaluate this category, they are usually comparing across four platform types. This table is the honest comparison:

The differentiator is not any single column. It is the only row where all four columns are "Yes" simultaneously, by design, in production.

10 Questions to Ask Any Enterprise Agentic AI Vendor

Before selecting a platform or committing to a pilot, ask these questions. The answers will tell you more than any demo:

1. What data types does your platform ingest, and can you show me a live example with unstructured data included?

2. What percentage of enterprise data can your agent actually access across structured, semi-structured, and unstructured sources?

3. Is your governance layer deterministic, or are you relying on the LLM to self-govern?

4. Can you show me a production deployment (not a demo) at enterprise scale in my sector?

5. What is your average time from pilot agreement to a live, governed agent in production?

6. How does your platform handle conflicting signals across data sources: which source wins, and how is that decision logged?

7. What does the audit trail look like? Can I see a real example from a production environment?

8. How are human-in-the-loop thresholds set and modified? Who controls them?

9. What deployment models do you support: cloud, private cloud, on-premise, hybrid?

10. What happens when the agent encounters data it has never seen before: does it fail, escalate, or hallucinate?

A platform that cannot answer questions 1, 2, 3, and 6 with specific, demonstrable answers is a demo-layer product. A platform that cannot answer question 7 is not enterprise-grade.

From Pilot to Production in 30 Days — What the Deployment Reality Looks Like

The most common question enterprise buyers ask after deciding to move forward is how long this actually takes. The honest answer, based on 30+ live deployments across six continents and eight industries, is 30 days from discovery to a governed agent in production.

The deployment sequence is structured, not open-ended:

Week 1 is discovery and workflow mapping. What decisions is the agent going to make? What data does it need to make them correctly? What governance rules apply? What are the approval thresholds? This week produces a concrete pilot plan, a workflow definition, an ROI hypothesis, and the success metrics against which the deployment will be measured.

Weeks 2–4 build the Context Engine, configure the governance rules, connect to the relevant enterprise systems, and get the first agent live — governed, auditable, and operating within defined parameters.

Day 30 is a live, production-ready agent. Not a proof of concept. Not a demo in a sandbox environment. A governed agent making real decisions on real data with a full audit trail.

No rip-and-replace. Assistents.ai orchestrates the systems your organisation already uses — SAP, Salesforce, Jira, ServiceNow, Slack, and the rest. The agent works with your existing infrastructure, not instead of it.

The One Question That Predicts Whether Your Project Will Succeed

After reviewing where enterprise agentic AI succeeds and where it fails, the predictive question is not "which model are you using?" or "what workflows are you automating?" It is:

What percentage of the data your agent needs to make correct decisions can it actually access?

If the answer is somewhere around 20% — if your agent is connected to your ERP and your CRM and nothing else — you are building on the foundation that produced the ₹12 crore incident. The agent will be fast, accurate, and confident. And it will be wrong in ways that only become visible after the damage is done.

If the answer is closer to 100% — if your agent can see your structured records, your contracts, your emails, your policy documents, and your external market signals simultaneously — you have the foundation for production-ready agentic AI.
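A crude first-pass way to put a number on that question is to list the sources a decision depends on and count how many the agent can read. The sketch below does exactly that. The source names are hypothetical, and counting sources is a simplification: it says nothing about how much data each source holds or how much each one matters to the decision.

```python
def context_coverage(needed: set[str], accessible: set[str]) -> float:
    """Share of the sources a decision needs that the agent can actually read."""
    if not needed:
        return 1.0  # nothing is required, so nothing is missing
    return len(needed & accessible) / len(needed)


# Hypothetical inventory for a vendor payment decision.
needed = {
    "erp", "crm", "contracts", "email", "policy_docs",
    "chat", "market_signals", "treasury_notes", "sops", "logs",
}
accessible = {"erp", "crm"}

print(f"{context_coverage(needed, accessible):.0%}")  # 20%
```

An agent wired only to the ERP and CRM scores 20% on this inventory, which is the situation described above.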

The context problem is solvable. The three-tier architecture — Unified Context Engine, Semantic Governor, Active Orchestrator — exists specifically to solve it. The production evidence across manufacturing, retail, logistics, utilities, banking, and real estate proves it works at enterprise scale, in 30 days, without replacing the systems your organisation already runs on.

Your agents don't have to fly blind.

So, if you want to see what your enterprise context gap looks like — and what a 30-day deployment to close it would involve — book a pilot assessment. Within 48 hours you will have a concrete pilot plan, workflow definition, ROI hypothesis, and success metrics. If we don't surface real, new value, we walk.

[Book a Pilot Assessment →]

Frequently Asked Questions

What is enterprise agentic AI?

Enterprise agentic AI refers to AI systems that combine reasoning, governed autonomous execution, and complete context access to take independent actions on behalf of enterprises. Unlike copilots (which recommend but don't execute) or RPA tools (which execute scripted actions but can't reason), enterprise agentic AI does both — governed by deterministic rules that make autonomous action safe enough to trust at scale.

Why do enterprise agentic AI projects fail?

The primary cause is the 80/20 Data Problem: agents are connected to structured data sources representing 10–20% of enterprise information, while 70–85% of real business context lives in unstructured formats (contracts, emails, documents, chat logs) that most platforms cannot access. Agents acting on partial context execute correctly on the wrong information. Gartner projects that more than 40% of agentic AI projects will be cancelled by 2027.

What is the 80/20 Data Problem?

The 80/20 Data Problem describes the gap between the data an AI agent can access (structured records: ~10–20% of enterprise data) and the data it needs to make accurate decisions (which includes unstructured sources: ~70–85% of enterprise data). Agents operating without solving this problem make decisions that are technically precise but factually incomplete.

What is context-complete agentic AI?

Context-complete agentic AI is the term for agent systems that fuse structured, semi-structured, and unstructured enterprise data — plus external signals — into a unified semantic layer before acting. Rather than operating on the 20% visible in databases, context-complete agents see everything: ERP records, contract terms, email threads, market signals, and policy documents simultaneously.

What is the Ampcome Autonomy Stack?

The Ampcome Autonomy Stack is the three-tier production architecture for enterprise agentic AI. Tier 1 is the Unified Context Engine — fusing all data types into a complete semantic layer. Tier 2 is the Semantic Governor — deterministic governance rules, approval hierarchies, compliance thresholds, and a full audit trail for every decision. Tier 3 is the Active Orchestrator — executing multi-step workflows across enterprise systems with human-in-the-loop controls at configurable thresholds.

How is Assistents.ai different from Microsoft Copilot?

Microsoft Copilot reasons over Microsoft 365 data and recommends actions; humans execute those recommendations manually. Assistents.ai executes governed actions autonomously across all enterprise systems. Copilot is limited to structured and M365 data. Assistents.ai fuses structured, semi-structured, unstructured, and external data. The critical difference is not capability — it is completeness of context and governance of execution.

How long does enterprise agentic AI deployment take?

Based on 30+ live enterprise deployments, the timeline from discovery to a governed agent in production is 30 days. Week 1 covers workflow mapping and data access scoping. Weeks 2–4 build the Context Engine, configure governance rules, and get the first agent live. No rip-and-replace of existing systems is required.

What governance does enterprise agentic AI require?

Enterprise-grade governance requires deterministic rules — not probabilistic AI self-governance. This means hard approval thresholds (decisions above a defined value require human sign-off), complete audit trails with policy citations for every action, access controls scoped to role and data type, compliance rules encoded as if-then logic, and configurable human escalation paths for edge cases and exceptions.

Transform Your Business With Agentic Automation

Agentic automation is the rising star poised to overtake RPA and bring about a new wave of intelligent automation. Explore the core concepts of agentic automation, how it works, real-life examples, and strategies for a successful implementation in this ebook.
