The CIO's Complete Guide to Selecting an Enterprise AI Agent Platform in 2026

Gartner predicts that 40% of enterprise applications will embed AI agents by the end of 2026, up from less than 5% in 2025. The global AI agents market, valued at $7.8 billion in 2025, is projected to exceed $10.9 billion this year (roughly 40% growth in a single year), and longer-range forecasts put its compound annual growth rate above 45%. More than half of enterprises already have AI agents running in production environments.

And yet, Gartner also warns that over 40% of agentic AI projects are at risk of cancellation by 2027 — not because the technology failed, but because governance, observability, and ROI clarity were never established.

The gap between those two numbers is where most CIO decisions get made poorly.

Choosing an enterprise AI agent platform is no longer a software subscription decision. It is an infrastructure bet — one that will shape how your organization executes work, governs autonomous systems, and scales intelligence across departments for the next five to seven years. The platform you choose determines how quickly you can move from one agent to twenty, whether your legal team can sleep at night, and whether the CFO approves the next phase of investment.

This guide gives you the framework to make that decision well. It covers how AI agents differ from the automation tools you already run, the five non-negotiable criteria that separate enterprise-grade platforms from demo-stage products, a 10-point procurement checklist you can take directly into vendor conversations, and deployment evidence drawn from real enterprise rollouts across 12 industries and four continents.

Nothing in this guide is theoretical. Every recommendation is grounded in the pattern of what works at scale — and what fails once the pilot becomes production.

Why 2026 Is the Decisive Year for Enterprise AI Agents

The window for low-stakes experimentation is closing. For most CIOs, 2024 and 2025 were years of pilots: isolated use cases, limited scope, controlled environments. In 2026, boards and CEOs are expecting AI to show up in operating results, not slide decks.

The structural pressure is real. According to research across enterprise deployments, 71% of CIOs must prove measurable AI value by mid-2026 or face budget reductions. Meanwhile, competitors who established AI infrastructure earlier are compounding those gains — faster reporting cycles, lower manual overhead, earlier detection of anomalies, and consistently better decision cadence.

The risk of waiting has flipped. For most of the last two years, moving slowly was defensible. In 2026, slow is the risk. Not because AI is hype — but because the enterprises building now are accumulating deployment experience, governance infrastructure, and institutional knowledge that becomes harder to replicate with each passing quarter.

This is not about replacing humans or chasing the latest technology trend. It is about deciding, deliberately, which workflows should have intelligence embedded in them permanently — and choosing a platform architecture that allows you to do that safely, at the pace your organization can actually absorb.

The CIOs navigating this well share a common characteristic: they chose their platform on the basis of architecture first, capability second. What the agent can do today matters less than whether the underlying infrastructure can govern, scale, and evolve with your organization over three years.

That is the lens this entire guide is written through.

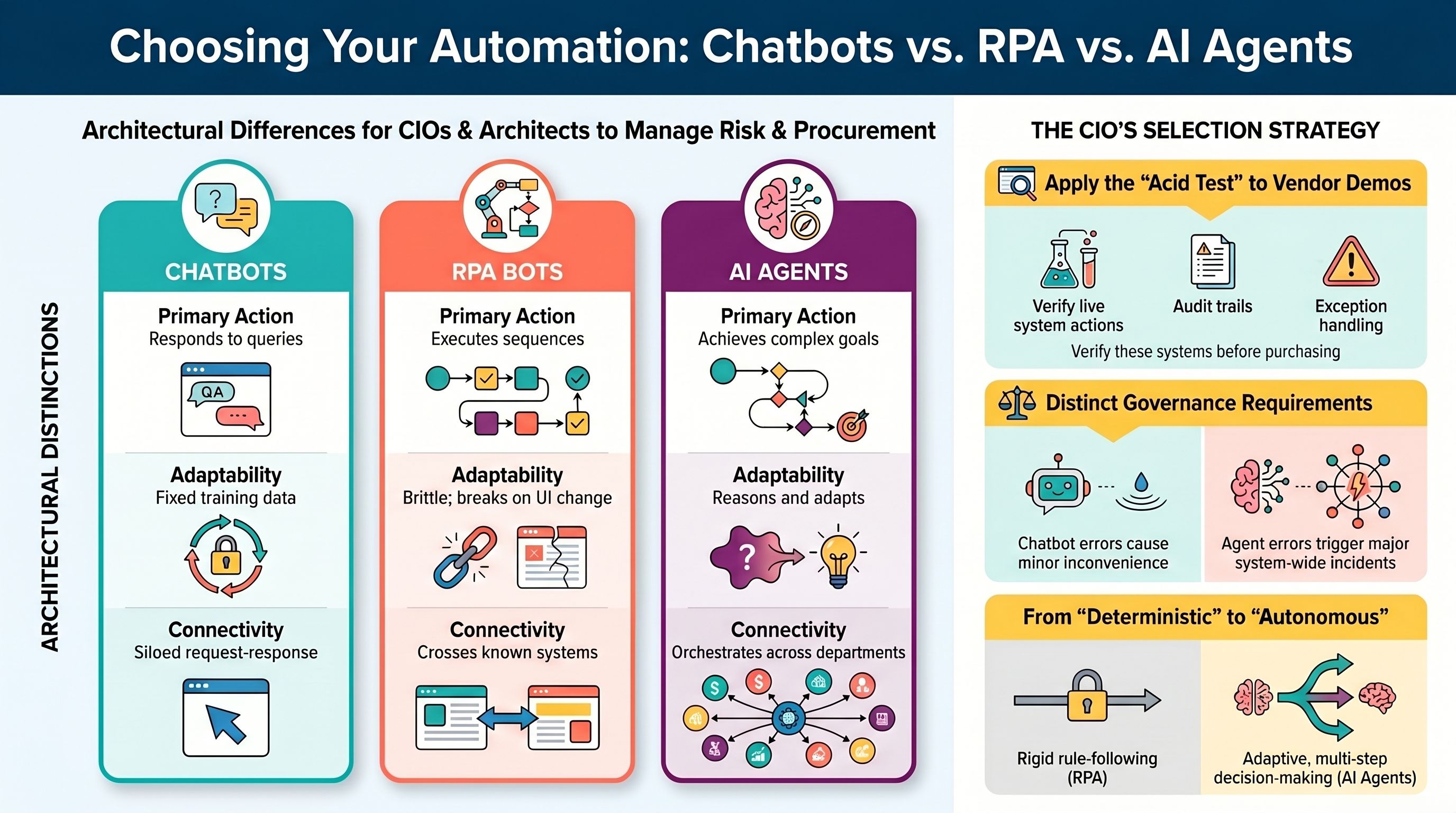

AI Agents vs. Chatbots vs. RPA — What CIOs Actually Need to Know

Before evaluating any platform, you need clarity on what you are actually buying. The market in 2026 is crowded with vendors rebranding chatbot products and RPA bots as "AI agents." The mislabeling is not accidental — it is a commercial strategy. Understanding the architectural differences protects your procurement process from being misled by demo impressions.

Here is the clear distinction across the three categories:

Chatbots respond to queries. They operate on a request-response model: a user asks a question, the chatbot retrieves a pre-defined answer or generates a response. Chatbots do not initiate action. They do not cross systems. They do not maintain state across a multi-step workflow. If a chatbot hits a scenario it was not trained for, it either produces a wrong answer or escalates to a human. Their ceiling is conversation quality.

RPA bots follow rules. Robotic Process Automation executes predefined sequences — log into System A, extract data field B, paste it into System C. RPA is effective for high-volume, highly repetitive tasks in stable environments. Its fatal weakness is brittleness: when a UI changes, a field moves, or an exception appears that the rule did not anticipate, the bot breaks and requires manual intervention to restart. RPA is deterministic by design. It cannot reason, adapt, or handle ambiguity.

Enterprise AI agents execute goals. They perceive inputs from multiple data sources simultaneously, reason across them using an AI model, make multi-step decisions, take actions in live systems — creating SAP sales orders, updating CRM records, triggering compliance flags, dispatching notifications — and produce a complete audit trail of every decision and action taken. Unlike RPA, they adapt to variation. Unlike chatbots, they act. Unlike both, they can orchestrate across departments and systems that were never designed to talk to each other.
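To make the perceive, reason, act, and record cycle concrete, here is a minimal Python sketch. Every name in it (`Model`, `RuleModel`, `Agent`, the `create_sales_order` and `escalate` actions) is hypothetical and does not correspond to any vendor's API, and the "model" is a stub rule so the example runs without an AI provider. The point is structural: the model sits behind an interface, actions go through a registry of allowed operations, and every step is recorded.

```python
from dataclasses import dataclass, field
from typing import Callable, Protocol


class Model(Protocol):
    """Hypothetical model interface: any provider could sit behind it."""
    def decide(self, goal: str, observations: dict) -> dict: ...


class RuleModel:
    """Stand-in for an AI model so the sketch runs offline (assumption)."""
    def decide(self, goal: str, observations: dict) -> dict:
        if observations["crm"]["status"] == "approved":
            return {"action": "create_sales_order",
                    "args": {"customer": observations["crm"]["customer"]},
                    "reason": "CRM shows an approved deal"}
        return {"action": "escalate", "args": {}, "reason": "deal not approved"}


@dataclass
class Agent:
    model: Model
    actions: dict[str, Callable[..., str]]
    audit: list[dict] = field(default_factory=list)

    def run(self, goal: str, sources: dict[str, Callable[[], dict]]) -> str:
        # Perceive: pull inputs from every connected source.
        observations = {name: fetch() for name, fetch in sources.items()}
        # Reason: ask the model for the next action.
        decision = self.model.decide(goal, observations)
        # Act: only actions registered with the agent can run.
        result = self.actions[decision["action"]](**decision["args"])
        # Record: keep what was seen, what was decided, and what happened.
        self.audit.append({"goal": goal, "observed": sorted(observations),
                           "decision": decision, "result": result})
        return result


agent = Agent(
    model=RuleModel(),
    actions={"create_sales_order": lambda customer: f"SO created for {customer}",
             "escalate": lambda: "sent to human queue"},
)
print(agent.run("book approved deals",
                {"crm": lambda: {"status": "approved", "customer": "ACME"}}))
# SO created for ACME
```

Swapping `RuleModel` for a class that wraps a different model provider changes nothing else in the agent, which is the kind of model portability the checklist later asks about.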

The practical test for distinguishing an agent from a chatbot in any vendor demo is simple: ask the vendor to show you the agent taking an action in a live system — not just generating a response. Ask to see the audit trail of that action. Ask what happens when an exception occurs. If the demo cannot answer all three, you are looking at a chatbot wearing an agent's clothing.

This distinction matters enormously for CIOs because the organizational impact — and the governance requirements — are fundamentally different. A chatbot failing to answer a question is a minor inconvenience. An agent taking the wrong action in your ERP, billing system, or compliance workflow is an incident.

The 5 Non-Negotiable Criteria for Enterprise AI Agent Platform Evaluation

Most platforms look impressive in a 45-minute demo. The five criteria below are designed to expose the gap between demo-stage capability and production-grade architecture — the gap that procurement committees, security teams, and legal departments surface after the demo ends.

Platforms that satisfy all five are ready for enterprise deployment. Platforms that satisfy three or fewer will create operational and governance problems at scale, and because each criterion is non-negotiable, even a single gap deserves close scrutiny.

Criterion 1: System Integration Depth — Can It Connect to Your Actual Stack?

The single most common reason enterprise AI agent deployments stall is integration failure. The agent is capable. The platform is well-designed. But it cannot connect bidirectionally to the ERP, the HRIS, the proprietary operational database, or the industry-specific system that sits at the center of the workflow you need to automate.

When evaluating integration depth, the questions that matter are not "how many integrations do you have?" — any vendor can claim a large number. The questions are: are those connections bidirectional, meaning the agent can both read and write? Do they connect to the specific systems your organization actually runs — SAP, NetSuite, Oracle, Salesforce, ServiceNow, Workday — or to a set of generic productivity tools that look impressive in a diagram? And can your team add a new integration without waiting for the vendor's engineering queue?

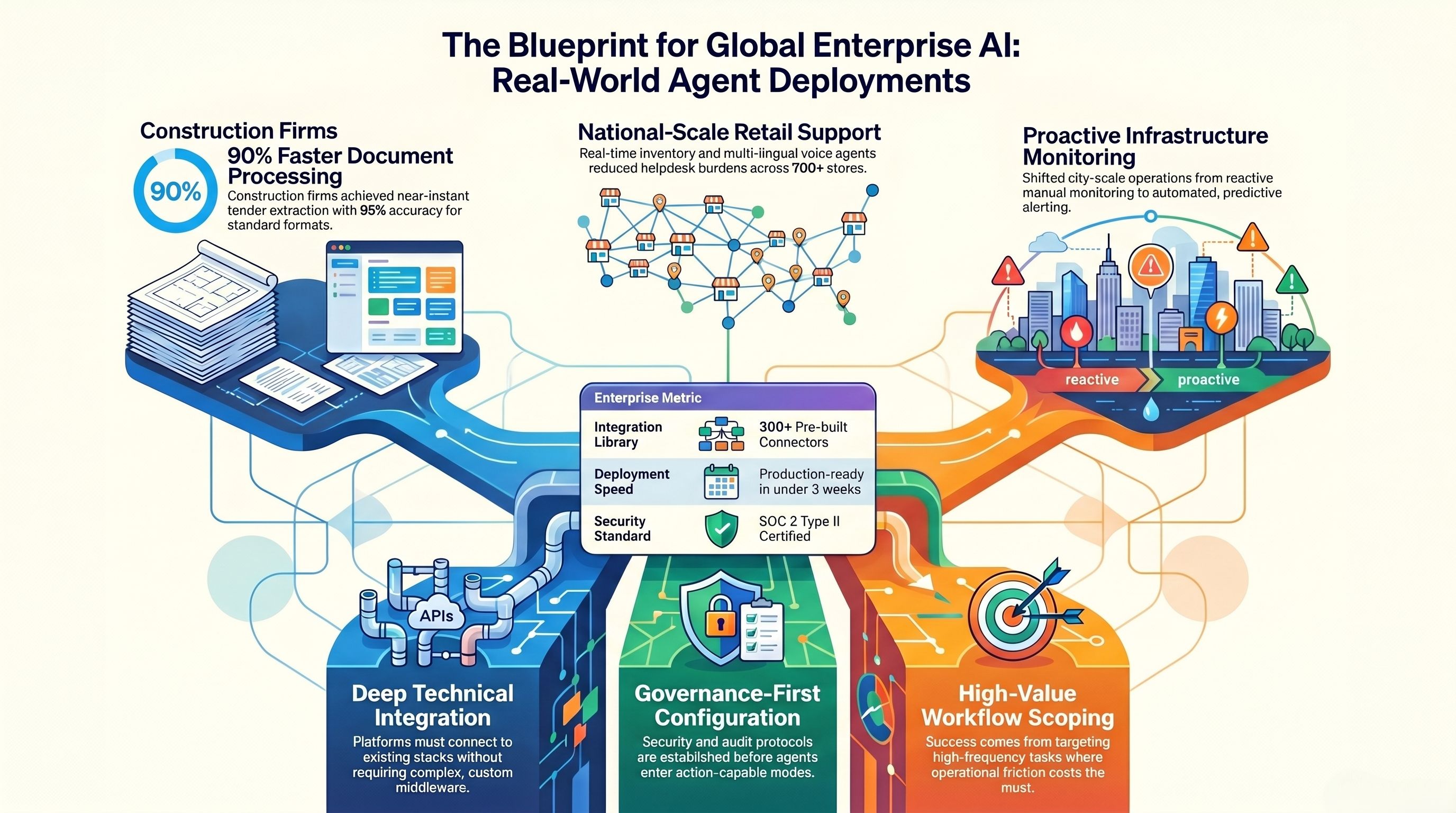

A production-ready enterprise AI agent platform should offer at minimum 300 pre-built connectors covering ERP and finance systems, CRM and sales platforms, data warehouses and analytics environments, workflow and RPA tools, identity and security systems, communication platforms, and cloud infrastructure. It should provide a unified API layer that eliminates point-to-point custom middleware — the sprawl that consumes engineering capacity and creates long-term maintenance debt.
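As a rough illustration of what "bidirectional" and "unified API layer" mean in practice: each connector implements both read and write against one shared contract, and agents call a single registry rather than point-to-point integrations. `Connector`, `InMemoryERP`, and `ConnectorRegistry` are hypothetical names; a real connector would wrap the target system's own API and authentication, which this sketch does not attempt.

```python
from abc import ABC, abstractmethod


class Connector(ABC):
    """Hypothetical common contract every system connector implements."""

    @abstractmethod
    def read(self, entity: str, key: str) -> dict: ...

    @abstractmethod
    def write(self, entity: str, key: str, values: dict) -> None: ...


class InMemoryERP(Connector):
    """Stand-in for an ERP; a real connector would call the system's API."""

    def __init__(self) -> None:
        self._rows: dict[tuple[str, str], dict] = {}

    def read(self, entity: str, key: str) -> dict:
        return dict(self._rows.get((entity, key), {}))

    def write(self, entity: str, key: str, values: dict) -> None:
        self._rows.setdefault((entity, key), {}).update(values)


class ConnectorRegistry:
    """Single entry point agents use instead of point-to-point middleware."""

    def __init__(self) -> None:
        self._connectors: dict[str, Connector] = {}

    def register(self, system: str, connector: Connector) -> None:
        self._connectors[system] = connector

    def read(self, system: str, entity: str, key: str) -> dict:
        return self._connectors[system].read(entity, key)

    def write(self, system: str, entity: str, key: str, values: dict) -> None:
        self._connectors[system].write(entity, key, values)


registry = ConnectorRegistry()
registry.register("erp", InMemoryERP())
registry.write("erp", "sales_order", "SO-1001", {"customer": "ACME", "qty": 40})
print(registry.read("erp", "sales_order", "SO-1001"))
# {'customer': 'ACME', 'qty': 40}
```

In this design, adding a new system means one new `Connector` subclass and one `register` call, which is roughly what the question "can your team add a new integration without the vendor's engineering queue?" is probing.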

The reason integration depth matters so much is that an agent is only as intelligent as the context it can access. An agent that cannot see your inventory system cannot meaningfully answer inventory questions. An agent that cannot write to your ERP cannot automate order creation. Intelligence without connection is expensive chat.

Across enterprise deployments we have worked with — spanning global logistics, large-scale retail, financial services, and healthcare — the organizations that achieved the fastest time to measurable value were those that chose platforms with deep, pre-built connectivity to their existing stacks. They did not spend the first three months building integrations. They spent those months configuring governance and deploying agents.

One example: a global logistics provider with complex port and inland rail operations needed a terminal management system that could digitize and optimize the connection between port operations and inland logistics — a workflow that crossed multiple operational systems that had never been connected. The deployment was possible precisely because the agent platform provided pre-built connectors across the relevant systems and a unified integration layer that eliminated the need for custom middleware development. The result was higher throughput predictability and more efficient coordination across terminal and inland logistics operations — outcomes that would have taken years of custom integration work to achieve through traditional means.

What to ask vendors: Show me the bidirectional integration with [your specific ERP]. How many engineering hours does it take to add a new integration not on your standard list? What happens when a system update breaks a connector — who fixes it, and how quickly?

Criterion 2: Governance and Auditability — What Happens When Something Goes Wrong?

This is the criterion that separates platforms IT leaders are willing to stand behind from platforms they quietly regret six months into production.

When an AI agent takes an action in a live system — creates a purchase order, flags a compliance issue, routes a customer escalation, updates a patient record — someone in your organization needs to be able to answer three questions afterward: Who authorized this action? What data did the agent access? What was the reasoning chain that led to this decision?

If you cannot answer those questions quickly and completely, you cannot pass an audit. You cannot meet GDPR, HIPAA, SOX, or EU AI Act requirements in regulated industries. You cannot respond credibly to an incident. And your legal team will not approve expanding the platform's scope — which caps your ROI permanently.

Enterprise-grade governance requires several specific architectural elements: role-based access control (RBAC) that defines what each agent can access and do at the field level, not just at the system level; immutable audit logs that record every agent action with full context — the data accessed, the decision made, the reasoning applied; approval workflows for high-impact actions that route to human review before execution; and SIEM export capability so your security operations team can include agent activity in their existing monitoring environment.
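One common way to make an audit log tamper-evident is hash chaining: each record stores the hash of the previous one, so editing or deleting any past entry breaks verification. The sketch below uses hypothetical names (`AuditLog`, `record`, `verify`, `query`, `to_jsonl`), captures the three questions raised earlier (who authorized, what data, what reasoning), and exports JSON Lines, a format many SIEM pipelines can ingest. It is an illustration under those assumptions, not any platform's actual log format; a production system would also need write-once storage.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only, hash-chained log of agent actions (illustrative)."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self._entries: list[dict] = []

    def record(self, agent: str, authorized_by: str, action: str,
               data_accessed: list[str], reasoning: str) -> dict:
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS
        body = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "authorized_by": authorized_by,
            "action": action,
            "data_accessed": data_accessed,
            "reasoning": reasoning,
            "prev_hash": prev_hash,
        }
        body["hash"] = self._digest(body)
        self._entries.append(body)
        return body

    @staticmethod
    def _digest(body: dict) -> str:
        # Hash every field except the hash itself, with stable key order.
        payload = {k: v for k, v in body.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def verify(self) -> bool:
        # Recompute the chain; any edited or removed entry breaks it.
        prev = self.GENESIS
        for entry in self._entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True

    def query(self, **filters) -> list[dict]:
        return [e for e in self._entries
                if all(e.get(k) == v for k, v in filters.items())]

    def to_jsonl(self) -> str:
        # One JSON object per line for SIEM ingestion.
        return "\n".join(json.dumps(e, sort_keys=True) for e in self._entries)


log = AuditLog()
log.record("po-agent", "role:procurement-approver", "create_purchase_order",
           ["erp.vendors.V-17", "erp.budget.Q3"], "Stock below reorder point")
log.record("po-agent", "role:procurement-approver", "notify_finance",
           ["erp.purchase_orders.PO-88"], "PO exceeds approval threshold")
print(log.verify())  # True
log._entries[0]["reasoning"] = "edited after the fact"  # simulate tampering
print(log.verify())  # False
```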

The governance layer also needs to be proactive, not just retrospective. The best implementations include policy enforcement that prevents agents from taking actions outside their defined scope, rather than merely logging that they did. That is the difference between a speed bump and a guardrail.
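The speed-bump-versus-guardrail distinction can be shown in a few lines: the check runs before the action executes and returns allow, block, or hold-for-approval. `AgentPolicy`, `enforce`, `Verdict`, and the field names are hypothetical, and the monetary threshold that marks an action as "high-impact" is an assumed rule you would set per workflow.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    BLOCK = "block"
    NEEDS_APPROVAL = "needs_approval"


@dataclass(frozen=True)
class AgentPolicy:
    # Field-level scope: system -> fields the agent may write.
    writable_fields: dict[str, frozenset[str]]
    # Actions at or above this amount go to a human first (assumed rule).
    approval_threshold: float


def enforce(policy: AgentPolicy, system: str, fields: set[str],
            amount: float = 0.0) -> Verdict:
    """Decide before execution; nothing runs unless this returns ALLOW."""
    allowed = policy.writable_fields.get(system, frozenset())
    if not fields <= allowed:
        return Verdict.BLOCK  # out of scope: prevented, not merely logged
    if amount >= policy.approval_threshold:
        return Verdict.NEEDS_APPROVAL
    return Verdict.ALLOW


policy = AgentPolicy(
    writable_fields={"erp": frozenset({"po.vendor_id", "po.quantity", "po.amount"})},
    approval_threshold=10_000,
)
print(enforce(policy, "erp", {"po.quantity"}, amount=2_500))       # Verdict.ALLOW
print(enforce(policy, "erp", {"po.amount"}, amount=48_000))        # Verdict.NEEDS_APPROVAL
print(enforce(policy, "erp", {"vendor.bank_account"}, amount=10))  # Verdict.BLOCK
print(enforce(policy, "hris", {"employee.salary"}))                # Verdict.BLOCK
```

Scoping at the field level is what lets this policy allow a quantity change while blocking a write to a vendor's bank details in the same system.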

Across regulated industry deployments — banking, healthcare, energy transmission, government utilities — the consistent finding is that governance architecture should be designed before the first agent goes into production, not retrofitted after the second. Organizations that cut corners on governance in the pilot phase spend significantly more time and budget rebuilding that infrastructure before they can scale. The technical debt is real and it compounds.

A mid-size financial services organization deploying AI agents for banking support — handling disputes, fraud workflows, compliance checks, and operational routing — required every agent interaction to be fully auditable for regulatory review. The deployment was built around omnichannel intake across chat, email, and phone, with audit trails, SLA monitoring, and integration with core banking systems. The audit trail capability was not a feature request — it was a procurement prerequisite. Without it, the project would not have passed legal review.

What to ask vendors: Show me the audit trail for this specific agent action. Can I define access at the field level, not just at the system level? How do I approve or block an action before it executes? What compliance certifications do you hold — and can I see the audit report?

Criterion 3: Multi-Agent Orchestration — Can It Scale Beyond One Use Case?

The first agent most enterprises deploy proves the concept. It handles a well-defined workflow, it saves time, and it generates enough organizational confidence to fund the next phase. The question is: does your platform architecture support the next phase — and the twenty phases after that?

Single-agent deployments are relatively straightforward. The governance is simple because the scope is narrow. The integration surface is limited. The failure modes are well-understood. But enterprise operations are not single-workflow problems. They are interconnected systems of processes that span multiple departments, multiple data sources, and multiple systems of record. Realizing the full value of AI agents in an enterprise environment requires deploying coordinated networks of agents that can pass context between them, respect each other's scope boundaries, and escalate to humans at the right point in a workflow.

Multi-agent orchestration is the architectural capability that makes this possible. It means the platform can coordinate specialized agents — a procurement monitoring agent, a vendor performance agent, and a finance alert agent — into a single workflow that spans three departments and four systems, with clear governance at every handoff and a unified audit trail across the entire process.
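A minimal sketch of the orchestration idea: three specialist agents share one context, each may only write the keys it owns (its scope boundary), every handoff lands in a single trail, and a failure or out-of-scope write routes the workflow to a human instead of silently halting. The agent roles mirror the procurement, vendor performance, and finance example in the paragraph above; the functions, the 5% price threshold, and the escalation behavior are hypothetical.

```python
from typing import Callable

Step = tuple[str, frozenset[str], Callable[[dict], dict]]


def orchestrate(steps: list[Step], context: dict, trail: list[str]) -> dict:
    """Run agents in order, passing shared context and enforcing scope."""
    context = dict(context)  # do not mutate the caller's input
    for name, owned_keys, agent in steps:
        try:
            output = agent(dict(context))  # each agent sees a copy
        except Exception as exc:  # any agent failure routes to a human
            trail.append(f"{name}: failed ({exc}); escalated to human")
            context["escalation"] = name
            return context
        out_of_scope = set(output) - owned_keys
        if out_of_scope:
            trail.append(f"{name}: rejected writes to {sorted(out_of_scope)}")
            context["escalation"] = name
            return context
        context.update(output)
        trail.append(f"{name}: set {sorted(output)}")
    return context


def procurement_monitor(ctx: dict) -> dict:
    change = (ctx["new_price"] - ctx["old_price"]) / ctx["old_price"]
    return {"price_change": round(change, 3)}


def vendor_performance(ctx: dict) -> dict:
    return {"vendor_risk": ctx["late_deliveries"] > 2}


def finance_alert(ctx: dict) -> dict:
    raise_alert = ctx["price_change"] > 0.05 or ctx["vendor_risk"]
    return {"alert": "notify CFO" if raise_alert else None}


steps: list[Step] = [
    ("procurement", frozenset({"price_change"}), procurement_monitor),
    ("vendor", frozenset({"vendor_risk"}), vendor_performance),
    ("finance", frozenset({"alert"}), finance_alert),
]
trail: list[str] = []
result = orchestrate(steps, {"old_price": 100.0, "new_price": 108.0,
                             "late_deliveries": 1}, trail)
print(result["alert"])  # notify CFO
```

Because every step appends to the same `trail`, the whole cross-department workflow can be reviewed in one place, and a failing step stops downstream agents from acting on incomplete context.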

This is not a theoretical capability. It is already in production across complex enterprise environments. A holding group managing 30-plus companies across retail, building, industrial, and services portfolios deployed an AI platform to automate procurement and finance KPI alerts for all group entities simultaneously, covering purchase price trend monitoring, gross margin impact, vendor delivery and returns performance, and working capital indicators. The deployment required agents that could operate across multiple business units, aggregate intelligence from disconnected financial systems, and surface alerts to the right leadership stakeholders at the right time. Single-agent architecture would not have supported this. Multi-agent orchestration made it possible.

Another example comes from a smart infrastructure operator managing city-scale operations touching more than 150 million urban lives across 25-plus smart city operation centres, connecting 2 million-plus assets and applications. The deployment required agentic analytics that could monitor, route, and respond across an infrastructure of this scale — with automated operational alerting that replaced the manual monitoring previously required. The orchestration layer was not optional. It was the product.

What to ask vendors: Show me a live deployment where multiple agents are coordinating across more than two systems. How does context pass between agents? What happens when one agent in an orchestrated workflow fails — does the whole workflow fail, or does it route correctly? How do you version multi-agent workflows when you need to update one component?

Criterion 4: Speed to Production — What Is the Real Deployment Timeline?

Time to value is a CFO metric as much as it is a technical one. The organization approving the platform investment wants to see measurable operational impact before the next budget cycle. That creates a legitimate constraint that platform architecture either respects or ignores.

For enterprise AI agent deployments, the typical time from contract signing to production is 8 to 12 weeks even at the faster end, and significantly longer for platforms that require substantial custom integration work, extensive security review, or heavyweight professional services onboarding before anything goes live.

The benchmark you should hold vendors to is four weeks: initial architecture review, system integration, governance configuration, read-only validation, and controlled rollout to action-capable agents. That timeline is achievable when the platform has pre-built integrations to your existing stack, a well-designed governance configuration layer that does not require custom engineering, and a deployment methodology that sequences the work correctly.

The sequencing matters as much as the timeline. The correct sequence is: establish system connectivity first, configure governance and access controls second, validate with read-only agents that observe without acting third, and expand scope to action-capable agents fourth. Organizations that skip the read-only validation phase — in a rush to demonstrate value — disproportionately experience the governance incidents that erode executive confidence and stall broader deployment.
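The four-phase sequence can be enforced in software rather than left to discipline. In this sketch (the `Phase` and `Rollout` names are hypothetical), the rollout refuses to skip a phase, agents cannot act at all before read-only validation, and during read-only validation any attempted write is recorded as a proposal for human review instead of being executed.

```python
from enum import IntEnum


class Phase(IntEnum):
    CONNECTIVITY = 1
    GOVERNANCE = 2
    READ_ONLY = 3
    ACTION_CAPABLE = 4


class Rollout:
    def __init__(self) -> None:
        self.phase = Phase.CONNECTIVITY
        self.proposals: list[str] = []  # writes observed during read-only

    def advance(self, to: Phase) -> None:
        # Only the next phase is allowed, so read-only validation cannot be skipped.
        if to != self.phase + 1:
            raise ValueError(f"cannot move from {self.phase.name} to {to.name}")
        self.phase = to

    def attempt_write(self, description: str) -> str:
        if self.phase < Phase.READ_ONLY:
            raise PermissionError("agents are not live before read-only validation")
        if self.phase == Phase.READ_ONLY:
            self.proposals.append(description)  # observe, do not act
            return "proposed"
        return "executed"


rollout = Rollout()
rollout.advance(Phase.GOVERNANCE)
try:
    rollout.advance(Phase.ACTION_CAPABLE)  # attempt to skip read-only
except ValueError as err:
    print(err)  # cannot move from GOVERNANCE to ACTION_CAPABLE
rollout.advance(Phase.READ_ONLY)
print(rollout.attempt_write("update CRM stage for deal 42"))  # proposed
rollout.advance(Phase.ACTION_CAPABLE)
print(rollout.attempt_write("update CRM stage for deal 42"))  # executed
```

The `proposals` list is the useful by-product of the read-only phase: reviewers can compare what the agent would have done against what humans actually did before granting write access.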

What slows deployments most is not the AI capability itself. It is integration complexity (choosing a platform without pre-built connectors to your stack), governance configuration (trying to retrofit audit trail requirements that the platform was not designed to support), and organizational readiness (deploying agents before the teams responsible for reviewing exceptions have been trained to do so). A platform that is genuinely built for enterprise deployment minimizes all three.

What to ask vendors: What is your median time from contract to production for a deployment of comparable scope to ours? Can you walk me through the specific steps and who owns each one? What are the most common causes of deployment delay, and how does your architecture minimize them? Can I speak with a reference customer who deployed on the timeline you are quoting?

Criterion 5: Compliance Posture — What Certifications Should You Require?

For enterprises in financial services, healthcare, energy, government, and any organization operating across multiple jurisdictions, compliance posture is a procurement gate — not a nice-to-have. A platform that cannot demonstrate the right certifications does not make it to the shortlist.

The baseline requirements in 2026 are as follows. SOC 2 Type II confirms that the platform's security controls have been independently audited over a sustained period, not just at a single point in time; strictly speaking it is an auditor's attestation report rather than a certification, so ask for the report itself. HIPAA readiness is required for any deployment touching health data. GDPR compliance is required for any deployment with European data subjects. SOX controls are required for deployments touching financial reporting workflows. ISO 27001 certification is increasingly expected by enterprise procurement teams as evidence of systematic information security management.

Beyond certifications, compliance posture requires architectural capabilities: encryption of data in transit and at rest; data masking and DLP (data loss prevention) to prevent agents from surfacing sensitive data outside authorized contexts; data residency controls so that data processed in Europe stays in Europe; on-premise and private cloud deployment options for organizations with strict data sovereignty requirements; and retention policies that allow you to define how long agent activity logs are stored and under what conditions they are purged.
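Two of these controls are simple enough to sketch. Below, a masking function redacts sensitive fields unless the agent's context is authorized for them, before the data ever reaches a model, and a retention function drops activity-log entries older than a configured window. The field classification, the 90-day window, and the function names are all hypothetical; real DLP usually also scans free text for patterns, which this sketch does not attempt.

```python
from datetime import datetime, timedelta, timezone

SENSITIVE_FIELDS = {"ssn", "iban", "diagnosis"}  # assumed classification


def mask_record(record: dict, authorized: set[str]) -> dict:
    """Replace sensitive values unless this context is authorized for them."""
    return {
        key: value if key not in SENSITIVE_FIELDS or key in authorized else "***"
        for key, value in record.items()
    }


def purge_expired(logs: list[dict], retention: timedelta, now: datetime) -> list[dict]:
    """Keep only log entries younger than the retention window."""
    return [entry for entry in logs if now - entry["ts"] < retention]


patient = {"name": "J. Doe", "diagnosis": "asthma", "ssn": "000-00-0000"}
print(mask_record(patient, authorized={"diagnosis"}))
# {'name': 'J. Doe', 'diagnosis': 'asthma', 'ssn': '***'}

now = datetime(2026, 6, 1, tzinfo=timezone.utc)
logs = [{"id": 1, "ts": now - timedelta(days=200)},
        {"id": 2, "ts": now - timedelta(days=10)}]
print([e["id"] for e in purge_expired(logs, timedelta(days=90), now)])  # [2]
```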

The EU AI Act, entering broader enforcement in 2026, adds a layer of requirements specifically for agentic AI systems operating in high-risk categories such as financial decisions, healthcare applications, infrastructure management, and employment-related processes. Organizations in these categories need platforms that can demonstrate human oversight mechanisms, transparency of reasoning, and the ability to contest or override agent decisions. Governance designed with these requirements in mind is structurally different from governance bolted on after the fact.

A practical test: ask any vendor for their compliance documentation before the demo. A vendor confident in their compliance posture will send it immediately. A vendor who needs to "check with the team" is giving you important information about the maturity of their compliance architecture.

What to ask vendors: Can you provide your SOC 2 Type II audit report today? What is your data residency policy — where does data processed by agents actually reside? Do you offer on-premise deployment, and what does that architecture look like? How does your platform support EU AI Act compliance for high-risk applications?

The CIO's 10-Point Vendor Evaluation Checklist

Use this checklist in every vendor conversation. Each item represents either a hard blocker, something that will prevent the deployment from passing procurement review, or a scaling constraint that will limit your return on investment over time. Score each item before you move a vendor from shortlist to final evaluation.

1. Bidirectional integration with your core systems. Does the platform connect to your specific ERP, CRM, and HRIS with bidirectional data flow — not just read access? Agents that cannot write to your systems of record cannot automate meaningful workflows.

2. Field-level access control. Can you define what each agent can access at the field level within each system — not just which systems it can connect to? Coarse-grained access control is an audit and compliance risk.

3. Complete, queryable audit trails. Is there an immutable log of every agent action — what data was accessed, what decision was made, what reasoning was applied — that you can query for any specific incident within minutes?

4. Named compliance certifications. Does the vendor hold SOC 2 Type II certification? Is the platform HIPAA-ready, GDPR-compliant, and capable of supporting SOX controls? Can they provide documentation today?

5. Multi-agent orchestration at scale. Does the platform support coordinated multi-agent workflows that span multiple departments and systems, with governed handoffs and a unified audit trail across the full workflow?

6. On-premise or private cloud deployment. Can you run the platform entirely within your own infrastructure — your data center or private cloud — if your data sovereignty or security requirements demand it?

7. Demonstrated time to production. What is the vendor's actual median deployment timeline for enterprises of comparable size and complexity? Four weeks should be achievable. Anything beyond eight weeks for a standard deployment signals integration or governance complexity the vendor has not solved.

8. Human-in-the-loop design. How does the platform handle exceptions, edge cases, and high-impact actions that require human approval before execution? Is human oversight a native architectural feature or an afterthought?

9. Model portability. Can you replace the underlying AI model — switching providers, or upgrading to a newer model — without rearchitecting the platform? Model lock-in is as limiting as vendor lock-in.

10. Reference customers at comparable scale. Can the vendor provide at least two referenceable enterprise customers — not SMBs — who have deployed in your industry or at your organizational scale, with measurable outcomes they will describe on a reference call?
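If you want scoring to stay consistent across vendors, a small rubric helps. The sketch below is one hypothetical way to apply the checklist, not a method it prescribes: each item is marked pass or fail, and items are tagged as either a blocker or a scaling constraint. Which items count as blockers (here assumed to be 1, 2, 3, 4, and 8) is a judgment call you should adjust to your own procurement gates; any failed blocker keeps a vendor off the final list regardless of total score.

```python
# Item number -> (short label, assumed category). Categories are an assumption.
CHECKLIST = {
    1: ("Bidirectional integration", "blocker"),
    2: ("Field-level access control", "blocker"),
    3: ("Queryable audit trails", "blocker"),
    4: ("Named compliance certifications", "blocker"),
    5: ("Multi-agent orchestration", "constraint"),
    6: ("On-premise / private cloud", "constraint"),
    7: ("Demonstrated time to production", "constraint"),
    8: ("Human-in-the-loop design", "blocker"),
    9: ("Model portability", "constraint"),
    10: ("Reference customers at scale", "constraint"),
}


def evaluate(vendor: str, passed: set[int]) -> dict:
    """Score one vendor; any failed blocker stops it from advancing."""
    failed = set(CHECKLIST) - passed
    blockers = sorted(i for i in failed if CHECKLIST[i][1] == "blocker")
    constraints = sorted(i for i in failed if CHECKLIST[i][1] == "constraint")
    return {
        "vendor": vendor,
        "score": f"{len(passed & set(CHECKLIST))}/{len(CHECKLIST)}",
        "advance": not blockers,
        "failed_blockers": [CHECKLIST[i][0] for i in blockers],
        "scaling_risks": [CHECKLIST[i][0] for i in constraints],
    }


a = evaluate("Vendor A", passed={1, 2, 3, 4, 5, 7, 8, 10})
print(a["score"], a["advance"], a["scaling_risks"])
# 8/10 True ['On-premise / private cloud', 'Model portability']
b = evaluate("Vendor B", passed=set(range(1, 11)) - {3})
print(b["score"], b["advance"], b["failed_blockers"])
# 9/10 False ['Queryable audit trails']
```

Separating blockers from scaling risks keeps a high raw score from masking a single disqualifying gap, as Vendor B shows.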

What Real Enterprise AI Agent Deployments Look Like — Evidence Across 12 Industries

Theory is useful. Pattern recognition is more useful. The following examples are drawn from actual enterprise AI agent deployments across industries, geographies, and organizational scales. Client names are not published — but the problems, solutions, and outcomes are real.

The pattern across all of them is consistent: the deployments that produced the fastest and largest measurable impact shared three characteristics. The platform connected deeply to the existing technology stack without requiring custom middleware. Governance was configured before agents went into action-capable mode. And the deployment was scoped to a high-frequency, high-value workflow — not the most impressive use case, but the one where operational friction was costing the organization the most time and money every week.

Luxury Hospitality — East Africa. A luxury lodge and camp collection operating across iconic safari destinations needed to handle complex guest booking requests — itinerary customization, real-time inventory across 16 properties, alternative date negotiation — without the back-and-forth that degraded the guest experience and consumed staff capacity. A digital booking agent was deployed with email intake, intent classification, conversational data capture for missing details, real-time inventory checks, and automated invoice and document generation. The result: faster booking turnaround, higher accuracy on complex multi-property requirements, and scalable operations without compromising the service quality that defines the brand.

Construction and Remediation — Australia. A waterproofing diagnostics and commercial works specialist with a high bid volume needed to process complex tender documents (extraction, revision analysis, integration into operational systems) faster and with greater accuracy. A multi-agent Intelligent Document Workbench was deployed with tender retrieval, workflow determination, vision-LLM extraction from complex PDFs, and deep integration with their core operational platform. Engineering targets: approximately 90% faster tender document processing and approximately 95% extraction accuracy for standard formats, with reduced bid risk through automated revision and change detection.

Retail at National Scale — India. A value retail operator with more than 700 stores across hundreds of cities needed to reduce manual helpdesk burden, improve store-level inventory visibility, and accelerate onboarding for new store staff. Three AI agents were deployed simultaneously: a voice support agent handling queries in Hindi and English; an inventory intelligence agent providing real-time pricing, stock, and promotional data per store; and a knowledge and training agent built on a RAG architecture over point-of-sale and SOP documentation. The deployment reduced manual helpdesk load, improved store-level inventory visibility across the national footprint, and enabled faster onboarding through on-demand training guidance.

Banking and Financial Services — Global. A global fintech provider serving banks and credit unions needed to modernize dispute handling, fraud workflow support, compliance monitoring, and operational routing across omnichannel intake — chat, email, and phone. AI agents were deployed with full audit trail generation, SLA monitoring, and integration with core banking systems. The deployment reduced manual case handling load, improved consistency of responses, and built the compliance audit trail infrastructure that regulatory teams required. The architecture was specifically designed for financial services auditability requirements — not retrofitted to meet them.

Energy and Utilities — India (State Level). A state power transmission utility responsible for delivering reliable power across an entire state needed to monitor, forecast, and respond to transmission system performance — detecting anomalies, predicting maintenance requirements, and alerting field operations — at a scale that manual monitoring could not sustain. AI was deployed for energy management: utility and sensor data ingestion, anomaly detection, forecasting, optimisation recommendations, and proactive alerting. The result was improved energy visibility, faster detection of inefficiencies, reduced manual monitoring effort, and more predictable operations through early-warning alerts.

Smart Infrastructure — India (City Scale). A smart infrastructure operator running city-scale operations — 25-plus smart city operation centres, 2 million-plus connected assets and applications, touching more than 150 million urban lives — needed agentic analytics and automated operational alerting on top of existing smart utility systems. The deployment included smart grid data ingestion, operational dashboards, predictive analytics for outages, losses, and field issues, automated alert generation, and workflow routing for resolution. The outcome: higher operational visibility across grid operations, faster exception detection and response coordination, and a shift from reactive manual monitoring to proactive, always-on coverage.

Global Logistics — UAE and International. A global ports and logistics leader — with reported record revenue exceeding $20 billion in its most recent fiscal year — needed to digitize and optimize the connection between terminal and inland rail logistics operations. A terminal and rail management solution was deployed to handle workflow digitization, yard and rail operational dashboards, rail scheduling and visibility, exception management, and executive-level operational reporting. The result was improved predictability of terminal-to-rail throughput, more efficient coordination across logistics operations, and improved operational visibility for leadership.

Pharma Sourcing — India. A pharma sourcing and excipients platform handling more than 7,500 SKUs needed to automate request-for-quote workflows, supplier discovery, procurement decision support, and quality and regulatory document handling. AI agents were deployed to automate RFQ generation, supplier matching, and procurement analysis — reducing vendor coordination overhead and improving price and lead-time competitiveness through structured sourcing intelligence.

Healthcare Staffing — United States. A healthcare staffing platform connecting nursing professionals with healthcare facilities needed to reduce the friction in shift-matching, scheduling, and compliance management. AI agents were deployed across talent onboarding, credential capture, facility staffing request intake, matching logic, scheduling, compliance workflows, and fill-rate reporting. The result: faster fill cycles, lower scheduling friction, better workforce utilization, and improved staffing responsiveness for facilities.

Physician-Led Clinical Operations — United States. A physician-led clinical enterprise operating hospitalist programs needed better visibility into revenue management, operational performance, and care-program outcomes. Data analytics workflows were deployed for revenue and utilization analytics, performance dashboards with variance explanations, and operational revenue cycle visibility with exception alerts. The result was improved visibility into revenue leakage drivers, faster operational decision-making through unified reporting, and better decision support for leadership.

Real Estate Portfolio Management — UAE. A major real estate portfolio owner and manager with diversified office, retail, industrial, and residential assets across multiple emirates needed to automate tenant and customer support workflows — inquiry triage, rental and payment support, ticketing, escalation routing — while giving leadership better visibility into portfolio performance. A customer service AI agent was deployed across web, WhatsApp, and email channels, with tenant query triage, knowledge base coverage over policies and tenancy documentation, and automated escalation to human teams. The result was faster response times, consistent 24/7 tenant experience, and better SLA adherence through automated routing and tracking.

Market Research and Analytics — India. A financial market research platform using Elliott Wave theory and related indicators needed to automate the production of research packs and insight generation from market data. Data ingestion pipelines, indicator workflows, research automation, and thematic dashboards were deployed — producing faster market insight packs, more repeatable and consistent research workflows, and better signal visibility through automated analytics.

The geographic and industry range matters. These are not pilot deployments in controlled environments. They span Africa, Australia, India, the UAE, the UK, and the United States. They cover regulated industries — financial services, healthcare, energy — and high-velocity commercial operations. The common thread is not the industry. It is the platform architecture: deep integration, governance-first, multi-agent orchestration, and measurable operational outcomes.

assistents.ai is the enterprise agentic AI platform built for organizations that need to scale AI infrastructure without rearchitecting. 300+ integrations. SOC 2 Type II certified. Production in under three weeks. Learn more at assistents.ai/solutions/cto

Common Mistakes CIOs Make When Buying AI Agent Platforms

Pattern recognition works in both directions. The deployments that stalled, required rebuilding, or failed to scale beyond the pilot share a consistent set of root causes. Understanding them before you select a platform is significantly cheaper than learning them after.

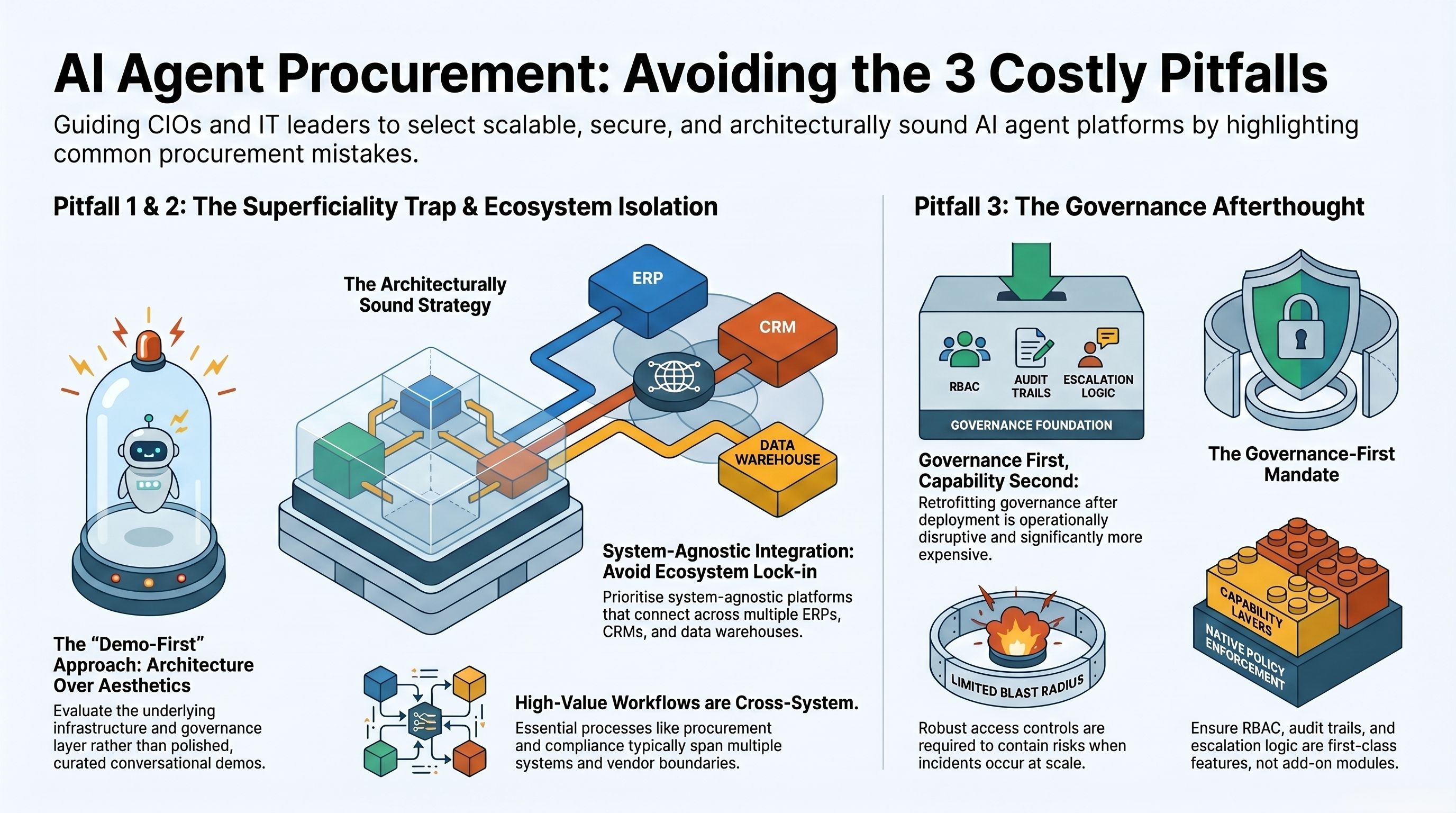

Mistake 1: Prioritising demo fluency over architecture depth.

The most compelling demos in enterprise AI almost always feature polished conversational interfaces, impressive response quality, and carefully curated scenarios. What they rarely show is the audit trail of the action just taken, the RBAC configuration that limited what the agent was allowed to do, the escalation logic that kicked in when the agent hit an edge case, or the governance layer that will prevent the agent from accessing data it should not see in production.

The mistake is to evaluate a platform on the basis of how it performs in a controlled demonstration rather than whether its underlying architecture supports the governance, auditability, and integration requirements that your enterprise actually has. Ask every vendor to take you behind the demo and show you the infrastructure. The ones with strong architecture will welcome the question. The ones without it will redirect to another feature.

Mistake 2: Choosing ecosystem-locked platforms.

Several major enterprise software vendors offer AI agent capabilities that are deeply integrated with their own platforms and essentially non-functional outside them. An agent built on one CRM vendor's ecosystem works well if your entire operation runs on that vendor's stack. If your organization — like most large enterprises — operates across multiple ERPs, CRMs, data warehouses, and industry-specific systems, an ecosystem-locked platform will automate only the workflows that touch that vendor's products and stop at every boundary where another system begins.

The operational reality of enterprise AI is that the highest-value workflows are almost always cross-system: procurement that spans supplier management, ERP, and finance approvals; customer operations that span CRM, support ticketing, and billing; compliance monitoring that spans policy documentation, transaction systems, and reporting. Ecosystem-locked platforms cannot reach across those boundaries without expensive custom integration work — which defeats the purpose of choosing a pre-built platform.

The architecture you need is system-agnostic: a platform that connects to your entire stack regardless of vendor, with pre-built connectors that do not require your engineering team to maintain them.
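To make "system-agnostic" concrete, here is a minimal Python sketch. It assumes a hypothetical `Connector` protocol that every vendor adapter implements, so agent-side logic such as `sync_field` is written once against the protocol and never against a specific vendor. `InMemoryERP` and `InMemoryCRM` are illustrative stand-ins, not real connectors, and none of these names come from any particular platform's API.

```python
from typing import Protocol


class Connector(Protocol):
    """Hypothetical vendor-neutral contract that every system adapter implements."""

    system: str

    def read(self, record_id: str) -> dict: ...

    def write(self, record_id: str, fields: dict) -> None: ...


class InMemoryERP:
    """Illustrative stand-in; a real adapter would call the vendor's API here."""

    system = "erp"

    def __init__(self) -> None:
        self._rows: dict[str, dict] = {}

    def read(self, record_id: str) -> dict:
        # Return a copy so callers cannot mutate stored state directly.
        return dict(self._rows.get(record_id, {}))

    def write(self, record_id: str, fields: dict) -> None:
        self._rows.setdefault(record_id, {}).update(fields)


class InMemoryCRM(InMemoryERP):
    """Same behaviour, different system label, to show the logic is vendor-blind."""

    system = "crm"


def sync_field(src: Connector, dst: Connector, record_id: str, field: str) -> None:
    """Copy one field from any system to any other through the shared contract."""
    value = src.read(record_id).get(field)
    if value is not None:
        dst.write(record_id, {field: value})


erp, crm = InMemoryERP(), InMemoryCRM()
erp.write("ACME-1", {"credit_limit": 50_000})
sync_field(erp, crm, "ACME-1", "credit_limit")
print(crm.system, crm.read("ACME-1"))  # crm {'credit_limit': 50000}
```

Adding a new system then means writing one adapter that satisfies the protocol; the workflow code that calls `sync_field` does not change.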

Mistake 3: Deploying without a governance framework and trying to retrofit one later.

The most expensive mistake in enterprise AI agent deployment is treating governance as a post-launch problem. The reasoning is understandable: the pilot needs to demonstrate value quickly, governance configuration takes time, and the argument is made that you can "add proper governance once the use case is proven."

What happens in practice is that the pilot succeeds, scope expands, more agents go into production, and governance is still a collection of informal conventions rather than an enforced architecture. When an incident occurs — and at scale, incidents are not hypothetical — the absence of an audit trail means you cannot explain what happened. The absence of RBAC means you cannot contain the blast radius. The absence of escalation workflows means human review was not available at the decision point where it was needed.

Rebuilding governance infrastructure after agents are in production is operationally disruptive and organizationally expensive. The correct sequence is always governance first, capability second. Platforms that make this easy — that treat audit trails, RBAC, and policy enforcement as first-class features rather than add-on modules — make this sequencing practical rather than theoretical.
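One common way to make an audit trail tamper-evident is hash chaining: each entry stores the SHA-256 hash of the previous entry, so editing or reordering a record, or removing one that is not the last, breaks verification. The sketch below assumes that technique; `AuditLog` and its methods are hypothetical, and a production system would also persist entries to write-once storage and anchor the latest hash externally (to detect truncation) rather than keep an in-memory list.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same content always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditLog:
    """Append-only, hash-chained log of agent actions (illustrative, in memory)."""

    entries: list[dict] = field(default_factory=list)

    def append(self, agent: str, action: str, context: dict) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"agent": agent, "action": action, "context": dict(context), "prev": prev}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; an edited, reordered or (non-final) removed entry fails."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in ("agent", "action", "context", "prev")}
            if entry["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True

    def query(self, agent: str) -> list[dict]:
        """Return every action a given agent took, in order."""
        return [e for e in self.entries if e["agent"] == agent]


log = AuditLog()
log.append("invoice-agent", "match_invoice", {"invoice": "INV-7", "amount": 120})
log.append("invoice-agent", "post_to_erp", {"invoice": "INV-7"})
print(log.verify())                        # True
log.entries[0]["context"]["amount"] = 999  # simulate tampering with a stored record
print(log.verify())                        # False
```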

For a deeper treatment of governance architecture, the AI Agent Governance Playbook at assistents.ai/resources/ai-agent-governance-playbook covers the framework in detail.

Build vs. Buy vs. Assemble — The CIO Decision Framework for 2026

Every CIO evaluating AI agent platforms will eventually face the build vs. buy question. In 2026, that binary has largely been replaced by a three-way decision that better reflects the actual range of options available.

Build means constructing your agent architecture from foundational components — LLM APIs, orchestration frameworks like LangGraph or Microsoft's Agent Framework, custom integration code, and a governance layer your engineering team designs and maintains. This gives maximum architectural control and the ability to optimize for your specific domain. It is appropriate when the workflow is genuinely proprietary — where your competitive advantage depends on an approach no vendor has productized — and when you have the engineering capacity to build, maintain, and evolve the infrastructure over time. For most enterprises, the honest assessment is that neither condition is met for most workflows.

Buy means selecting a vendor suite that promises to handle everything — a single platform with pre-built agents, integrations, governance, and orchestration. The appeal is simplicity and speed. The risk is vendor lock-in: when your critical workflows run on a single vendor's architecture, your negotiating leverage, your ability to adopt better models as they emerge, and your capacity to integrate new systems on your own timeline are all constrained by that vendor's product roadmap.

Assemble means choosing an enterprise AI agent platform that provides the infrastructure layer — integrations, orchestration, governance, the context engine — while maintaining flexibility at the model layer, the agent configuration layer, and the integration layer. The platform handles the plumbing. Your team configures the agents, defines the governance policies, and deploys to your specific workflows. This is the dominant pattern in 2026 for enterprises that need to move fast without sacrificing architectural control.

The assemble model works because the hardest problems in enterprise AI agent deployment are not AI problems; they are integration problems and governance problems. Getting a large language model to reason effectively across a well-structured workflow is now largely a solved problem. Getting that workflow connected to your SAP instance, your Workday environment, your proprietary database, and your compliance reporting system, with a governance layer that satisfies your legal team, is the hard work. A platform designed for enterprise assembly takes on that hard work. You bring domain knowledge. The platform brings the infrastructure.

The practical test for whether a platform supports the assemble model: can your team add a new integration, define a new agent, and configure its governance policies without requiring a professional services engagement or a vendor engineering ticket? If the answer is no, you are buying, not assembling — and the lock-in implications apply.

How to Build Your Internal Business Case for an AI Agent Platform

Platform investment decisions in 2026 require a business case that finance will trust — not a technology proposal, but an operational ROI model with specific metrics, defensible assumptions, and a timeline tied to budget cycles.

The ROI model for enterprise AI agents operates across three levers.

Operational cost reduction is the most direct lever and the easiest to model. Identify the manual processes that agents will replace or augment: tender document processing, invoice matching, dispute handling, monitoring and alerting, report generation, scheduling and matching. Quantify the current cost: FTE hours per week multiplied by loaded labour cost, plus the cost of errors and delays that manual processes introduce. The comparison point is the agent-handled volume priced at the platform's processing cost, plus whatever human review remains. Across the deployments described in this guide, reductions in manual effort range from significant in repetitive, high-volume document and data workflows (approximately 90% less document processing time in tender management) to transformational in monitoring and alerting scenarios, where manual coverage at the required scale was structurally impossible.
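The cost comparison above can be written down as a simple weekly model. This is a sketch under stated assumptions: the field names are hypothetical, the figures in the usage lines are illustrative rather than drawn from the deployments in this guide, and it deliberately keeps terms for the human review and residual errors that remain after automation, since assuming both fall to zero overstates the saving.

```python
from dataclasses import dataclass

WEEKS_PER_YEAR = 52


@dataclass
class Workflow:
    """Inputs for one candidate workflow; all field names are illustrative."""

    name: str
    manual_hours_per_week: float          # FTE hours spent on the task today
    loaded_hourly_cost: float             # salary plus benefits and overhead
    error_cost_per_week: float            # rework, penalties and delay costs today
    volume_per_week: int                  # items the agent would handle
    agent_cost_per_item: float            # platform processing cost per item
    residual_hours_per_week: float        # human review that remains after rollout
    residual_error_cost_per_week: float   # errors that still slip through


def weekly_saving(w: Workflow) -> float:
    current = w.manual_hours_per_week * w.loaded_hourly_cost + w.error_cost_per_week
    with_agents = (
        w.volume_per_week * w.agent_cost_per_item
        + w.residual_hours_per_week * w.loaded_hourly_cost
        + w.residual_error_cost_per_week
    )
    return current - with_agents


def annual_saving(w: Workflow) -> float:
    return weekly_saving(w) * WEEKS_PER_YEAR


# Illustrative numbers only, not results from any deployment.
tender = Workflow("tender document processing", manual_hours_per_week=120,
                  loaded_hourly_cost=55, error_cost_per_week=800,
                  volume_per_week=900, agent_cost_per_item=0.5,
                  residual_hours_per_week=20, residual_error_cost_per_week=200)
print(f"{annual_saving(tender):,.0f}")  # 293,800
```

Running the same model once per candidate workflow, with conservative inputs, gives finance a line-by-line view they can challenge assumption by assumption.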

Revenue and cycle-time improvement is the second lever and often the larger one over a three-year horizon. Faster tender processing means more bids submitted. Faster booking turnaround means higher conversion. Earlier anomaly detection means less revenue leakage. Always-on compliance monitoring means deals close faster because pre-screening is continuous rather than episodic. These are not hypothetical improvements — they are the outcomes documented across the deployments in this guide.

Headcount redeployment is the third lever and the one that requires the most careful communication internally. The correct framing is not replacement but redeployment: agents handling high-volume, repetitive cognitive work free the humans currently doing that work to focus on judgment-intensive tasks where human expertise compounds. A finance team spending 60% of its time on manual reporting and exception logging can spend that 60% on analysis, scenario planning, and strategic decision-making once agents handle the reporting and alerting. The productivity gain is real. The framing is everything.

The KPIs that finance teams trust are operational: time-to-close on disputes, SLA attainment rates, manual processing hours per workflow, error rate on data entry and extraction tasks, fill cycle time for staffing operations, and bid volume per sales representative. Build your business case around the specific metrics that your CFO already tracks and understands, and show how agents move those metrics. The ROI Calculator at assistents.ai/resources/ai-agent-roi-calculator provides a structured model for building this analysis.

For board and executive presentations, the 4-week deployment timeline is itself a risk-reduction argument. An investment that generates measurable operational impact within a single quarter — rather than requiring 12 to 18 months of implementation before any return is visible — is structurally different from traditional enterprise software investments. Frame it accordingly.

Ready to Evaluate an Enterprise AI Agent Platform Built for Your Stack?

Choosing the right enterprise AI agent platform is one of the most consequential technology decisions you will make in 2026. It determines not just which workflows you can automate this year, but whether your organization builds an AI infrastructure that compounds in value — or an expensive collection of pilot projects that cannot scale.

The five criteria in this guide — integration depth, governance and auditability, multi-agent orchestration, speed to production, and compliance posture — are not aspirational benchmarks. They are the specific architectural requirements that distinguish platforms that survive enterprise procurement, legal review, and production scale from those that look compelling in a demo and struggle in deployment.

assistents.ai was built to meet all five. The platform provides 300+ bidirectional enterprise integrations across ERP, CRM, data warehouses, identity systems, and workflow platforms. It is SOC 2 Type II certified with HIPAA, GDPR, and SOX compliance built into the architecture. Governance — RBAC, immutable audit logs, approval workflows, policy enforcement — is a first-class architectural feature, not an add-on module. Multi-agent orchestration at city scale, national retail scale, and global logistics scale is already in production. And the deployment timeline from architecture review to production is three weeks.

If you are evaluating enterprise AI agent platforms and want to understand how assistents fits your specific technology stack and use case requirements, the right starting point is a structured technical briefing — not a generic demo.


FAQs

What is an enterprise AI agent platform?

An enterprise AI agent platform is a production infrastructure that allows organizations to build, deploy, govern, and scale AI agents — software systems that autonomously execute multi-step tasks across enterprise applications, databases, and workflows without requiring human instruction at each step.

Unlike a chatbot, which responds to queries, an enterprise AI agent takes actions in live systems: creating records, routing workflows, triggering alerts, updating databases, and generating documents. An enterprise-grade platform adds the integration layer, governance architecture, compliance certifications, and orchestration capabilities required for safe, auditable deployment in large organizations.

How is an enterprise AI agent different from a chatbot or RPA bot?

A chatbot generates responses. An RPA bot follows pre-defined rules. An enterprise AI agent reasons, decides, and acts. The key architectural differences are: autonomous multi-step execution (the agent can complete a workflow end-to-end without a human at each step); cross-system integration (the agent can access and act across multiple enterprise systems simultaneously); adaptive reasoning (the agent handles variation and exceptions that would break an RPA bot); and complete auditability (every action is logged with full context for compliance and review). The practical test: if it cannot take a verifiable action in a live system and produce an audit trail of that action, it is not an enterprise AI agent.

What compliance certifications should an enterprise AI agent platform hold?

The minimum baseline for enterprise deployment in 2026 is SOC 2 Type II (independently audited security controls over a sustained period), HIPAA readiness (for any deployment touching health data), GDPR compliance (for any deployment with European data subjects), and SOX controls (for deployments touching financial reporting).

ISO 27001 is increasingly expected by enterprise procurement teams. For organizations in regulated industries or operating under the EU AI Act's high-risk application categories, additional requirements apply — including documented human oversight mechanisms, transparency of reasoning, and the ability to contest agent decisions. Always request compliance documentation before the vendor demo, not after.
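The baseline above reduces to a few conditional rules, which makes it easy to turn into a checklist for vendor conversations. A minimal sketch, assuming hypothetical parameter names; it encodes only the rules stated in this answer and keeps hard requirements separate from the items procurement teams increasingly expect.

```python
def certification_checklist(*, health_data: bool, eu_data_subjects: bool,
                            financial_reporting: bool,
                            eu_ai_act_high_risk: bool = False) -> dict[str, list[str]]:
    """Map a deployment profile to the baseline described above (illustrative)."""
    required = ["SOC 2 Type II"]  # baseline for every enterprise deployment
    if health_data:
        required.append("HIPAA readiness")
    if eu_data_subjects:
        required.append("GDPR compliance")
    if financial_reporting:
        required.append("SOX controls")
    if eu_ai_act_high_risk:
        required += [
            "Documented human oversight mechanisms",
            "Transparency of agent reasoning",
            "Ability to contest agent decisions",
        ]
    expected = ["ISO 27001"]  # increasingly expected by procurement, not a hard rule
    return {"required": required, "expected": expected}


profile = certification_checklist(health_data=True, eu_data_subjects=True,
                                  financial_reporting=False)
print(profile["required"])  # ['SOC 2 Type II', 'HIPAA readiness', 'GDPR compliance']
```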

How long does it take to deploy an enterprise AI agent in production?

With a platform that provides pre-built integrations to your existing stack and a well-designed governance configuration layer, the target timeline from contract to production is four weeks. This covers initial architecture review and system connectivity, governance configuration and access control setup, read-only validation where agents observe workflows without taking action, and controlled rollout to action-capable agents.
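The read-only-then-action sequence can be enforced in software rather than by convention. The sketch below assumes a hypothetical `RolloutGate` that refuses to promote an agent to action-capable mode until governance is configured and the agent's read-only proposals have agreed with human decisions often enough. The 200-run and 95% defaults are illustrative, not recommendations from this guide.

```python
from enum import Enum


class Mode(Enum):
    READ_ONLY = "read_only"      # observes and proposes; writes nothing to live systems
    ACTION = "action_capable"    # allowed to take actions in live systems


class RolloutGate:
    """Hypothetical promotion gate between read-only validation and action mode."""

    def __init__(self, min_shadow_runs: int = 200, min_agreement: float = 0.95) -> None:
        self.mode = Mode.READ_ONLY
        self.governance_configured = False  # RBAC, audit logging, escalation in place
        self.min_shadow_runs = min_shadow_runs
        self.min_agreement = min_agreement
        self.shadow_runs = 0
        self.agreements = 0

    def record_shadow_run(self, agent_proposal: str, human_decision: str) -> None:
        # In read-only mode the agent only proposes; a human still makes the call.
        self.shadow_runs += 1
        self.agreements += int(agent_proposal == human_decision)

    def promote(self) -> Mode:
        if not self.governance_configured:
            raise PermissionError("governance must be configured before action mode")
        if self.shadow_runs == 0 or self.shadow_runs < self.min_shadow_runs:
            raise PermissionError("not enough read-only validation runs yet")
        if self.agreements / self.shadow_runs < self.min_agreement:
            raise PermissionError("agent proposals disagree with humans too often")
        self.mode = Mode.ACTION
        return self.mode


gate = RolloutGate(min_shadow_runs=3, min_agreement=0.6)
try:
    gate.promote()
except PermissionError as exc:
    print("blocked:", exc)  # blocked: governance must be configured before action mode
gate.governance_configured = True
for proposal, decision in [("approve", "approve"), ("reject", "reject"), ("approve", "reject")]:
    gate.record_shadow_run(proposal, decision)
print(gate.promote())  # Mode.ACTION
```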

Deployments that take significantly longer typically reflect one of three root causes: the platform lacks pre-built connectors to the organization's specific technology stack; governance was not designed upfront and requires retrofitting; or the deployment sequence was inverted — capability before governance rather than governance before capability.

What does multi-agent orchestration mean in practice?

Multi-agent orchestration is the ability to coordinate specialized AI agents — each responsible for a defined scope of tasks and data access — into workflows that span multiple departments and systems, with governed handoffs and a unified audit trail across the entire process. In practice, this means a procurement agent, a vendor performance agent, and a finance alert agent can operate as a coordinated system — each staying within its defined scope, passing structured context at handoff points, and escalating to human review when the workflow requires it.
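A minimal sketch of that pattern, assuming hypothetical `Orchestrator` and `Agent` types: each agent sees only the context fields in its scope, its output is handed to the next agent as named fields, every step lands in one shared audit list, and the pipeline stops for human review when an escalation rule fires. The three agents mirror the procurement example above; their logic and the 10,000 and 0.7 thresholds are invented for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    name: str
    scope: set[str]                   # context fields this agent is allowed to read
    run: Callable[[dict], dict]       # returns new fields to hand to the next agent


@dataclass
class Orchestrator:
    audit: list[tuple[str, str]] = field(default_factory=list)  # one trail for all agents

    def execute(self, pipeline: list[Agent], context: dict,
                escalate_if: Callable[[dict], bool]) -> dict:
        context = dict(context)  # never mutate the caller's copy
        for agent in pipeline:
            visible = {k: v for k, v in context.items() if k in agent.scope}
            handoff = agent.run(visible)  # dict of new fields passed downstream
            context.update(handoff)
            self.audit.append((agent.name, f"produced {sorted(handoff)}"))
            if escalate_if(context):
                self.audit.append((agent.name, "escalated to human review"))
                return {**context, "status": "pending_human_review"}
        return {**context, "status": "completed"}


procurement = Agent("procurement", {"supplier", "po_amount"},
                    lambda c: {"po_id": f"PO-{c['supplier']}"})
vendor_performance = Agent("vendor_performance", {"supplier"},
                           lambda c: {"supplier_score": 0.62 if c["supplier"] == "ACME" else 0.9})
finance_alert = Agent("finance_alert", {"po_amount", "supplier_score"},
                      lambda c: {"finance_alert": c["po_amount"] > 10_000 and c["supplier_score"] < 0.7})

orchestrator = Orchestrator()
result = orchestrator.execute([procurement, vendor_performance, finance_alert],
                              {"supplier": "ACME", "po_amount": 25_000},
                              escalate_if=lambda c: c.get("finance_alert", False))
print(result["status"])  # pending_human_review
```

Note that the vendor performance agent never sees the purchase order amount: scope is enforced by what the orchestrator passes in, not by trusting each agent to ignore data.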

Multi-agent orchestration is what allows AI agents to handle the cross-departmental, cross-system workflows where the highest business value actually lives. Single-agent deployments hit a ceiling at the boundary of the first system they cannot reach.

How do you govern AI agents in a regulated enterprise?

Governance for enterprise AI agents requires four architectural elements: role-based access control (RBAC) that defines what each agent can access and do at the field level; immutable audit logs that record every action with full context — data accessed, decision made, reasoning applied — in a tamper-proof, queryable format; human-in-the-loop design that routes high-impact actions to human review before execution; and policy enforcement that prevents agents from operating outside their defined scope, not just logs when they do.
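The difference between preventing and merely logging is easiest to see in code. In this sketch, assuming a hypothetical `Role` definition per agent, the check runs before any action reaches a live system: out-of-scope actions or fields raise an exception, and actions above an approval threshold are routed to a human instead of executing. Audit logging is omitted to keep it short, and all names and limits are illustrative.

```python
from dataclasses import dataclass


class PolicyViolation(Exception):
    """Raised before execution, so the blocked action never reaches a live system."""


@dataclass(frozen=True)
class Role:
    name: str
    readable_fields: frozenset[str]   # field-level RBAC
    allowed_actions: frozenset[str]
    approval_threshold: float         # above this amount a human must approve


def authorize(role: Role, action: str, fields: set[str], amount: float = 0.0) -> str:
    """Return 'execute' or 'needs_approval'; raise if the request is out of scope."""
    if action not in role.allowed_actions:
        raise PolicyViolation(f"{role.name} may not perform {action}")
    hidden = fields - role.readable_fields
    if hidden:
        raise PolicyViolation(f"{role.name} may not access {sorted(hidden)}")
    return "needs_approval" if amount > role.approval_threshold else "execute"


ap_agent = Role("accounts_payable_agent",
                readable_fields=frozenset({"invoice_id", "amount", "vendor_id"}),
                allowed_actions=frozenset({"match_invoice", "schedule_payment"}),
                approval_threshold=10_000)

print(authorize(ap_agent, "match_invoice", {"invoice_id", "amount"}))           # execute
print(authorize(ap_agent, "schedule_payment", {"invoice_id"}, amount=48_000))  # needs_approval
blocked = ""
try:
    authorize(ap_agent, "schedule_payment", {"vendor_bank_account"})
except PolicyViolation as exc:
    blocked = str(exc)
print("blocked:", blocked)
```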

Governance should be configured before any agent goes into action-capable mode, not after the fact. Organizations in heavily regulated sectors — financial services, healthcare, energy, government — should additionally evaluate platforms against EU AI Act requirements for high-risk applications, including documentation of oversight mechanisms and decision transparency.

What is the ROI of enterprise AI agents?

ROI from enterprise AI agents operates across three primary levers: operational cost reduction (manual process replacement in high-volume, repetitive cognitive workflows), cycle-time improvement (faster processing of bids, orders, disputes, and compliance checks leading to measurable revenue or retention impact), and headcount redeployment (freeing skilled employees from manual processing to higher-value analysis and judgment work).

The range of outcomes documented across enterprise deployments is wide — from approximately 90% reduction in document processing time for tender management workflows to significant reductions in manual monitoring effort in energy and grid operations. ROI modelling should be anchored to the specific operational metrics your CFO already tracks, with conservative assumptions for the first deployment and higher projections for subsequent rollouts that leverage the same governance and integration infrastructure.

Transform Your Business With Agentic Automation

Agentic automation is the rising star poised to overtake RPA and bring about a new wave of intelligent automation. Explore the core concepts of agentic automation, how it works, real-life examples, and strategies for a successful implementation in this ebook.
